For years, compute was treated like something you could keep scaling in the background. Add capacity. Add cloud. Add another cluster. Keep going. The abstraction held for a long time because the physical consequences stayed mostly out of sight.

AI changed that.

It forced the industry to look directly at the parts of digital growth it had got comfortable ignoring: electricity demand, cooling limits, land use, water stress, supply bottlenecks, and the simple fact that infrastructure doesn't magically expand because a roadmap says it should.

The International Energy Agency now estimates that data centres consumed around 415 terawatt-hours of electricity in 2024, or about 1.5 per cent of global electricity use, and projects that figure could reach around 945 terawatt-hours by 2030.

That alone would be enough to force a more serious conversation about AI infrastructure. But the bigger shift is still coming. Quantum computing is often discussed as though it belongs to some distant, self-contained future.

It doesn’t.

It's emerging into the same energy systems, the same real estate pressures, the same cooling constraints, and the same security environment that AI is already straining. That's why quantum matters now, even before fault-tolerant systems arrive at scale. AI made compute visible. Quantum will make it strategic.

AI Already Broke The Illusion Of Infinite Compute

AI didn’t just increase demand. It changed how demand behaves. Workloads are no longer steady or predictable. They spike, scale, and shift depending on how models are trained, deployed, and used in production. That variability makes infrastructure harder to plan and even harder to optimise.

At the same time, the distance between software decisions and physical consequences has collapsed. Choices made in model design or data strategy now ripple directly into power draw, cooling requirements, and capacity planning. What used to sit comfortably in separate conversations now lives in the same one.

The scale problem is no longer theoretical

The old assumption was simple enough: demand for compute would keep rising, and infrastructure would rise with it. That assumption is harder to defend now.

The IEA’s modelling shows that global data centre electricity demand is on track to more than double by 2030, with AI as the main driver of that growth. It also notes that this demand isn't evenly spread. It's geographically concentrated, which means the stress shows up hardest in specific regions, grids, and development corridors rather than being distributed neatly across the system.

In the US, the picture is even sharper. Lawrence Berkeley National Laboratory found that data centres consumed about 4.4 per cent of total US electricity in 2023. In absolute terms, consumption rose from 58 terawatt-hours in 2014 to 176 terawatt-hours in 2023, with a projected range of 325 to 580 terawatt-hours by 2028. Between 2017 and 2023, data-centre power demand more than doubled, largely because of AI servers and the cooling they require.

That changes the tone of the conversation. We're no longer talking about digital growth as a mostly virtual phenomenon. We're talking about physical demand moving faster than physical systems tend to move.
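To put the figures quoted above in perspective, here is a rough back-of-the-envelope sketch of the annual growth rates they imply. It assumes smooth compound growth between the two endpoints, which real demand won't follow, and the function name is purely illustrative.

```python
def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_twh <= 0 or end_twh <= 0 or years <= 0:
        raise ValueError("values and period must be positive")
    return (end_twh / start_twh) ** (1 / years) - 1


# Endpoints taken from the figures cited in this article.
us_rate = implied_annual_growth(58, 176, 2023 - 2014)        # Berkeley Lab, US
global_rate = implied_annual_growth(415, 945, 2030 - 2024)   # IEA estimate and projection

print(f"US data centres, 2014 to 2023: {us_rate:.1%} per year")
print(f"Global projection, 2024 to 2030: {global_rate:.1%} per year")
```

Both rates land in the low-to-mid teens per year. Grid and substation projects rarely move at that pace, which is the mismatch this section describes.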

Infrastructure is now a limiting factor

Energy is the obvious constraint, but it's not the only one. The actual problem is broader and more awkward. It includes power availability, cooling architecture, planning delays, grid interconnection, and the fact that capacity is often needed in the same handful of places at the same time.

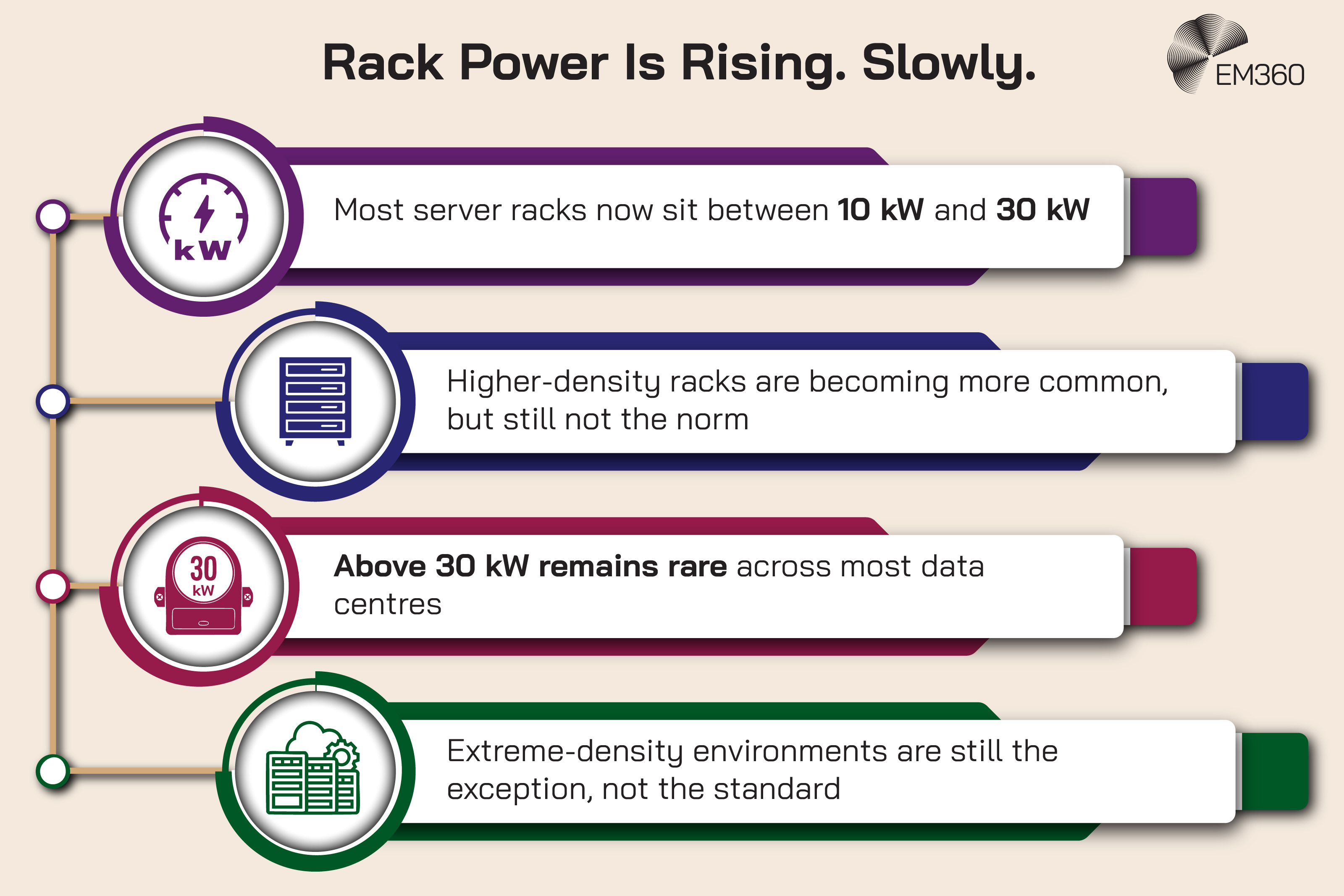

Uptime Institute’s 2025 global survey says the sector is facing rising costs, worsening power constraints, and growing difficulty in forecasting future capacity requirements while trying to meet AI demand. Average rack densities are still rising, and sustainability reporting did not improve in 2025.

That combination matters. It means operators are being pushed to scale faster while still struggling to measure and explain the full operational cost of that scale. This is where the language around “elastic” compute starts to break down. Software can scale quickly. Infrastructure usually can’t.

Substations, transmission upgrades, land approvals, cooling retrofits, and large-load agreements don't behave like cloud provisioning. They behave like infrastructure. Which means they arrive on infrastructure timelines.

Quantum Doesn’t Replace AI. It Compounds The Problem

Quantum changes the shape of the problem rather than the scale alone. It introduces a class of compute that behaves differently at every level, from how it processes information to how it has to be housed and maintained. That makes it harder to compare directly with what came before.

For enterprise leaders, that difference matters more than the performance gains. Planning for quantum isn’t just about adding capacity. It’s about accommodating a system that follows a completely different set of rules, while still coexisting with everything already in place.

Why quantum infrastructure is fundamentally different

Quantum computing is often framed as a faster, more powerful version of classical computing. That's not quite right. It's a different kind of compute with a very different hardware stack.

Classical systems can still run in a wide range of commercial data-centre environments. Quantum systems, especially superconducting approaches, need highly controlled conditions to function at all. That usually means cryogenic cooling, specialised control electronics, complex wiring, and unusually tight environmental tolerances.

Some systems operate at temperatures measured in millikelvin, only a fraction of a degree above absolute zero. That matters because the infrastructure problem isn't just “more compute.” It's more compute with more specialised operating conditions, more hardware dependencies, and less tolerance for failure.

IBM’s current roadmap puts a large-scale fault-tolerant system in 2029, targeting 200 logical qubits and 100 million quantum gates. Microsoft says its Majorana 1 architecture is designed with a path to one million qubits on a single chip.

Whether those timelines hold exactly as stated is less important than what they reveal: the major players are planning for scale, and scale in quantum means a very different set of infrastructure demands from the ones most enterprise estates were built around.

Faster compute, higher hidden costs

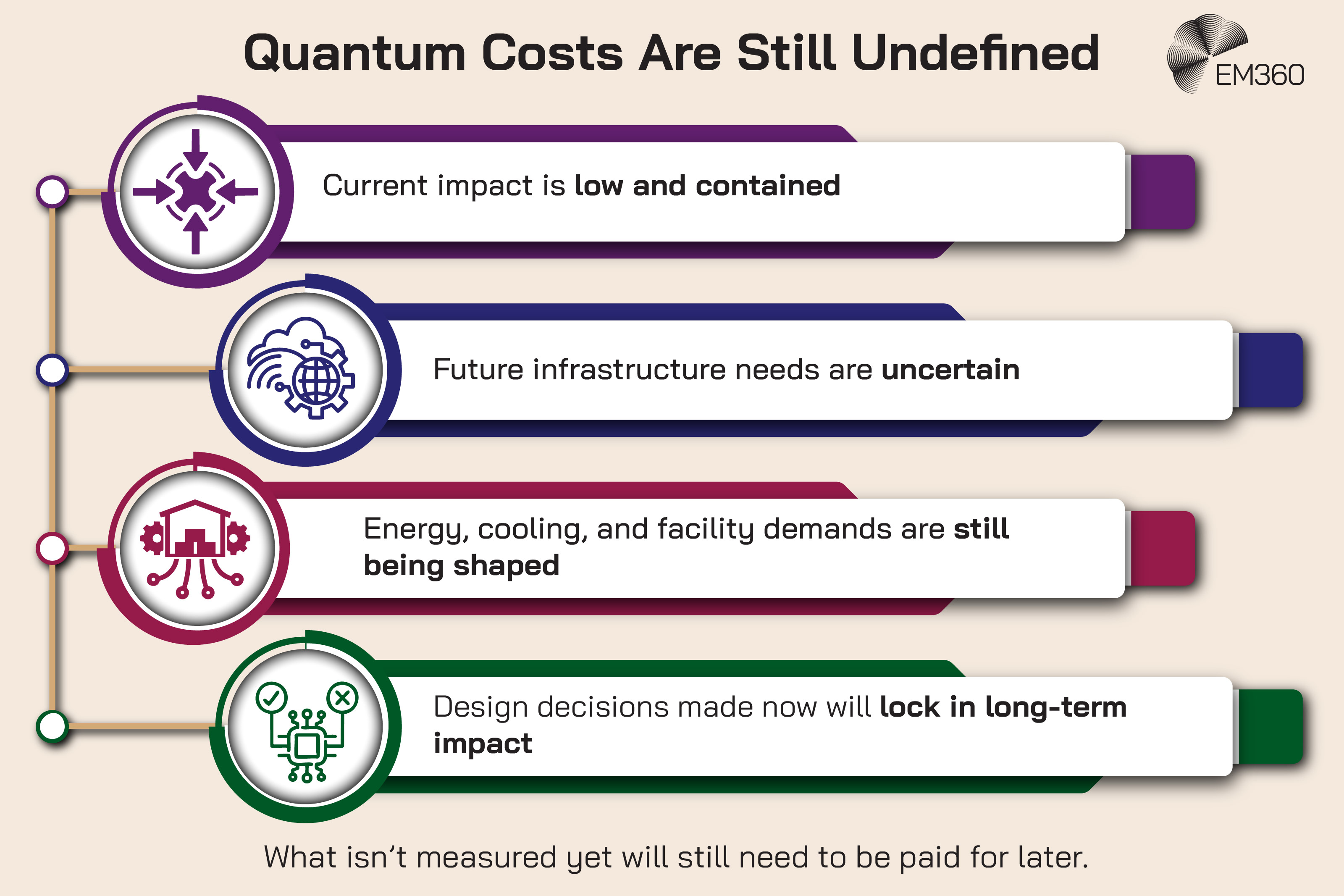

This is the part that gets flattened by hype. Quantum may solve some problems dramatically faster than classical systems. That doesn't automatically mean it will be lighter on energy, cooling, or materials.

A system can be computationally efficient and still be physically intense. Those are not the same thing.

Nature Reviews Clean Technology published a commentary arguing that the energy and physical resource demands of future quantum-accelerated data centres are still unknown and deserve far more attention. That uncertainty matters because it cuts against the neat assumption that “faster” will also mean “cleaner” or “cheaper” in infrastructure terms.

There are already signs of how difficult this gets at scale. Research published in 2024 notes that sending microwave signals from room-temperature electronics into cryogenic environments isn't viable for the millions of qubits required for fault-tolerant systems because of the heat load of cabling and the cost of electronics. In other words, the control layer itself becomes part of the scaling problem.

That's the more honest way to frame quantum energy consumption right now. Not as a settled number, because it's not, but as an emerging infrastructure challenge with enough warning signs to justify planning before the numbers are perfect.

The real risk is planning blind spots

The most dangerous assumption isn't that quantum will arrive tomorrow. It's that enterprises can safely ignore it until it does.

That's how blind spots form. Not because the risk was invisible, but because it looked too early to act on.

Quantum is still early enough that many of its infrastructure costs are not well modelled. But that’s exactly why the planning gap matters. AI has already shown what happens when demand scales faster than the systems around it can adapt. Quantum is now on track to enter that same environment with even more specialised requirements and even less operational slack.

This is what quantum readiness should mean at board level. Not “buy a quantum computer.” Not “wait for a breakthrough.” It means identifying where quantum intersects with infrastructure strategy, security exposure, procurement decisions, and long-term capacity planning before those issues become urgent and expensive at the same time.

Post-Quantum Cryptography Is The First Real Deadline

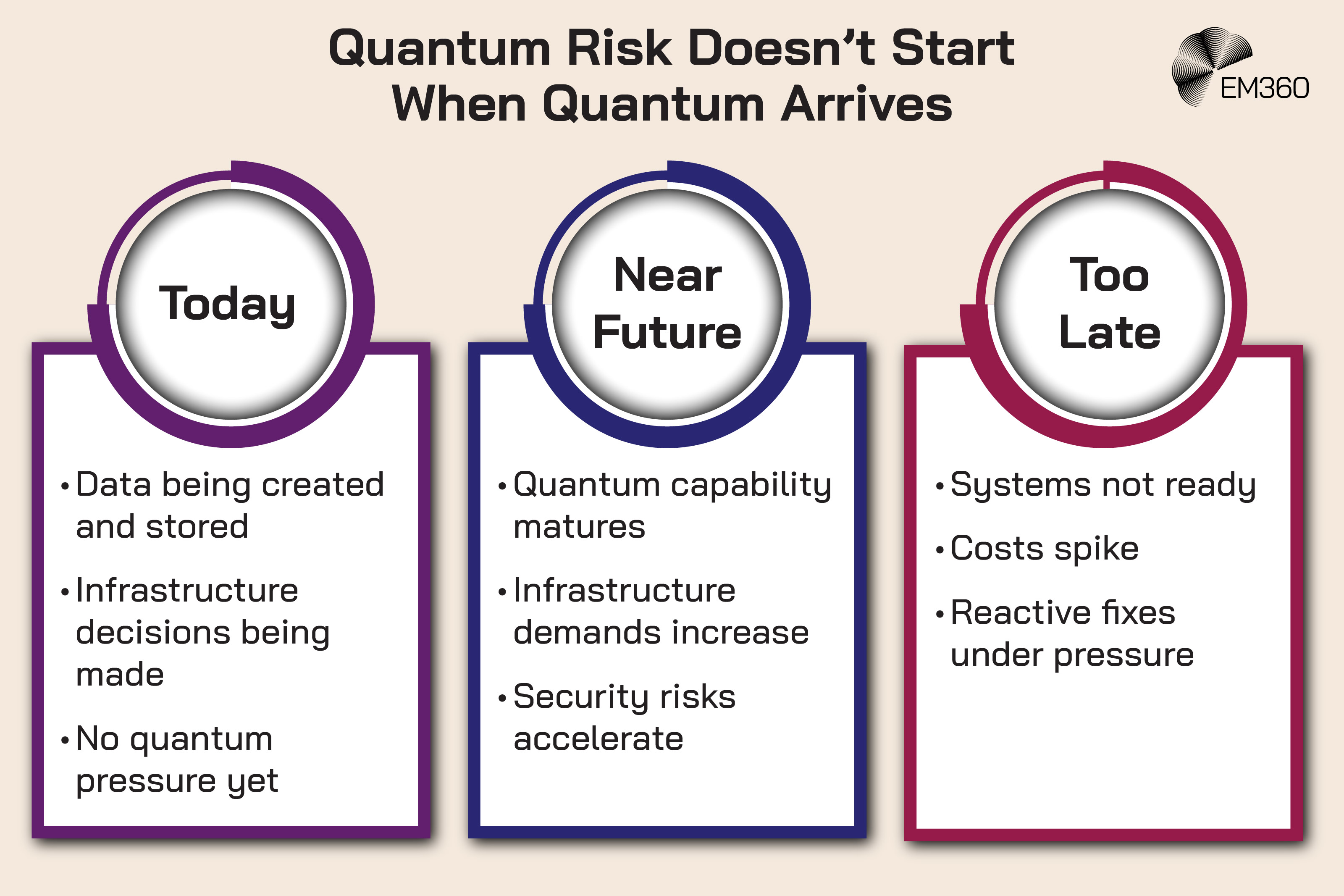

The timeline for quantum risk doesn’t start when large-scale quantum systems arrive. It’s already in motion. Sensitive data being created and stored today often has a long lifespan, especially in sectors where records need to remain confidential for years or even decades.

That shifts the question from “when will quantum break encryption?” to “which data is exposed if it eventually does?” Once you look at it that way, the urgency stops being theoretical. It becomes a matter of how far back your current decisions will reach.

Why waiting for Q-Day is a mistake

Quantum infrastructure may still be emerging, but quantum security risk is already a present-tense problem.

The simplest reason is also the least dramatic. Sensitive data doesn't stop mattering just because it was encrypted years earlier. If an attacker can steal encrypted data now and decrypt it later with a sufficiently capable quantum computer, the damage is delayed, not avoided.

That's the logic behind “harvest now, decrypt later” attacks, and it's why organisations with long-lived sensitive data don't get to wait for perfect certainty. NIST has been unusually direct on this point. It says organisations should begin migrating to post-quantum encryption standards now, and notes that three post-quantum cryptography standards are already available to implement.

Google has taken a similarly public stance. In February 2026, it issued a call to action around securing the quantum era, said it has been preparing for a post-quantum world since 2016, and pointed again to crypto agility as a central requirement.
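The harvest-now, decrypt-later logic is often summarised with an inequality usually attributed to Michele Mosca: if the time data must stay confidential plus the time migration will take exceeds the time until a capable quantum computer exists, that data is already exposed. The sketch below is a minimal illustration of that check. The dataset names, year values, and the ten-year horizon are all hypothetical assumptions, not forecasts.

```python
from dataclasses import dataclass


@dataclass
class DataSet:
    name: str
    shelf_life_years: float   # how long the data must remain confidential
    migration_years: float    # expected time to move it to post-quantum crypto


def is_exposed(ds: DataSet, years_until_capable_quantum: float) -> bool:
    """Mosca-style check: exposed if shelf life + migration time > time to threat."""
    return ds.shelf_life_years + ds.migration_years > years_until_capable_quantum


# Hypothetical planning assumption, not a prediction.
ASSUMED_HORIZON_YEARS = 10

records = DataSet("patient-records", shelf_life_years=25, migration_years=5)
analytics = DataSet("marketing-analytics", shelf_life_years=1, migration_years=2)

for ds in (records, analytics):
    status = "exposed" if is_exposed(ds, ASSUMED_HORIZON_YEARS) else "within margin"
    print(f"{ds.name}: {status}")
```

The useful property of this framing is that the answer depends on two numbers an organisation controls or can estimate, not only on the one nobody can know for certain.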

What enterprise readiness actually looks like

This is where the conversation needs to stay grounded. Post-quantum cryptography isn't a single software patch. It's a migration problem.

NIST’s guidance is useful because it strips the issue back to the real work: organisations need to identify where vulnerable algorithms are used, plan to replace or update them, and prepare for the fact that products, services, and protocols will need changes across the stack.

In practice, that means four things.

- First, build a cryptographic inventory. You cannot migrate what you have not identified.

- Second, prioritise systems based on sensitivity and lifespan. Data that needs to remain secure for years has a different urgency from data with a short useful life.

- Third, design for crypto agility. The goal isn't merely to swap one algorithm for another. It's to make future changes possible without breaking everything around them.

- Fourth, test migration paths early. Interoperability problems rarely announce themselves politely.

This is unglamorous work. Which is usually how you can tell it matters.
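As a minimal sketch of the first two steps, an inventory can start as a simple list of records that is filtered for quantum-vulnerable algorithms and then ordered by sensitivity and data lifespan. Everything here is an illustrative assumption: the system names, the 1-to-5 sensitivity scale, and the algorithm set, which covers common public-key schemes but is not exhaustive.

```python
from dataclasses import dataclass
from typing import List

# Illustrative, non-exhaustive set of public-key algorithms generally
# considered vulnerable to a sufficiently capable quantum computer.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA"}


@dataclass
class CryptoAsset:
    system: str               # hypothetical system name
    algorithm: str            # algorithm in use
    sensitivity: int          # 1 (low) to 5 (high); scale is an assumption
    data_lifetime_years: int  # how long the protected data must stay secure


def needs_migration(asset: CryptoAsset) -> bool:
    return asset.algorithm.upper() in QUANTUM_VULNERABLE


def migration_queue(inventory: List[CryptoAsset]) -> List[CryptoAsset]:
    """Vulnerable assets, most sensitive and longest-lived first."""
    vulnerable = [a for a in inventory if needs_migration(a)]
    return sorted(
        vulnerable,
        key=lambda a: (a.sensitivity, a.data_lifetime_years),
        reverse=True,
    )


inventory = [
    CryptoAsset("web-sessions", "ECDH", sensitivity=2, data_lifetime_years=1),
    CryptoAsset("hr-archive", "RSA", sensitivity=5, data_lifetime_years=25),
    CryptoAsset("backup-store", "AES", sensitivity=4, data_lifetime_years=10),
    CryptoAsset("payments-signing", "ECDSA", sensitivity=5, data_lifetime_years=7),
]

queue = migration_queue(inventory)
for asset in queue:
    print(asset.system, asset.algorithm)
```

A real inventory would be populated from scans of certificates, libraries, and protocol configurations instead of a hand-written list, but the ordering logic stays the same.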

The strategy gap most organisations still have

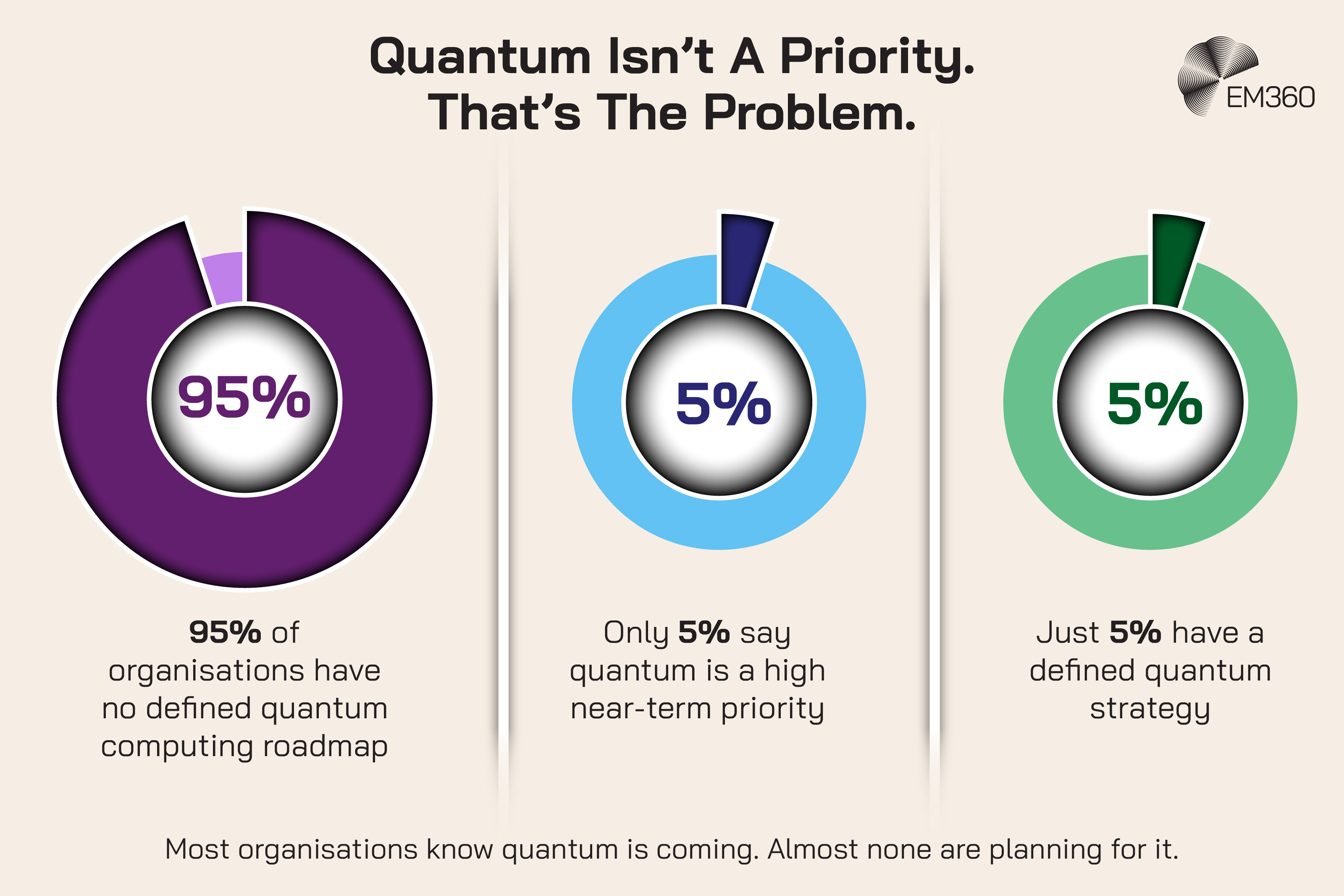

The readiness gap is now well documented. ISACA’s 2025 Quantum Computing Pulse Poll found that 95 per cent of organisations don't have a defined quantum computing roadmap, while only 5 per cent say quantum is a high near-term priority and only 5 per cent say their organisations have a defined quantum strategy.

At the same time, 62 per cent of respondents worry that quantum computing will break today’s internet encryption.

DigiCert found a similar pattern. While 69 per cent of organisations said they recognise the risk quantum computing poses to current encryption standards, only 5 per cent had implemented quantum-safe encryption.

That gap tells you something important. Awareness is no longer the problem. Translation is. A lot of organisations know quantum matters. Far fewer have turned that knowledge into a roadmap, a budget, or a sequence of decisions.

That's a leadership problem before it's a technical one.

The Environmental Cost Of Innovation Is Compounding

The pressure isn’t confined to electricity usage alone. As infrastructure scales, the supporting systems that keep it running start to carry their own weight. Each decision about how compute is delivered brings trade-offs that aren’t always visible at first glance.

That’s where the conversation starts to widen. It moves from how much power is used to how that power is supported, managed, and sustained over time. Once those layers come into focus, the full footprint of modern compute becomes harder to ignore.

Energy is only part of the equation

Once compute becomes a physical constraint, environmental trade-offs stop being a side issue.

Electricity gets most of the attention because it's the easiest metric to picture, but cooling methods, water use, land requirements, and local ecosystem pressure all sit inside the same infrastructure story.

UNEP said that rapid data-centre expansion is increasing both energy and water consumption, while leaving many countries without comprehensive frameworks for sustainable data-centre operations.

Water is where lazy generalisations do real damage. Uptime Institute makes the point well: water use is local. It depends on the watershed, climate, cooling system type, and competition for supply in that specific location. Some legacy facilities use large amounts of water through open evaporative cooling.

Newer facilities can use little to no water for cooling with water-efficient, waterless, or hybrid systems. The right question isn't “do data centres use water?” Of course they do. The right question is whether that water use fits the local environment and the chosen cooling design.

That's a much less convenient headline. It's also much more useful.

Sustainability reporting isn’t keeping up

This is where the governance problem becomes harder to ignore. If infrastructure is scaling faster than reporting quality improves, leadership ends up making decisions with incomplete visibility.

Uptime’s 2025 survey found that the collection and reporting of key sustainability metrics had not improved in 2025. That should concern anyone treating ESG, resilience, and infrastructure planning as separate conversations, because they are not separate anymore.

Rising power demand, cooling complexity, and siting pressure all change the sustainability profile of digital estates. If reporting lags behind growth, strategy lags behind reality. And once that happens, sustainability language becomes performance instead of governance. It sounds good in slides. It doesn't hold up under operational pressure.

Quantum will raise the stakes further

Quantum isn't yet driving the aggregate environmental footprint that AI already is. That distinction matters. But it would be a mistake to stop there.

The issue isn't current scale. It's future design direction.

If quantum systems continue moving towards fault tolerance and higher qubit counts, they will do so through more specialised facilities, more demanding thermal management, and more complex control architectures.

The Nature Reviews Clean Technology commentary, published in March 2026, is clear that the energy and physical resource impacts of future quantum-accelerated data centres are still unknown. That's not a reason to dismiss the issue. It's a reason to treat it as an emerging sustainability and procurement question now, while the architecture is still being shaped.

By the time these costs are obvious, they will already be harder to change.

What Future-Ready Infrastructure Actually Needs

Knowing the constraints is one thing. Acting on them is where most organisations start to stall. Infrastructure decisions have traditionally been driven by performance, cost, and time to deploy. That model no longer holds up when resource limits are part of the equation.

What’s changing is the role infrastructure plays in decision-making. It’s no longer a downstream consideration that supports strategy. It’s becoming one of the inputs that shapes it from the start.

Infrastructure strategy has to become energy-aware

For a long time, energy sat in the background of enterprise technology strategy. It was an operational cost, a facilities issue, or someone else’s problem.

That no longer works.

The IEA’s projections and Berkeley Lab’s US figures both point in the same direction: power demand from digital infrastructure is now moving fast enough to shape planning choices, site selection, capital decisions, and risk exposure.

An energy-aware infrastructure strategy starts by treating electricity as a design constraint instead of an afterthought. Not just how much a workload consumes, but where it runs, what cooling it triggers, what resilience it needs, and whether the surrounding grid can actually support the growth being planned.

That's a more mature question than “how do we get more compute?” It asks what kind of growth your infrastructure can actually sustain.

Hybrid compute will be the new normal

Quantum isn't going to replace classical computing. It's not going to replace AI either. It's going to sit alongside both.

That matters because future infrastructure will be designed around hybrid computing, not around one dominant model. Classical systems will continue to handle most enterprise workloads. AI systems will continue driving accelerated compute demand. Quantum, where it proves useful, will add a specialised layer for particular classes of optimisation, simulation, and cryptography-related problems.

Uptime’s 2025 survey notes that enterprises continue to adopt hybrid IT strategies and that 45 per cent of workloads still reside in corporate facilities. That's a useful reminder that the future doesn't arrive by clean replacement. It arrives by messy overlap.

The smarter strategic question isn't “when will quantum take over?” It's “how will our estate handle a mixed compute environment with very different operating characteristics?”

Security must be built for a post-quantum world

The safest assumption now is that quantum-safe migration will take longer than many organisations expect.

That alone makes quantum-safe security a present design issue.

If your cryptographic estate is sprawling, undocumented, or hard to change, then the challenge isn't theoretical. The challenge is already inside your architecture.

NIST has made clear that migration should begin now, and Google’s latest position reinforces the same point: crypto agility is central because the organisations that can update or replace cryptographic algorithms without major disruption will be in a much stronger position than the ones that cannot.

Security teams know this. The harder part is getting the wider business to understand that post-quantum readiness isn't a future innovation project. It's foundational maintenance for digital trust.
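One way to picture crypto agility is a design where every protected record carries the identifier of the algorithm that produced it, and the algorithm in use is a single configuration value. The sketch below is a simplified illustration under that assumption. It uses standard-library hash functions as stand-ins for signature or key-exchange algorithms, it is not post-quantum cryptography itself, and the class and method names are hypothetical.

```python
import hashlib
import hmac
from typing import Tuple

# Registry of algorithm identifiers. Hash functions stand in here for the
# signature or key-establishment algorithms a real system would register.
ALGORITHMS = {
    "sha256": hashlib.sha256,
    "sha3_256": hashlib.sha3_256,
}


class DigestService:
    """Tags every output with its algorithm so the default can change later."""

    def __init__(self, current: str) -> None:
        if current not in ALGORITHMS:
            raise ValueError(f"unknown algorithm: {current}")
        self.current = current

    def protect(self, data: bytes) -> Tuple[str, str]:
        # Store the algorithm identifier alongside the digest.
        return self.current, ALGORITHMS[self.current](data).hexdigest()

    def verify(self, data: bytes, record: Tuple[str, str]) -> bool:
        # Verify with whichever algorithm the record says it used.
        name, digest = record
        expected = ALGORITHMS[name](data).hexdigest()
        return hmac.compare_digest(expected, digest)

    def needs_upgrade(self, record: Tuple[str, str]) -> bool:
        return record[0] != self.current


service = DigestService("sha256")
old_record = service.protect(b"contract-v1")

# Switching the default is one configuration change, not a rewrite.
service.current = "sha3_256"
print(service.verify(b"contract-v1", old_record))   # old records still verify
print(service.needs_upgrade(old_record))            # and can be flagged for re-protection
```

The design choice that matters is the tagged record: old data stays verifiable after the default changes, and anything still on the previous algorithm can be found and re-protected on a schedule.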

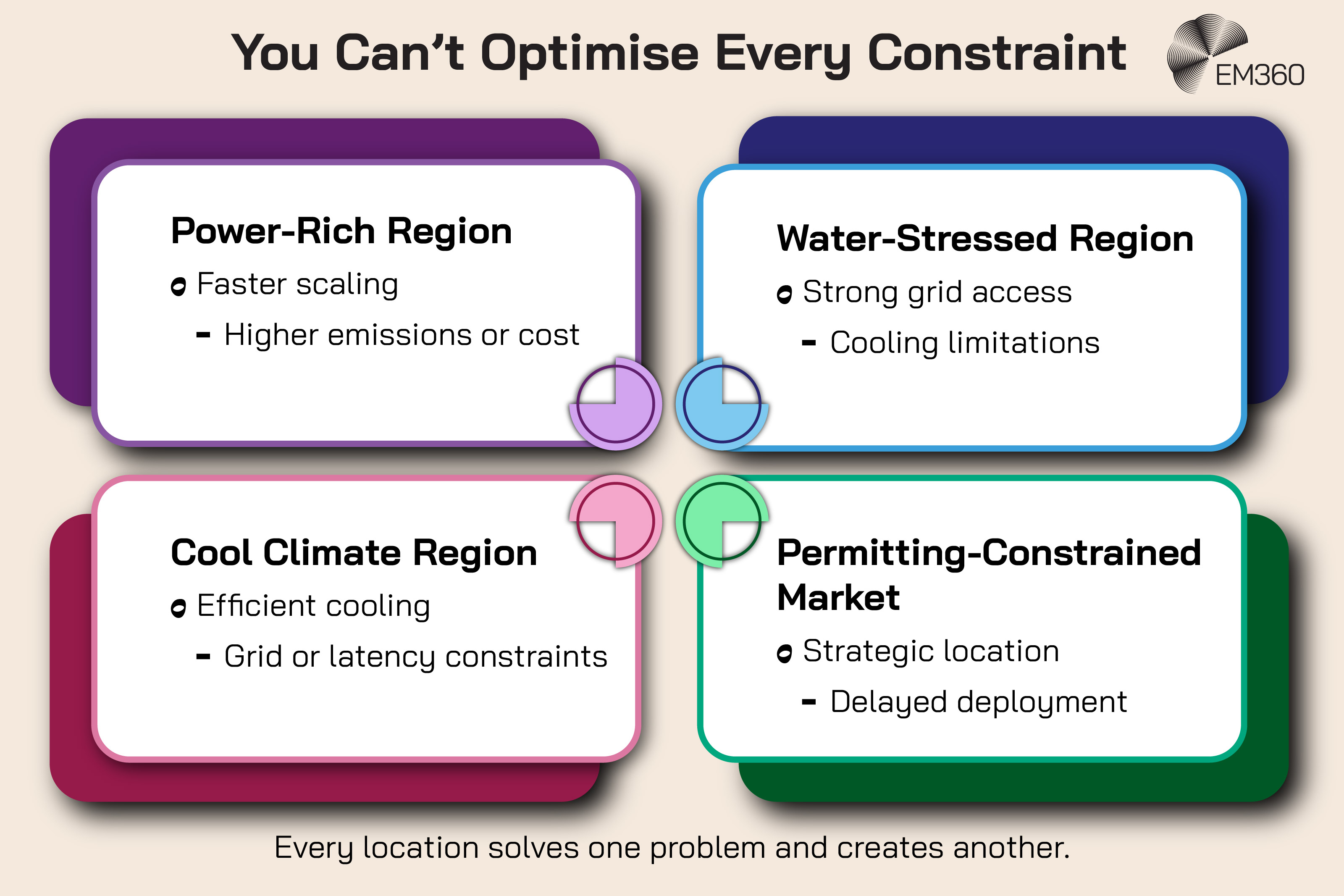

Location, cooling, and design will define scalability

The future of infrastructure will be shaped just as much by where systems are built as by what they are built to do.

Power-rich regions, water-stressed regions, areas with long grid connection delays, and markets with land or permitting friction don't offer the same path to scale. Cooling choice will increasingly follow local conditions rather than generic best practice.

Uptime’s water research makes this point clearly: site selection and facility design must reflect watershed capacity, climate, and cooling system type. That means data-centre design is no longer a downstream implementation detail. It's part of enterprise technology strategy.

And quantum will only sharpen that truth. Specialised hardware is unforgiving. It doesn't care how elegant the roadmap looked if the site, cooling, or control environment was wrong.

Final Thoughts: Compute Only Scales If It’s Governed

AI made the cost of compute impossible to ignore. Quantum makes it harder to postpone.

This isn’t about what’s coming next. It’s about whether the infrastructure behind your decisions can hold up under it. The organisations that get this right will treat compute as something to manage with intent, not something that quietly expands in the background.

That’s where the real advantage is starting to take shape. And it’s exactly where EM360Tech keeps the conversation focused as these shifts move from theory into reality.
