For a long time, enterprise technology treated scale as proof of success.

More users meant growth. More workloads meant demand. More automation meant progress. If the system held together, the assumption was that you were doing something right. If it started to strain, the answer was usually more capacity, more tooling, more cloud, more investment. Keep scaling. Keep moving.

That logic worked for years because the consequences were easy to hide. Infrastructure sat in the background. Governance gaps could be patched over. Security debt was often invisible until something went badly wrong. The system looked stable enough from a distance, even when it was already carrying too much weight.

Compute changed that.

AI infrastructure made the physical cost of digital growth impossible to ignore. Suddenly, decisions that once felt purely technical were tied directly to energy demand, cooling limits, land use, supply constraints, and long-term cryptographic risk. Scale stopped looking abstract. It started looking expensive, uneven, and hard to govern.

That matters, but not only because compute is under pressure. It matters because compute is showing us something bigger. The real problem isn't that one part of the stack got harder to expand.

The real problem is that enterprise systems are reaching a point where scale starts exposing everything around them: weak governance, poor visibility, fragmented accountability, and operating models that were never built for this level of complexity.

Compute Made The Cost Of Scale Impossible To Ignore

What changed isn't just the pace of growth, but the nature of the constraints behind it.

For years, scale was treated as a software problem. Something you could optimise, distribute, or abstract away with the right architecture. That assumption held because the limits sat far enough in the background to be ignored.

That’s no longer the case. The boundaries are now visible, measurable, and increasingly difficult to work around.

AI infrastructure has turned scale into a physical constraint

Enterprise technology spent years talking about compute as though it were elastic by default. Need more capacity? Spin it up. Need to support a larger model? Add more infrastructure. Need to serve more users? The cloud would handle it.

That story is harder to tell now.

The International Energy Agency says global electricity demand from data centres is set to more than double by 2030 to around 945 terawatt-hours, with AI as the most significant driver. It also says electricity demand from AI-optimised data centres is projected to more than quadruple by 2030.

In the United States, data centres are on course to account for almost half of electricity demand growth between now and 2030.

Those numbers matter because they force a different kind of conversation. This is no longer just about model performance or cloud spend. It's about whether physical infrastructure can keep up with the pace of digital ambition.

Uptime Institute’s 2025 global survey makes the pressure even clearer. It says the data-centre sector is facing rising costs, worsening power constraints, and growing difficulty in meeting AI demand.

It also found that the collection and reporting of key sustainability metrics had not improved in 2025, even as operators faced increasing power pressure and higher-density requirements.

That's a much more awkward reality than the language of scale tends to suggest. Software can move quickly. Power infrastructure doesn't. Grid upgrades, cooling design, land approvals, supply chains, and large-load planning all move on slower timelines.

Once AI pushed compute into the foreground, the fiction of frictionless scale got a lot harder to maintain.

Quantum readiness is exposing long-term risk we haven’t planned for

Quantum computing sharpens that pressure in a different way.

It's still often discussed as a future technology, which makes it easy to treat as somebody else’s problem. But the first serious enterprise consequence of quantum isn't a futuristic machine appearing overnight.

It's the fact that organisations already have to make decisions today about security, infrastructure planning, and cryptographic migration before the technology reaches full scale.

NIST released its first three final post-quantum cryptography standards in 2024 and says organisations should begin applying them now. Its migration guidance is blunt about the reason.

Encrypted data remains at risk from “harvest now, decrypt later” attacks, where adversaries collect encrypted data today in the hope of decrypting it later with a sufficiently capable quantum computer. NIST also says organisations need to identify where vulnerable algorithms are used and plan to replace or update them.
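To make the inventory step concrete, here is a minimal sketch of what "identify where vulnerable algorithms are used" could look like in practice. It is illustrative only: the system names, the `CryptoAsset` record, and the idea of ranking by how long data must stay confidential are assumptions made for this example, not part of NIST's guidance. The one fixed point is that widely used public-key families such as RSA, Diffie-Hellman, and elliptic-curve schemes are the ones generally considered exposed to a sufficiently capable quantum computer.

```python
from dataclasses import dataclass

# Public-key algorithm families generally considered vulnerable to a
# sufficiently capable quantum computer. Illustrative, not exhaustive.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA"}


@dataclass
class CryptoAsset:
    system: str               # hypothetical system name
    algorithm: str            # algorithm family currently in use
    data_lifetime_years: int  # how long the protected data must stay confidential


def needs_migration(asset: CryptoAsset) -> bool:
    """True if the asset relies on a quantum-vulnerable algorithm family."""
    return asset.algorithm.upper() in QUANTUM_VULNERABLE


def prioritise(assets: list[CryptoAsset]) -> list[CryptoAsset]:
    """Return vulnerable assets, longest-lived data first.

    Assumption: long-lived data is the most exposed to
    "harvest now, decrypt later", so it is ranked first.
    """
    vulnerable = [a for a in assets if needs_migration(a)]
    return sorted(vulnerable, key=lambda a: a.data_lifetime_years, reverse=True)


# Hypothetical inventory, for illustration only.
inventory = [
    CryptoAsset("payments-api", "RSA", 7),
    CryptoAsset("archive-backup", "ECDH", 25),
    CryptoAsset("internal-wiki", "ML-KEM", 2),
    CryptoAsset("vpn-gateway", "DH", 1),
]

for asset in prioritise(inventory):
    print(f"{asset.system}: {asset.algorithm}, "
          f"confidentiality needed for {asset.data_lifetime_years} years")
```

A real inventory would be far messier, covering certificates, libraries, protocols, and embedded devices, but even a rough list like this turns an abstract risk into a ranked backlog.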

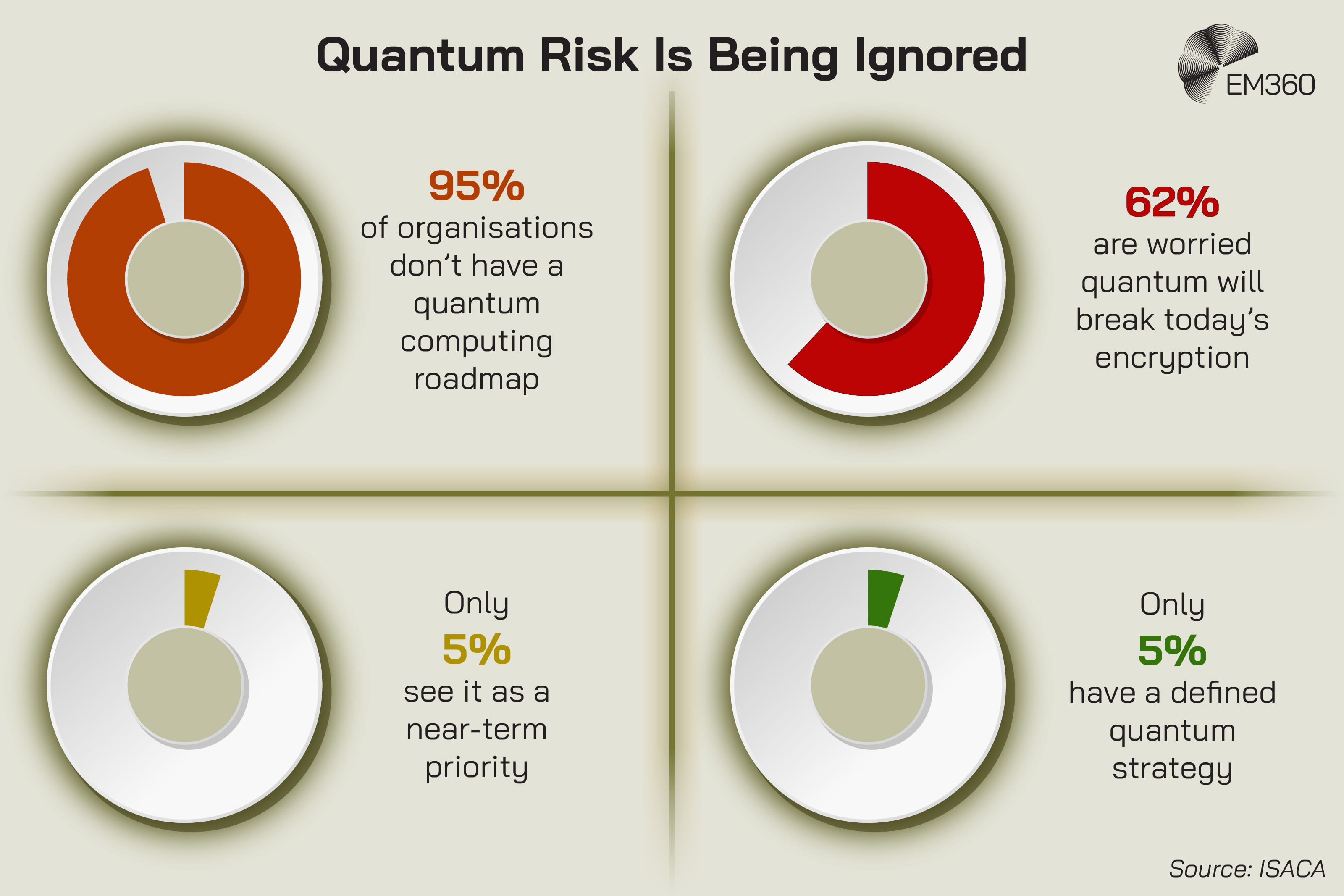

That's not a future problem. That's a migration problem that’s already started. The readiness gap is equally revealing. ISACA’s 2025 Quantum Pulse Poll found that 95 per cent of organisations don't have a quantum computing roadmap.

It also found that 62 per cent of respondents are worried quantum computing will break today’s internet encryption, yet only 5 per cent say it's a high near-term priority and only 5 per cent say their organisations have a defined quantum strategy.

At the same time, the major technology players are openly planning for scale. IBM says its Starling system is slated to run one hundred million gates on 200 logical qubits in 2029. Microsoft says its Majorana 1 architecture is designed with a path to one million qubits on a single chip.

Those timelines may shift, but the signal is clear enough. The leading vendors aren't treating quantum as a distant thought experiment. That's why compute matters here, but not as an isolated theme. It's the first domain where the hidden cost of scale became measurable. It's evidence, not the whole case.

How Technical Pressure Becomes Systemic

The pressure isn't isolated to one part of the stack. It follows a consistent path as systems expand.

What starts as a technical challenge rarely stays contained. As scale increases, the impact spreads outward, pulling more of the organisation into the problem. Teams that were once adjacent become responsible. Decisions that used to be local start carrying wider implications.

That's where scale begins to change the shape of the organisation itself.

From performance to governance

This is where the conversation gets more interesting.

When systems scale, problems don't stay where they started. A technical issue becomes an ownership issue. A performance challenge becomes a governance problem. A promising capability becomes a decision bottleneck because no one is fully sure who is accountable for its risks, its outputs, or its failure modes.

We’re already seeing that play out in AI adoption. McKinsey’s 2025 global survey found that 47 per cent of respondents say their organisations have experienced at least one negative consequence from generative AI use.

It also found that organisations vary widely in how closely outputs are reviewed: 27 per cent say all gen AI output is reviewed before use, while a similar share say 20 per cent or less is checked.

That's not just a tooling issue. It's a governance issue.

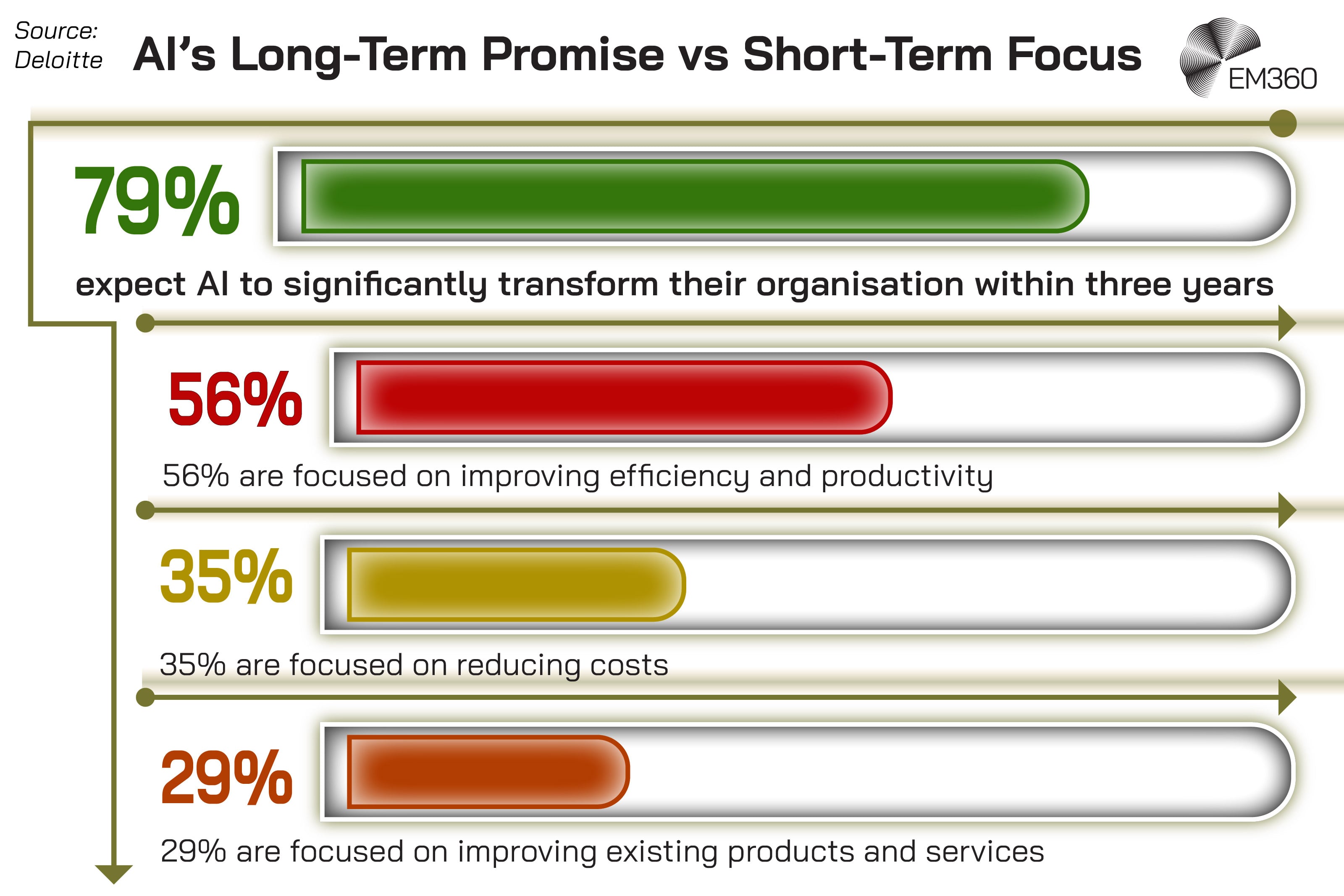

Deloitte’s 2024 year-end generative AI report makes the same point from another angle. It says organisational change only happens so fast, that regulation and risk loom large, and that agentic AI is on the rise even though the broader challenges facing generative AI still apply.

In other words, scale isn't just increasing what these systems can do. It's increasing the burden of deciding how they should be governed, by whom, and at what level of oversight. That's what systems pressure looks like when it stops being purely technical.

From infrastructure to sustainability and cost accountability

The same shift is happening with infrastructure.

Once compute starts drawing this much power, sustainability is no longer a nice-to-have layer sitting somewhere off to the side. It becomes part of infrastructure strategy, cost accountability, and risk management. The old habit of separating operational decisions from environmental consequences stops making sense.

The IEA’s analysis makes that impossible to ignore. It notes that data-centre power demand is rising sharply, that demand is geographically uneven, and that critical minerals and wider energy-security considerations are now part of the same picture. Uptime adds a governance layer to that by showing that sustainability reporting isn't improving at the same rate as demand pressure.

That gap matters. If infrastructure is scaling faster than organisations can measure, report, and justify its consequences, then leadership is making decisions with incomplete visibility. At that point, “sustainable AI” stops being a branding line and becomes a much harder question about cost, resilience, and local impact.

From security controls to systemic exposure

Security is where the pattern becomes clearest.

The World Economic Forum’s Global Cybersecurity Outlook 2026 says organisations are embracing AI and automation at scale even as governance frameworks and human expertise struggle to keep pace. It places that inside a broader risk landscape shaped by geopolitical fragmentation, widening capability gaps, and more complex attacks.

That's a useful framing because it moves the conversation away from isolated controls and towards systemic exposure.

At a certain point, the risk is no longer just whether one tool is configured correctly or one team is following policy. The risk is that interconnected systems are scaling faster than the organisation’s ability to understand their dependencies.

Supply chains, AI models, cloud estates, cryptographic exposure, and human oversight all start interacting in ways that are difficult to see until something goes wrong. That's not unique to compute. Compute was just the first to make it visible.

Why Systems Break Before They Fail

The warning signs rarely show up where most teams are looking.

By the time pressure becomes visible at the surface, the underlying structure has usually been under strain for a while. Small gaps start to connect. Dependencies tighten. What once felt manageable begins to behave unpredictably.

That shift is subtle at first, but it changes how the system responds long before anything actually stops working.

Scale increases complexity faster than control mechanisms evolve

When people hear “system failure”, they usually imagine an outage.

That's often the wrong image.

Modern enterprise systems usually break before they fail outright. They break when visibility drops. They break when teams can't agree on ownership. They break when governance is fragmented across platforms, functions, and committees that move more slowly than the systems they are supposed to oversee.

That's one reason scale feels manageable right up until it doesn't. The technical estate may still be running. The dashboards may still be green. But the organisation is already losing the ability to reason clearly about what is connected to what, what can be changed safely, and where the most serious risk really sits.

The system is functioning. Control is what starts to erode.

Visibility lags behind growth

That erosion gets worse when growth outruns measurement.

McKinsey’s survey found that most organisations aren't yet implementing the adoption and scaling practices associated with value creation, and that more than 80 per cent aren't seeing a tangible impact on enterprise-level EBIT from generative AI. At the same time, AI use is spreading across more functions, which means the challenge is no longer confined to a few experimental teams.

That kind of expansion creates an observability problem as much as a performance problem. The more distributed the system becomes, the harder it is to see the whole thing clearly. Data visibility fragments. Monitoring becomes uneven. Risk management lags. Leaders end up making enterprise decisions from partial operational pictures.

Once that happens, maturity starts to matter more than momentum.

Decision-making becomes the bottleneck

There’s also a human limit here that organisations don't always like admitting.

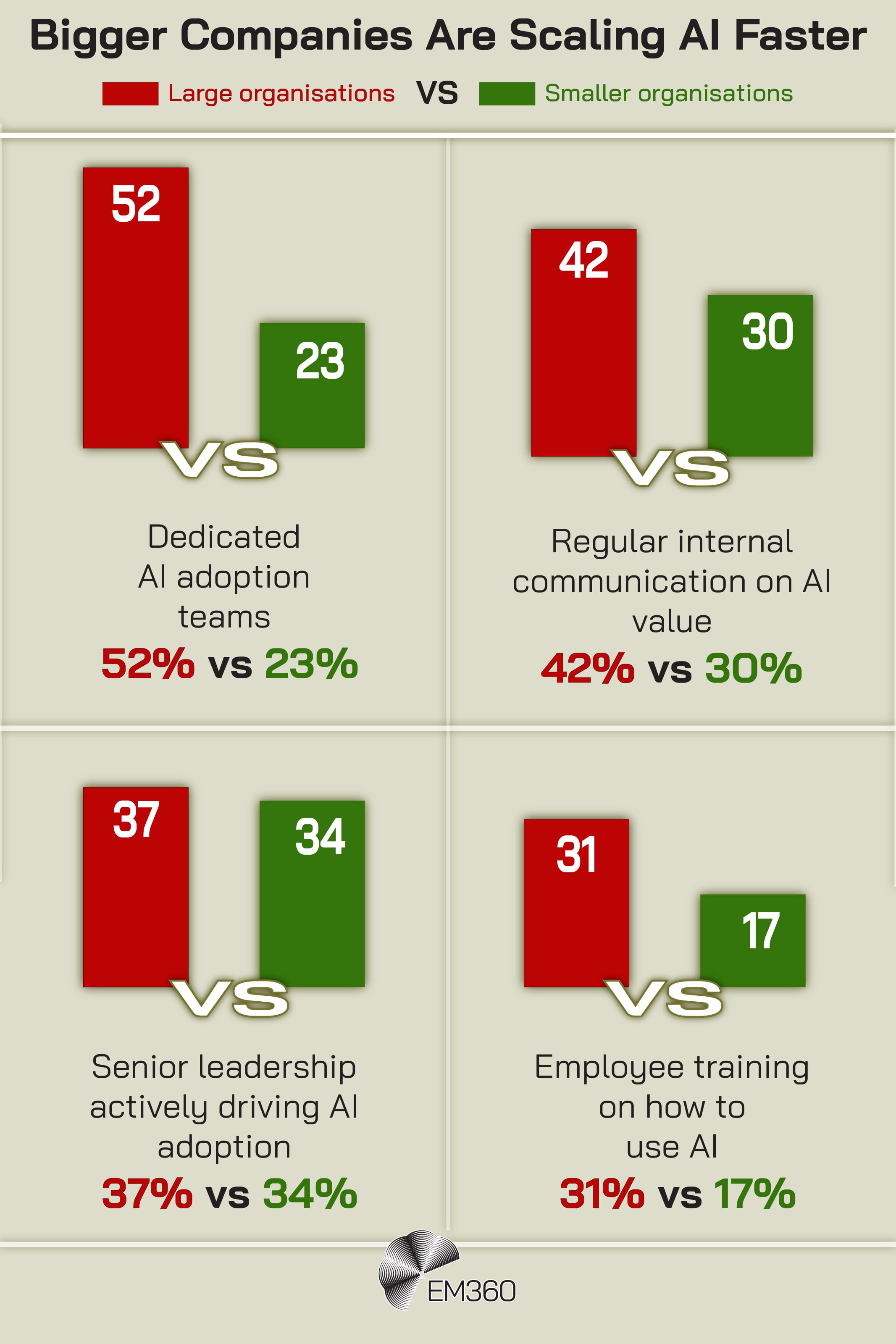

McKinsey’s January 2025 workplace report found that nearly all companies are investing in AI, but only 1 per cent describe themselves as mature on deployment. It also says the biggest barrier to scaling isn't employees who refuse to use the technology, but leaders who aren't steering fast enough.

That should sound familiar to anyone trying to scale enterprise systems responsibly. At some point, the bottleneck isn't compute, bandwidth, or tooling. It's whether decision-makers can set priorities, create guardrails, and build enough organisational clarity for scale to happen without chaos.

That's why systems maturity under pressure matters. It's not a question of whether the technology works. It's a question of whether the organisation can still govern what the technology creates.

What Mature Systems Do Differently At Scale

Not every organisation responds to this pressure in the same way.

Some keep adding layers in an attempt to stabilise what is already under strain. Others step back and rethink how their systems are structured, governed, and supported as they grow.

That difference becomes more visible the further systems scale.

They design for consequences, not just capability

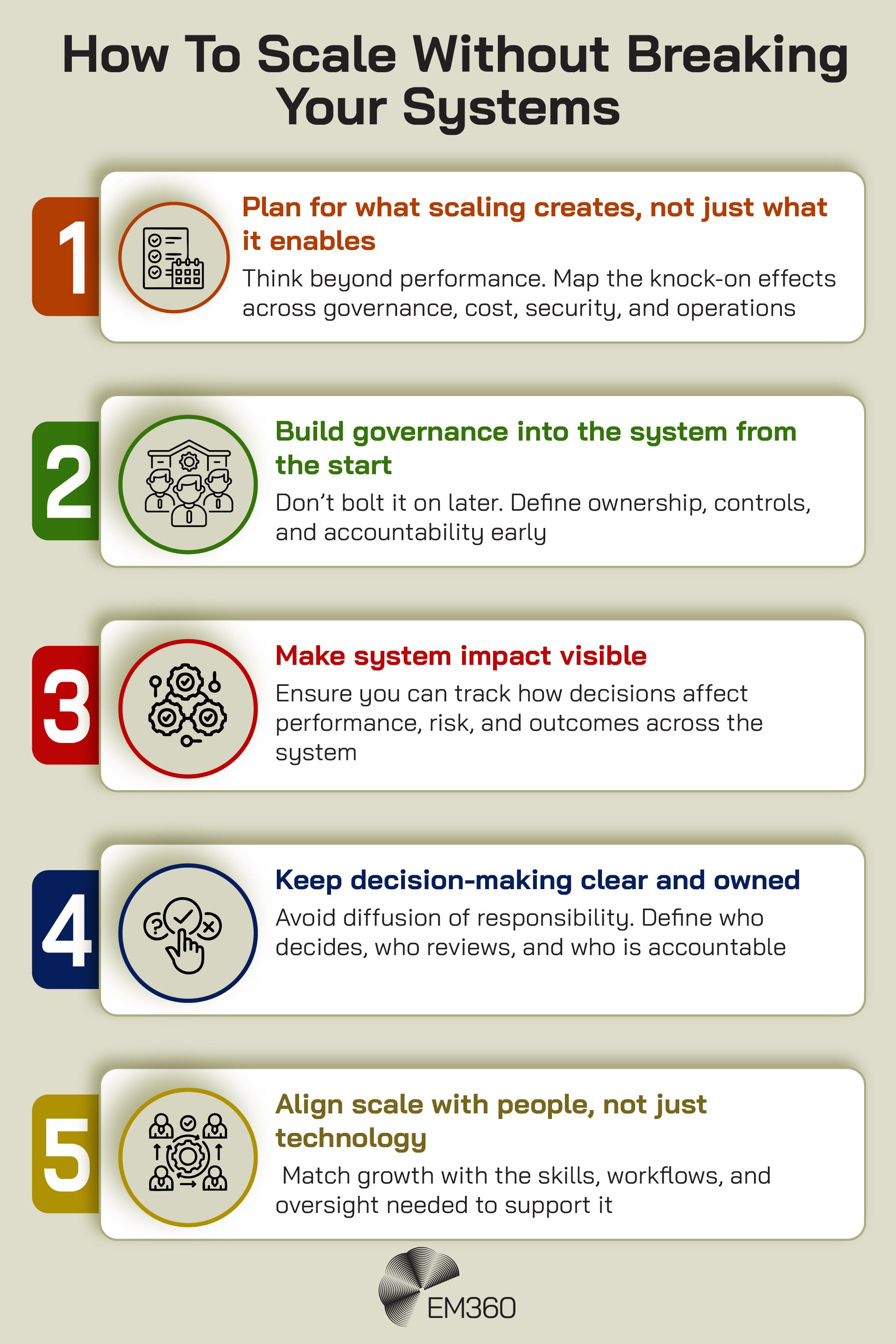

Mature organisations don't treat scale as a straight line from more demand to more capacity.

They assume that every major expansion of capability has second-order effects. More AI adoption changes governance requirements. More compute changes energy and infrastructure exposure. More automation changes the burden placed on human oversight. More connectivity changes resilience obligations.

That mindset matters because it shifts the planning question. Instead of asking, “Can we scale this?”, mature organisations ask, “What happens around this system if we do?”

That's systems thinking in practice. Not abstraction. Not theory. Just a more honest way of designing enterprise technology strategy.

They treat governance as infrastructure

The organisations that hold up best under pressure are usually the ones that stop treating governance as a final review step.

They build it into the system itself.

The World Economic Forum’s cyber outlook makes clear that AI and automation are advancing inside a landscape where governance frameworks and human expertise are already under strain. NIST’s post-quantum guidance says organisations must identify where vulnerable cryptography exists and begin planning migrations now. Those aren't side tasks. They're infrastructure tasks in their own right.

That's the shift. Governance by design is no longer just a nice phrase for conference slides. It's part of what makes a system scalable in the first place.

They align technical scale with human capacity

Mature organisations also accept a less glamorous point that matters a great deal: technical scale and human capacity have to grow together.

If leaders can't understand the implications of the systems they are funding, if teams don't have the workflows to review outputs safely, or if accountability is spread so thinly that no one owns the risk properly, scale becomes fragile very quickly.

This is where responsible growth starts to look less like an engineering challenge and more like organisational design. Skills matter. Reporting lines matter. Decision rights matter. Human-in-the-loop oversight matters, not because people are slowing innovation down, but because trust and judgement don't scale automatically.

That's one of the harder lessons enterprise technology keeps relearning.

What Compute Signals For Autonomy And Connectivity

The signals aren’t limited to one layer of enterprise technology.

Once you know what to look for, the same pressure starts to show up in different places, each with its own version of the problem. The details change, but the direction doesn’t. Scale keeps pushing responsibility outward, into areas that were never designed to carry it.

Autonomy will shift the burden onto human oversight

Compute exposed the physical limits of scale. Autonomy is starting to expose the cognitive and governance limits.

Deloitte says agentic AI is on the rise, but also stresses that the wider barriers facing generative AI still apply. McKinsey’s latest survey shows broader AI adoption, uneven oversight, and a large share of organisations already experiencing negative consequences.

That combination points to the same pattern. As organisations hand more decision-making to autonomous or semi-autonomous systems, the burden doesn't disappear. It shifts. Humans are left to define boundaries, monitor behaviour, investigate failures, and carry accountability for outcomes they may not fully control in real time.

That's not a future governance problem. It's already forming.

Connectivity will scale trust, not just traffic

Connectivity is showing the same pressure from a different angle.

Ericsson says total monthly global mobile network data traffic reached 200 exabytes in the fourth quarter of 2025, up 22 per cent year on year, and that video accounted for 76 per cent of all mobile data traffic at the end of 2025. It also says 5G subscriptions reached 2.9 billion by the end of 2025, accounting for one-third of all mobile subscriptions.
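The quoted figures imply a few numbers that are not stated directly. The short calculation below derives them as a rough sanity check. It uses only the values cited above and treats "one-third" as exactly 1/3, which is an assumption, so the results are approximations rather than figures reported by Ericsson.

```python
# Values as quoted in the text above.
traffic_q4_2025_eb = 200.0   # monthly mobile data traffic, exabytes
yoy_growth = 0.22            # 22 per cent year-on-year growth
video_share = 0.76           # video's share of mobile data traffic
subs_5g_bn = 2.9             # 5G subscriptions, billions
share_5g = 1 / 3             # assumption: "one-third" taken as exactly 1/3

# Implied monthly traffic one year earlier.
traffic_prior_year_eb = traffic_q4_2025_eb / (1 + yoy_growth)

# Implied monthly video traffic.
video_traffic_eb = traffic_q4_2025_eb * video_share

# Implied total mobile subscriptions.
total_subs_bn = subs_5g_bn / share_5g

print(f"Implied traffic a year earlier: ~{traffic_prior_year_eb:.0f} EB per month")
print(f"Implied video traffic: ~{video_traffic_eb:.0f} EB per month")
print(f"Implied total mobile subscriptions: ~{total_subs_bn:.1f} billion")
```

On these assumptions, roughly 36 exabytes of monthly traffic were added in a single year, most of it video.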

Those figures tell a simple story on the surface: more traffic, more 5G, more participation. But the more important story is what comes with that growth.

GSMA’s Mobile Net Zero 2025 guidance says operators should integrate climate risk assessment into network planning, investment, and technology deployment decisions, design equipment for greater operational resilience, and address cross-sector interdependencies during climate-related disasters.

That's the same pattern again. Connectivity at scale isn't just a network story. It's a trust story, a resilience story, and a sustainability story. The more connected the system becomes, the more obligations it carries.

These aren't three separate trends. They're three versions of the same pressure.

Final Thoughts: Scaling Systems Means Owning Their Consequences

Compute made one thing very hard to ignore: scale has a cost, and that cost doesn't stay neatly contained inside the system that triggered it.

AI infrastructure forced the issue first. It showed that digital growth has physical limits, environmental consequences, and long-tail security implications that many organisations were not planning for seriously enough.

But the bigger lesson isn't about compute alone. It's about what scale reveals once systems become large enough, connected enough, and important enough to strain the structures around them.

That's where enterprise systems now sit.

The organisations that keep treating scale as a capability race will keep discovering limits the hard way. First in compute. Then in autonomy. Then in connectivity. The organisations that do better will be the ones that treat scale as a consequence-management problem from the start.

They will design for pressure, build governance into the architecture, and treat visibility, resilience, and human capacity as part of what makes growth viable. That's the more useful way to read what is happening now. Not as a string of disconnected technology trends, but as a broader test of systems maturity under pressure.

It's also where EM360Tech’s wider coverage becomes most valuable, because the pattern only really comes into focus when you look across domains rather than inside one silo at a time.
