For a while, the easiest way to understand generative AI was to think of it as a very fast thinking partner. You asked a question, it answered. You gave it a draft, it improved it. You dropped in a block of data, and it helped you make sense of the mess. Useful, yes. Sometimes impressively so. But still mostly reactive.

Over the past few months, though, OpenAI has pushed GPT-5.4 as a model designed for professional work, with native computer-use capabilities and stronger performance on knowledge-work tasks.

Microsoft has been building Copilot and Copilot Studio around agents that can generate documents, charts, presentations, and automated workflows. Anthropic’s computer use tooling lets Claude navigate software through screenshots, mouse control, and keyboard input.

Cognition’s Devin continues to position itself as an AI software engineer, while Rabbit’s teach mode points to a consumer-facing version of the same idea: systems that don’t just answer, but act.

Seen one by one, these look like product updates. Seen together, they reveal something bigger. AI is moving beyond generating text and starting to execute structured digital tasks inside real workflows. That's what makes this moment different. The first generation of generative AI helped people think. The next generation is being built to do the work itself.

The Pattern Emerging Across The AI Industry

The most important signal here is convergence. This shift isn't coming from one company chasing a headline. It is appearing across several AI ecosystems at once, which usually means the market has found a direction worth betting on.

OpenAI’s GPT-5.4 is probably the clearest recent example. The company describes it as built for professional work, highlights performance on knowledge-work tasks, and says it can operate across tools, software environments, spreadsheets, presentations, and documents.

Microsoft is pushing in a similar direction through Copilot Studio and Microsoft 365 Copilot, where agents can now generate Word documents, Excel worksheets, PowerPoint presentations, and broader workflow outputs using natural language. Anthropic’s computer use tool gives Claude the ability to capture screenshots, move a cursor, click, type, and interact with desktop interfaces.

Devin sits inside the same pattern from the software side, positioning itself as an AI engineer rather than a coding autocomplete tool. Rabbit’s teach mode applies a consumer version of the model, letting users demonstrate app-based tasks that an agent can later repeat.

An important caveat is that these systems aren't identical. Some focus on coding, some on workplace productivity, some on desktop interaction, and some on app automation. But the shared goal is hard to miss. They’re all trying to move AI from conversation to task execution.

Not full autonomy. Not a replacement for whole departments. Something more specific, and in some ways more important: bounded systems that can complete pieces of professional work inside environments businesses already use. That convergence is the real story behind the latest wave of AI releases.

Why Knowledge Work Is The Next Frontier For AI

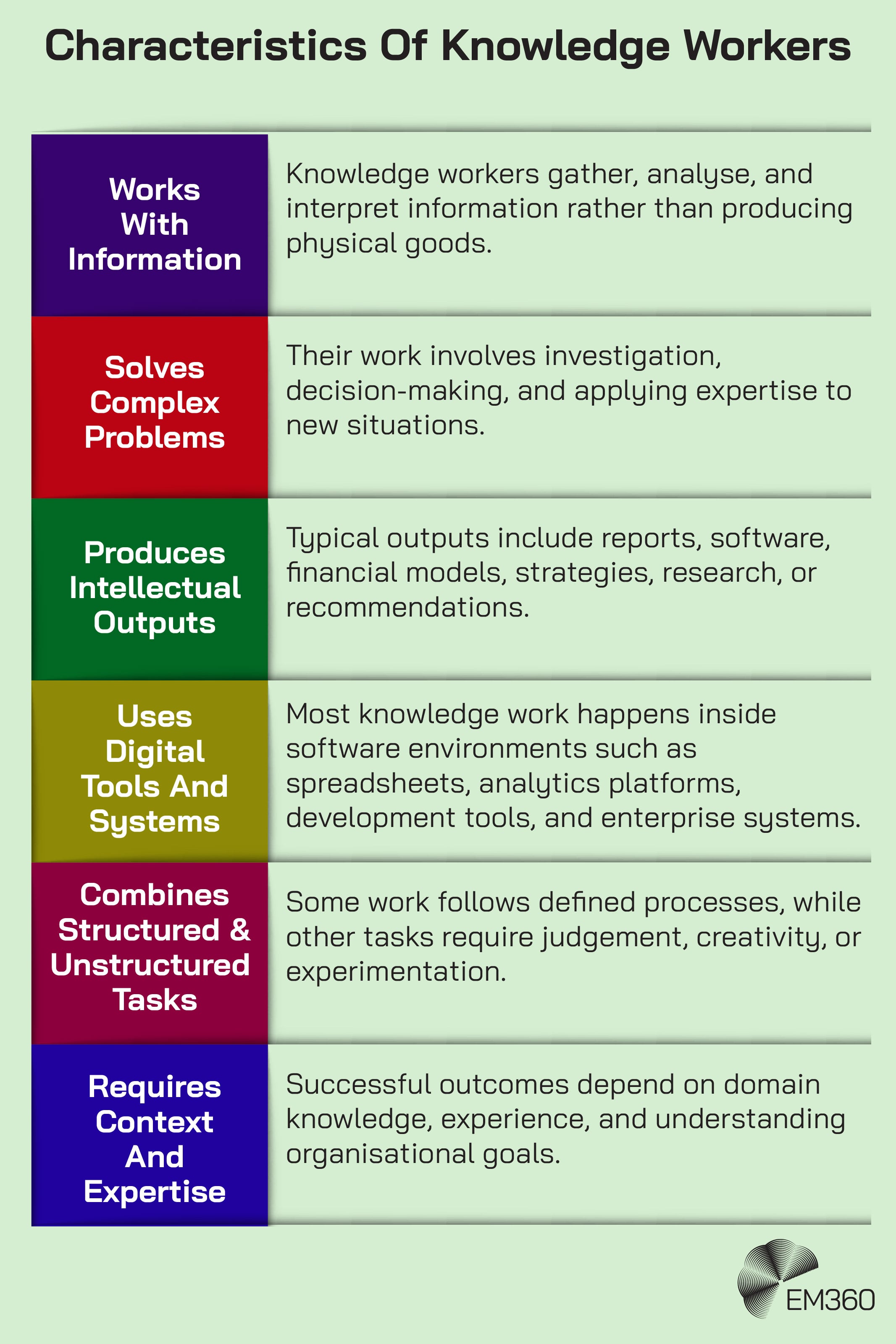

The phrase “knowledge work” comes from management thinker Peter Drucker, who used it to describe work built around information, judgement, and problem-solving rather than physical production.

Analysts building models, developers debugging code, finance teams running scenarios, operations teams coordinating workflows, and support teams investigating issues all fall into that category. The output may be a spreadsheet, a report, a recommendation, a fix, or a decision. But the raw material is usually information.

That matters because a surprising amount of knowledge work is more structured than it first appears. Much of it involves gathering inputs, checking systems, applying known rules, synthesising documents, and producing outputs in a repeatable format.

That doesn't make it trivial. It does make it increasingly reachable for AI systems that can reason across multiple steps, pull context from large volumes of material, and use software tools rather than just describe them.

The market data backs up the relevance of this shift. McKinsey found that 92% of companies plan to increase AI investment over the next three years, but only 1% describe their organisations as mature in deployment, meaning AI is fully integrated into workflows and driving substantial outcomes.

That gap says a lot. Leaders clearly see value in AI-enabled work, but most organisations are still in the awkward middle ground between experimentation and operational reality.

The Technologies That Make AI Knowledge Work Possible

This shift isn't happening because one magic feature suddenly appeared. It is happening because several enabling technologies are starting to show up together in the same systems.

The first is stronger AI reasoning models. Newer models are better at handling multi-step logic, following instructions through longer tasks, and producing more coherent outputs when the job isn't just “answer this question” but “get from here to there without losing the thread.” That doesn't eliminate mistakes, but it does make more structured tasks viable. OpenAI’s GPT-5.4 is a good example, positioned as combining reasoning, coding, and agentic workflows in one model.

The second is long-context AI. Large context windows let models process much more information in one go, including lengthy documents, codebases, spreadsheets, logs, and research materials. OpenAI says GPT-5.4 supports up to one million tokens of context in the API and Codex, which matters because many real business tasks fall apart when the system cannot hold enough context to work consistently.

The third is AI tool use. Instead of stopping at generated text, models can now call tools, search connectors, use APIs, access enterprise data sources, and hand off actions to external systems. Microsoft’s Copilot Studio is explicitly being built around this idea, while OpenAI’s tool search is designed to make tool-heavy workflows more efficient and less expensive to run.
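
To make the idea of tool use concrete, here is a minimal sketch of the general pattern: a model emits a structured request naming a tool and its arguments, and the surrounding application looks that tool up and runs it. This is not how Copilot Studio or OpenAI's tool search is implemented. The registry, the tool names (`sum_column`, `count_rows`), and the call format are all assumptions made up for illustration.

```python
from typing import Any, Callable, Dict, List

# Hypothetical tool registry. Names and functions are illustrative only.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str):
    """Decorator that registers a plain function under a tool name."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("sum_column")
def sum_column(rows: List[dict], column: str) -> float:
    """Add up one numeric column across a list of row dictionaries."""
    return sum(float(row[column]) for row in rows)


@tool("count_rows")
def count_rows(rows: List[dict]) -> int:
    """Return how many rows were supplied."""
    return len(rows)


def dispatch(call: dict) -> Any:
    """Run one structured call of the assumed form {"tool": name, "args": {...}}."""
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("args", {}))


# In a real system the call below would come from the model's output.
rows = [
    {"region": "EMEA", "revenue": "120.5"},
    {"region": "APAC", "revenue": "79.5"},
]
total = dispatch({"tool": "sum_column", "args": {"rows": rows, "column": "revenue"}})
print(total)
```

The design point is that the model never runs code directly. It can only ask for tools the application has chosen to register, which is also where cost and access controls tend to be applied.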

The fourth is computer-use AI. This is the ability to interact directly with software interfaces by reading screens and performing actions through a cursor or keyboard. Anthropic’s documentation is very direct about what this means in practice: screenshot capture, mouse control, keyboard input, and desktop automation. OpenAI is now moving in the same direction with GPT-5.4.

The fifth is AI workflow orchestration. This is less flashy, but arguably more important. A useful AI knowledge worker doesn't just perform one isolated action. It chains actions together across tools and steps.

None of these capabilities alone creates an AI knowledge worker. The shift becomes possible because they are now appearing together inside the same systems. That's what makes this moment feel different from earlier AI hype cycles, which often promised automation without the technical scaffolding to deliver it.
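
Orchestration, the fifth capability, is easier to picture with a small example. The sketch below chains three steps, passes shared state from one to the next, and records a trace so a human can see what happened. It is an illustration under assumptions, not any vendor's orchestration layer: `run_workflow`, the step names, and the sample figures are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# A step is a name plus a function that reads the shared state
# and returns the new keys it wants to add to it.
Step = Tuple[str, Callable[[Dict], Dict]]


def run_workflow(steps: List[Step], state: Dict) -> Tuple[Dict, List[str]]:
    """Run steps in order, merging each result into the state.

    Stops at the first failure and returns the state reached so far,
    along with a human-readable trace of every step attempted.
    """
    trace: List[str] = []
    for name, fn in steps:
        try:
            state = {**state, **fn(state)}
            trace.append(f"{name}: ok")
        except Exception as exc:
            trace.append(f"{name}: failed ({exc})")
            break
    return state, trace


def gather(state: Dict) -> Dict:
    # Stand-in for pulling numbers from a connected system.
    return {"figures": [120, 95, 143]}


def analyse(state: Dict) -> Dict:
    figures = state["figures"]
    return {"total": sum(figures), "peak": max(figures)}


def draft_report(state: Dict) -> Dict:
    return {"report": f"Total {state['total']}, peak {state['peak']}."}


final_state, trace = run_workflow(
    [("gather", gather), ("analyse", analyse), ("draft_report", draft_report)],
    {},
)
print(final_state["report"])
print(trace)
```

Stopping at the first failed step, rather than pressing on with partial data, is a deliberate choice here. It keeps the system bounded and leaves a clear point for a person to step in.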

What AI Knowledge Work Actually Looks Like In Practice

The easiest way to make this less abstract is to look at where task execution is already starting to show up.

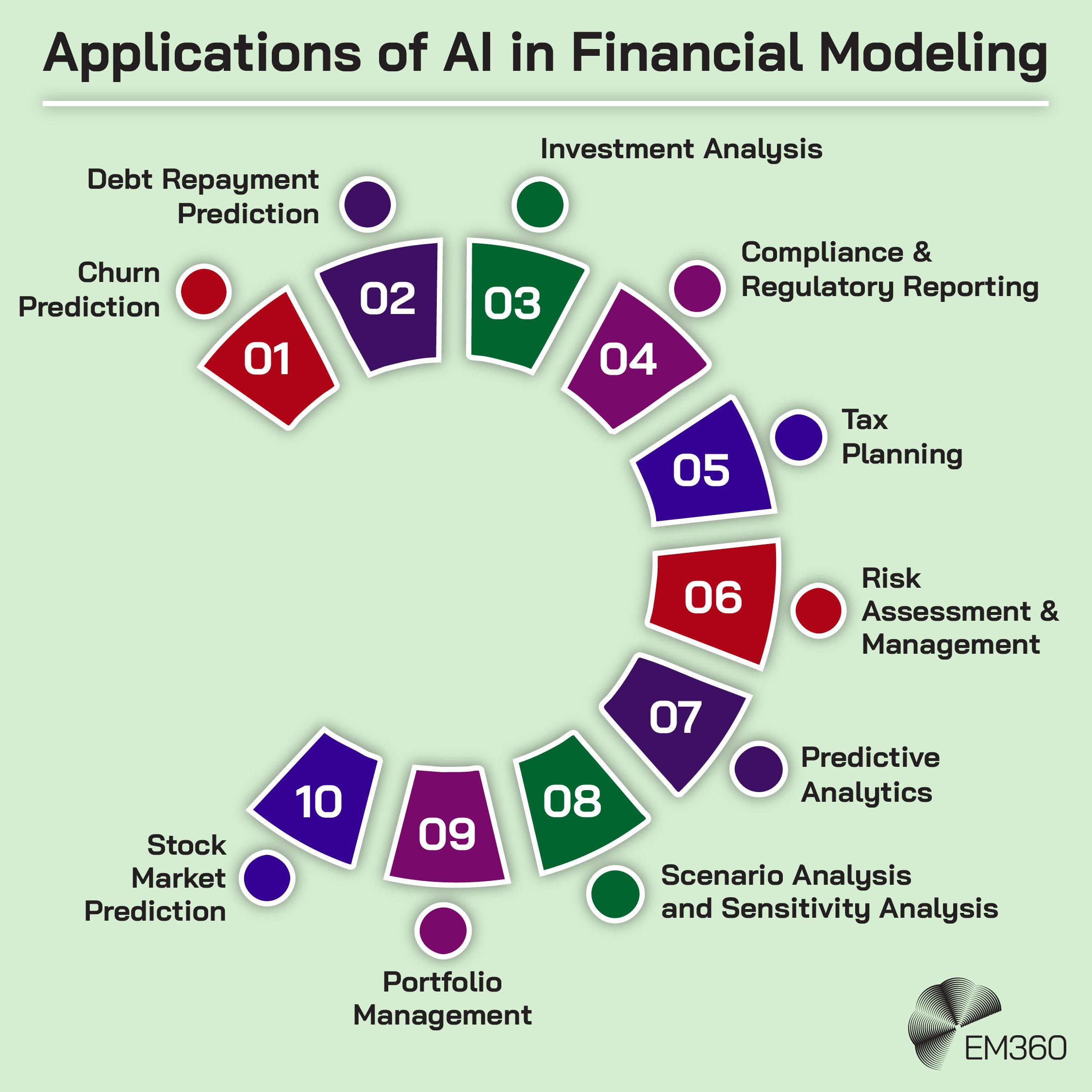

In finance and analysis, AI systems are beginning to generate spreadsheet models, run scenario analysis, summarise market research, and pull together decision-ready material from multiple sources. OpenAI’s Excel integration and financial data connectors make that direction explicit. The value isn't just faster summarisation. It is reducing the distance between a business question and an actionable output.

In software development, the picture is even clearer. Devin is built around the idea that an agent can work through engineering tasks using tools, code editors, and browsers. Whether or not any one vendor’s claims hold up in every environment, the pattern is unmistakable. AI is moving from assisting with code generation to participating in debugging, documentation, review, and other defined engineering tasks.

In operations and reporting, AI systems can already compile summaries, aggregate metrics, and coordinate data across internal tools. Microsoft’s examples of agents generating reports, presentations, and analysis inside Copilot are useful here because they show AI moving into the routine production layer of knowledge work, not just the brainstorming layer.

In customer support, agents can gather information from knowledge bases, logs, prior cases, and connected systems to propose likely resolutions or prepare a cleaner handoff to a human. Microsoft has cited real customer examples where agents now handle materially more conversations and resolve more cases without escalation.

None of this means humans disappear. Right now, the more credible model is collaboration rather than replacement. These systems act more like assistive collaborators than independent decision-makers. Humans still review, validate, prioritise, and intervene. The real change is that AI is beginning to handle more of the task execution layer, not just the thinking layer.

What Enterprise Leaders Should Start Preparing For

AI systems are starting to participate in everyday knowledge work, which means enterprise leaders are moving into unfamiliar territory. The question is no longer whether AI can assist with work, but how organisations should structure teams and workflows around it. Several early signals already point to what this transition may look like.

Human-agent collaboration will become a new operating model

Microsoft’s 2025 Work Trend Index says 81% of leaders expect agents to be moderately or extensively integrated into their AI strategy within the next 12 to 18 months. That doesn't suggest a world where humans vanish. It suggests a world where more teams supervise, direct, and review AI systems performing bounded tasks. Managing those relationships will become part of normal operating practice.

Workflow design will matter more than model choice

The obsession with choosing the “best model” misses the harder question. Where does the model fit? Value comes from integrating AI into actual processes, with clear handoffs, permissions, and outcomes. McKinsey’s maturity gap points to the same truth. Many firms are investing, but far fewer have figured out how to embed AI into workflows in a way that consistently produces business value.

Governance becomes more complex when AI executes actions

It is one thing to monitor generated text. It is another to monitor systems that click, type, retrieve, move data, or trigger downstream effects. Anthropic’s own documentation warns about risks such as prompt injection and sensitive-account access in computer-use settings. Governance for agentic systems will need to cover permissions, traceability, containment, and accountability far more tightly than ordinary chatbot usage.
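
One way to picture permissions, traceability, and accountability working together is a gate that every agent action must pass through. The sketch below is a simplified illustration, not a recommended control framework or any product's API. The `ActionGate` class, the action names, and the approval rule are assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class ActionGate:
    """Hypothetical gate that checks and logs every action an agent requests."""
    allowed: Set[str]          # actions the agent may perform at all
    needs_approval: Set[str]   # allowed actions that also need a human sign-off
    audit_log: List[dict] = field(default_factory=list)

    def request(self, agent: str, action: str,
                approved_by: Optional[str] = None) -> bool:
        """Return True if the action may proceed. Every request is logged."""
        if action not in self.allowed:
            granted, reason = False, "not permitted"
        elif action in self.needs_approval and approved_by is None:
            granted, reason = False, "approval required"
        else:
            granted, reason = True, "ok"
        self.audit_log.append({
            "agent": agent,
            "action": action,
            "approved_by": approved_by,
            "granted": granted,
            "reason": reason,
        })
        return granted


gate = ActionGate(
    allowed={"read_report", "send_email"},
    needs_approval={"send_email"},
)
print(gate.request("agent-1", "read_report"))                  # low-risk, allowed
print(gate.request("agent-1", "send_email"))                   # blocked until approved
print(gate.request("agent-1", "send_email", approved_by="a.manager"))
print(gate.request("agent-1", "delete_records"))               # never on the allowlist
```

Denied requests are logged as carefully as granted ones. In practice that record of what an agent tried to do is often as useful to reviewers as the record of what it actually did.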

Skills will shift toward supervision and systems thinking

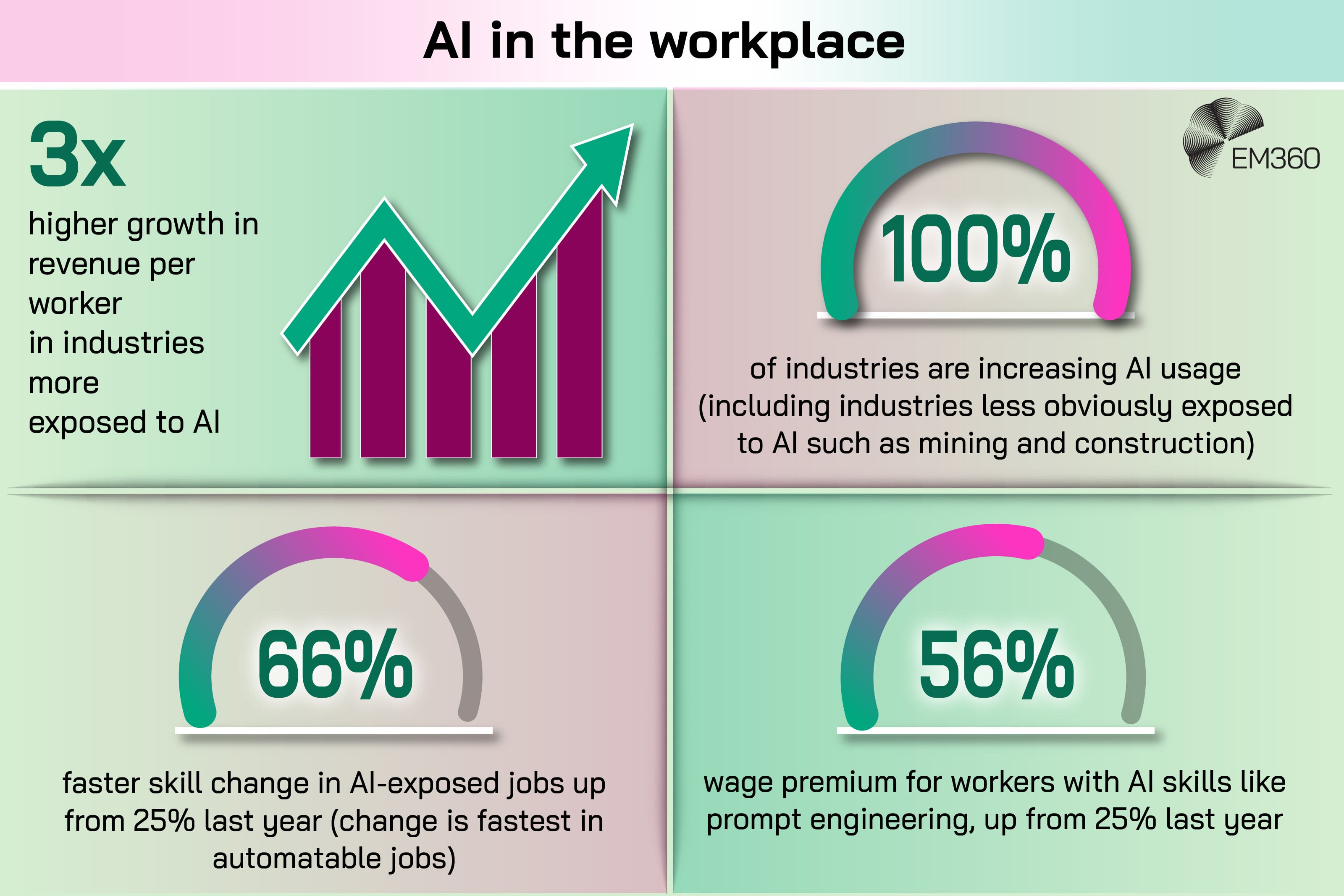

The labour picture is more complicated than simple replacement stories suggest. PwC’s 2025 AI Jobs Barometer found that AI-exposed industries saw productivity growth rise sharply, that AI-skilled workers commanded a 56% wage premium in 2024, and that skills required in AI-exposed jobs are changing 66% faster than in less exposed ones. The World Economic Forum says nearly 40% of skills required on the job are expected to change by 2030. Put plainly, the future of knowledge work is likely to reward people who can work with intelligent systems, judge outputs, and design better workflows around them.

Final Thoughts: The Age Of AI Knowledge Work Has Already Started

AI systems are starting to execute pieces of professional work inside the same tools and workflows people use every day. That shift changes the nature of the enterprise AI conversation.

The question is no longer just what AI can generate. It is how organisations structure work when some parts of analysis, reporting, coding, and coordination can be handled by machines.

That turns knowledge work into a design challenge. Leaders will need to decide which tasks belong to humans, which can be delegated to AI systems, and how those pieces connect inside real workflows. The companies that treat AI as a workflow redesign problem rather than just a software upgrade are far more likely to see meaningful productivity gains.

The technology enabling AI knowledge work is moving quickly. Understanding how it fits into real organisations is the harder part. EM360Tech will keep following the systems, strategies, and leadership decisions shaping the next phase of enterprise AI.
