For a long time, compute felt almost weightless.

You bought capacity. You spun up services. You moved workloads into the cloud. And if you needed more, the answer usually looked simple. Add instances. Expand storage. Increase spend. The whole experience of modern cloud computing was designed to make infrastructure feel elastic, remote, and somehow slightly unreal.

That was the point. Abstraction removed friction. It sped up delivery, reduced operational drag, and gave enterprise teams room to build faster than they could when every decision depended on physical hardware sitting in a known room.

Now that illusion is starting to crack.

The rise of AI has pushed compute infrastructure back into contact with the physical world. Energy, cooling, land, transmission, water, and grid access don’t sit quietly in the background anymore. Instead they’re turning into visible constraints.

The International Energy Agency estimates that data centres used around 415 terawatt-hours of electricity in 2024, about 1.5 per cent of global electricity consumption, and projects that figure to reach roughly 945 terawatt-hours by 2030. That isn't a minor side effect. It's a systems signal.
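To put that projection in per-year terms, here is a minimal Python sketch that derives the compound annual growth rate implied by the two IEA figures quoted above. The function name `implied_annual_growth` is illustrative, and the calculation assumes smooth compounding between 2024 and 2030, which is a simplification and not something the IEA claims.

```python
def implied_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, an end value, and a span."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above from the IEA: about 415 TWh in 2024, roughly 945 TWh by 2030.
iea_growth = implied_annual_growth(415, 945, 2030 - 2024)

print(f"Implied growth in data centre electricity use: {iea_growth:.1%} per year")
```

On those two endpoints, the implied rate comes out a little under 15 per cent a year, sustained for six years.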

So the real shift isn't that compute got more powerful. It’s that its consequences are now impossible to ignore.

Compute Was Designed To Feel Infinite

This didn't happen because the industry was careless. It happened because abstraction was useful. It solved real problems. It just didn't erase the physical systems underneath them.

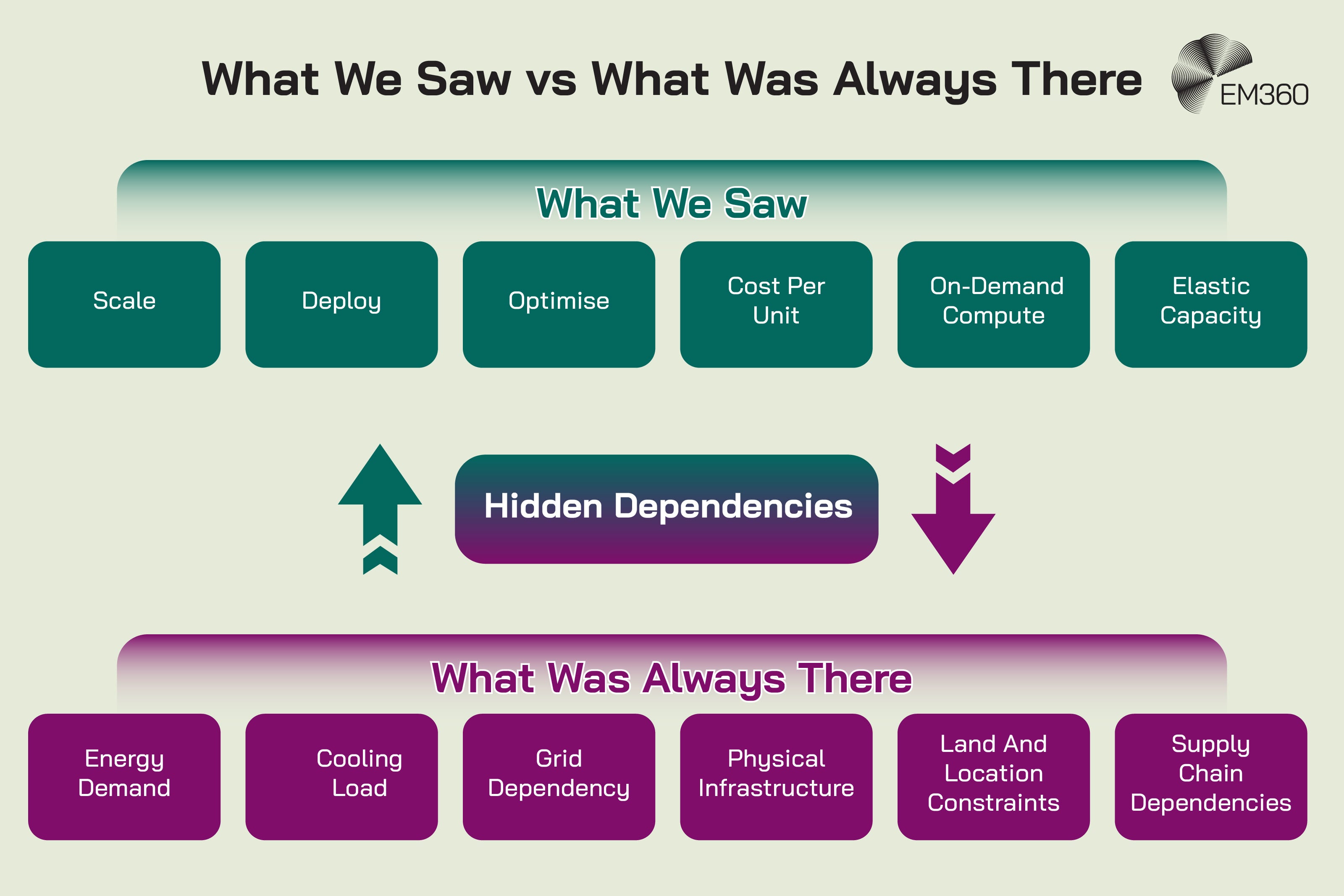

Cloud changed how enterprises thought about infrastructure by moving attention away from hardware ownership and toward service consumption. That made sense. When infrastructure becomes easier to provision, teams can focus on products, platforms, and outcomes instead of racks, procurement, and floor space. But cloud abstraction only removed friction.

It didn't remove energy demand, material constraints, or dependence on the wider built environment. Those stayed put. They just became easier to ignore.

Abstraction removed friction, not physical limits

The cloud era trained organisations to think in logical layers. Capacity became something you requested, not something you physically planned for. Location became less visible. Hardware became somebody else’s operational problem. Even scalable infrastructure started to sound less like engineering and more like software.

That mental shift delivered speed, but it also encouraged a very specific blind spot. It made compute feel detached from power systems, cooling systems, supply chains, and land use, even though none of those things ever left the picture.

That distinction matters now because the physical layer has started pushing back. The IEA notes that there is no AI without energy, and that training and deploying AI models happens in large, power-hungry data centres. It also warns that affordable, reliable, and sustainable electricity supply will be a crucial determinant of who can scale AI effectively.

Efficiency became the only visible metric

Once compute was abstracted, the clearest questions became familiar ones. What does it cost? How fast does it run? Can it scale? Those are reasonable questions. They're also incomplete.

For years, compute efficiency was measured mainly in performance per dollar, utilisation, and delivery speed. The visible scorecard rewarded cheaper units of compute, faster deployment, and greater flexibility.

It didn't do much to account for external infrastructure strain, local environmental pressure, or the way concentrated demand can distort regional energy systems. The metric was not wrong. It was just too narrow.

That narrowness helps explain why so many enterprise strategies still treat rising data centre demand like a procurement challenge instead of a structural one. If the only cost you can see is the invoice, the real cost arrives much later, usually wearing work boots and carrying a transformer shortage.

AI Is Forcing Compute Back Into The Physical World

AI didn't invent the material limits of digital infrastructure. It exposed how thoroughly we had learned to look past them.

The difference is scale, intensity, and continuity. Traditional enterprise workloads could often be planned around fairly stable assumptions. AI workloads break that rhythm. They raise the baseline and then keep it there.

Workloads are growing faster than infrastructure assumptions

There is a useful distinction here between training and inference. Training a model is the heavy, concentrated process where a system learns from vast amounts of data. Inference is what happens every time that model is used to answer a prompt, classify an image, or support a workflow in production.

Training gets attention because it's spectacularly compute-intensive. Inference matters because it turns that demand into a constant operational condition. That changes the shape of infrastructure planning. It's no longer just about rare spikes. It's about sustained AI workloads layered into normal business operations.

The U.S. Department of Energy said in late 2024 that data centre load growth had already tripled over the past decade and was projected to double or triple again by 2028, with total data centre electricity use rising from 176 terawatt-hours in 2023 to between 325 and 580 terawatt-hours by 2028.

Power and cooling are now first-order constraints

This is where the conversation becomes much less abstract.

AI doesn't just need more servers. It needs more electricity delivered to the right place at the right time, and it needs far more heat removed from dense environments that were not always built for this kind of high-performance computing.

The IEA warns that electricity grids are already under strain in many places and estimates that around 20 per cent of planned data centre projects could be at risk of delay unless those risks are addressed. It also notes that wait times for critical grid components such as transformers and cables have doubled in the past three years.

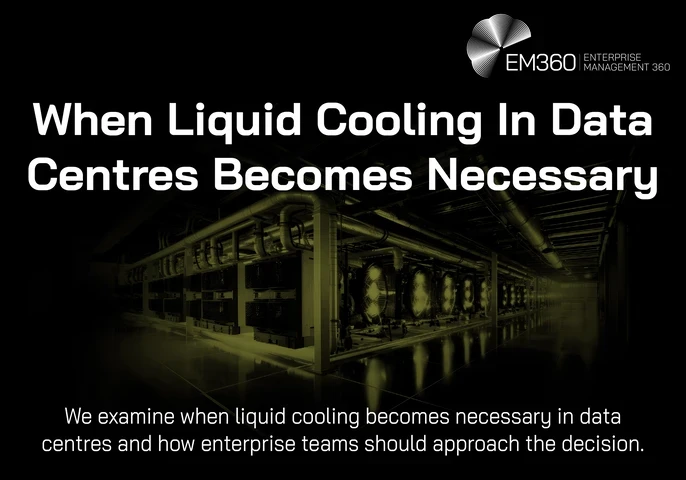

Cooling is part of the same story. As AI clusters become denser, traditional air-based approaches struggle to keep pace. Schneider Electric argues that liquid cooling is moving from niche to mainstream because rack densities associated with advanced AI are now pushing beyond the range that older thermal assumptions can comfortably handle.

Vendor material always deserves a raised eyebrow, but on this point it aligns with what the wider market is already showing. Thermal management is no longer a technical footnote. It's an infrastructure risk.

Location now matters again

Cloud taught the industry to speak as if place barely mattered. AI is undoing some of that.

When compute demand intensifies, location becomes strategic again. Proximity to generation capacity matters. Grid availability matters. Cooling options matter. So do land, permitting, and regional bottlenecks.

The IEA notes that AI-focused data centres are far more geographically concentrated than many other large electricity users, with nearly half of U.S. data centre capacity concentrated in five regional clusters. It also found that 50 per cent of U.S. data centres under development are being built in pre-existing large clusters, increasing the risk of local bottlenecks.

That's a very different reality from the older story where scale felt mostly like a software problem.

The Cost Of Compute Was Always There. We Just Didn’t See It

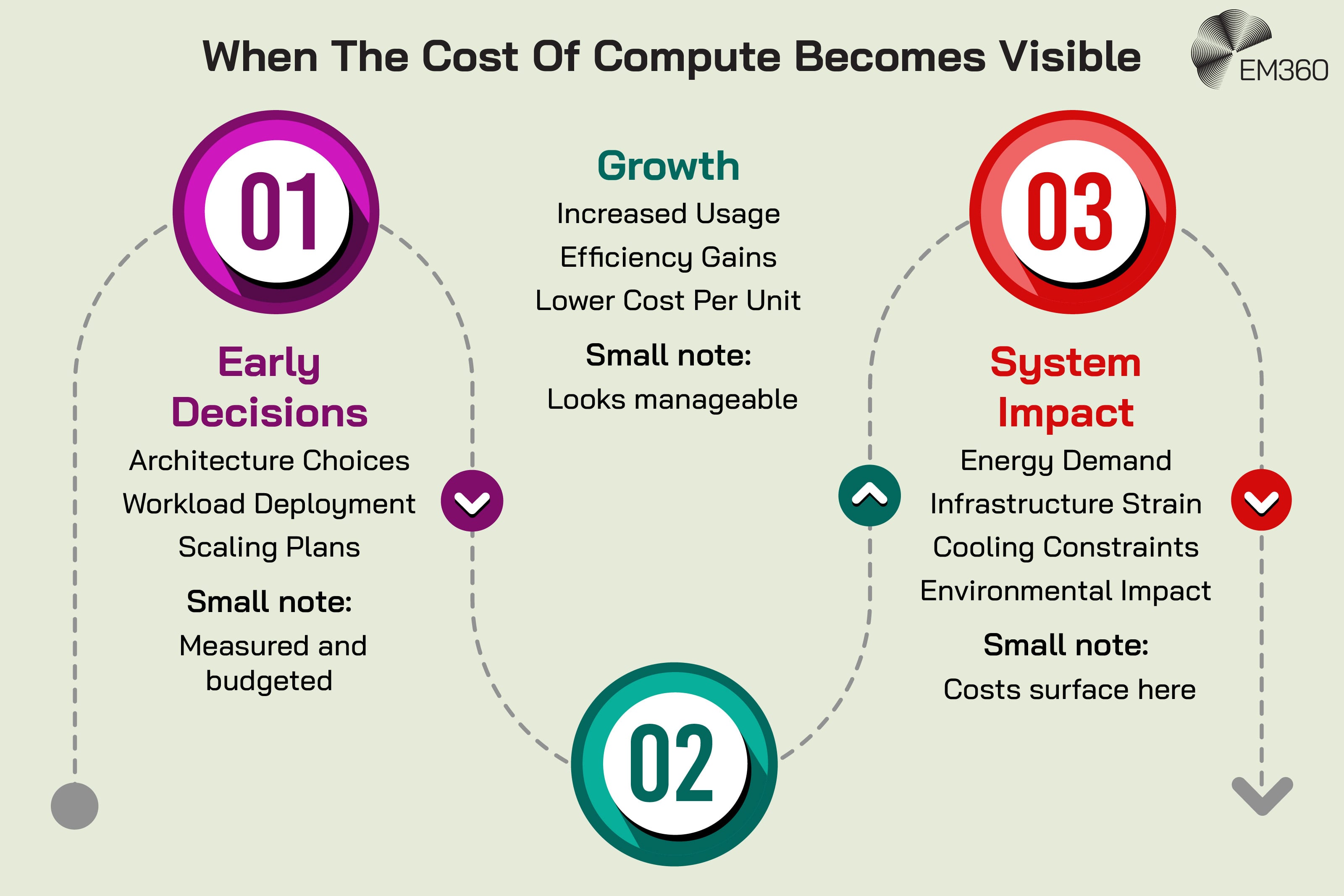

When people talk about the cost of compute, they usually mean spending. That's the easiest version to measure and the easiest to budget for. It's also the least complete.

The real cost is broader. It includes the energy system that has to support the workload, the infrastructure that has to be built around it, the environmental consequences that follow, and the communities that end up carrying more of the burden than the dashboard ever shows.

Financial cost hid environmental and system cost

Pricing models for compute are designed to express usage. They're not designed to express impact. That creates a familiar kind of distortion. If a service becomes easier to buy and easier to scale, it feels manageable even when the supporting system is becoming more fragile.

The IEA’s numbers make that distortion harder to miss. Global investment in data centres has nearly doubled since 2022 and reached half a trillion dollars in 2024.

At the same time, the organisation says local impacts are far more pronounced than the global percentage alone might suggest because data centres are concentrated and can account for substantial shares of electricity consumption in specific markets. That's the crucial difference between affordability and system readiness.

One tells you whether you can buy the compute. The other tells you what the world around that compute has to absorb.

Efficiency gains increased total consumption

This is the part that tends to frustrate people because it sounds counterintuitive until you sit with it for a moment.

Cheaper, faster, more efficient compute should reduce strain. In one narrow sense, it does. But if those gains make compute easier to use at far greater scale, total demand can still rise sharply. The efficiency improvement is real. So is the rebound effect.

The IEA builds this tension directly into its scenarios. Its high-efficiency case still sees very large electricity demand from data centres in 2035, just at a lower level than the base case. In other words, better efficiency matters. It just doesn't cancel out growth on its own.
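The rebound effect is easier to see with numbers. The sketch below is a toy model, not the IEA's methodology: it treats total energy as workload multiplied by energy per unit of work. The 30 per cent efficiency gain and the doubling of workload are hypothetical values chosen purely for illustration, and the function names are made up for this example.

```python
def total_energy(workload_units: float, energy_per_unit: float) -> float:
    """Total energy is the amount of work done times the energy each unit needs."""
    return workload_units * energy_per_unit


def break_even_growth(efficiency_gain: float) -> float:
    """Workload multiple at which an efficiency gain is fully cancelled out.

    efficiency_gain is the fractional cut in energy per unit (0.3 means 30% less).
    """
    return 1 / (1 - efficiency_gain)


# Hypothetical numbers for illustration only; none of these come from the IEA.
baseline = total_energy(workload_units=100, energy_per_unit=1.0)
efficiency_gain = 0.30   # each unit of work needs 30% less energy
workload_multiple = 2.0  # but twice as much work gets done

after = total_energy(100 * workload_multiple, 1.0 * (1 - efficiency_gain))
change = after / baseline - 1

print(f"Total energy change: {change:+.0%}")
print(f"Break-even workload growth: {break_even_growth(efficiency_gain):.2f}x")
```

In this made-up case, a 30 per cent efficiency gain is swamped by a doubling of workload, leaving total energy about 40 per cent higher. Any workload growth beyond roughly 1.43 times the original erases the saving entirely.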

That's one of the reasons the current conversation feels so slippery. The industry isn't wrong to pursue efficiency. It's wrong when it treats efficiency as a complete answer.

Sustainability became a reporting layer instead of a design input

This is where many enterprise organisations quietly get themselves into trouble.

Sustainability in technology is often treated as something to measure after decisions have already been made. The architecture is chosen. The workload is deployed. The scaling path is underway. Then, somewhere later, reporting and ESG teams are asked to account for the consequences. At that point, most of the meaningful design decisions are already behind them.

That sequence made a certain kind of sense when compute was growing but still felt comfortably virtual. It makes much less sense now. Once AI sustainability starts depending on where you build, what you power with, how you cool, and how flexibly you can operate, sustainability stops being a downstream reporting problem. It becomes an architectural one.

This Is No Longer A Sustainability Issue. It’s A Systems Problem

That's the deeper correction taking shape now.

If the conversation stays trapped at the level of emissions reporting, it remains too small. What is emerging isn't simply a greener data centre debate. It's a question about how enterprise systems interact with energy systems, infrastructure systems, policy systems, and governance systems all at once.

Compute is now part of the energy system

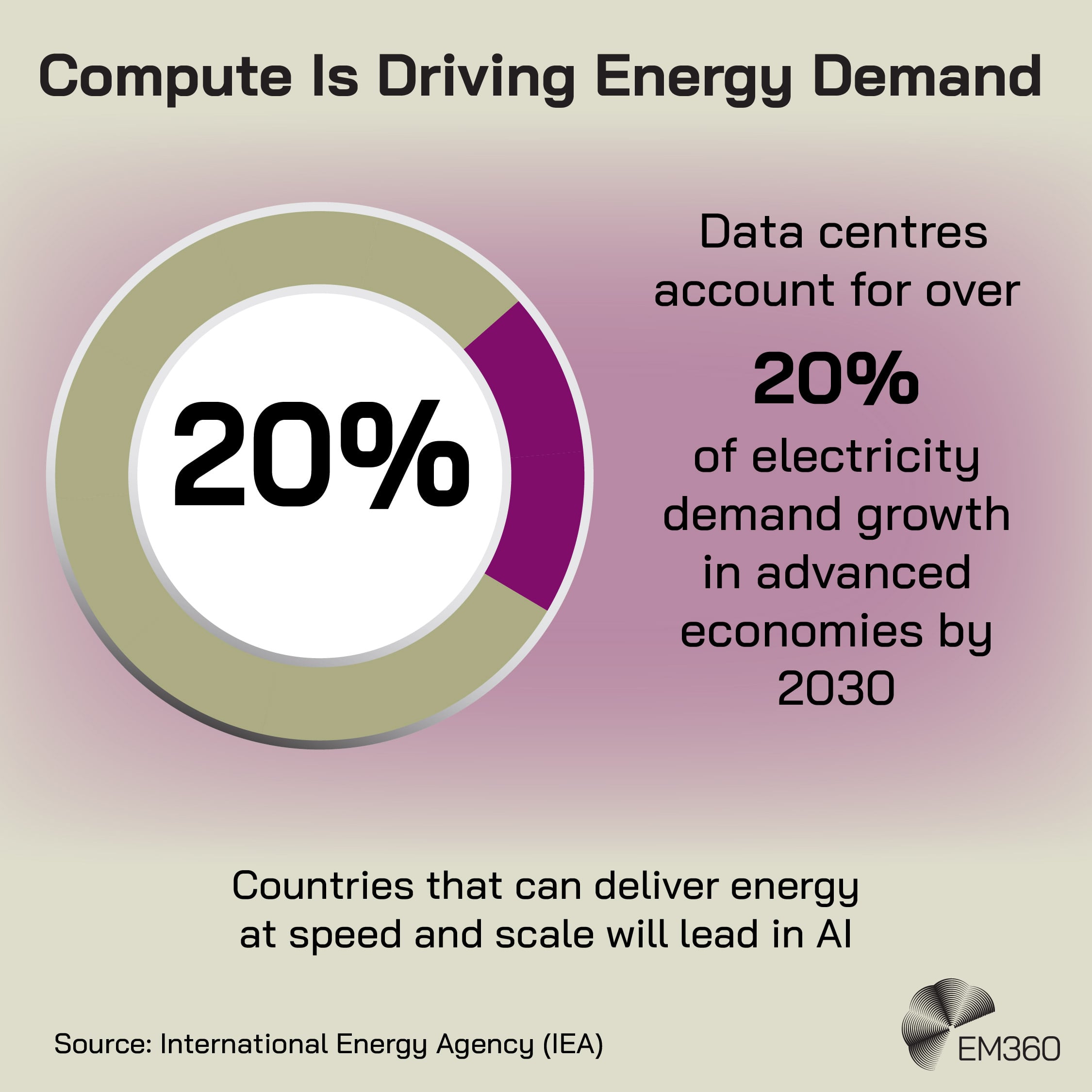

The IEA says this clearly enough that it's worth slowing down over. Data centres are one of several drivers of accelerated electricity demand growth, and in advanced economies they account for more than 20 per cent of demand growth to 2030. It also argues that countries able to deliver energy at speed and scale will be best placed to benefit from AI.

That isn't the language of a background utility. That's the language of system dependency.

Once compute sits this close to the shape of the grid, it starts influencing load balancing, generation choices, backup strategies, transmission needs, and local reliability. It becomes part of the energy conversation whether infrastructure teams are ready for that or not.

Infrastructure decisions now carry environmental consequences

This is where architecture choices stop being neutral.

Workload placement affects where demand concentrates. Cooling choices affect water and power trade-offs. Siting decisions affect which communities absorb noise, land use pressure, emissions, or utility strain.

Reuters reported that rising AI-driven electricity demand in the United States has already become entangled with decisions to keep heavily polluting coal generation online for longer, with activists and health advocates identifying the AI boom as a major emerging threat to air quality.

That's only one example, but a useful one. It shows what happens when the physical consequences of digital growth are treated as somebody else’s problem until they can't be.

Ownership is becoming less clear

Cloud already complicated ownership. AI is making it messier.

A provider may own the facility. A utility may own the supply relationship. A customer may drive the demand signal. A policy maker may shape what is possible through planning and energy regulation. But when the environmental or infrastructure strain becomes visible, responsibility is rarely neat.

This ambiguity matters because it weakens action. If everyone shares responsibility in theory, it becomes very easy for no one to own the trade-offs in practice. That's one reason transparency matters so much.

The Guardian reported that major U.S. tech firms successfully lobbied the European Union to keep many individual data centre environmental metrics confidential, limiting external scrutiny of their local impacts. That may help operators protect commercial information. It doesn't help the wider system understand what it's being asked to carry.

What Mature Systems Do Differently At Scale

The useful question now isn't whether compute has consequences. It clearly does. The useful question is what mature organisations do once that becomes impossible to ignore.

The answer isn't panic, and it isn't performative green language. It's better system design.

They treat compute as a constrained resource

Immature systems behave as if more compute will always be available eventually. Mature ones plan around limits.

That changes how they think about architecture, resilience, and demand. They model trade-offs earlier. They ask which workloads genuinely need the highest-cost infrastructure. They think about flexibility, location, and energy availability before the capacity crisis turns into a delivery crisis.

That's a more serious version of enterprise IT strategy than simply securing more access to GPUs or more cloud budget. It treats compute like something that exists inside a wider physical and economic system, because it does.

They bring sustainability into architecture decisions

Once sustainable infrastructure is understood as a design issue, the timing changes.

Instead of asking how to explain the footprint after the build, mature organisations ask how system design can reduce unnecessary demand, avoid the most constrained regions, improve operational flexibility, and make infrastructure choices legible to the people responsible for risk.

They pull sustainability upstream, where it can still shape trade-offs instead of merely describing them. That isn't softer than engineering. It's more honest about what engineering is now required to handle.

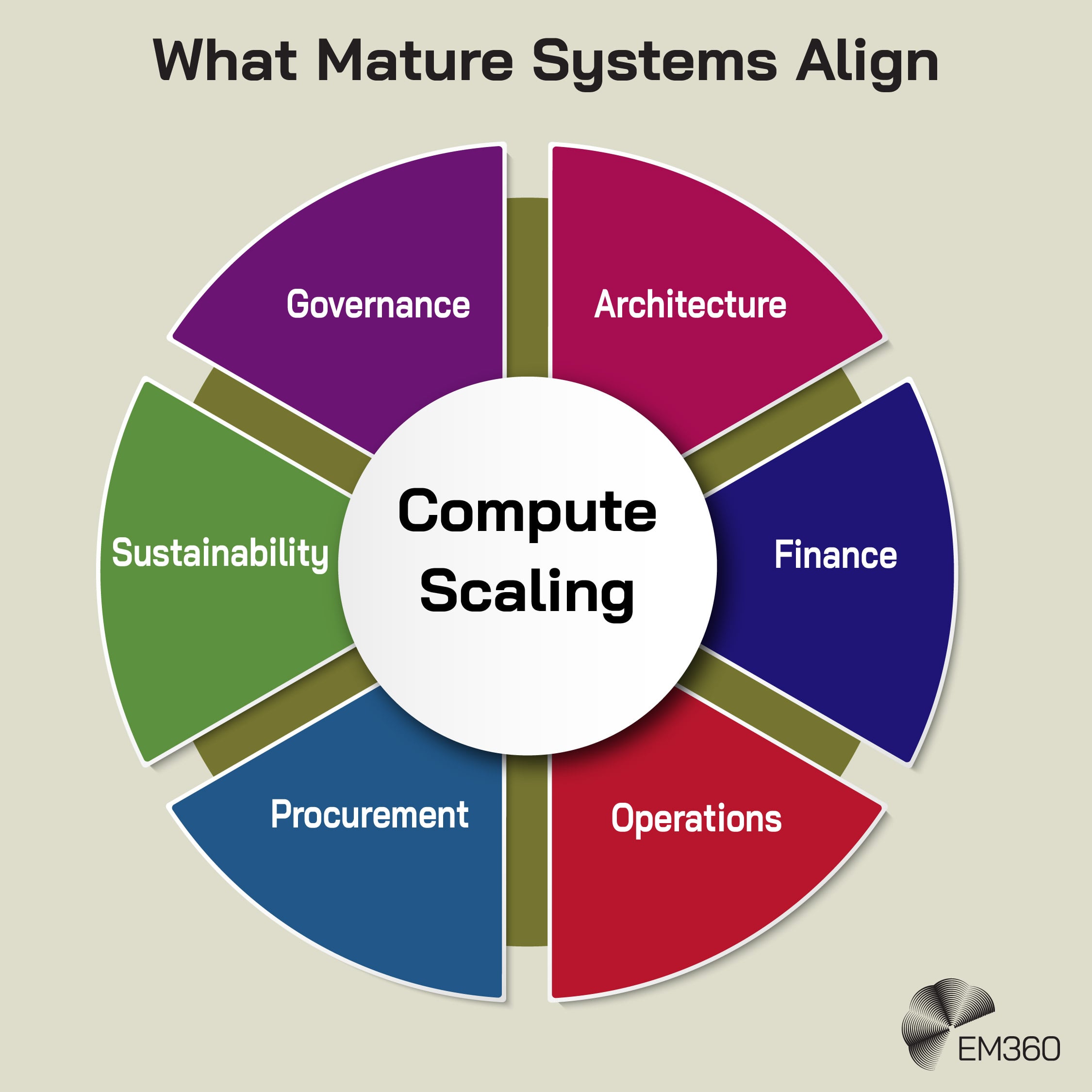

They align infrastructure, cost, and accountability

This is where real maturity usually shows up. Not in slogans. In coordination.

Teams handling architecture, finance, operations, procurement, sustainability, and governance need a shared view of what scaling compute actually means. Not just in spend, but in dependence, timing, and consequence. The organisations that handle this best aren't necessarily the ones with the flashiest AI strategy.

They're usually the ones that can still see the whole system while other people are arguing over one layer of it.

That alignment is becoming more important as power relationships tighten. Reuters reported that utilities are signing increasingly direct, long-term arrangements with large technology companies to support new data centre capacity and speed power delivery. That alone tells you something.

Compute growth is now close enough to energy infrastructure that the relationship can no longer stay vague.

Why This Matters Now

Timing is doing a lot of work here.

AI adoption is still accelerating. Infrastructure investment is still surging. At the same time, energy systems are under pressure, regulatory scrutiny is rising, and communities are pushing back more openly against the local consequences of data centre growth.

That combination matters because it shifts enterprise risk from the purely technical into the systemic.

The IEA says data centres could account for nearly half of U.S. electricity demand growth between now and 2030. The DOE, meanwhile, says data centres consumed about 4.4 per cent of U.S. electricity in 2023 and could reach roughly 6.7 to 12 per cent by 2028.
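As a back-of-envelope consistency check, the DOE shares above can be combined with the DOE terawatt-hour figures quoted earlier in this article (176 TWh in 2023, and 325 to 580 TWh by 2028) to see what total U.S. electricity use they imply. This is a rough sketch, not a DOE calculation. Pairing the low estimate with the low share and the high estimate with the high share is an assumption, and `implied_total_twh` is an illustrative name.

```python
def implied_total_twh(data_centre_twh: float, share_of_total: float) -> float:
    """Total electricity use implied by a data centre figure and its share of the total."""
    return data_centre_twh / share_of_total


# DOE figures quoted in this article: TWh of data centre use and share of U.S. electricity.
# Assumption: low TWh pairs with the low share, high TWh with the high share.
total_2023 = implied_total_twh(176, 0.044)
total_2028_low = implied_total_twh(325, 0.067)
total_2028_high = implied_total_twh(580, 0.12)

for label, value in [
    ("2023", total_2023),
    ("2028, low case", total_2028_low),
    ("2028, high case", total_2028_high),
]:
    print(f"Implied total U.S. electricity use ({label}): about {value:,.0f} TWh")
```

Under that pairing, the implied national total is roughly 4,000 TWh for 2023 and somewhere between 4,800 and 4,900 TWh for 2028. If that reading is right, the projections describe data centres taking a larger slice of a pie that is itself growing.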

Those aren't abstract projections tucked away in a niche whitepaper. They're warning lights attached to mainstream digital infrastructure.

That doesn't mean every enterprise suddenly needs to become an energy company. It does mean technology leaders can no longer pretend that digital transformation risks end at software architecture, security posture, or cloud cost management. The system underneath the system has entered the room, and it's louder than it used to be.

Final Thoughts: Compute Forces Systems To Grow Up

For years, compute was abstracted so successfully that many organisations stopped thinking about the physical world required to support it. Cloud made that feel normal. AI is making it untenable.

That's the real lesson here. The cost of compute was never just financial. It always included energy, infrastructure, environmental burden, and the limits of the systems around it. We were simply allowed to look away for a while. Now we aren't.

The organisations that adapt will not just optimise compute. They will redesign how it fits into energy, infrastructure, and decision-making systems. The ones that do not will keep scaling into constraints they never truly accounted for.

EM360Tech will keep following how AI, infrastructure, and system design are changing under real-world pressure, so enterprise leaders can make decisions that still make sense once the architecture diagram meets the grid.
