For a long time, enterprise infrastructure was defined by friction.

You waited for hardware. You fought for budget. You sized for peak demand and hoped you guessed correctly. If a team needed more capacity, a new environment, or room to test something ambitious, the answer often depended on procurement cycles, rack space, and how patient everyone was willing to be.

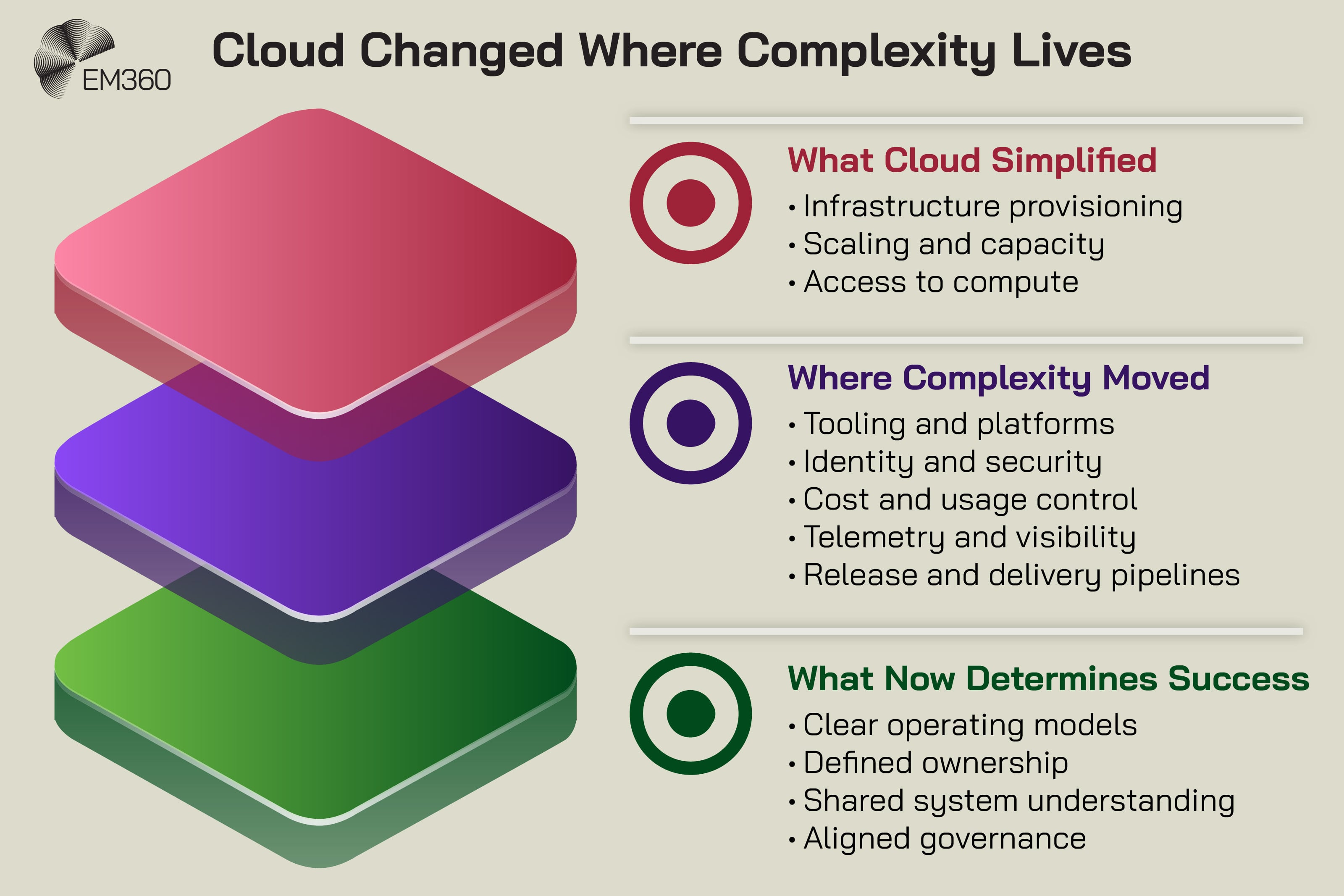

Cloud computing changed that. It made infrastructure easier to access, easier to scale, and far less dependent on physical constraints. That shift was real, and it mattered. It’s a big part of why cloud became the default foundation for modern digital delivery.

But something else happened along the way.

The harder infrastructure was to provision, the more obviously it was the problem. Once cloud removed that bottleneck, other problems had room to show themselves properly. Coordination got messier. Visibility got patchier. Ownership got blurrier.

And as cloud-native architectures, containers, Kubernetes, hybrid environments, and AI workloads piled on top of each other, the operational burden didn’t disappear. It moved. That’s where many enterprises are now.

Not stuck because cloud infrastructure failed, but slowed down because the layers above it became harder to understand and govern than the infrastructure ever was.

Cloud Solved The Problem It Was Designed To Solve

Cloud deserves credit for the thing it actually fixed.

It removed a huge amount of infrastructure friction at the base layer. Teams no longer had to treat compute, storage, and networking like scarce physical assets that needed weeks or months of planning before anything useful could happen.

Infrastructure became something that could be provisioned on demand, scaled quickly, and consumed as a service rather than built from scratch every time. That changed the economics of delivery and the speed of experimentation in ways on-premises environments rarely could.

Infrastructure friction disappeared, but only at the base layer

This matters because it’s easy to overcorrect and tell the story as if cloud simply replaced one set of headaches with another. That’s too neat, and it misses the point.

Cloud did simplify a major part of enterprise IT. It made it easier to launch environments, support growth, absorb spikes in demand, and avoid large upfront capital costs. For organisations under pressure to move faster, that wasn’t a minor gain. It was a structural one.

Even now, the business case for scalable infrastructure remains strong, and access to capacity is no longer the primary blocker it once was.

The stack didn’t get simpler; it got taller

The problem is that simplification at one layer often creates expansion at another.

When infrastructure becomes easier to get, organisations tend to build more. More services. More environments. More integrations. More deployment pipelines. More data flows. More specialised tooling.

What looked like a simpler stack from the outside often becomes a taller one in practice, with more abstraction layers and more dependency chains between teams and systems. HashiCorp’s 2025 Cloud Complexity Report captures that shift well.

It found that today’s infrastructure spans multiple tools, providers, and environments, with organisations using more than five tools and services on average to manage cloud environments.

That’s the trade-off cloud introduced. It made access to infrastructure easier, but it also made it much easier to create distributed systems that are harder to coordinate once they’re in production.

The New Shape Of Cloud Complexity

This is the part many enterprise teams now feel every day.

The complexity that cloud removed was mostly physical and provisioning-related. The complexity it exposed sits higher up the stack, where architecture, operations, governance, and human coordination all intersect. That’s why so many organisations can truthfully say their cloud environments are more flexible than ever while also admitting they’re harder to run.

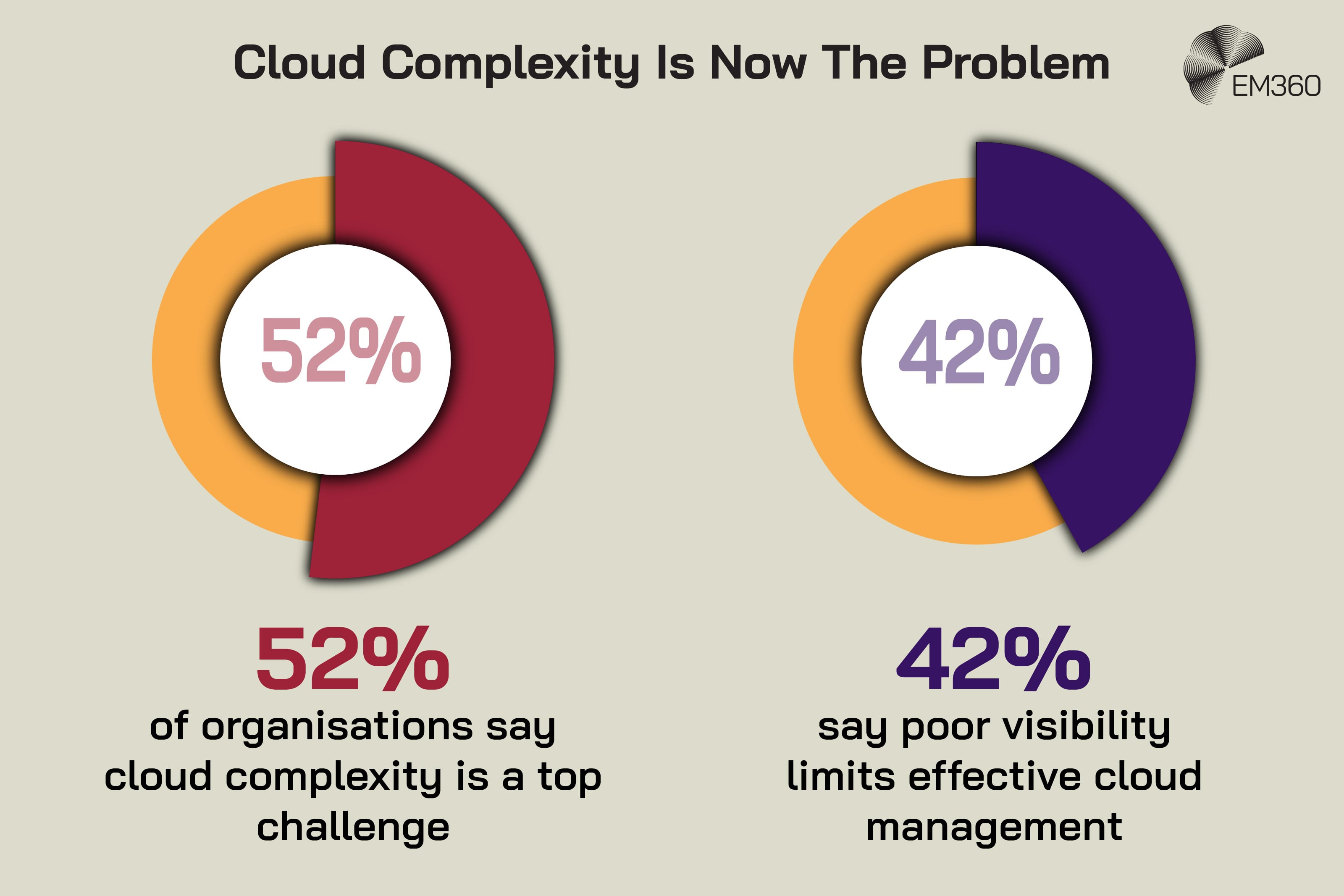

HashiCorp found that 52% of organisations now say cloud complexity is a top challenge, and 42% cite poor visibility as a major barrier to managing cloud infrastructure effectively.

From hardware constraints to coordination overhead

The old problem was getting infrastructure.

The newer problem is getting people, platforms, policies, and services to behave coherently once the infrastructure is already there. That sounds less dramatic than waiting six weeks for hardware, but it’s often more expensive. Coordination overhead slows delivery in quieter ways.

It shows up in handoffs between teams, duplicated tooling decisions, unclear dependencies, inconsistent controls, and long incident investigations where everyone can see part of the issue but nobody can see the whole thing. The infrastructure is available. The challenge is operating it without creating operational drag everywhere else.

Tooling sprawl became the new bottleneck

This is where the cloud story gets more uncomfortable.

As estates grow, organisations rarely manage them through one clean, standardised control plane. They accumulate cloud tools over time, often for sensible reasons in isolation. One for provisioning. Another for monitoring. Another for security. Another for cost control. Another for CI/CD. Another for policy.

Another because a specific team needed something quickly and got it approved before the wider operating model caught up.

That fragmentation is now a serious constraint. Flexera’s 2025 State of the Cloud Report found that 84% of organisations see managing cloud spend as their top cloud challenge, while HashiCorp’s research points to multi-tool complexity as a direct drag on visibility and agility.

At that point, infrastructure management stops being about infrastructure alone. It becomes a problem of platform sprawl, process inconsistency, and organisational design.

Coordination Complexity Is Now The Real Constraint

This is where cloud stops feeling like a technical story and starts feeling like an enterprise operating model story.

The modern delivery chain is tightly coupled, even when teams would prefer to think of it as modular. Application decisions affect platform decisions. Platform decisions affect security decisions. Security decisions affect release velocity. Release velocity affects user experience and product delivery.

What looks like a local technical choice often has consequences much further downstream.

Backend, frontend, and infrastructure are now tightly coupled

That coupling is easy to miss if each team is only looking at its own part of the stack.

Backend development isn’t separate from infrastructure concerns because services depend on deployment patterns, networking, secrets management, runtime behaviour, and service-to-service communication.

Frontend frameworks aren’t exempt either, because modern user experiences often rely on APIs, edge services, observability layers, and release pipelines that sit deep inside cloud-native environments. The boundary between application logic and operational logic is much thinner than it used to be.

Containers and orchestration increased interdependence

Docker containerization helped standardise packaging and deployment. Kubernetes helped orchestrate workloads at scale. Both were valuable steps forward. Neither made systems inherently easier to understand.

CNCF’s latest annual survey shows just how central this model has become. It reports that 82% of container users now run Kubernetes in production, with Kubernetes increasingly positioned as the foundation for both cloud-native and AI workloads.

That level of adoption makes sense, but it also means enterprises are now managing more layers of orchestration, more service interactions, and more operational states across environments that are already difficult to keep consistent.

Containers solved one problem very well. They made workloads more portable and consistent. What they didn’t solve was the coordination burden that appears once hundreds of services, pipelines, policies, and teams all depend on that consistency at once.

Visibility Gaps Make Decision Making Harder, Not Easier

One of cloud’s promises was better insight.

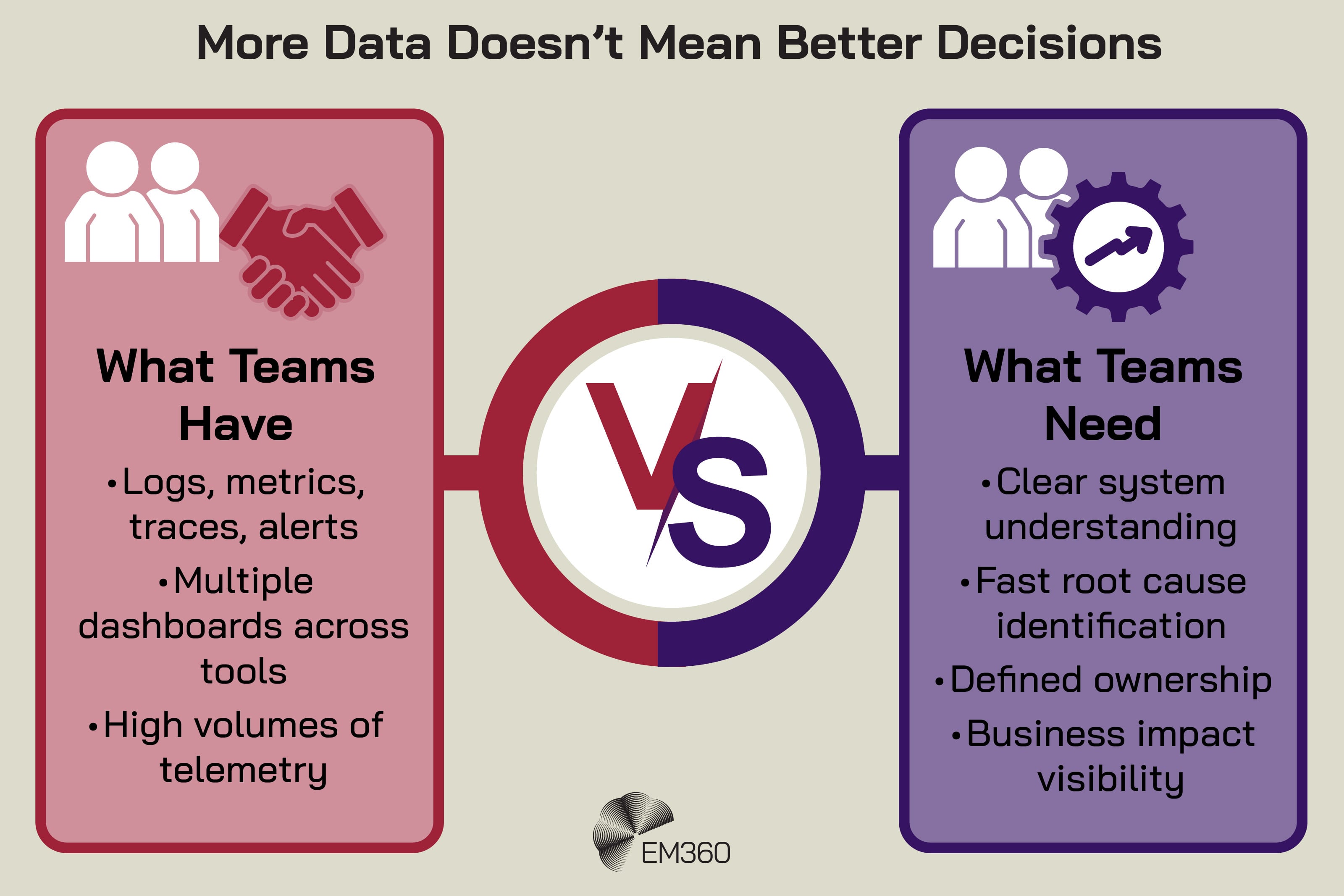

In theory, digital infrastructure should be easier to observe than physical infrastructure because everything produces telemetry. Logs, traces, metrics, events, alerts, dashboards. The problem is that more data doesn’t automatically create more clarity. Sometimes it just creates more noise with a better user interface.

Observability improved, but clarity didn’t always follow

This is the difference between observability and understanding.

A team can have extensive monitoring and still struggle to answer simple operational questions quickly.

- What changed?
- Where is the real bottleneck?
- Which dependency is failing first?
- Who owns the affected service?
- What is the business impact if this continues for another hour?

Splunk’s State of Observability 2025 makes the business stakes clear. It found that leading observability practices are linked to higher productivity, better revenue outcomes, and stronger incident response planning. That’s useful because it moves the discussion away from tooling for tooling’s sake and back to decision quality.

Fragmented telemetry creates blind spots across teams

The real issue is fragmentation.

Different teams often see the same system through different tools, different thresholds, and different levels of context. Engineering sees latency. Security sees identity activity. Finance sees spend. Product sees customer impact. Leadership sees service risk. None of those views is wrong. They’re just incomplete on their own.

That matters because cloud visibility isn’t simply about gathering more telemetry. It’s about creating enough shared understanding that incidents, risks, and trade-offs can be interpreted consistently across functions.

When that shared picture is missing, decisions slow down, accountability gets fuzzy, and operational confidence drops even when the data itself is technically available.
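One way to picture that shared view is a small sketch that merges each function’s slice of telemetry into a single record per service and makes the gaps explicit. Everything here is an illustrative assumption: the team names, the service names, the field names, and the `shared_picture` helper are hypothetical and not tied to any particular observability product.

```python
from collections import defaultdict

# Hypothetical per-team views of the same system, keyed by service name.
# All services, fields and values are illustrative assumptions.
engineering = {"checkout": {"p95_latency_ms": 840}}
security = {"checkout": {"failed_logins_1h": 12}, "search": {"failed_logins_1h": 0}}
finance = {"checkout": {"spend_today_usd": 310.0}, "search": {"spend_today_usd": 95.5}}


def shared_picture(**views):
    """Merge per-team views into one record per service and flag missing views."""
    merged = defaultdict(dict)
    for team, view in views.items():
        for service, fields in view.items():
            merged[service][team] = fields
    # Record which teams have no data for a service, so blind spots are explicit.
    for record in merged.values():
        record["missing_views"] = sorted(set(views) - set(record))
    return dict(merged)


picture = shared_picture(engineering=engineering, security=security, finance=finance)
for service, record in picture.items():
    print(service, "missing:", record["missing_views"])
```

In this toy data, `search` has security and finance context but no engineering view, which is the kind of blind spot that tends to surface only mid-incident.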

Ownership Is Now Distributed, And Often Undefined

This is probably the most frustrating part of modern cloud operations, because it’s rarely obvious until something goes wrong.

Cloud environments are full of shared surfaces. A service may be written by one team, deployed by another, governed by a third, and dependent on external platforms none of them fully control. Vendors publish shared responsibility models, but real enterprise delivery doesn’t map neatly onto those diagrams.

Shared responsibility models don’t map cleanly to real teams

On paper, the split is straightforward.

The provider owns some layers. The customer owns others. But that model is too abstract to resolve most day-to-day operational ambiguity. It doesn’t tell you who owns identity hygiene across connected services, who is responsible for telemetry quality, who resolves policy drift between teams, or who gets the final say when speed and control collide.

That’s why cloud governance often feels weaker in practice than it does in architecture diagrams. Not because responsibility doesn’t exist, but because it’s distributed across teams that don’t always share the same incentives, language, or visibility.

AI and automation are accelerating ownership ambiguity

AI is now making that problem harder.

Gartner says 75% of enterprise software engineers will use AI code assistants by 2028, up from less than 10% in early 2023. It also predicts that up to 40% of enterprise applications will include integrated task-specific AI agents by 2026.

That means more generated code, more automated actions, more agent-driven workflows, and more operational logic moving through systems at machine speed.

At the same time, the identity surface is expanding sharply. Sysdig’s 2025 research found that machine identities outnumber human ones 40,000 to one, creating a major management and security challenge as cloud footprints and AI adoption grow. That’s not just a security stat. It’s an ownership stat.

Every new non-human identity increases the number of things that must be governed, monitored, and understood even when no single team feels like it fully owns the problem.
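As a rough illustration of what treating identity as an ownership problem could look like, the sketch below scans an inventory for machine identities that no team has claimed. The `Identity` record, its `kind` and `owner_team` fields, and the sample entries are all hypothetical; a real inventory would come from an identity provider or cloud IAM export.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Identity:
    name: str
    kind: str  # assumed categories: "human" or "machine"
    owner_team: Optional[str] = None


def unowned_machine_identities(identities: List[Identity]) -> List[Identity]:
    """Return machine identities with no owning team (None or empty string)."""
    return [i for i in identities if i.kind == "machine" and not i.owner_team]


# Illustrative inventory; names and teams are made up for the example.
inventory = [
    Identity("alice", "human", "payments"),
    Identity("ci-deployer", "machine", "platform"),
    Identity("report-agent", "machine"),
    Identity("legacy-sync-key", "machine", ""),
]

orphans = unowned_machine_identities(inventory)
print([i.name for i in orphans])  # ['report-agent', 'legacy-sync-key']
```

The check itself is trivial. The hard part, as the section argues, is agreeing who is accountable for the identities it surfaces.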

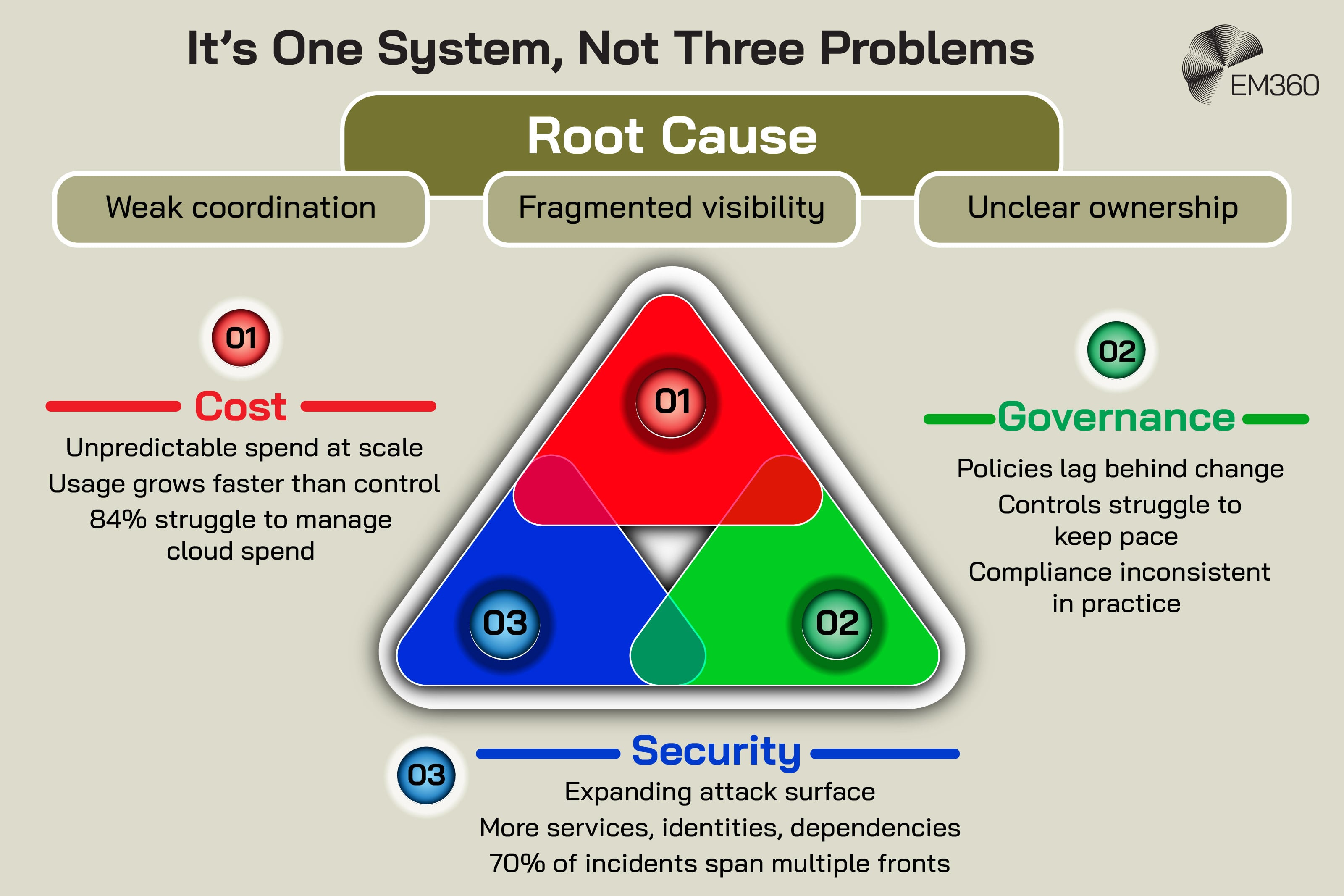

Cost, Security, And Governance Became System Problems

This is where the consequences stop being operationally annoying and start becoming strategically significant.

When coordination is weak, visibility is fragmented, and ownership is unclear, cost control, security posture, and governance maturity all suffer together. They aren’t separate issues anymore. They’re outputs of the same system.

Cost is no longer predictable at scale

Cloud spend becomes difficult to manage when usage grows faster than shared control.

Flexera found that cloud spend is expected to increase by 28% in the coming year, while 84% of respondents still rank spend management as their top challenge. That points to a simple truth. Cost isn’t just about how much infrastructure is being used.

It’s about whether the organisation has enough operational discipline to understand why it’s being used, by whom, under which incentives, and with what business value attached.
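A minimal sketch of that idea, assuming billing line items carry an `owner` tag: group spend by owner and measure how much cannot be attributed to anyone. The line items, tag name, and amounts below are invented for illustration and do not reflect any provider’s billing export format.

```python
from collections import defaultdict

# Hypothetical billing line items; resources, tags and amounts are assumptions.
line_items = [
    {"resource": "vm-1", "cost_usd": 120.0, "tags": {"owner": "payments"}},
    {"resource": "bucket-7", "cost_usd": 30.0, "tags": {}},
    {"resource": "cluster-a", "cost_usd": 250.0, "tags": {"owner": "platform"}},
    {"resource": "vm-9", "cost_usd": 100.0, "tags": {}},
]


def spend_by_owner(items):
    """Sum cost per owner tag, bucketing untagged resources as 'unattributed'."""
    totals = defaultdict(float)
    for item in items:
        owner = item["tags"].get("owner", "unattributed")
        totals[owner] += item["cost_usd"]
    return dict(totals)


totals = spend_by_owner(line_items)
unattributed_share = totals.get("unattributed", 0.0) / sum(totals.values())
print(totals)
print(f"unattributed share of spend: {unattributed_share:.0%}")
```

In this toy data, roughly a quarter of spend has no owner attached, and that share says more about operational discipline than the headline total does.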

Security expands with every new dependency

Security gets pulled into the same pattern.

Each new service, integration, identity, automation layer, and workload increases the attack surface. Palo Alto Networks’ 2025 Unit 42 incident response research found that 70% of the incidents the team responded to happened across three or more fronts, underlining how interconnected modern attack surfaces have become.

In cloud-heavy environments, that means security can’t be treated as a control layer bolted onto the edge of the system. It has to move with the system itself.

Governance struggles to keep pace with speed

Governance is where all of this becomes visible at once.

Policies are often written more slowly than environments change. Standards are agreed more slowly than teams ship. Controls are reviewed more slowly than automation introduces new dependencies. None of that means governance is obsolete. It means static governance models are poorly suited to enterprise cloud environments that evolve continuously.

That’s why many organisations feel compliant in principle but inconsistent in practice. Their systems move too quickly for control models designed around slower, simpler infrastructure estates.
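One commonly discussed alternative to static governance is expressing policies as small executable checks that run whenever the environment changes. The sketch below is a simplified illustration of that idea, not a description of any specific policy engine; the resource records, field names, and rule names are assumptions.

```python
# Hypothetical resource records; fields and rule names are illustrative
# assumptions, not any cloud provider's schema.
resources = [
    {"id": "db-orders", "owner": "payments", "encrypted": True},
    {"id": "bucket-tmp", "owner": "", "encrypted": False},
    {"id": "queue-events", "owner": "platform", "encrypted": False},
]


def has_owner(resource):
    """A resource passes if it names a non-empty owner."""
    return bool(resource.get("owner"))


def is_encrypted(resource):
    """A resource passes only if encryption is explicitly enabled."""
    return resource.get("encrypted") is True


POLICIES = {"has-owner": has_owner, "encrypted-at-rest": is_encrypted}


def evaluate(resources, policies=POLICIES):
    """Return (resource id, policy name) for every failed check."""
    return [
        (resource["id"], name)
        for resource in resources
        for name, check in policies.items()
        if not check(resource)
    ]


violations = evaluate(resources)
for resource_id, policy in violations:
    print(f"{resource_id}: violates {policy}")
```

Because the rules are code, they can be re-evaluated as often as the estate changes instead of waiting for a periodic review.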

Platform Engineering Is The Enterprise Response To This Shift

This is why platform engineering has become such a serious conversation.

Not because it removes complexity, but because it gives enterprises a better chance of managing it deliberately.

Internal platforms reduce cognitive load and standardise workflows

The strongest case for platform engineering isn’t technical fashion. It’s operational relief.

A well-designed internal platform reduces the number of decisions every team has to make from scratch. It creates standard paths for deployment, security, observability, and service management, while still allowing for flexibility where it actually matters.

Gartner predicts that by 2026, 80% of large software engineering organisations will establish platform engineering teams to provide reusable services, components, and tools for application delivery. That forecast makes sense because standardisation is becoming one of the few realistic ways to reduce cognitive overload in cloud-native delivery.
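To make the idea of a standard path concrete, here is a small sketch of a platform that supplies defaults for every service and lets teams override only the settings where flexibility matters. The default values, the set of overridable keys, and the `render_service_config` helper are hypothetical and chosen purely for illustration.

```python
# Hypothetical platform defaults; keys and values are illustrative assumptions.
PLATFORM_DEFAULTS = {
    "replicas": 2,
    "log_format": "json",
    "tracing_enabled": True,
    "image_scanning": True,
}

# Settings that teams may change; everything else stays on the standard path.
OVERRIDABLE = {"replicas", "log_format"}


def render_service_config(name, overrides=None):
    """Combine platform defaults with a team's permitted overrides."""
    overrides = overrides or {}
    blocked = sorted(set(overrides) - OVERRIDABLE)
    if blocked:
        raise ValueError(f"not overridable on the standard path: {blocked}")
    return {"name": name, **PLATFORM_DEFAULTS, **overrides}


config = render_service_config("checkout", {"replicas": 4})
print(config)
```

A team gets tracing and image scanning without having to decide on them, and can still scale its own service. Attempting to switch off `image_scanning` raises an error rather than silently diverging from the platform’s controls.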

The goal is not less complexity, but managed complexity

That distinction matters.

There’s no credible path back to a genuinely simple enterprise stack. Not if organisations want the speed, scale, resilience, and AI-readiness they now expect from modern environments. The smarter goal is to create operating models that absorb complexity without letting it fragment the whole organisation.

Docker’s 2025 State of Application Development reflects that shift well. Based on responses from more than 4,500 developers, engineers, and technology leaders, it found that security is now a shared responsibility across teams and that friction still exists in day-to-day workflows despite better tools. That’s exactly the kind of problem internal platforms are meant to address.

Not by pretending the system is simple, but by giving people a more coherent way to work inside its complexity.

Where Cloud Expectations Went Wrong

It’s worth being precise here.

Cloud didn’t promise to eliminate complexity forever. It promised to remove a specific kind of infrastructure friction, and it did. The confusion came later, when many organisations treated that simplification as if it would naturally flow upward into architecture, operations, governance, and ownership as well.

It didn’t.

Simplicity at one layer created complexity at another

That’s not failure. It’s redistribution.

The more abstract the infrastructure layer became, the more visible the surrounding system became. That surrounding system includes tooling, identity, cost, telemetry, release management, platform standards, developer experience, and organisational coordination.

Those things were always there in some form. Cloud simply made them impossible to ignore at enterprise scale.

Enterprise success now depends on operating model maturity

This is the real dividing line now.

The organisations that handle cloud complexity best aren’t necessarily the ones with the most advanced architectures. They’re usually the ones with the clearest operating models. They know where standards live, how teams interact, which platforms are strategic, which telemetry matters, and how ownership works when systems cut across functions.

That kind of maturity isn’t glamorous, but it’s becoming a real competitive advantage, especially as AI pushes more logic, more automation, and more operational weight into environments that are already difficult to govern cleanly.

Final Thoughts: Cloud Simplified Infrastructure, But Demands Operational Discipline

Cloud computing did exactly what it promised. It made infrastructure easier to access, easier to scale, and easier to treat as a service rather than a physical limitation.

What it didn’t do was make the rest of the system simple.

Instead, it shifted the burden upward into coordination, visibility, ownership, governance, and platform design. That’s why the most important cloud conversation now isn’t about whether to adopt it. That argument was settled years ago. The harder question is whether the organisation has the operational discipline to run what cloud made possible.

That question is only getting sharper. AI will add more services, more agents, more identities, and more automation into environments that are already dense with dependencies. The enterprises that cope best won’t be the ones that chase complexity most aggressively. They’ll be the ones that can shape it, govern it, and keep it legible as it grows.

That’s where the future of cloud operations is really being decided. And it’s exactly the kind of shift EM360Tech will keep unpacking through the people, patterns, and operational realities shaping enterprise technology now.
