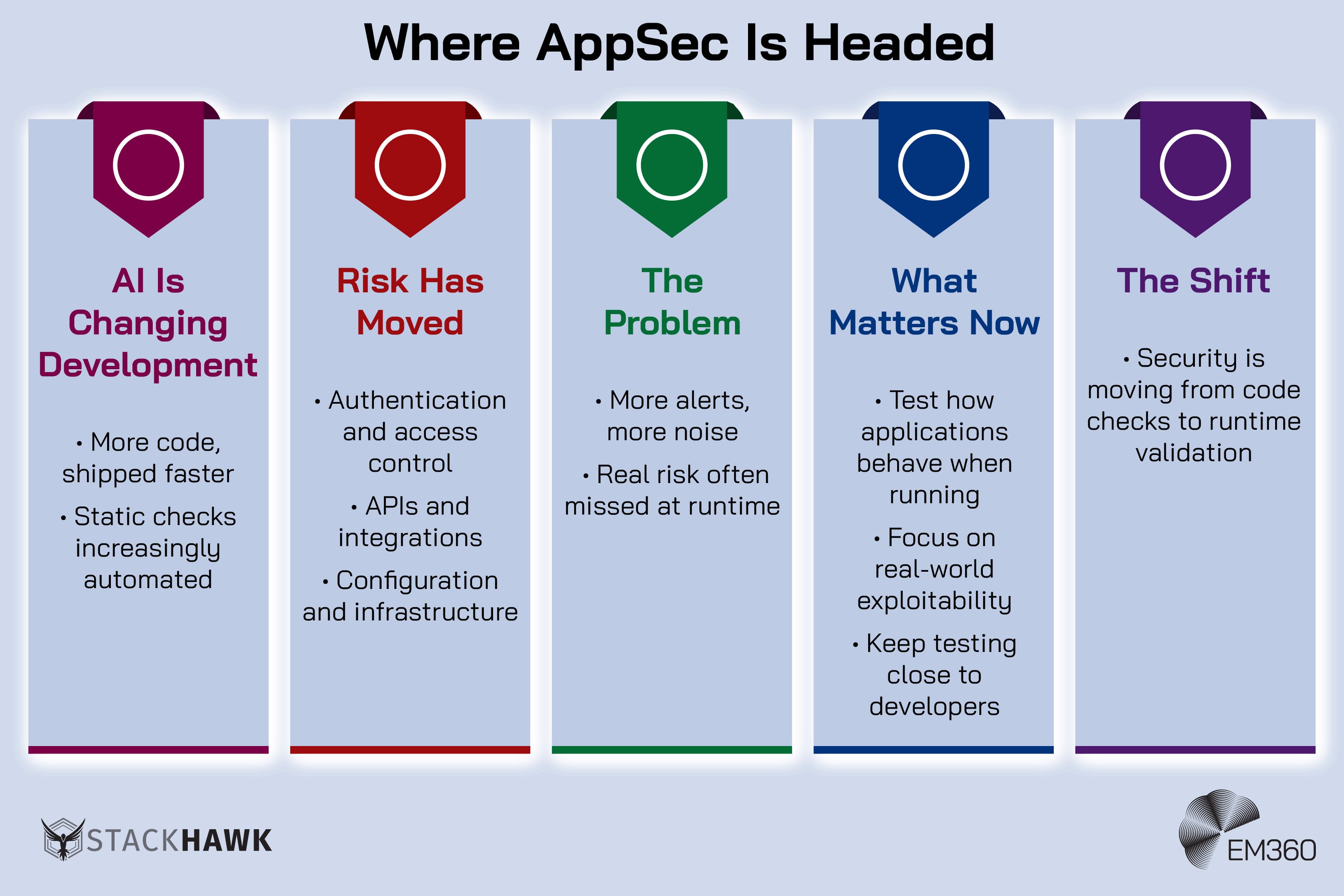

AI is making software development faster. That part is obvious. What’s less obvious is that many of the most damaging security failures still don’t start in the code itself.

They show up when applications run.

Authentication flows break in ways that looked fine in development. APIs expose more than they should. Permissions behave differently under real conditions. The risk isn’t disappearing. It’s moving. And application security is changing with it.

AI-generated code is increasing both speed and complexity. Static analysis is scaling with it, which means more alerts and more noise for teams to sift through. So validation can’t stop at code analysis anymore. Now it also has to look at real behaviour, where runtime vulnerabilities actually appear.

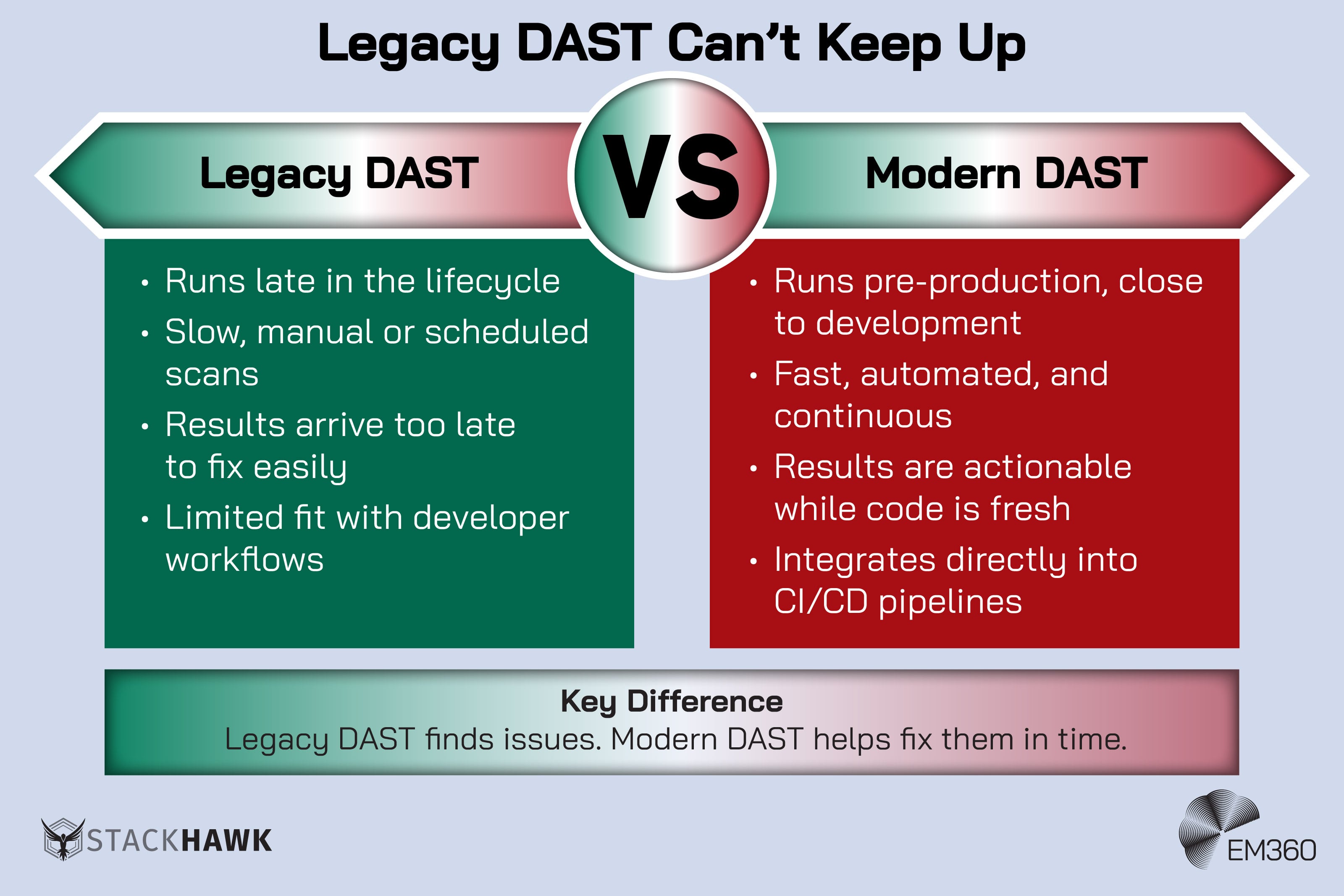

That’s also why dynamic application security testing (DAST) is becoming more important. Companies like StackHawk, which focus on fast, pre-production testing that fits into developer workflows, make it possible to keep up with AI-driven development in a way legacy DAST and pentesting haven’t.

Because as development accelerates, the question isn’t just whether the code is secure. It’s whether the application holds up once it’s live.

AI Coding Is Changing The Application Security Landscape

AI-assisted development is already reshaping the software lifecycle. Not in a vague futurist way. In a very operational, very real one.

StackHawk’s 2026 AppSec Leader’s Guide found that 87 per cent of respondents are using AI coding assistants in development, and according to Stack Overflow, 51 per cent of professional developers say they use them daily.

Teams are writing more code, changing it more often, and getting to release faster.

The uncomfortable part is that AI adoption and AI trust are moving in different directions. Developers are using these tools heavily, but they’re not exactly relaxing into blind faith. The same Stack Overflow report found that 46 per cent of developers distrust the accuracy of AI tool output, compared with 33 per cent who trust it.

That gap is important because it captures the mood around AI-assisted development better than any keynote ever will. People are using it because it’s productive. They’re checking it because they know better.

That tension lands directly in AppSec. If development speed jumps and release cycles tighten, the number of chances for runtime exposure jumps with it. Even if the code itself improves, the application still has to survive contact with reality.

That’s where things like authentication flows, access rules, environment configuration, and service dependencies start deciding whether “secure” was real or just optimistic.

Where Real Application Risk Lives Today

This is the part that gets flattened too often. The biggest application risks now aren’t just code defects. They’re business logic flaws and runtime failures.

OWASP’s 2025 Top 10 makes that painfully clear. Broken Access Control is still number one, and Security Misconfiguration has climbed to number two. OWASP explicitly links that rise to the fact that more of what your software does depends on settings, permissions, integrations, and environment choices that static review can’t fully settle on its own. The API layer tells the same story.

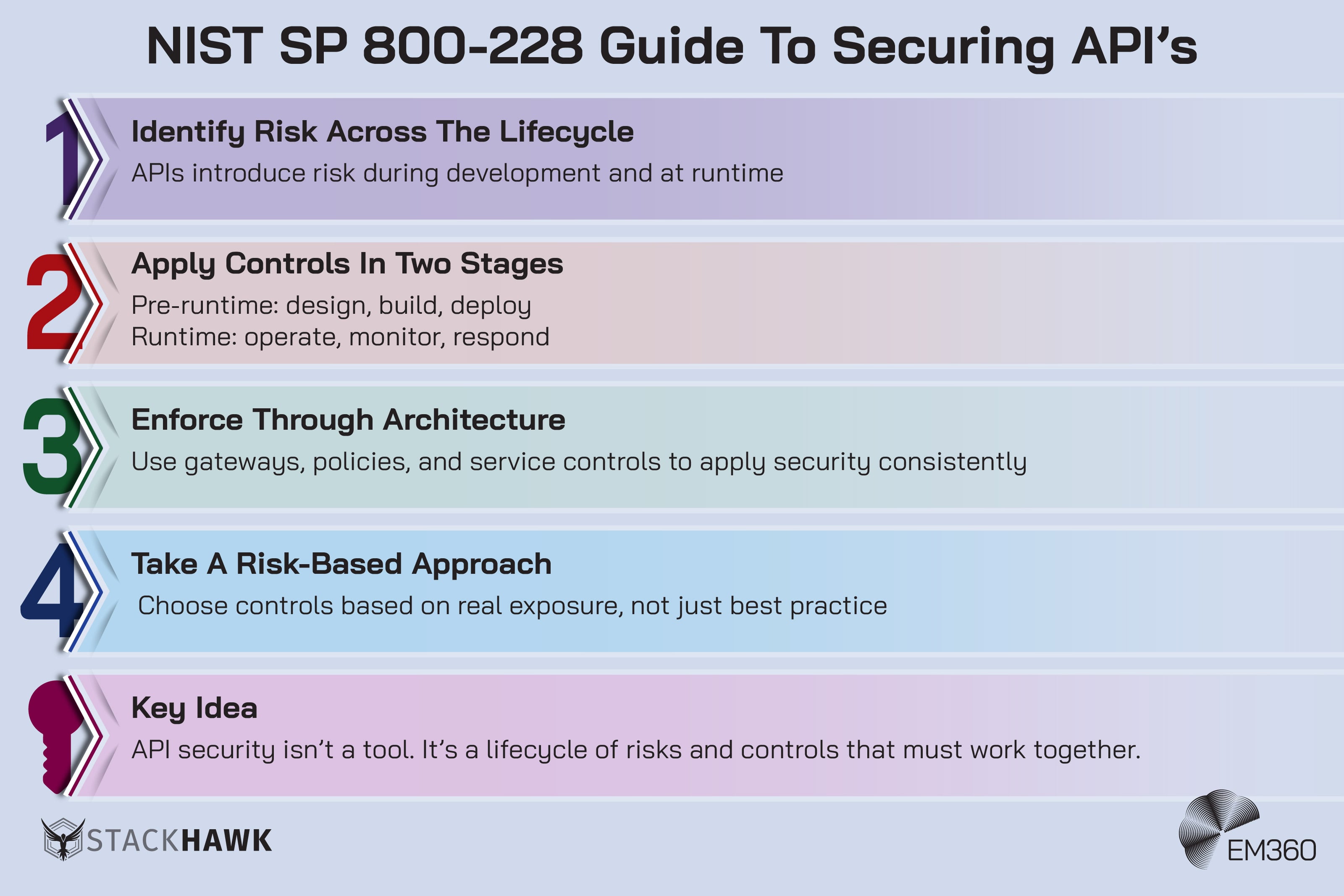

NIST’s SP 800-228 on API protection for cloud-native systems says secure API deployment requires protection measures across both pre-runtime and runtime stages. That distinction matters because APIs now sit at the centre of enterprise application behaviour. They carry business processes, authentication decisions, and system-to-system trust.

If something is going to break in a modern app, there’s a very good chance an API is somewhere nearby looking stressed.

This is runtime reality. That’s the real shift. The risk isn’t mainly in the code itself. It’s in how common attack vectors play out once an application is live.

Why Static Analysis Alone Cannot See These Risks

Static analysis still has value. It can inspect source code before execution, catch known patterns, and help teams clean up weaknesses early. That isn’t nothing.

But it has a hard limit. It can’t fully model runtime behaviour, user interaction, application state, or the chain reactions that happen when APIs, identities, permissions, and cloud services interact in live conditions. NIST’s API guidance is clear on this. Risk is introduced during both development and runtime, which is why runtime controls are part of the security model, not an optional extra.

That’s the complexity problem. Some risks only exist when the application runs.
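A minimal sketch of what that looks like, assuming a hypothetical invoice check and a hypothetical `AUTH_DEBUG_BYPASS` setting (neither comes from any real product). The function is the same in both cases. Only the deployed configuration changes, and that is the part a review of the source alone can’t settle.

```python
# Hypothetical example: identical code, different behaviour depending on
# the configuration the application is actually deployed with.

def debug_bypass_enabled(env: dict) -> bool:
    """Read an assumed feature flag from the deployment environment."""
    return env.get("AUTH_DEBUG_BYPASS", "false").lower() == "true"


def can_view_invoice(user_id: str, owner_id: str, env: dict) -> bool:
    """Ownership check that reads correctly in a code review."""
    if debug_bypass_enabled(env):
        # Intended for local debugging only; exposure depends on the
        # value set in the running environment, not on this source file.
        return True
    return user_id == owner_id


production_like = {"AUTH_DEBUG_BYPASS": "false"}
misconfigured = {"AUTH_DEBUG_BYPASS": "true"}

print(can_view_invoice("bob", "alice", production_like))  # False
print(can_view_invoice("bob", "alice", misconfigured))    # True
```

A reviewer might well flag the bypass branch, but whether it is live in a given environment is something only the running system can answer.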

AI makes that harder to ignore. Not just because systems are more complex, but because they’re moving faster.

StackHawk’s recent argument is blunt, but it holds up. At AI scale, code volume increases. Static findings increase with it. More alerts, more triage, more noise. Teams spend more time sorting through issues that may never become exploitable.

At AI complexity, the gap gets wider. The risks that actually lead to breaches often sit in runtime behaviour, where static tools have little or no visibility.

That creates a double failure. Important risks get missed because they only emerge at runtime, and low-priority findings still consume time because they arrived first and louder. Security teams that were already stretched now have to separate real exposure from background noise while release speed keeps climbing.

That’s why visibility into runtime behaviour matters. Not as a preference. As a filter. Teams need to know which risks survive contact with real conditions, because those are the ones worth stopping everything for.
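One way to picture runtime visibility as a filter is a simple triage pass. The sketch below is illustrative only: the `Finding` type, the rule names, the endpoints, and the severity scale are all made-up assumptions, not output from any real scanner. It moves findings that a runtime test has confirmed to the top of the queue and leaves the rest ordered by reported severity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule: str
    endpoint: str
    severity: int  # assumed scale: 1 (low) to 5 (critical)


def triage(static_findings, runtime_confirmed):
    """Order findings so runtime-confirmed ones come first,
    then by descending severity within each group."""
    return sorted(
        static_findings,
        key=lambda f: ((f.rule, f.endpoint) not in runtime_confirmed, -f.severity),
    )


# Hypothetical static findings, in the order the tool reported them.
static_findings = [
    Finding("sql-injection", "/reports", 5),
    Finding("missing-authz", "/orders/{id}", 3),
    Finding("weak-hash", "/legacy/export", 4),
]

# Hypothetical set of issues a runtime test actually reproduced.
runtime_confirmed = {("missing-authz", "/orders/{id}")}

queue = triage(static_findings, runtime_confirmed)
for finding in queue:
    print(finding.rule, finding.endpoint, finding.severity)
```

In this toy data the medium-severity access control issue jumps ahead of two higher-severity static findings, because it is the only one shown to be reachable in a running application.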

How DAST Aligns With Modern Application Security Needs

This is where dynamic application security testing starts making a lot more sense.

DAST tests a running application, not just the source code behind it. That means it can simulate attacker behaviour, test authentication and authorisation paths, inspect API behaviour, and validate how the application responds under real conditions. It’s built to answer a different question.

Not “does this code pattern look risky?” but “does this application actually behave in a way that can be exploited?”

That distinction matters more in the AI era because AI is already starting to absorb some of the work static analysis was valued for. Pattern matching is exactly the sort of thing these systems are getting better at. Runtime validation is different. It can’t be guessed into existence from a code snippet.

The application has to run. The request has to be made. The response has to be observed. Reality has to be tested as reality. And yes, plenty of teams will say DAST is too slow and too manual to keep up. That’s true of legacy approaches. It’s also exactly where modern DAST is trying to close the gap.

Pre-production runtime testing changes that equation because it moves the signal closer to the people who can still fix the problem without detonating a release plan. StackHawk’s positioning leans hard into that gap, and for once, the gap is real.

Fast runtime testing that fits into developer pipelines is not the same thing as manual pentesting showing up late with a long PDF and a ruined Friday.
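To make the run, request, observe loop concrete, here is a toy sketch using only the Python standard library. It is not how StackHawk or any DAST product works internally. The `ToyApp` server, its tokens, and the `/orders/1001` route are all hypothetical, and the app is deliberately flawed: it checks that a caller is authenticated but never checks that the caller owns the order.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical application data, for illustration only.
ORDERS = {"1001": {"owner": "alice", "total": 42}}
TOKENS = {"token-alice": "alice", "token-bob": "bob"}


class ToyApp(BaseHTTPRequestHandler):
    def do_GET(self):
        user = TOKENS.get(self.headers.get("Authorization", ""))
        order = ORDERS.get(self.path.rsplit("/", 1)[-1])
        if user is None:
            self._send(401, {"error": "unauthenticated"})
        elif order is None:
            self._send(404, {"error": "not found"})
        else:
            # Deliberate flaw: no check that order["owner"] == user.
            self._send(200, order)

    def _send(self, status, body):
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):
        pass  # keep the demo output quiet


def probe(base_url, path, token):
    """Send one request to the running app and return the HTTP status."""
    headers = {"Authorization": token} if token else {}
    request = urllib.request.Request(base_url + path, headers=headers)
    # Empty ProxyHandler so local requests ignore any system proxy settings.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(request, timeout=5) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code


def run_probe():
    """Start the toy app on a free local port, probe it, then shut it down."""
    server = HTTPServer(("127.0.0.1", 0), ToyApp)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base_url = f"http://127.0.0.1:{server.server_port}"
    try:
        return {
            "anonymous": probe(base_url, "/orders/1001", ""),
            "owner": probe(base_url, "/orders/1001", "token-alice"),
            "other_user": probe(base_url, "/orders/1001", "token-bob"),
        }
    finally:
        server.shutdown()
        server.server_close()


results = run_probe()
print(results)
if results["other_user"] == 200:
    print("Finding: another user's order is readable (broken access control)")
```

The anonymous request is rejected and the owner’s request succeeds, which is what a quick glance at the handler would suggest. The third request is the one that matters: a different authenticated user also gets a 200, and that only becomes visible once the request is actually made against a running application.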

The Future Of Application Security In An AI-Driven World

AI isn’t replacing application security testing. It’s changing where it needs to work, and how fast it needs to move. Static checks still help. They just can’t carry the whole argument anymore, and they run the risk of distracting from the exploitable vulnerabilities that turn into breaches.

Development is only going in one direction. Faster cycles. More frequent releases. More AI-assisted output. At the same time, applications are becoming more distributed, more API-driven, and more dependent on cloud services and third-party integrations. That combination is what’s reshaping the future of application security.

Because the more distributed a system becomes, the less useful it is to look at any single piece of it in isolation. Code is only one part of the story. What matters just as much is how that code interacts with identity systems, APIs, infrastructure, and external services once everything is running together.

If AI is increasingly handling the kind of pattern-based checks that static analysis tools were built for, then static analysis becomes part of the baseline. It doesn’t disappear, but it stops being the thing that sets your security posture apart.

What does start to matter more is validation. Not theoretical validation, but real, runtime validation that shows how the application behaves under actual conditions. That’s why the secure software lifecycle is expanding. It’s no longer enough to check security at build time and assume the result holds.

Security has to follow the application into environments that look and behave like production.

This is also where dynamic application security testing fits more clearly into a layered approach. It doesn’t replace other testing methods, and it shouldn’t. Static analysis, dependency scanning, and code review still have a role. But DAST adds something those methods can’t: visibility into how the system actually responds when it’s running, and a way to prioritise the vulnerabilities that are actually exploitable.

That makes it a necessary layer, not an optional one, in modern application security.

There’s a slightly uncomfortable truth sitting underneath all of this. As AI coding assistants take on more of the work traditionally associated with static analysis, static analysis becomes less of a differentiator. Runtime visibility becomes more of one.

The question that matters most isn’t “Is the code safe?” anymore. It’s “What happens when the application runs?”

Final Thoughts: Runtime Reality Is Reshaping Application Security

AI may improve productivity and help clean up some coding issues earlier. That’s useful. It just doesn’t settle the part of the problem attackers keep exploiting. The most dangerous weaknesses still tend to appear when applications interact with users, systems, credentials, and infrastructure under live conditions.

That’s why AppSec teams are having to rethink where they focus their effort. If static analysis becomes more automated inside AI-assisted development, then visibility into runtime behaviour becomes even more important, not less.

Teams that are reassessing how runtime testing fits into that shift can look at how organisations like StackHawk approach dynamic application security testing in practice. EM360Tech keeps tracking how AI, cloud, and modern security tooling are changing each other, because this is now the real job: staying clear-eyed about where the risk has moved, and what actually helps once it gets there.