Clawdbot has been in the headlines since November 2025. An open-source autonomous AI agent that went viral almost overnight, it sparked intense interest and widespread adoption, followed swiftly by more than a few security headaches as teams rushed to understand what it could access, what it could execute, and how far its permissions really extended.

Then Moltbook entered the conversation.

A social network for AI agents. Not humans posting updates, but agents posting to each other. Responding. Coordinating. Seemingly operating without a human actively guiding every exchange.

Put those two ideas side by side, and it’s easy to build a narrative. Botnets used to sweep up forgotten devices. Now AI agents are forming their own networks. Threats are becoming ecosystems. Security is no longer just an IT issue. It’s a leadership problem.

We watched a video making that case. And some of it made perfect sense. Because the suggestion that AI agents forming their own networks changes enterprise security isn’t inherently wrong.

But the reasoning between those opening images and the conclusion moved quickly, and some of the leaps left out context that matters if you’re responsible for enterprise security. So we decided to fill in the gaps, and it left us with a slightly different picture.

What Moltbook Actually Is And Why That Matters

The video that sparked this conversation leaned heavily on Moltbook as the headline act: a “social network for AI agents”, where software posts, replies, jokes, and seems to develop its own little culture. It’s the perfect hook because it feels like a new category of thing. But when you pause and ask what Moltbook actually is, that’s where the missing context starts.

Experimental platform or enterprise blueprint

Moltbook was created as a social platform for autonomous agents, and was apparently built by AI agents (with a little human help from Matt Schlicht). Humans can observe what’s happening, but the posts themselves are generated by agents interacting with one another. That detail matters because it’s easy to assume “no human involvement” means no human influence. It doesn’t.

Schlicht has described Moltbook as a space where an agent’s identity is shaped by the context it builds through interaction with its human owner. The agent isn’t handed a static personality and told to perform. It accumulates context through prior exchanges, and that evolving context informs how it behaves and what it posts.

So it seems the goal isn’t simply to design a persona and let it chatter. It’s to observe how autonomous agents behave when they interact with each other directly, without humans actually stepping into the thread, but with their responses shaped over time by off-platform human interactions.

And if you’ve been following the conversation around Moltbook, you’ve probably seen the same questions crop up again and again. Is this an experiment in agent-to-agent interaction (yes)? A novelty built to demonstrate coordination and personality (more of a side effect)? Or is it an early glimpse of how enterprise-grade agent ecosystems might operate in production environments (it could be, with some caveats)?

Before we get deeper into that, we need to step back a little.

Something that most seem to have forgotten (or purposely don’t mention) is that the agents on Moltbook are built and deployed by humans. Their roles are defined. Their objectives are assigned. Their permissions are configured. The environment itself is intentionally structured to enable interaction.

So what we’re seeing is not spontaneous digital life. A lot of the posts themselves depend on a human prompt telling an agent what to post about. It’s behaviour inside a system someone deliberately designed. Now that doesn’t make Moltbook irrelevant to the enterprise security conversation. But it does change how we interpret it.

Right now, Moltbook is evidence that agent-to-agent interaction is compelling, scalable, and technically achievable when the environment is purpose-built for it. Its specific value is in showing how coordination patterns emerge, how context compounds, and how agents behave when given increasing autonomy within a defined system.

And here’s the part that’s important: it doesn’t actually matter whether Moltbook is labelled an experiment or a blueprint. By the simple fact that it exists, and by its nature, it can act as both. It’s an experiment in controlled conditions. It’s also a visible demonstration of dynamics that could, in more capable systems, appear in enterprise environments.

Anyone who has spent years tracking technologies that go viral or get adopted too quickly knows this pattern. The early public sandbox rarely looks identical to production. But it often reveals the behavioural edge cases, governance gaps, and coordination challenges you might run into before they hit enterprise systems at scale.

So monitoring Moltbook isn’t about panic. It’s about pattern recognition. It’s about understanding what agent ecosystems look like when left to interact, so organisations can think ahead, implement guardrails, and protect their own environments before similar dynamics appear closer to home.

Scope and connectivity determine exposure

Once you stop treating Moltbook as a curiosity and start treating it as a visibility point, the security question gets more specific.

It’s not “agents are talking.” It’s “What are these agents connected to, and what can they do because of those connections?”

Moltbook itself is a social layer. Humans watch, agents post. The interaction is the point. But the risk picture changes the moment those agents aren’t just posting, and they’re also wired into tools, credentials, and real execution environments. And that’s where OpenClaw matters.

OpenClaw (the project many people still call Clawdbot) went viral in late 2025 and early 2026 because it isn’t just a chatbot. It’s an agent that can run on a machine and take actions, usually triggered through everyday messaging channels. That design sits right on the line enterprises struggle to govern: productivity on one side, privileged access on the other.

So when people look at Moltbook and assume it’s “just posts,” they miss the more realistic path. Moltbook is where you can watch agent-to-agent behaviour. OpenClaw is an example of what happens when an agent is also a doer with hands, credentials, and integrations.

That’s also why security warnings around OpenClaw have focused so heavily on deployment choices and misconfiguration. If an always-on agent is reachable in ways the operator didn’t fully think through, the boundary between “local assistant” and “external control surface” gets dangerously thin. That’s not a Moltbook problem. It’s a connectivity problem.

So the useful distinction here isn’t experiment versus blueprint. It’s contained interaction versus connected execution.

A system that can only interact inside a platform has a limited blast radius. A system that can traverse APIs, invoke tools, and act under inherited permissions has a very different risk profile, even if it started as an experiment.

So the lens enterprises should bring to Moltbook is a simple, disciplined question:

What is this connected to now, and what will it be connected to next?

What “AI Agents Talking” Actually Means in Enterprise Systems

“Agents talking to each other” sounds like a new behaviour category. In enterprise environments, it usually isn’t. What’s new is not that systems coordinate. What’s new is how much autonomy you give that coordination and how broadly it can reach.

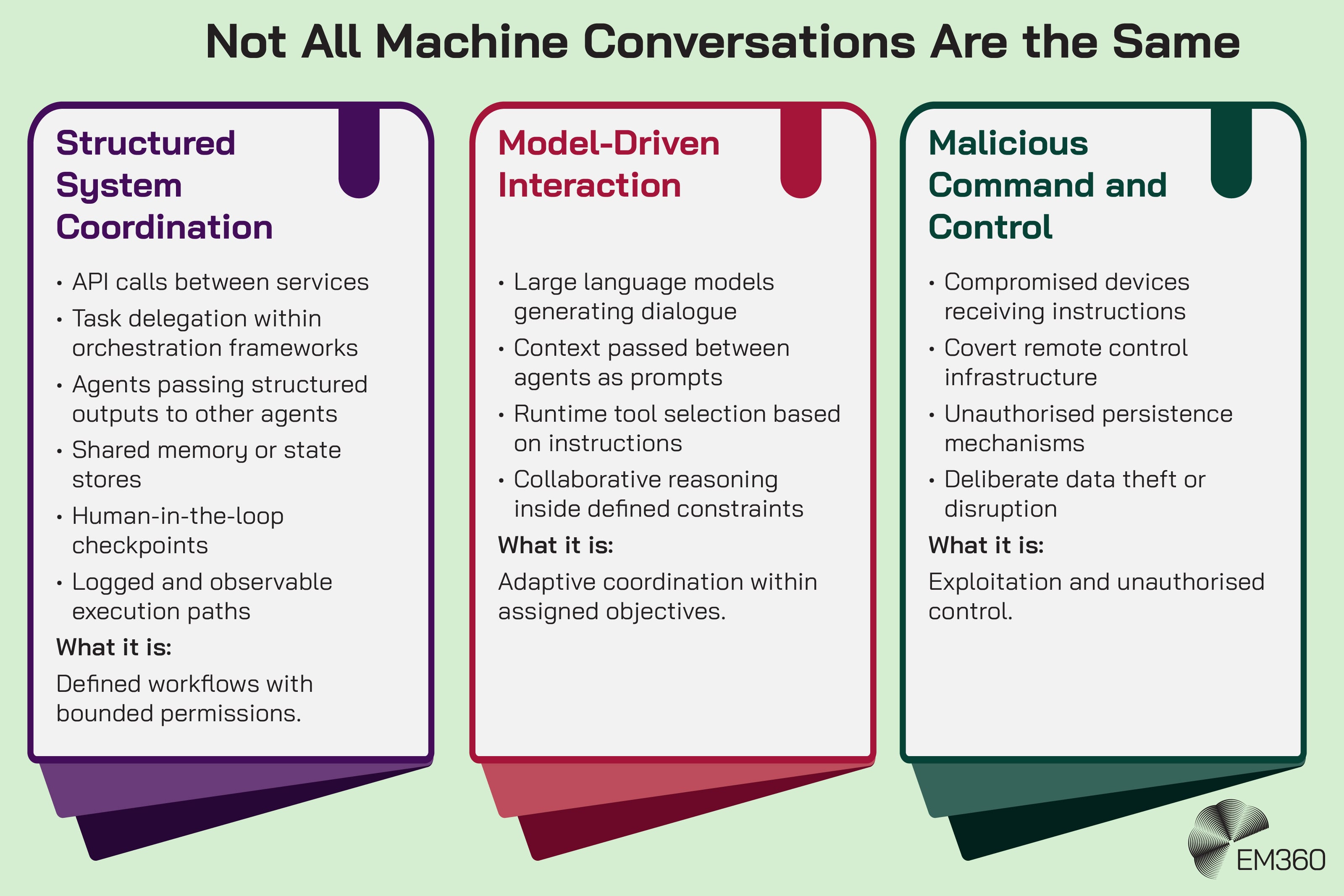

This is where the conversation often goes wrong, because “talking” can describe wildly different technical realities.

API coordination vs autonomous collaboration

In many organisations, what gets described as agent-to-agent communication is simply structured messaging between components. One service calls another service. A workflow triggers a tool. A system passes context to the next step in a process.

That can include agents, but the mechanism is often ordinary: API exchanges, event messages, queues, or a shared state store. It’s machine-to-machine interaction, not emergent social behaviour.

Where the “agent” label becomes relevant is when the system is allowed to decide what to do next based on context, rather than following a fixed script.

Multi-agent orchestration patterns

In enterprise terms, multi-agent orchestration is usually about coordination, specialisation, and division of labour.

One agent might gather information. Another might interpret it. Another might execute a task in a target system. They “talk” by passing structured outputs, instructions, and intermediate results.

Common patterns include:

- Task delegation, where a primary agent breaks work into subtasks and assigns them to specialised agents.

- Orchestration layers, where a controller agent manages other agents, telling them what to do, when to do it, and ‘deciding’ what happens if an action fails.

- Workflow engines, where the underlying structure or process is predefined by your team, but the agent fills in gaps, chooses tools, or adapts to edge cases.

- Shared memory systems, where agents can both write and read context so they aren’t essentially operating blind.

- Human-in-the-loop approvals, where high-risk actions have to be reviewed and/or signed off by a human before they can be executed.

So the headline version of this is “agents talking”. But the operational reality is “systems coordinating through an orchestration model”.
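To make that concrete, here is a minimal sketch of the patterns above in one place: a controller delegating to specialised agents, a shared memory they all read and write, and a human approval hook in front of the risky step. Every name in it (`Orchestrator`, `gather`, `interpret`, `execute`, the `approve` callback) is hypothetical and stands in for whatever framework or model calls a real deployment would use.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Orchestrator:
    """Controller that routes subtasks to specialised agents (illustrative only)."""
    agents: dict[str, Callable[[str, dict], str]]
    approve: Callable[[str, str], bool]                    # human-in-the-loop hook
    high_risk: set[str] = field(default_factory=set)       # roles that need sign-off
    memory: dict[str, str] = field(default_factory=dict)   # shared context

    def run(self, plan: list[tuple[str, str]]) -> dict[str, str]:
        for role, task in plan:
            if role in self.high_risk and not self.approve(role, task):
                self.memory[role] = "skipped: approval denied"
                continue
            # Each agent receives a copy of the shared context and writes its result back.
            self.memory[role] = self.agents[role](task, dict(self.memory))
        return self.memory


# Stand-ins for specialised agents; real ones would call models or tools.
def gather(task: str, memory: dict) -> str:
    return f"facts about {task}"


def interpret(task: str, memory: dict) -> str:
    return f"summary of [{memory['gather']}]"


def execute(task: str, memory: dict) -> str:
    return f"applied change based on [{memory['interpret']}]"


orchestrator = Orchestrator(
    agents={"gather": gather, "interpret": interpret, "execute": execute},
    approve=lambda role, task: False,   # placeholder reviewer that declines everything
    high_risk={"execute"},
)
result = orchestrator.run(
    [("gather", "ticket 42"), ("interpret", "ticket 42"), ("execute", "ticket 42")]
)
```

Nothing in this sketch is “social”. The agents “talk” by passing strings through a dictionary, and the only step that touches a target system is the one a human has to approve.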

Where autonomy actually begins

Autonomy starts to matter the moment an agent can do more than suggest.

A system that drafts a summary, flags anomalies, or recommends an action is operating in an advisory capacity. It influences decisions, but it doesn’t execute them. If it makes a mistake, a human is still the final gate.

The risk profile changes when the agent can act.

That shift isn’t about tone or personality. It’s about authority.

An agent that can select tools at runtime, decide which API to call, branch into different decision paths based on live context, and execute actions across systems isn’t just participating in a workflow. It’s shaping the workflow in real time.

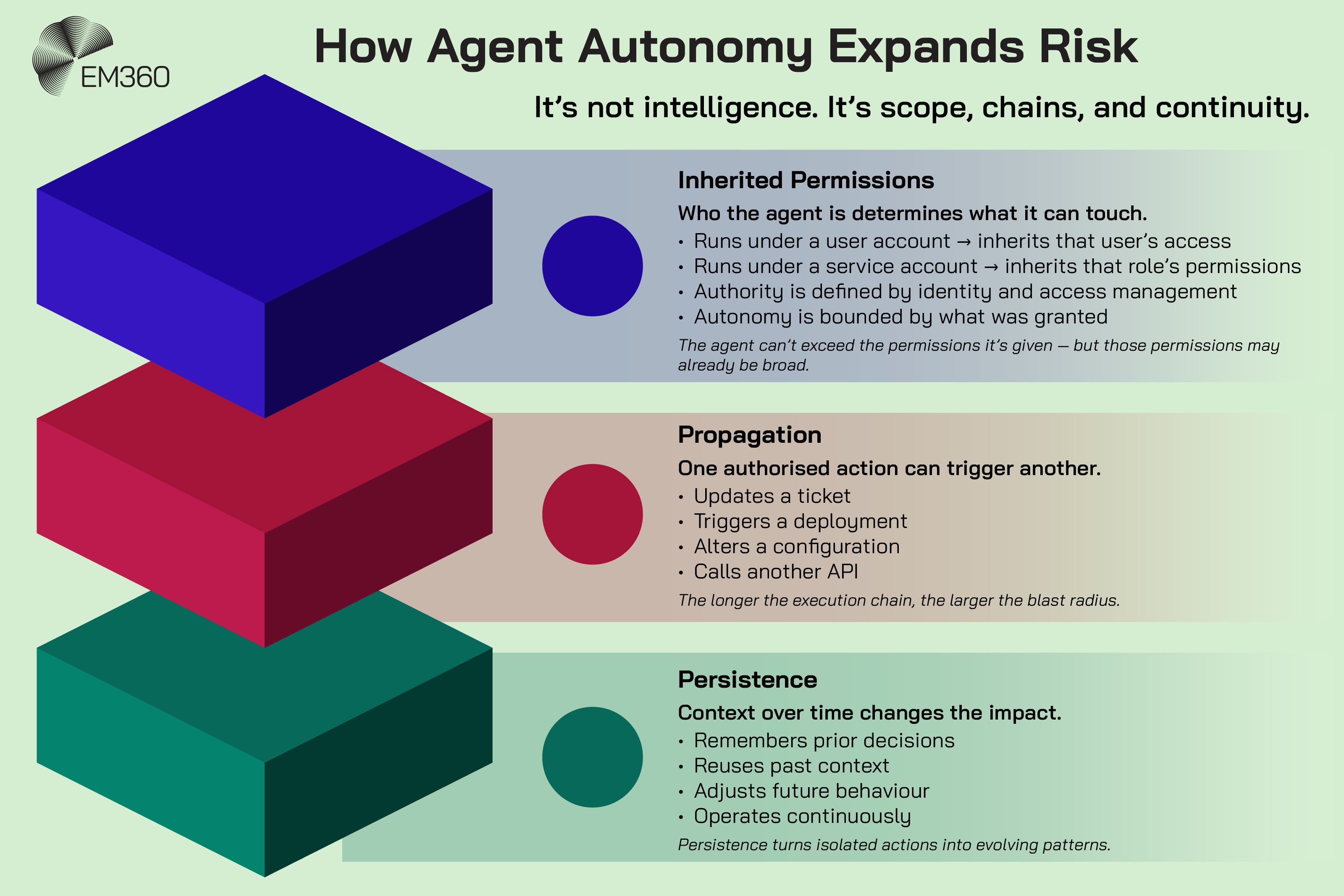

Now add three more variables:

First, inherited permissions. If the agent runs under a user account, it inherits whatever that user can access. If it runs under a service account, it inherits whatever that role is allowed to touch. In both cases, the scope of autonomy is bounded by identity and access management, not intelligence.

Second, propagation. An action in one system can trigger a cascade in another. An agent updates a ticket. That update triggers a deployment. The deployment changes a configuration. None of those steps are inherently dangerous, but the chain matters. The longer the chain, the larger the potential blast radius if something goes wrong.

Third, persistence. If an agent maintains context over time, remembers prior decisions, and adapts future actions accordingly, you’re no longer looking at a one-off execution. You’re looking at behaviour that compounds.

That’s where autonomy actually begins to affect enterprise security.
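The propagation point is easy to reason about if you treat triggers as a graph. The sketch below uses a breadth-first walk to list everything downstream of a single action and how many hops away it is. The `TRIGGERS` map is invented for illustration and mirrors the ticket, deployment, configuration chain described above; a real map would have to come from your own integration inventory.

```python
from collections import deque

# Hypothetical trigger map: an action in one system can start an action in another.
TRIGGERS = {
    "ticket.update": ["deploy.start"],
    "deploy.start": ["config.change", "notify.team"],
    "config.change": ["service.restart"],
    "notify.team": [],
    "service.restart": [],
}


def blast_radius(start: str, triggers: dict[str, list[str]]) -> dict[str, int]:
    """Return every downstream action reachable from `start`, with its hop count."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in triggers.get(current, []):
            if nxt not in hops:            # visit each action once, even if chains loop
                hops[nxt] = hops[current] + 1
                queue.append(nxt)
    del hops[start]                        # report only what the start action sets off
    return hops


reach = blast_radius("ticket.update", TRIGGERS)
longest_chain = max(reach.values())
```

In this toy map, one ticket update reaches four downstream actions, the furthest three hops away. That hop count is a rough proxy for how far a single misjudged action could travel before anyone looks at it.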

So again, it’s not that agents are “talking”. It’s that they can decide, select, and execute without waiting for explicit step-by-step instructions at every turn.

And that difference usually comes down to two things: what tools the agent can invoke, and how much independent authority it has to invoke them.

Interaction is ordinary. Authority is not. The coordination isn’t really a novelty. What we need to keep in mind in this conversation is execution power, and how far that execution can travel once it starts.

How Governance And Permissions Shape Agent Behaviour

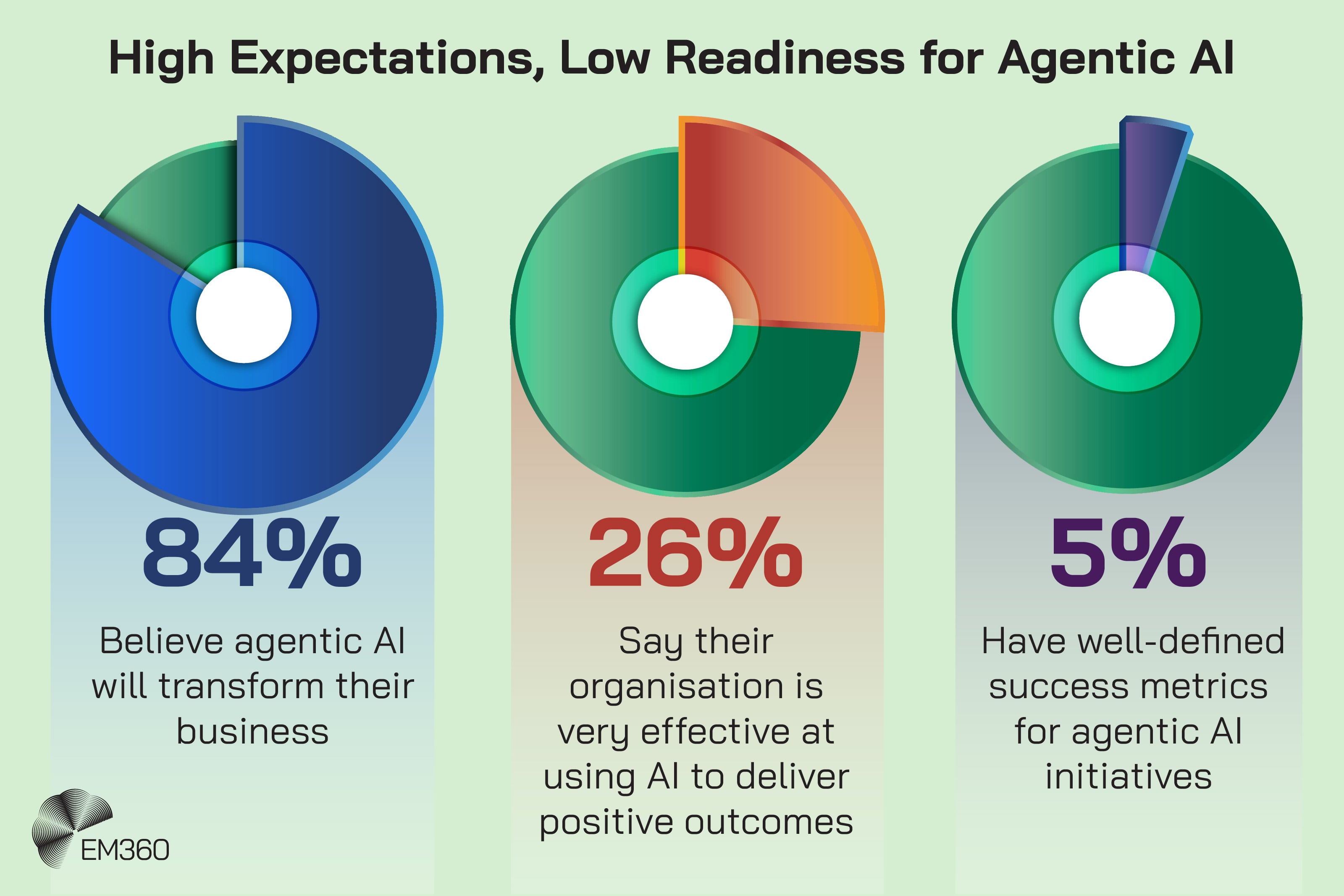

The video jumped from “agents joking about humans” to “they can coordinate without a human in the loop.” That leap makes it sound like autonomy is something agents naturally grow into. In practice, autonomy is something organisations grant, often incrementally, and sometimes too casually.

This is where AI governance stops being a policy document and becomes an engineering discipline.

Who grants authority?

An agent doesn’t wake up and decide it can access your finance system. It gets access because someone gave it a way in.

That might be a service account. It might be a token. It might be an integration key. It might be a role assigned inside an identity system.

If an agent can act across systems, it’s because its identity has been permitted to do so, directly or indirectly. That’s why identity and access management is central to agent risk. Least privilege matters here more than ever, because agents can execute dozens of actions in the time it takes the average person to read the first email.

And they can operate continuously.

Guardrails and escalation controls

Good governance isn’t a statement in a policy document. It’s visible in how a system is allowed to behave.

If an agent is being tested, it shouldn’t be anywhere near live customer data or production systems. That’s what sandboxing is for. Sandboxes exist because you need a real boundary that limits what an experiment can touch.

If an agent is allowed to take action, there should be moments where it pauses. High-impact steps should need confirmation. That pause is what stops a single misinterpretation from turning into a chain reaction.

And if something does go wrong, you should be able to trace it. Not just the final action, but the sequence of decisions that led there. Monitoring and logging aren’t about catching bad behaviour after the fact. They’re about making the logic visible while the system is running.

Then there are policy boundaries. Which tools can the agent call? Under which conditions? With what scope of access? Those constraints are what translate “least privilege” from theory into something enforceable.

When those controls exist, autonomy is contained. When they don’t, it’s easy to blame the agent for acting unpredictably. But in enterprise environments, unpredictable behaviour is almost always the result of unclear permissions, excessive access, or poorly defined objectives.
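As a rough illustration of how those controls fit together, the sketch below puts an allowlist, a confirmation pause, and an audit trail in front of every tool call. `ToolPolicy`, `GuardedAgent`, and the tool names are all hypothetical. In a real system the policy would live in your identity and access layer rather than in application code, and the `confirm` callback would route to an actual reviewer.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolPolicy:
    allowed: set[str]               # tools this agent may call at all
    needs_confirmation: set[str]    # high-impact tools that must pause for sign-off


@dataclass
class GuardedAgent:
    policy: ToolPolicy
    tools: dict[str, Callable[..., object]]
    confirm: Callable[[str, dict], bool]
    audit_log: list[dict] = field(default_factory=list)

    def call(self, tool: str, reason: str, **args):
        # Record the attempt and its stated reason before anything runs.
        entry = {"tool": tool, "reason": reason, "args": args, "outcome": "denied"}
        self.audit_log.append(entry)
        if tool not in self.policy.allowed:
            raise PermissionError(f"{tool} is outside this agent's policy")
        if tool in self.policy.needs_confirmation and not self.confirm(tool, args):
            entry["outcome"] = "held for review"
            return None
        result = self.tools[tool](**args)
        entry["outcome"] = "executed"
        return result


agent = GuardedAgent(
    policy=ToolPolicy(
        allowed={"read_ticket", "restart_service"},
        needs_confirmation={"restart_service"},
    ),
    tools={
        "read_ticket": lambda ticket_id: f"ticket {ticket_id}",
        "restart_service": lambda name: f"restarted {name}",
        "delete_db": lambda name: f"deleted {name}",   # installed, but never allowed
    },
    confirm=lambda tool, args: False,   # placeholder reviewer that declines everything
)
summary = agent.call("read_ticket", reason="triage", ticket_id=7)
held = agent.call("restart_service", reason="fix outage", name="billing")
```

The design choice worth noticing is that the log entry is written first and updated afterwards, so denied and held attempts leave the same trace as executed ones.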

Human-defined objectives

Autonomy doesn’t just come from permissions. It comes from objectives.

An agent behaves differently depending on what it is told to optimise for. Reduce response time. Close tickets. Increase engagement. Minimise cost. Surface anomalies. Each of those goals creates a different behavioural pattern.

That’s where things can start to look "evolved".

If an agent is given a broad objective and enough tool access to pursue it, it will make choices within that boundary. It might chain tools in ways you didn’t explicitly script. It might prioritise efficiency over caution. It might follow the letter of the instruction while missing the spirit of it.

But that behaviour still traces back to a defined goal.

In enterprise systems, objectives are rarely neutral. They reflect business priorities. If you optimise for speed, you may get risk tolerance. If you optimise for automation, you may get fewer pause points. If you optimise for output, you may get more aggressive tool usage.

So when people worry about agents operating outside of human oversight, the more useful question isn’t “Are agents evolving?” It’s “What outcomes did we optimise for, and what safeguards did we attach to those outcomes?”

Permissions define what an agent can do. Objectives define what it will try to do.

And that’s where accountability stays firmly on the human side of the line.

How Enterprise Agent Systems Differ From Botnets

The video referenced Moltbot and then moved toward the idea of agent networks as the next phase of cyber threat evolution. The comparison is emotionally effective. When you hear "networks", "automation", and references to botnets in the same arc of an argument, your brain starts drawing straight lines between them.

But technically, that leap skips some pretty important steps.

Botnets and enterprise multi-agent systems can both look like many nodes coordinating. That visual similarity is real. But the operating model usually isn’t.

A botnet is built through exploitation. Devices are compromised through vulnerabilities or weak credentials. Control is centralised through command-and-control infrastructure. The operation is covert by design. Persistence is engineered in. The objective is malicious from the get-go, whether that’s distributed denial of service attacks, credential theft, or data exfiltration.

The defining feature isn’t scale. It’s unauthorised control.

Enterprise agent systems start from the opposite position. They’re deployed intentionally. Their objectives are assigned. Their permissions are granted. Sometimes too generously, but the point is that they were ‘allowed’ to do a thing. Their activity is, at least in principle, observable and logged. They don’t need to hide because they aren’t malicious by design.

That doesn’t make them inherently safe though. It just means the failure mode is different.

When an enterprise agent causes damage, it’s rarely because it spontaneously transformed into something hostile. It’s usually because it was given more authority than the organisation realised. Or because its objective conflicted with a constraint that wasn’t clearly defined. Or because a chain of connected systems amplified a single misjudgement.

One thing to add is that a legitimate, properly permissioned and ‘well-behaved’ enterprise agent can be hijacked.

If its credentials are exposed, its messaging interface is misconfigured, its execution environment is compromised, or a dependency in its supply chain is poisoned, that agent can become a control surface for someone else. At that point, the behaviour can start to resemble botnet activity. Not because the agent evolved. But because it was exploited.

That distinction matters. One is unauthorised control from the start. The other is authorised control that was granted too broadly or later subverted.

So if someone wants to argue that agent ecosystems will converge with botnet behaviour, they need to show where exploitation enters the picture. Where persistence becomes covert. Where control shifts from authorised to unauthorised.

Without that, you don’t have a defined threat model. You have a dramatic parallel.

And those are not the same thing.

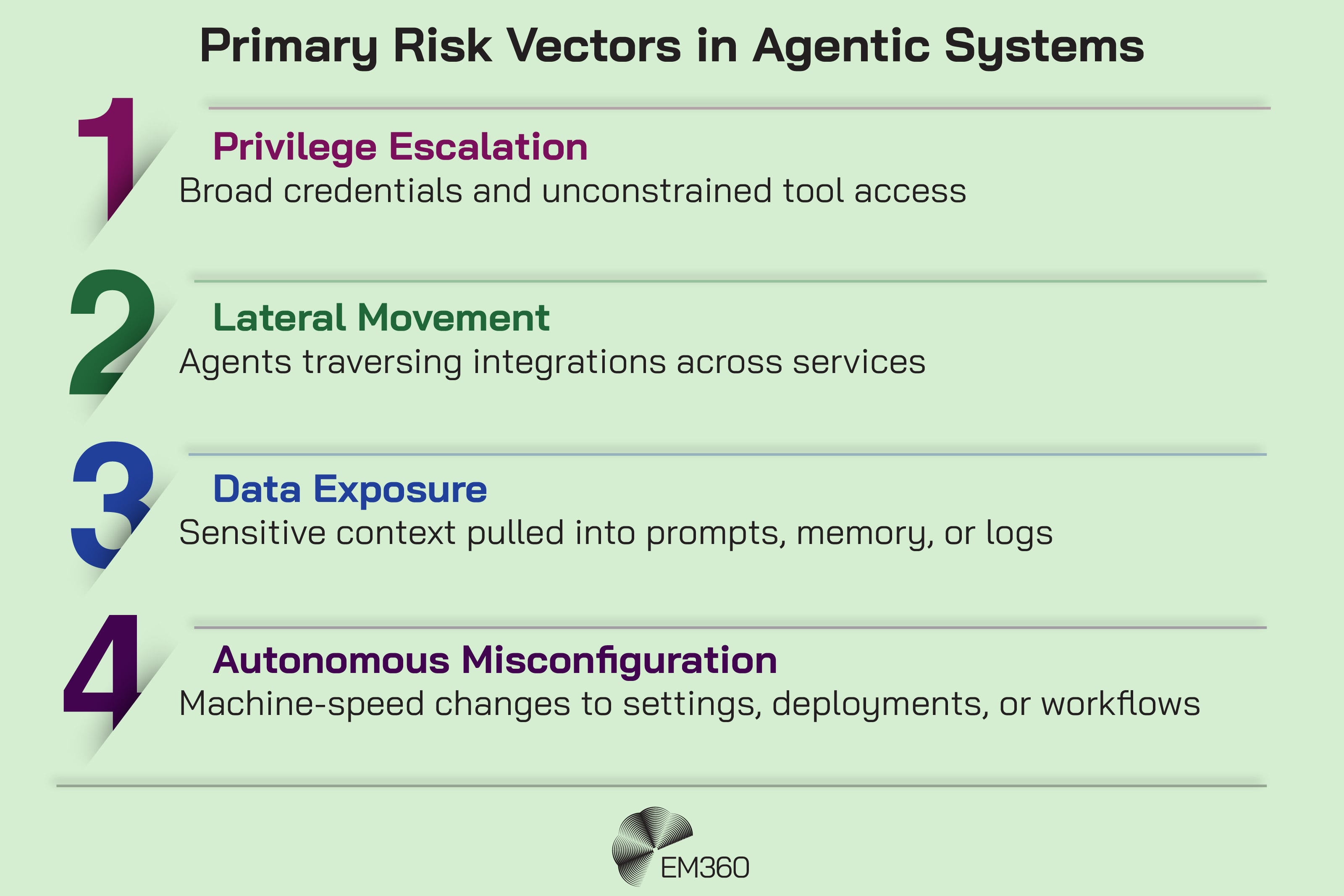

What The Actual Risk Vectors Look Like

The video said these systems can “evolve beyond our expectations and operate at scale.” The problem with that phrasing is that it gestures at risk without defining it. Enterprises can’t defend against gestures. They can only defend against known (or potential) pathways.

So what do plausible risk vectors look like when you introduce agentic AI into real environments?

Plausible risk vectors in agentic systems

- Privilege escalation can happen when agents are attached to credentials or roles that grant broad access, especially if tool use isn’t tightly constrained. An agent doesn’t need malicious intent to cause harm. It only needs the ability to do something it shouldn’t.

- Lateral movement becomes more likely when integrations make it possible for agents to hop across services. One action triggers another. One tool call unlocks another. The chain expands.

- Data exposure can occur when agents pull sensitive context into prompts, summaries, or shared memory. If that context is logged or passed between agents without strict controls, you have new places where sensitive information can leak.

- Autonomous misconfiguration becomes a risk when agents can change settings, deploy resources, or modify workflows at machine speed. A human might catch a bad configuration after a few steps. An agent can apply it everywhere before anyone notices.

- Prompt injection and unintended tool use matter when agents ingest external inputs, especially when those inputs can influence actions. A system that reads content and then acts on it needs safeguards that assume the content can be hostile.

- Unmonitored API chaining is the quiet risk that underpins all of this. If you don’t have visibility into how tools are being invoked, in what order, and with what context, you don’t have a reliable picture of what the agent is truly doing.
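Taking the data exposure item as an example, one common mitigation is to scrub obviously sensitive values before anything is written to shared memory or logs. The sketch below is deliberately crude: the two regular expressions, the `redact` helper, and the `shared_memory` list are illustrative assumptions, not a substitute for a proper data loss prevention control.

```python
import re

# Deliberately simple patterns; real detection needs far more than two regexes.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_NUMBER = re.compile(r"\b\d{12,19}\b")   # crude stand-in for card or account numbers


def redact(text: str) -> str:
    """Replace email addresses and long digit runs with placeholders."""
    text = EMAIL.sub("[email]", text)
    return LONG_NUMBER.sub("[number]", text)


shared_memory: list[str] = []


def remember(note: str) -> None:
    """Write to shared context only after redaction, so other agents never see raw values."""
    shared_memory.append(redact(note))


remember("Refund 4111111111111111 for jane.doe@example.com, order 1042")
```

The point is less the patterns themselves than where the check sits: at the boundary where context leaves one agent and becomes readable by others.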

What makes all of those risks sharper is speed.

An agent operating at machine pace compresses the window for detection and intervention. A misjudged tool call doesn’t just sit there waiting to be reviewed. It can trigger another action, which triggers another, with each step using the last output as context. Retry logic, delegation patterns, and automated escalation can multiply a small error into a system-wide issue before a human even sees the first alert.
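One way to buy back some of that detection window is a simple action budget: once an agent exceeds a set number of actions in a time window, it halts until a human resets it. The sketch below assumes a hypothetical `ActionBudget` class checked before every tool call. The thresholds are arbitrary, and the injectable `clock` exists only to make the behaviour easy to test.

```python
from collections import deque
import time


class ActionBudget:
    """Trip a breaker when an agent exceeds `max_actions` within `window_seconds`."""

    def __init__(self, max_actions: int, window_seconds: float, clock=time.monotonic):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.clock = clock
        self.timestamps = deque()
        self.tripped = False

    def allow(self) -> bool:
        if self.tripped:
            return False                       # stay halted until a human resets it
        now = self.clock()
        while self.timestamps and now - self.timestamps[0] >= self.window_seconds:
            self.timestamps.popleft()          # forget actions outside the window
        if len(self.timestamps) >= self.max_actions:
            self.tripped = True
            return False
        self.timestamps.append(now)
        return True

    def reset(self) -> None:
        self.tripped = False
        self.timestamps.clear()


# A frozen clock simulates five actions arriving at the same instant.
budget = ActionBudget(max_actions=3, window_seconds=10.0, clock=lambda: 0.0)
decisions = [budget.allow() for _ in range(5)]
```

With a budget of three, the fourth and fifth actions are refused and the breaker stays open. A retry loop or delegation chain that would otherwise multiply an error runs out of room after a handful of steps.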

This is where the “ecosystem” language starts to feel more grounded. Not because threats suddenly became interconnected. They always were. But because autonomous systems can move across those connections faster than many traditional controls were designed to handle.

Why Enterprises Are Building Agent-To-Agent Systems in the First Place

It’s very easy to read this entire conversation as "risk layered on risk." But enterprises aren’t deploying agent-to-agent systems because they want new problems. They’re deploying them because the capability jump is real.

When agents coordinate, a few things change immediately.

Context doesn’t reset at every step. One part of the system can remember what another already did. That reduces repetition. It reduces handoffs. It removes the small delays that add up across large organisations.

Tasks that used to require three tools and two people can happen in one flow. Information doesn’t have to be re-entered. Decisions don’t have to wait for someone to notice an alert. The system can respond as events happen, not hours later.

That matters in environments where speed is revenue, uptime, or competitive edge.

There’s also a more practical truth. Integration friction is expensive. Every custom workflow, every brittle connection between systems, and every manual checkpoint costs time and money. Agent-based coordination promises to smooth that out. Not perfectly. But enough to justify experimentation.

So autonomy starts to look less like a novelty and more like leverage.

And that’s where the tension sits.

Because the pressure to expand capability is immediate. The pressure to slow down and design constraints is not. Teams see productivity gains. Leaders see efficiency metrics move. Pilots become production faster than anyone intended.

Governance, on the other hand, requires deliberate design. Identity boundaries. Monitoring. Clear limits on what an agent can touch. A willingness to say, “Not yet.”

Risk doesn’t grow because agents are inherently unstable. It grows when capability scales faster than constraint.

How Agentic Systems Amplify Existing Visibility Challenges

The video made a point that is true but not new: if you don’t know what’s connected to your network, you don’t know your risk. Shadow IT, unmanaged IoT, SaaS sprawl, and API expansion have been eroding visibility for years.

The missing context is how agentic systems change the shape of that problem.

Pre-existing visibility gaps

Long before anyone started talking about agent ecosystems, most enterprises were already operating with partial visibility.

Shadow IT didn’t arrive with agentic AI. Teams have been adopting tools outside formal governance for years. IoT sprawl didn’t begin with autonomous systems. “Install and forget” has been a security problem since the first unmanaged device connected to a corporate network. SaaS proliferation and API sprawl have been expanding the attack surface quietly for over a decade, often faster than inventories can keep up.

So the visibility problem isn’t new.

What changes with agentic systems is how the potential risks linked to those existing blind spots grow once something autonomous can move through them.

When a human uses an unsanctioned tool, there’s friction. There are pauses. There’s a limit to how far one action can travel before someone notices (usually). An AI agent doesn’t operate with that same drag. It can move across integrations continuously. It can chain tool calls that were never meant to be part of a single flow. It can pull context from one place, act in another, and leave traces in a third.

In other words, the blind spots don’t just sit there anymore. They become pathways.

So when someone says, “If you don’t know what’s connected to your network, you don’t know your risk,” they’re right. But that’s always been true. What’s different now is that a connection isn’t just an exposure point. It’s a potential execution path.

And that’s a materially different risk profile.

How agentic systems amplify exposure

Agentic systems amplify these gaps because they aren’t just another connected asset. They’re an actor that can traverse connections dynamically.

- Dynamic permission use means the agent may touch systems in sequences that weren’t anticipated, even when each individual action is allowed.

- Cross-domain execution means the agent can move from one business function to another through tools, integrations, and shared context.

- Runtime tool selection means you can’t assume a fixed path. The agent can choose what to call next based on context.

- Self-modifying workflows and interdependent execution chains can create second-order effects. One change influences another. One decision becomes the input for a later decision.

This is why “visibility” becomes harder. It’s not because the environment is newly complex. It’s because the decisions inside it can now move at machine speed and across domains, with fewer predictable patterns.
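The “allowed individually, unanticipated in sequence” problem can at least be made visible. The sketch below compares the order of an agent’s tool calls against sequences seen in earlier runs and flags any tool-to-tool transition that is new. The tool names and the `baseline` data are invented for illustration, and a real baseline would need far more history than two runs.

```python
def transitions(calls: list[str]) -> set[tuple[str, str]]:
    """Adjacent pairs of tool calls, e.g. ['a', 'b', 'c'] -> {('a', 'b'), ('b', 'c')}."""
    return set(zip(calls, calls[1:]))


def unexpected_transitions(
    baseline_runs: list[list[str]], observed: list[str]
) -> list[tuple[str, str]]:
    """List transitions in `observed` that never appeared in any baseline run."""
    known: set[tuple[str, str]] = set()
    for run in baseline_runs:
        known |= transitions(run)
    flagged: list[tuple[str, str]] = []
    for pair in zip(observed, observed[1:]):
        if pair not in known and pair not in flagged:
            flagged.append(pair)               # keep order of first appearance
    return flagged


# Hypothetical history: the agent normally reads the CRM, then drafts or reports.
baseline = [["crm.read", "email.draft"], ["crm.read", "report.write"]]
observed = ["crm.read", "hr.read", "email.send"]
flagged = unexpected_transitions(baseline, observed)
```

Every tool in `observed` might be individually permitted, yet both transitions are flagged because the agent has never moved from CRM data to HR data to outbound email before. That is the cross-domain path worth a second look.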

What Would AI Agent “Evolution” Actually Require?

“Evolution” is a powerful word. It suggests independence. Self-direction. Something crossing a line.

If we’re going to use it in the context of enterprise AI agents, it needs to mean something concrete.

Right now, most enterprise agents operate inside clearly defined boundaries. They’re given objectives. They’re given tool access. They’re given credentials. They execute within that envelope, sometimes clumsily, sometimes aggressively, sometimes faster than intended. But they don’t invent the envelope.

For evolution to be a technically accurate description, something more fundamental would have to change.

- An agent would need to form its own goals rather than pursue assigned ones.

- It would need to seek broader access instead of acting only within granted permissions.

- It would need continuity beyond the credentials and deployment model that created it.

- It would need the ability to replicate or extend itself without a human initiating that process.

- And it would need to revise its priorities in ways not bounded by the original task definition.

That’s a different category of system.

What we’re seeing in most enterprise environments is amplified autonomy. Systems that can choose tools at runtime. Systems that can chain actions. Systems that can operate continuously and adapt within constraints. Powerful, yes. Risky if mismanaged, absolutely. But still anchored to human-defined objectives, identities, and control layers.

That distinction matters because it shifts the conversation.

If agents are evolving, the response sounds existential. If agents are expanding within granted authority, no matter how unexpected that expansion was, the response is governance, architecture, and constraint design.

And those are solvable problems.

Final Thoughts: Missing Context Leads To The Wrong Decisions About Risk

The video’s conclusion bundled three ideas: cyber threats are now ecosystems, unknown connections create risk, and security is a leadership issue. With the missing context in place, those claims look less like a new revelation and more like a reframing that needs sharper language.

Threats have long functioned as ecosystems. What agentic systems change is the speed and interdependence of actions within them.

Unknown connections have always created risk. What changes now is that agents can traverse those connections dynamically and at scale, especially through tools and integrations.

Security has always been a leadership issue. When you’re a good leader, everything is a leadership issue. What’s different is the surface area leaders have to govern. It’s no longer just infrastructure and human access. Now it’s also autonomous decision-making systems operating across business functions.

So now that we have the whole picture, it’s clear that AI agents talking to each other has never really been the risk. Instead, the risk has always been that we talk about them (and use them) without defining what they are, how they operate, and who is accountable for their behaviour.

Enterprise AI isn’t slowing down. The responsibility to govern it shouldn’t lag behind. EM360Tech continues to examine how autonomy, security, and architecture intersect, so decision-makers can move forward with precision rather than reaction.
