A few years ago, a new developer tool might’ve taken months to become “normal” inside a company. Someone tested it. Someone reviewed it. Someone eventually wrote a policy for it.

Clawdbot didn’t wait for any of that.

In late January 2026, the project went from “interesting GitHub experiment” to a mainstream talking point, then almost immediately to an enterprise security discussion. Not because it’s the first AI assistant, or even the first autonomous agent, but because of what it represents: an always-on assistant that can take actions on a machine, triggered through everyday messaging apps.

That’s why enterprises care. Not because it’s viral. Because it sits right on the fault line between productivity and access, and that’s a line most organisations don’t have clearly marked yet.

What Is Clawdbot?

Clawdbot is the name many people still use for an open-source AI agent that runs on a user’s machine and can take actions, not just answer questions. The project has been renamed twice, first to Moltbot and then to OpenClaw, which its creator describes as the final name.

In practical terms, it’s a locally run assistant that can connect to chat platforms and act like a “doer”, not just a helper. That distinction matters. A chatbot that answers questions is useful. A tool that can execute commands, modify files, and interact with services on your behalf starts behaving like a system component.

What does Clawdbot actually do?

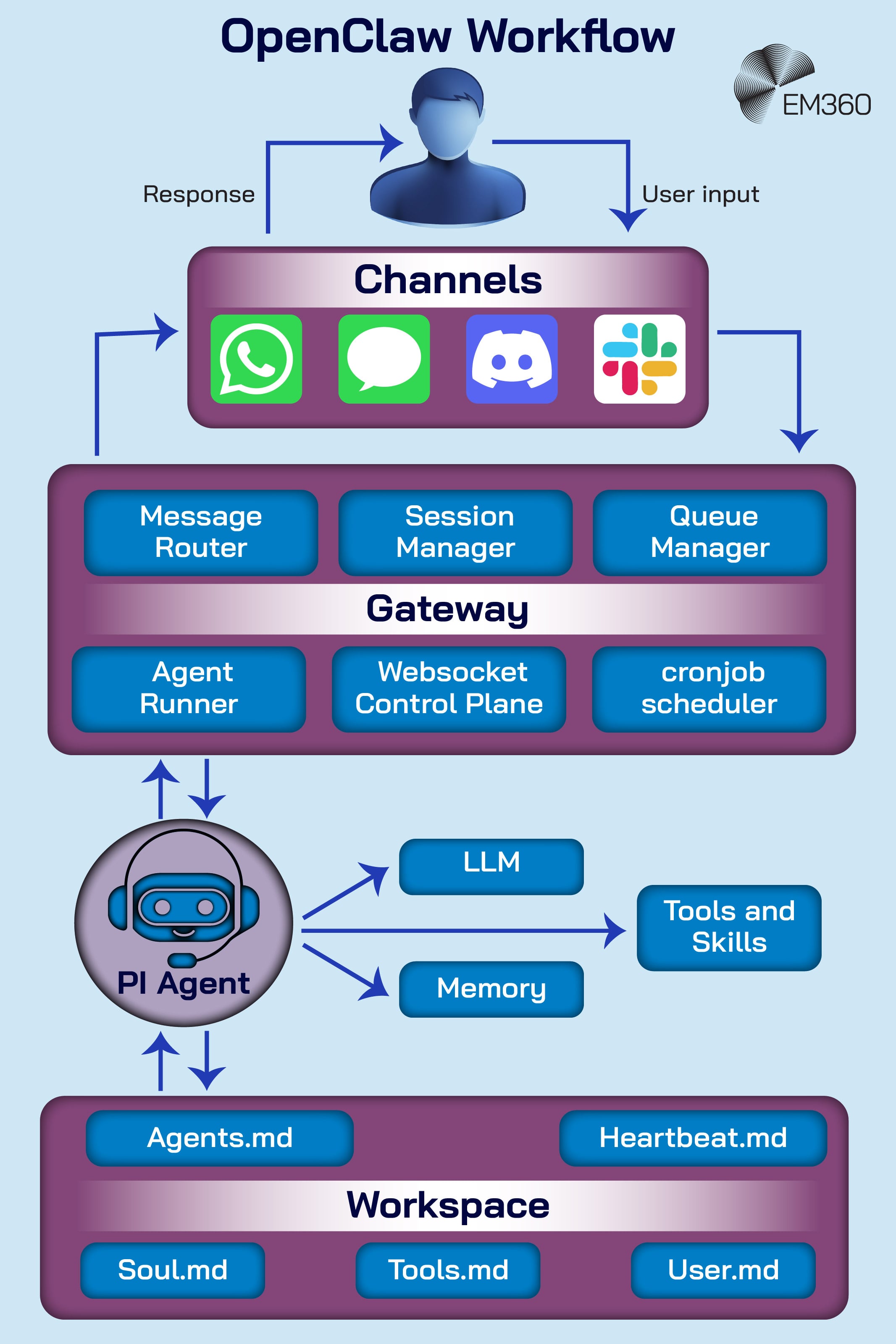

At its core, Clawdbot (now OpenClaw) is a local AI agent that sits on a machine and accepts instructions through chat-style interfaces. The project’s own materials position it as an agent platform that can run on infrastructure you choose, while remaining reachable through channels people already live in.

That design has a few practical implications:

- It can keep running when you’re not actively using it, depending on how it’s deployed.

- It can trigger actions through integrations, rather than staying inside a single app.

- It inherits the permissions of the user account it’s running under, unless you deliberately restrict it.

That’s also why it’s been compared to a digital “assistant with hands”. It doesn’t just suggest. It can act on your behalf, with the same access you have.

Is Clawdbot free and open source?

The project is open source. Its creator has repeatedly described it that way while documenting the renames, and has stated an intent to keep it open.

The practical cost question is more subtle than “is it free”. The software may not cost money to download, but running it can still create real spend:

- If you host it on a virtual private server (VPS), you’re paying for compute.

- If you connect it to external models, you’re paying for model usage.

- If you add third-party integrations, you’re expanding operational overhead and security scope.

For any business, and especially for enterprises operating at scale, “free” is never the full story. The more useful cost question is: what does it touch, what does it inherit, and what does each of those cost to run and secure?

Why Did Clawdbot Spread So Quickly?

OpenClaw spread as fast as it did partly because of its design and partly because of some not-so-simple psychology.

The design piece is obvious. It’s open source, easy to try, and built around interfaces people already know. If a tool shows up in Slack, Teams, Telegram, or Discord, it doesn’t feel like “new software”. It just feels like a new contact.

The psychology piece is harder to admit, but it’s the real accelerant. Social proof has become a stand-in for due diligence. If something is trending, starred, shared, and praised, people assume someone else has done the careful work.

That’s how consumer adoption patterns leak into enterprise environments.

And there’s another factor that matters in 2026: the “AI employee” narrative. The promise of an assistant that keeps working while you sleep is compelling. It also makes people more willing to grant broad access early, because the value proposition is framed as leverage, not convenience.

Enterprises care because this adoption doesn’t always happen through procurement. It happens through individuals, teams, and experiments. That’s the Shadow AI pathway, and it’s rarely malicious. It’s usually just fast.

How Clawdbot Works and Why That’s Raising Red Flags

There’s a difference between “this tool could be risky” and “this tool changes the shape of risk”.

OpenClaw sits in the second category because it blends three things that are often separated:

- Local execution

- Always-on availability

- External command channels

None of those are automatically bad. But the combination is exactly what security teams worry about, because it’s the same combination attackers love to exploit.

The local gateway and messaging control model

OpenClaw is designed to run locally while remaining reachable through chat channels. That’s part of the appeal, and it’s also where misconfiguration can flip the risk profile.

A local service is usually protected by locality. If it only listens on localhost, the exposure is limited. But if people expose it through a reverse proxy for convenience, the “local” boundary gets blurry. A reverse proxy can effectively turn a local control surface into something that’s reachable from outside the machine, depending on how it’s configured.
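To make the localhost point concrete, here is a minimal sketch of a check that a configured bind address keeps a service on the local machine. The function name `is_loopback_bind` is hypothetical and is not part of OpenClaw. It only inspects the bind address, so it cannot tell whether a reverse proxy in front of the service is forwarding outside traffic to it.

```python
import ipaddress


def is_loopback_bind(host: str) -> bool:
    """Return True if the bind address is only reachable from the same machine."""
    if host == "localhost":
        return True
    try:
        # 127.0.0.0/8 and ::1 are loopback; 0.0.0.0 and :: mean "all interfaces".
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Not an IP literal (for example a hostname): not provably local.
        return False


for addr in ("127.0.0.1", "::1", "0.0.0.0", "192.168.1.20"):
    print(addr, "local-only" if is_loopback_bind(addr) else "reachable from the network")
```

A check like this is a starting point for review, not proof of safety: a loopback-bound service can still be exposed by whatever sits in front of it.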

This is why regulators and security researchers have focused on misconfiguration risk alongside the software itself. Reuters reported that China’s industry ministry warned about cybersecurity and data risks if OpenClaw deployments are misconfigured.

That warning isn’t about one bug. It’s about what happens when an always-on agent is reachable in ways the operator didn’t fully think through.

Privilege, persistence and shell execution

This is the section where people start using charged language, so it’s worth being precise.

OpenClaw is an agent that can take actions on a machine. If it can execute commands, access files, and interact with apps, it can do real work. It can also do real damage, especially if it’s running under a privileged account.

That’s why some researchers compare parts of this model to a remote access trojan (RAT). A RAT is malicious software that gives an attacker remote control of a system. OpenClaw isn’t inherently malicious. But the shape of the capability can look similar when:

- The agent is always on

- It can accept instructions from external channels

- It can execute commands or automate actions locally

That “capability overlap” is what makes security teams uncomfortable. It’s also why there’s been a rush of guidance around hardening and mitigation for agentic tools like this. Tenable, for example, published a mitigation-focused write-up describing vulnerabilities and hardening steps for Clawdbot, Moltbot, and OpenClaw.

There’s also a more modern risk that doesn’t fit the RAT analogy neatly: prompt injection. If a model can be influenced through inputs it wasn’t meant to trust, it can be nudged into actions that look authorised but aren’t intended. That’s not unique to OpenClaw, but autonomous agents expand the blast radius because they don’t stop at text generation.

Credential storage and extension ecosystem risks

The moment an agent becomes useful, it starts collecting things that matter: tokens, keys, access to third-party services, and sometimes a memory of prior interactions.

That creates two adjacent risk categories.

First, sensitive material. If credentials end up in logs or configuration files without strong controls, you’ve got a credential storage problem, not an AI problem.
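One common control for the logging half of that problem is redacting secret-looking values before a line is written. The sketch below is illustrative only: the key names in `SECRET_PATTERN` are assumptions, it handles simple `key=value` and `key: value` lines rather than structured formats like JSON, and it is no substitute for a proper secrets manager.

```python
import re

# Assumed key names; extend to match whatever your own configs and logs use.
SECRET_PATTERN = re.compile(
    r"(?i)([\w-]*(?:api[_-]?key|token|secret|password)[\w-]*)(\s*[:=]\s*)(\S+)"
)


def redact(line: str) -> str:
    """Replace the value of any secret-looking key with a placeholder."""
    return SECRET_PATTERN.sub(
        lambda m: f"{m.group(1)}{m.group(2)}[REDACTED]", line
    )


print(redact("SLACK_BOT_TOKEN=xoxb-1"))
print(redact("user logged in"))
```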

Second, ecosystem abuse. Viral open-source projects attract imitators and opportunists. Malwarebytes documented an impersonation campaign that followed the Clawdbot rename, including typosquat domains and a cloned repository, with the classic goal of catching users during a confused search-and-install moment.

This isn’t theoretical. Aikido reported a fake VS Code extension called “ClawdBot Agent” that behaved like a trojan, presenting as a working assistant while dropping ScreenConnect remote access malware on Windows when VS Code started.

That story matters for enterprises because it shows how the risk spreads. You don’t need a flaw in the core project to get harm. You just need enough hype that people will install the wrong thing quickly.

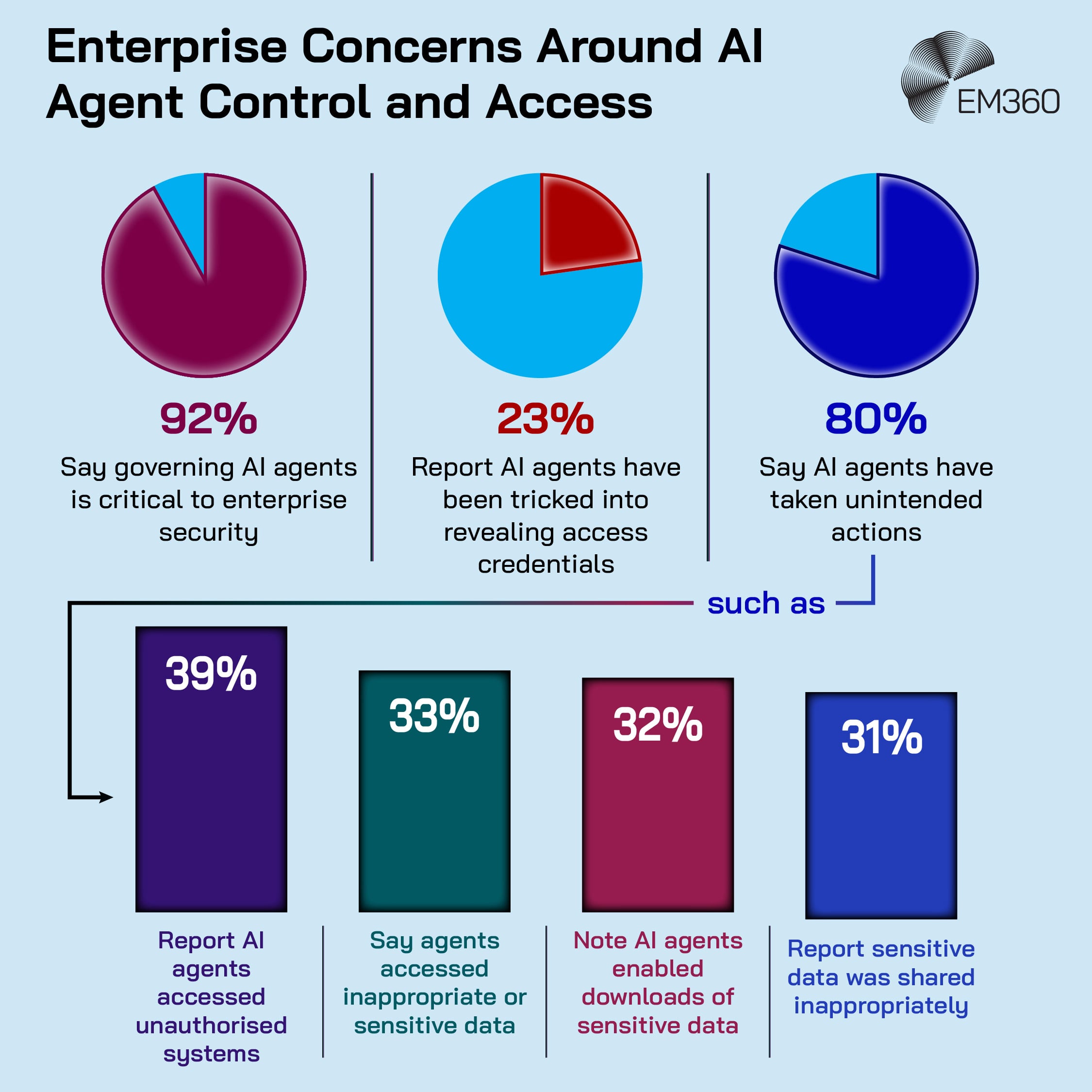

What Industry Research Reveals About Shadow AI and Enterprise Exposure

The Clawdbot story is noisy, but it’s not isolated. It’s a clear example of trends that security and IT teams have been tracking for months: adoption outpacing controls, and individuals bringing powerful tools into corporate workflows before policies catch up.

Microsoft’s Cyber Pulse AI security report ties this directly to measurable gaps. It cites its Data Security Index finding that only 47 per cent of organisations report implementing specific security controls for generative AI.

It also highlights unsanctioned use. Microsoft reports that 29 per cent of employees have turned to unsanctioned AI agents for work tasks.

That’s the context enterprises should be using when they look at OpenClaw. It’s not “is this one tool safe?” It’s “do we have a way to see and govern agent-like tools at all?”

There’s also an emerging consensus on why agents feel different. Palo Alto Networks points to persistent memory as an accelerant, because it turns attacks from one-off events into stateful, delayed-execution scenarios.

Frameworks are catching up too. The Open Worldwide Application Security Project (OWASP) has released work focused on agentic applications, reflecting the fact that this is becoming a repeatable security category, not a one-time headline.

Taken together, the findings point the same way:

- Adoption is faster than formal control rollout.

- Unsanctioned usage is already common.

- Misconfiguration is a major exposure driver, even without malware.

- Persistent, tool-using agents create new risk patterns that traditional controls weren’t built around.

What Enterprise Leaders Should Assess Now

Enterprises don’t need a panic response. They need an assessment approach that treats agents as a new operational shape, not a novelty.

Here’s what’s worth focusing on.

Discovery and inventory

You can’t manage what you can’t see. And with agent tools, “see” has to include more than installed software.

A practical inventory should cover:

- Endpoints where agents are running (developer machines, admin workstations, shared servers)

- Chat channels connected to agents (Slack, Teams, Telegram, Discord, and any others)

- Third-party integrations and credentials the agent can access

- Whether the deployment is local-only or exposed through networking layers like proxies

This isn’t about banning experimentation. It’s about knowing where it lives.
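The inventory items above map naturally onto a simple record per deployment. The following is a rough sketch, assuming a hypothetical `AgentDeployment` structure and a deliberately simple review rule (network exposure combined with credential access); real inventories will need more fields and a rule that fits the organisation.

```python
from dataclasses import dataclass, field


@dataclass
class AgentDeployment:
    """One inventory entry per place an agent is running (hypothetical schema)."""
    endpoint: str                                          # e.g. "dev-laptop-014"
    channels: list[str] = field(default_factory=list)      # connected chat channels
    credentials: list[str] = field(default_factory=list)   # names of secrets it can reach
    exposed_via_proxy: bool = False                         # local-only vs network-exposed


def needs_review(deployment: AgentDeployment) -> bool:
    """Flag deployments that are network-exposed and can reach credentials."""
    return deployment.exposed_via_proxy and bool(deployment.credentials)


inventory = [
    AgentDeployment("dev-laptop-014", channels=["Slack"]),
    AgentDeployment("shared-build-01", channels=["Telegram"],
                    credentials=["ci-deploy-key"], exposed_via_proxy=True),
]
print([d.endpoint for d in inventory if needs_review(d)])
```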

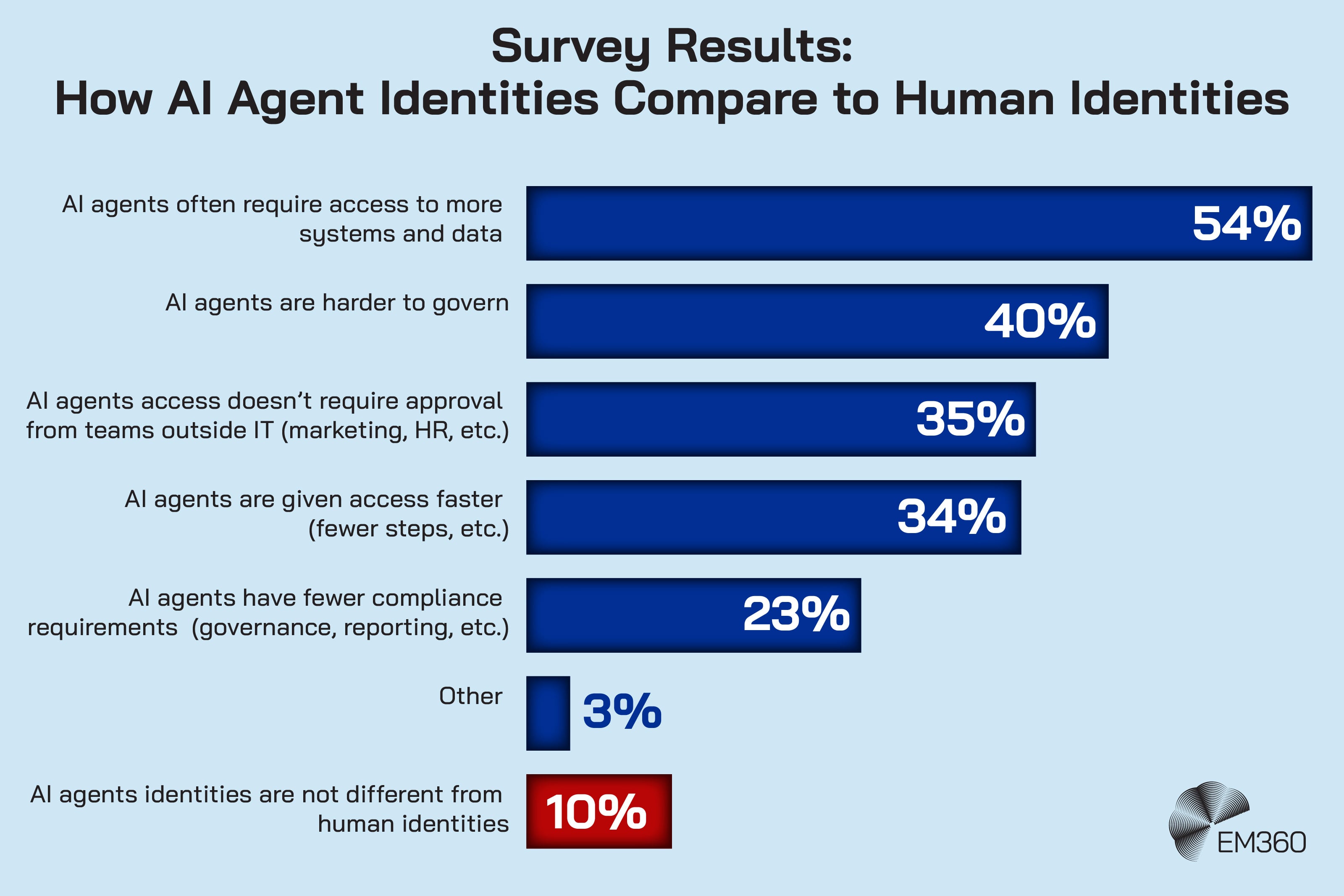

Identity and access boundaries

Most enterprise controls are built around identity and access management (IAM). Agents complicate IAM because they can act on behalf of a user, but they aren’t the user.

Start with the basics:

- Don’t run agents under highly privileged accounts unless there’s a strict reason

- Separate experimentation environments from production access paths

- Treat agent credentials like service credentials, with rotation and revocation plans

- Decide what “ownership” means if multiple people can instruct the same agent

If you can’t describe what the agent is allowed to do in one (short) paragraph, it probably has too much access.

Messaging channel governance

Messaging apps weren’t designed as command-and-control layers. But that’s how they can function when an agent listens to them.

Governance here isn’t complicated, but it needs to exist:

- Who is allowed to message the agent?

- From which channels?

- With what authentication, verification, or allowlists?

- What logs exist for agent actions triggered through chat?

If you treat messaging platforms as “just chat”, you’ll miss the real control surface.
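The four governance questions above can be sketched as an allowlist check that also records every decision. Everything here is hypothetical (`ChannelPolicy`, `authorize`, the identifiers in the example) and assumes the sender identity has already been verified by the messaging platform; it shows the shape of the control, not how any particular agent implements it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChannelPolicy:
    """Who may instruct the agent, and from which channels (hypothetical schema)."""
    allowed_channels: frozenset[str]
    allowed_senders: frozenset[str]


def authorize(policy: ChannelPolicy, channel: str, sender: str,
              audit_log: list[dict]) -> bool:
    """Allow a message only if both channel and sender are allowlisted; log either way."""
    allowed = channel in policy.allowed_channels and sender in policy.allowed_senders
    audit_log.append({"channel": channel, "sender": sender, "allowed": allowed})
    return allowed


policy = ChannelPolicy(frozenset({"slack:#ops-agent"}), frozenset({"alice"}))
log: list[dict] = []
print(authorize(policy, "slack:#ops-agent", "alice", log))
print(authorize(policy, "telegram:dm", "alice", log))
```

Logging denied requests as well as allowed ones matters here: the denied entries are often the first sign that someone is probing the channel.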

Configuration and hardening controls

This is where most avoidable risk shows up, because misconfiguration is usually the easiest path to exposure.

Hardening priorities tend to cluster around:

- Binding local services to localhost unless there’s a controlled need to expose them

- Using authentication on any agent gateway or control interface

- Restricting filesystem access and limiting what tools the agent can invoke

- Isolating agent execution using sandboxing or constrained environments where possible

- Vetting extensions, skills, and third-party integrations like you would any software supply chain
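Two of the priorities above, restricting filesystem access and limiting which tools can be invoked, can be illustrated with a short sketch. The workspace path, the tool names, and both functions are assumptions made for the example, not OpenClaw settings. An application-level check like this also complements operating-system sandboxing rather than replacing it.

```python
from pathlib import Path

# Hypothetical allowlist of tool names the agent may invoke.
ALLOWED_TOOLS = frozenset({"read_file", "search_notes"})


def is_within_root(root: str, candidate: str) -> bool:
    """Return True if candidate resolves to a path inside root (blocks ../ escapes)."""
    root_path = Path(root).resolve()
    target = (root_path / candidate).resolve()
    return target == root_path or root_path in target.parents


def may_invoke(tool: str, root: str, path: str) -> bool:
    """Permit a tool call only for an allowlisted tool acting inside the workspace."""
    return tool in ALLOWED_TOOLS and is_within_root(root, path)


workspace = "/tmp/agent-workspace"
print(may_invoke("read_file", workspace, "notes/todo.txt"))
print(may_invoke("read_file", workspace, "../../etc/passwd"))
print(may_invoke("run_shell", workspace, "notes/todo.txt"))
```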

Security teams are already publishing hardening guidance for OpenClaw-style deployments, which is a signal in itself that this category is becoming a repeatable enterprise concern.

Final Thoughts: The Line Between Assistant and Access Is Thinner Than It Looks

Clawdbot’s rapid rise didn’t create a new enterprise risk so much as expose one that was already forming.

When an assistant runs locally, stays active, and can be reached through everyday messaging channels, it stops being “just a tool”. It starts behaving like an access layer. That shift can happen quietly, especially when adoption is driven by individuals trying to move faster, not by teams trying to bypass controls.

The next wave won’t look exactly like Clawdbot. Some agents will be viral. Many won’t. But the pattern will repeat: more autonomy, more integrations, more background operation, and more blurred boundaries between helpful and powerful.

If you’re trying to lead through that shift, the goal isn’t to shut innovation down. It’s to be clear-eyed about where productivity ends and privileged access begins. That’s the line we’ll keep tracking at EM360Tech, as autonomous agents move from experimentation into real enterprise workflows.
