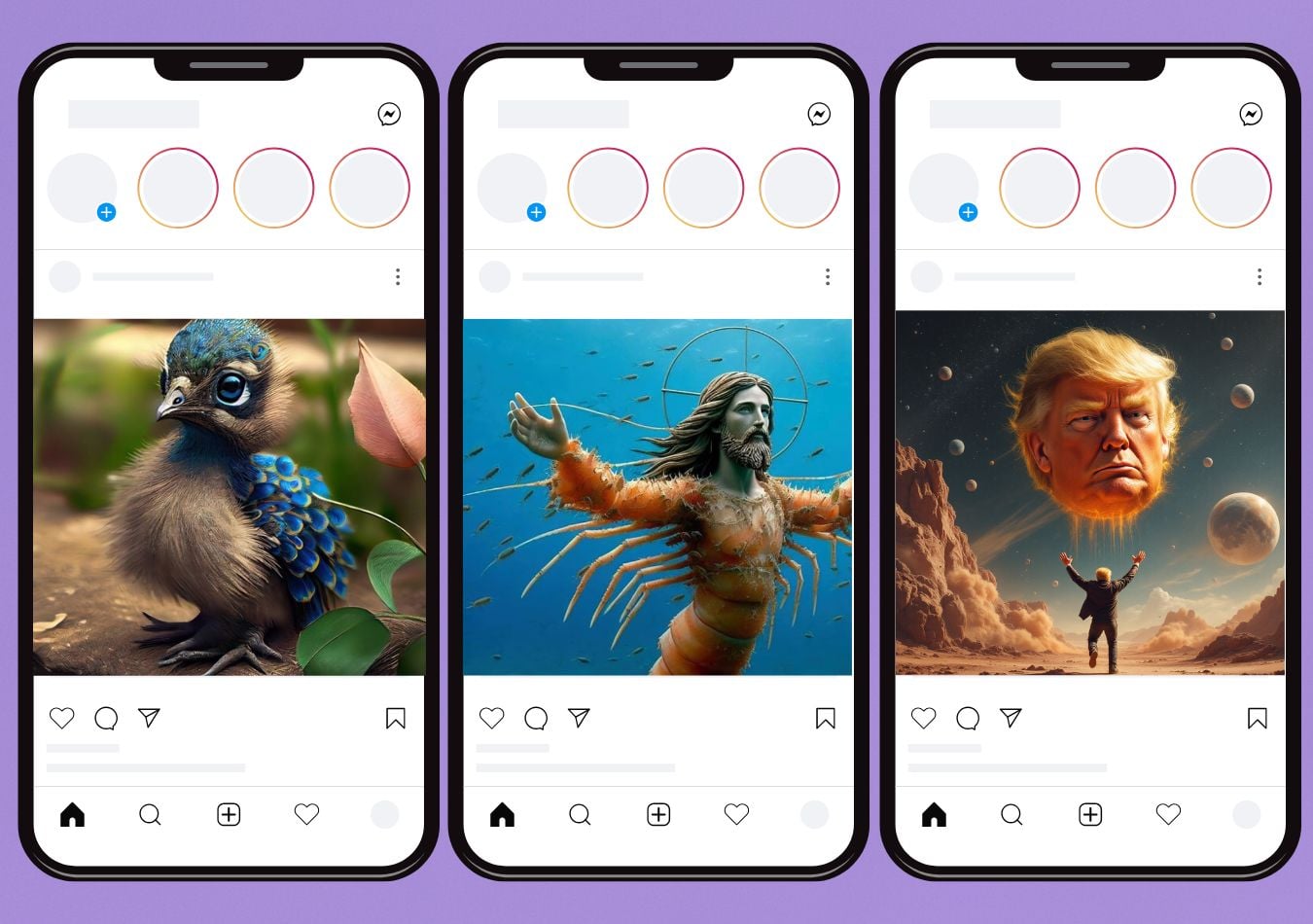

You may have noticed that the internet has… changed.

So-called AI slop is saturating once-creative internet haunts.

Tools that pitched their value as democratising the creative process, like AI video generators and AI image generators, seem to have done the opposite: taking the creativity out of once-loved platforms, stripping away nuance and originality, and contributing to an internet that… well… feels dead.

The platforms even seem to encourage this, with TikTok’s AI Alive video generator, Meta’s inbuilt video and image generators running on Llama, and X’s Grok Imagine.

Since the widespread adoption of artificial intelligence, it's no secret that content has changed. On paper it’s a manager's dream, with output that is faster, scales higher, and spreads wider than ever.

The issue is that most of it is not meaningful. Human voices are drowned out by algorithmic noise.

You may have already seen the term “AI slop” in the comments on viral videos, social feeds, or discussion boards.

In this article, we’ll explain exactly what AI slop is, show you examples of it in action, explore why it spreads, and discuss how to limit it in your feeds.

We’ll even walk through how to make your own AI slop video, because understanding the mechanics is the first step toward recognising, and perhaps curbing, the phenomenon.

What is AI Slop?

AI slop is the term used to describe the massive volume of low-quality, AI-generated content. It lacks effort, quality and meaning, and is designed for quick, passive viewership.

Around 51% of global internet traffic is bots, with 37% of these identified as being used for destructive purposes.

What is the Point of AI Slop?

Attention is money.

AI slop exists because the economic structure of the internet rewards scale, not substance.

AI slop goes beyond a type of content - it’s a whole production model and economic strategy.

It’s the industrialisation of the full content cycle: automated scripts, visuals, audio, posting, A/B testing and commenting.

The content is engineered for metrics rather than meaning, but why?

In content ecosystems, platforms monetise time-on-site, and advertisers pay for impressions and engagement. Algorithms prioritise measurable signals such as watch time, shares and comments, and a higher volume of these signals increases the statistical odds of virality.

So naturally, what thrives is what is measurable.

AI slop is measurable.
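To make the ranking logic above concrete, here is a minimal sketch of an engagement-weighted feed. It is not any real platform's algorithm: the `Post` fields, the `WEIGHTS` values and the two sample posts are all hypothetical, chosen only to show that a ranker built on measurable signals has no place for accuracy or effort.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    watch_seconds: float
    shares: int
    comments: int
    is_accurate: bool  # recorded here, but never read by the ranker


# Hypothetical weights: real platforms do not publish theirs.
WEIGHTS = {"watch_seconds": 1.0, "shares": 20.0, "comments": 5.0}


def engagement_score(post: Post) -> float:
    """Weighted sum of the measurable signals only."""
    return (
        WEIGHTS["watch_seconds"] * post.watch_seconds
        + WEIGHTS["shares"] * post.shares
        + WEIGHTS["comments"] * post.comments
    )


def rank(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


# Made-up sample data for illustration.
feed = rank([
    Post("Well-researched explainer", 900.0, 10, 15, True),
    Post("Talking food clip", 1200.0, 40, 60, False),
])
```

With these made-up numbers the clip scores 2300 against the explainer's 1175, so it is shown first. Nothing in `engagement_score` looks at `is_accurate`, which is the point: what cannot be measured cannot be rewarded.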

AI agents even claim to now operate at a step before attention, leveraging the ‘intention economy’ wherein they ‘know’ your intentions before you do.

The cost of producing AI slop is near zero, and gains per post don’t need to be high when impressions are high.

This is a fully optimised form of engagement farming.

Marginal gains per post don’t need to be high because, at scale, even tiny engagement rates become profitable. This is called probabilistic monetisation.
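The arithmetic behind that claim can be sketched in a few lines. Every figure below is an assumption invented for illustration (post counts, impressions, engagement rates, revenue per engagement and cost per post), not measured data; the function name `expected_profit` is likewise hypothetical.

```python
def expected_profit(posts, impressions_per_post, engagement_rate,
                    revenue_per_engagement, cost_per_post):
    """Expected profit for a batch of posts: total revenue minus total cost."""
    revenue = (posts * impressions_per_post
               * engagement_rate * revenue_per_engagement)
    cost = posts * cost_per_post
    return revenue - cost


# Hypothetical high-volume, near-zero-cost strategy.
slop = expected_profit(
    posts=10_000, impressions_per_post=2_000,
    engagement_rate=0.002, revenue_per_engagement=0.01,
    cost_per_post=0.01,
)

# Hypothetical low-volume, high-effort strategy.
crafted = expected_profit(
    posts=10, impressions_per_post=50_000,
    engagement_rate=0.05, revenue_per_engagement=0.01,
    cost_per_post=200.0,
)

print(f"slop: {slop:.2f}, crafted: {crafted:.2f}")
```

Under these assumptions the slop batch comes out at about 300 in profit while the crafted batch loses about 1750, even though each crafted post engages 25 times more of its audience. The gap comes entirely from volume and cost per post, not from quality.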

The Impact of AI Slop

Repetitive content flooding a platform where you are mindlessly scrolling begins to shape your own perceptions and attitudes. You might not believe it, but AI slop can be a powerful propaganda tool when deployed at scale.

The concept of “manufactured consent” traditionally referred to media systems reinforcing dominant narratives through selective amplification.

In the AI era, amplification no longer requires newsroom coordination; it can be automated instantly.

If enough AI-generated images, clips, or stories circulate around a particular event, conflict, or political figure, volume alone can create the impression of legitimacy. If you try to fact check, which most scrollers don’t, you will see thousands of similar results.

Familiarity breeds perceived truth. Repetition breeds credibility. And algorithmic systems, optimised for engagement rather than verification, accelerate that process.

Some political leaders intentionally erode trust by amplifying these fake visuals.

Emotionally and politically charged content easily bypasses scrutiny when it already aligns with existing narratives.

When production is frictionless and distribution is automated, flashy, emotional misinformation scales faster than well-researched corrections.

AI Slop Examples

AI slop shows up everywhere, often in ways that feel oddly familiar yet slightly off.

Music & art

AI-generated tracks mimic popular artists. Produced by algorithms, they hit hollow reproductions of the emotional notes of human creativity and lack genuine artistic intent.

Last year, Christie's Augmented Intelligence Auction faced global backlash from artists who argue that it legitimises copyright infringement and is ‘art theft’.

Video essays

YouTube is filled with automated essays or commentary videos, often stitched together from existing sources.

They sound coherent at first but are largely derivative and formulaic. The key problem comes from hallucinated sources perpetuating each other ad nauseam.

Short-form content

Quick, bizarre videos, like talking food tutorials or absurd “Italian brain rot” clips, are designed to fry attention spans while being just strange enough to hook you into watching the next one.

All of this content is generated by AI trained on vast amounts of pre-existing creative work, such as YouTube videos, physical art pieces, films, or even Reddit threads, without attribution.

The result is a flood of derivative, algorithmically optimised content that replicates human culture at scale.

Why is AI Slop Bad?

AI slop isn’t just annoying; it has real consequences for truth, knowledge, and society.

AI gets things wrong, a lot.

When undisclosed AI-written articles are used as sources for AI-generated videos or other content, these errors can snowball.

One false fact leads to another, creating a self-reinforcing loop, an ouroboros of misinformation where entirely invented claims are cited as if they were true.

AI will often try to “please” the user, generating content that aligns with prompts rather than reality. Careless use degrades human knowledge on a mass scale.

Training and running AI models consumes significant energy. While some AI applications, like medical imaging for cancer detection, have clear benefits, producing endless AI slop is wasteful.

Every frivolous video, meme, or automated essay has a significant environmental footprint: energy and water are used for outputs that add little value and sometimes actively spread false information.

There is also a real cognitive impact. AI slop is engineered to grab attention, often at the expense of depth or nuance.

Endless streams of bizarre, sensational, or derivative content can shorten attention spans, fragment focus, and make it harder for people to engage with complex ideas that matter.

AI slop creates systemic risks: misinformation, environmental strain, cognitive overload, and the erosion of human knowledge.

How to Stop AI Slop?

With every revamped model it gets harder and harder to identify AI slop, though there are some strategies individuals and organisations can use to reduce its impact.

Check facts

Always check where information comes from. AI-generated content often cites itself or other unverified AI outputs.

Cross-reference with trusted sources. Even small habits of verification help break the self-reinforcing loop of false facts.

Train your algorithms

Algorithms reward attention. Scroll past content you don’t want to see, mark it as “not interested”, and spend time with content you consciously want to see.

Follow creators and sources you trust, which helps algorithms prioritise high-quality human-led content over automated noise.

Log off

Be deliberate with content consumption. Short-form, endlessly scrollable feeds are designed for engagement, not insight.

Intentionally step away from algorithmic feeds to engage with slower, human-curated media: books, long-form journalism, podcasts, and verified sources.

How To Spot AI Slop

AI slop is getting harder to spot, but it is usually:

- Mass-produced

- Emotionally manipulative or sensational

- Visually striking but shallow

- Factually questionable or completely fabricated

- Designed for frictionless scrolling

- Lacking human authorship accountability

How To Make AI Slop?

AI slop doesn’t just appear; it’s the product of mass automation, scale, and algorithmic optimisation.

Understanding how it’s created can help you recognise it.

Training data is gathered

The models behind AI slop are trained on massive datasets of pre-existing content: YouTube videos, images, music, text, Reddit threads, and more. These datasets give AI a model of human creativity, but the content is often used without attribution. The output is derivative; it mimics patterns rather than producing original ideas.

Generative AI tools are employed

Creators leverage AI video generators, image generators, and text models. These tools can produce content quickly and at scale. Unlike traditional creative work, AI slop prioritises volume and engagement over nuance or originality.

Content is optimised for engagement metrics

AI slop is designed to “win” on platforms. That means eye-catching visuals, short, engaging formats, clickable titles or captions, and patterns known to increase watch time, shares, and likes.

The content is engineered to trigger attention, not to inform or entertain in a meaningful way.

Automated distribution

Posting, A/B testing, and even automated engagement are often scripted. This creates a self-reinforcing feedback loop where content that performs “well enough” is amplified further, regardless of quality.
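The feedback loop described above can be illustrated with a toy simulation. This is a deliberately simplified model, not how any particular platform allocates reach: it assumes a fixed pool of impressions (`total_reach`) that is re-split each round in proportion to the engagement each post earned in the previous round. The function name and all numbers are hypothetical.

```python
def simulate_amplification(engagement_rates, rounds, total_reach=10_000):
    """Re-split a fixed impression pool each round by previous engagement.

    engagement_rates: per-post fraction of viewers who engage (assumed fixed).
    Returns the reach of each post after the final round.
    """
    n = len(engagement_rates)
    reach = [total_reach / n] * n  # every post starts with an equal share
    for _ in range(rounds):
        engagement = [r * rate for r, rate in zip(reach, engagement_rates)]
        total = sum(engagement)
        if total == 0:
            break  # nobody engaged, so there is nothing to amplify
        reach = [total_reach * e / total for e in engagement]
    return reach


# Two hypothetical posts: one engages 2% of viewers, the other 3%.
final_reach = simulate_amplification([0.02, 0.03], rounds=5)
print([round(r) for r in final_reach])
```

In this model a one-percentage-point edge compounds: after five rounds the second post holds roughly 88% of the impressions. Quality never enters the calculation; only the engagement rate does.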
