For years, Formula 1 teams have chased the same dream every engineering-heavy industry chases. Find the edge. Remove friction. Optimise the system until performance stops feeling like luck and starts feeling repeatable.

That’s sensible right up until the optimisation changes the thing itself. In racing, that can mean cars so tuned to aerodynamic performance that the airflow behind them becomes harder for the next car to work with. The edge doesn’t just improve competition. It reshapes it.

That same tension is showing up far beyond sport. Enterprises now simulate supply chains, model risk, predict demand, sequence production, and test decisions before anyone commits real money or real time to them. But some of those problems don’t just get bigger as more variables are added. They become structurally harder.

That matters because there comes a point where throwing more classical compute at the problem stops being a serious answer and starts being a very expensive coping mechanism.

That’s where quantum computing starts to matter. Not as a sci-fi shortcut. Not as a replacement for everything that already works. As a response to a very specific kind of pressure: when simulation, optimisation, and decision-making become too complex for sequential systems to handle cleanly or fast enough.

The future question isn’t whether every business needs quantum. It’s which problems are beginning to outgrow the tools we already trust.

Why Some Problems Outgrow Classical Computing

Classical systems are extraordinarily capable, but they still work through problems in a fundamentally step-by-step way. That's not a flaw. It’s why modern computing exists. The trouble starts when the number of possible states, routes, scenarios, or combinations grows so fast that the system spends more time drowning in options than producing a useful answer.

This is the heart of computational complexity. The problem isn't simply that the computer is slow. The problem is that the search space becomes absurd.
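A minimal sketch makes that growth concrete. It counts the distinct round trips through a set of delivery stops using the standard textbook formula; the function name `route_count` and the stop counts are illustrative assumptions, not figures from any vendor or study cited here.

```python
from math import factorial

def route_count(stops: int) -> int:
    """Distinct round trips visiting every stop once, from a fixed start.

    Uses the textbook count (n - 1)! / 2, which treats a route and its
    reverse as the same trip.
    """
    if stops < 3:
        return 1
    return factorial(stops - 1) // 2

# Illustrative stop counts only.
for n in (5, 10, 15, 20):
    print(f"{n:>2} stops -> {route_count(n):,} possible routes")
```

Five stops give 12 possible routes. Twenty stops give roughly 6 × 10^16. Faster hardware shortens each individual check, but it does nothing to slow that curve.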

That distinction matters for enterprise leaders because it changes the business conversation. If a routing model, risk engine, or simulation platform takes too long to return an answer, the issue may not be poor engineering or underpowered infrastructure. It may be that the problem itself is becoming intractable at classical scale.

IBM’s framing is useful here. Quantum computing isn't being developed to replace laptops or standard analytics platforms. It's being developed to tackle problems classical systems cannot solve, or cannot solve fast enough to be genuinely useful.

That's also why the market conversation has matured. McKinsey says the sector is moving away from obsessing over raw qubit counts and towards stability, error correction, and practical workflows.

The interesting question now is less “How many qubits do you have?” and more “What kind of problem can this system actually help solve?” It’s a much healthier question. Also, frankly, a much less annoying one.

Sports Analytics Shows The Problem First

Sport is a useful place to watch this play out because the constraints are visible. You can see the optimisation. You can see the payoff. And you can see the point where the solution starts to alter the experience itself.

That makes sport a clean microcosm of a much wider enterprise issue: when systems become increasingly data-rich, increasingly model-driven, and increasingly shaped by prediction rather than reaction.

Formula 1 and the limits of optimisation

Formula 1 is a strong example because it shows how engineering optimisation can solve one problem while creating another. Teams optimise for downforce, stability, and cornering performance. The cars get faster. The models get better. The design converges around what works.

But that same aerodynamic optimisation can make following another car harder because turbulent air disrupts the performance of the car behind. The result is familiar to anyone who has ever watched one team optimise itself into a wall of dominance. The system becomes more efficient and less open. Performance rises. Variability falls.

That's not just a racing story. It's a systems story. In business, over-optimised processes can reduce waste, standardise quality, and improve forecasting. They can also make the organisation less flexible, less exploratory, and more brittle when conditions change.

A supply chain tuned too tightly for normal operations struggles when disruption hits. A model trained too narrowly on yesterday’s conditions can misread tomorrow’s market. Efficiency is valuable. So is room to move.

Predictive playbooks and simulation in football

American football shows a different version of the same pressure. The NFL’s Next Gen Stats system, developed with Zebra Technologies and running on AWS, captures player location, speed, distance travelled and acceleration 10 times per second, creating more than 200 new data points on every play of every game.

The league now uses that data to derive advanced metrics through machine learning and to support planning, analysis, and decision-making.

That's already a serious simulation environment, and it's still only the beginning. Once real-time tracking is combined with predictive modelling, coaches and analysts are no longer just reviewing what happened. They're building probability maps around what’s likely to happen next.

This is where sport starts to look a lot like logistics, finance, or infrastructure planning. Every extra player state, movement path, strategic choice, and counter-move expands the problem space. Useful models become harder to build, harder to run, and harder to trust at speed.

That doesn't mean quantum is already calling live plays. It means this is exactly the kind of decision environment where classical systems begin to strain first.

Where Quantum Computing Changes The Equation

The simplest way to think about quantum computing applications is this: quantum systems handle information differently, which makes them promising for certain kinds of optimisation and simulation problems that become unwieldy for classical systems.

That doesn't mean they “try everything at once” in the cartoon version people love to repeat. It means they use quantum mechanics to represent and manipulate problems in a different computational framework. For the right problem classes, that can matter a great deal.

Just as importantly, the industry isn't betting on a clean handover from classical to quantum. IBM’s roadmap and March 2026 quantum-centric supercomputing blueprint both centre on hybrid quantum-classical systems where QPUs work alongside CPUs and GPUs in coordinated workflows.

That matters because it's a far more plausible enterprise path. Quantum handles the hard part it's suited for. Classical systems handle the orchestration, storage, pre-processing, post-processing, and everything else enterprises still need to run.
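As a rough sketch of that division of labour, the hard step can be treated as a pluggable backend inside an otherwise classical pipeline. Everything named here (`run_workflow`, `brute_force_core`, the toy objective) is a hypothetical illustration. The "core" is a classical brute-force stand-in, not a call to any real quantum service or vendor API.

```python
from itertools import product
from typing import Callable, Dict, Tuple

Qubo = Dict[Tuple[int, int], float]  # pairwise weights of a binary objective
Bits = Tuple[int, ...]

def energy(qubo: Qubo, bits: Bits) -> float:
    """Cost of one candidate answer under the binary quadratic objective."""
    return sum(w * bits[i] * bits[j] for (i, j), w in qubo.items())

def brute_force_core(qubo: Qubo, n: int) -> Bits:
    """Classical stand-in for the hard step (NOT a real QPU call)."""
    return min(product((0, 1), repeat=n), key=lambda b: energy(qubo, b))

def run_workflow(qubo: Qubo, n: int, core: Callable[[Qubo, int], Bits]) -> dict:
    # Pre-processing (classical): drop zero-weight terms.
    cleaned = {k: w for k, w in qubo.items() if w != 0}
    # Hard core: the step a hybrid system would hand to a quantum backend.
    bits = core(cleaned, n)
    # Post-processing (classical): score and package the answer.
    return {"bits": bits, "energy": energy(cleaned, bits)}

# Toy objective: reward picking option 0 or option 1, penalise picking both.
toy = {(0, 0): -1.0, (1, 1): -1.0, (0, 1): 2.0, (1, 0): 0.0}
result = run_workflow(toy, n=2, core=brute_force_core)
print(result)
```

The design point is the `core` parameter. The surrounding orchestration stays the same whichever solver sits behind it, which is the sense in which a hybrid approach lets enterprises keep the classical stack they already run.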

This is also why error correction and stability have become so important. Google’s Willow work and the related Nature paper focused on reducing errors as systems scale, while NVIDIA’s April 2026 Ising announcement focused on AI models for calibration and quantum error-correction decoding.

The field is telling us, quite loudly now, that useful quantum computing depends less on flashy demos and more on making these systems reliable enough to join real workflows. That's a much more enterprise-relevant story than “one day a quantum computer will solve everything in five minutes.”

Future Applications Beyond Sport

The strongest future applications aren’t the ones that sound the most futuristic. They're the ones where classical systems are already close to their practical limits, where the cost of approximation is high, and where better answers would change real outcomes.

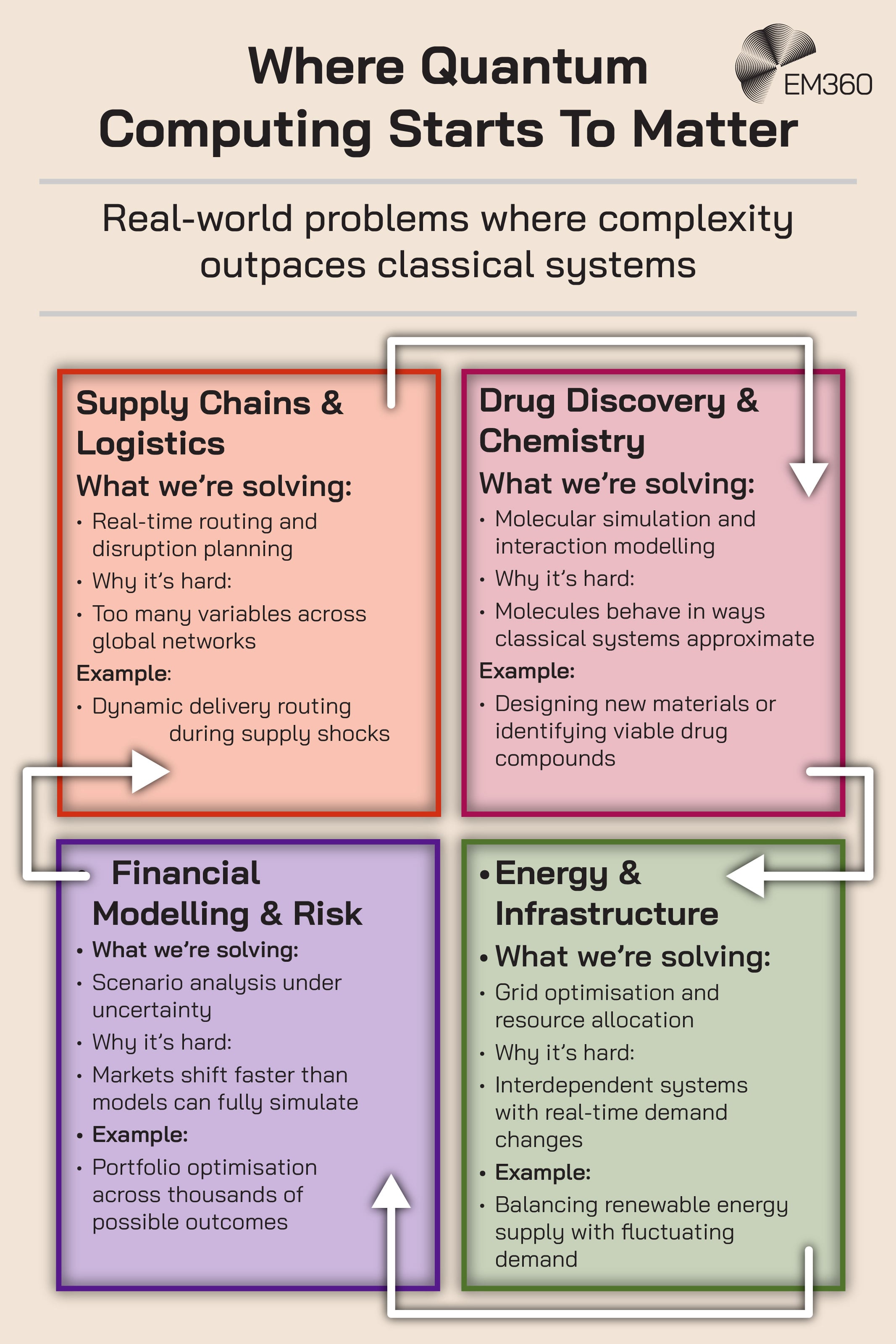

Supply chain and logistics optimisation

Supply chains are full of interconnected decisions that refuse to stay still. Routing affects inventory. Inventory affects production. Production affects labour, maintenance, and supplier exposure.

Add geopolitical shocks, weather disruption, cyber incidents, and volatile demand, and the optimisation problem becomes less like a spreadsheet and more like trying to navigate a maze while the walls keep moving.

The World Economic Forum’s 2025 manufacturing and supply chains paper makes the case clearly: traditional digital tools are hitting limits in the face of growing complexity, and early industrial quantum use cases are already appearing in scheduling, logistics planning, and production sequencing.

This isn't just theory. In March 2025, D-Wave and Ford Otosan said a hybrid quantum application had been deployed in production to improve vehicle sequencing for Ford Transit manufacturing.

Ford Otosan produces more than 1,500 vehicle variants, and the companies positioned the deployment as something that went beyond what purely classical methods had achieved.

That's the kind of case study enterprise readers should pay attention to. Not because it proves universal quantum transformation is here, but because it shows where optimisation modelling is already reaching for new tools.
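To see why sequencing strains classical methods, here is a toy sketch. It is not the D-Wave and Ford Otosan application, whose internals aren't described here. The build queue, the paint colours, and the "count the colour changes" cost are all invented for illustration, and the solver is plain exhaustive search.

```python
from itertools import permutations
from math import factorial

# Hypothetical build queue: (variant id, paint colour). Not Ford Otosan data.
queue = [("A", "white"), ("B", "blue"), ("C", "white"),
         ("D", "red"), ("E", "blue"), ("F", "white")]

def changeovers(sequence) -> int:
    """Count how often the paint colour changes between neighbouring vehicles."""
    return sum(1 for prev, nxt in zip(sequence, sequence[1:]) if prev[1] != nxt[1])

# Exhaustive search: fine for 6 vehicles, hopeless for a real production line.
best = min(permutations(queue), key=changeovers)

print("original changeovers:", changeovers(queue))
print("best changeovers:", changeovers(best))
print("orderings checked:", factorial(len(queue)))
```

Six vehicles mean 720 orderings, which a laptop checks instantly. The count grows factorially with queue length, and real sequencing adds many more constraints than paint colour. That is the gap heuristic, and now hybrid, solvers are trying to close.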

Drug discovery and molecular simulation

Drug discovery is one of the most cited quantum use cases for a reason. Molecules are quantum systems. Simulating their behaviour accurately is hard, expensive, and still shaped by approximation. Classical systems can do remarkable work here, but not without trade-offs in scale, fidelity, or runtime.

IBM’s March 2026 announcement about a half-Möbius molecule and its March 2026 materials science update both position quantum simulation as a practical way to study molecular and material behaviour that's difficult to model classically.

That doesn't mean pharmaceutical breakthroughs suddenly become instant. It means the modelling layer could become more faithful, which matters because better simulation upstream can improve how scientists narrow candidate spaces, test assumptions, and understand interactions before real-world experimentation begins.

That's a quieter claim than the hype version, but it's also the one that stands up.

Financial modelling and risk analysis

Finance has lived in the land of scenario analysis for years, so it makes sense that it remains one of the sectors McKinsey identifies as likely to see strong quantum growth. Portfolio optimisation, pricing under uncertainty, fraud detection, and risk modelling all involve massive state spaces and trade-offs under incomplete information.

Those are exactly the kinds of problems that become expensive to approximate badly and difficult to solve well.

There’s also a more immediate financial angle to quantum, and it's less glamorous. Security. Capgemini’s July 2025 research found that 65% of organisations were concerned about harvest-now, decrypt-later attacks, and nearly two-thirds considered quantum computing the most critical cybersecurity threat in the next three to five years.

So while one future application is better financial modelling, another is simply making sure the financial sector is still protected when today’s encryption is no longer enough.

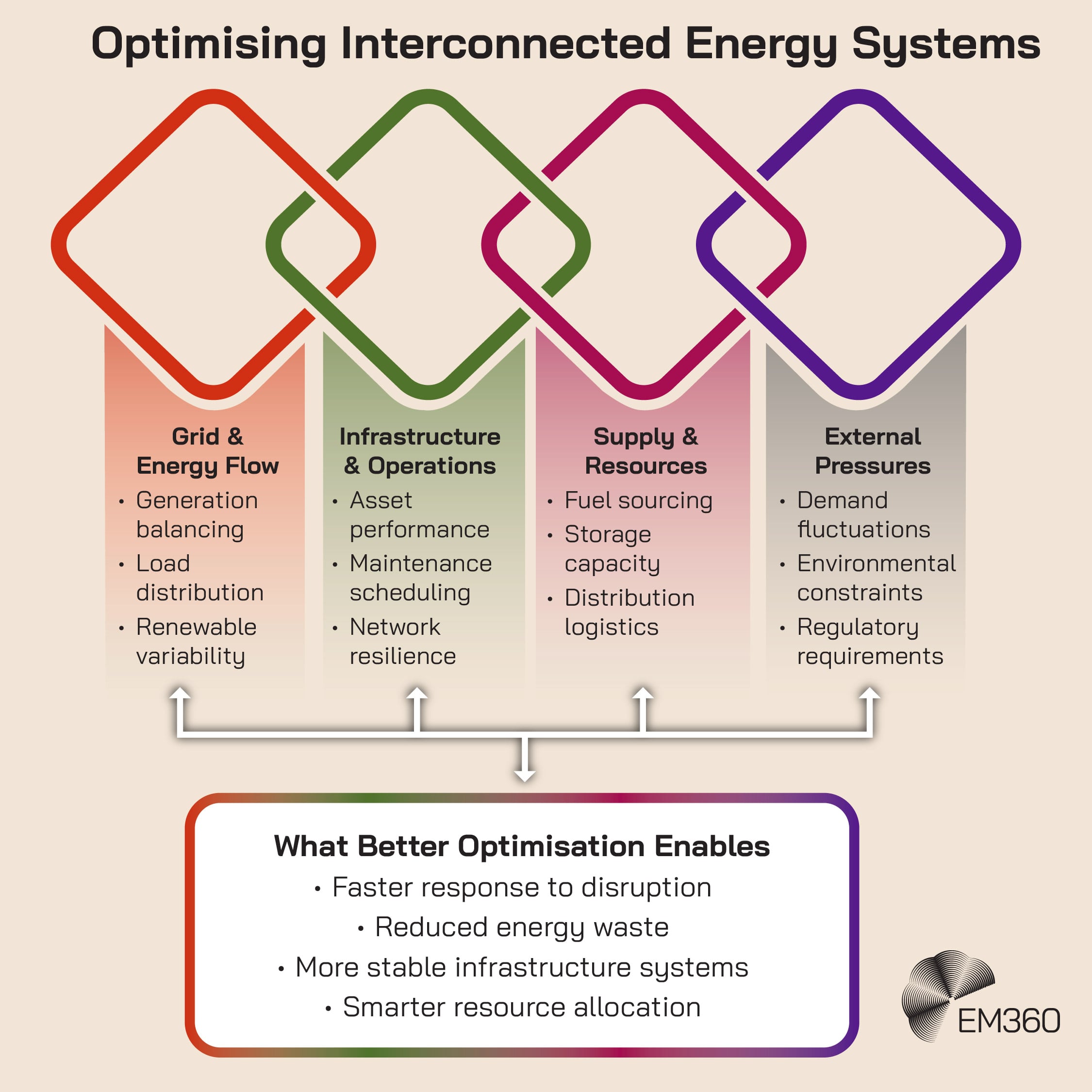

Energy and infrastructure systems

Energy systems are one of the clearest examples of why this matters. Grid optimisation, resource allocation, generation balancing, network resilience, and sustainability constraints all sit on top of each other. They're not separate decisions. They're one interdependent system pretending to be several neat ones.

Quantum doesn't remove that complexity, but it may improve how planners model and respond to it.

The World Economic Forum’s industrial report ties quantum directly to resilience, precision, and security in critical systems, while IBM’s quantum-centric supercomputing work continues to position optimisation, chemistry, and materials science as core targets.

That makes this especially relevant to organisations trying to scale responsibly. Better optimisation in energy and infrastructure doesn't just mean efficiency. It can also mean less waste, faster response to disruption, and better decisions under environmental and operational constraints.

That's a much more interesting enterprise question than whether quantum is “the next big thing.” The bigger question is whether our current systems are enough for the complexity we keep creating.

The Risk Of Perfect Optimisation

There is, however, a catch. The more we optimise, the more we risk converging on systems that are efficient but less adaptable. Sport makes this visible because people notice when the game becomes too predictable. Enterprises often notice later, because predictability can look like maturity right up until conditions change.

This is the real risk of over-optimisation. Models can narrow decision-making so effectively that human judgement gets treated as noise. Teams can become so reliant on simulations and automated recommendations that they stop asking whether the system is still aligned with reality.

Governance gets thinner. Curiosity gets weaker. The system keeps producing answers, so everyone relaxes. That's usually when trouble starts.

The OECD’s March 2026 paper is useful here because it frames quantum readiness as capability building, not blind adoption. It highlights barriers including limited maturity, unclear use cases, high access costs, and talent shortages. That's a reminder enterprises need to hear. New computational power doesn't remove the need for judgement. It increases it.

The more sophisticated the system, the more carefully leaders need to think about where to trust it, where to challenge it, and where to keep humans firmly in the loop.

Final Thoughts: Optimisation Changes The System, Not Just The Outcome

The real story here isn't that quantum computing will let organisations simulate everything. It's that our appetite for simulation keeps expanding, and some of the problems we care about most are beginning to outgrow the systems we built to manage them.

That's why quantum matters. Not because it replaces classical computing. Not because it makes complexity disappear. Because it may expand the range of problems we can approach with more useful models, better simulations, and more realistic optimisation.

McKinsey’s market outlook, IBM’s quantum-centric architecture, and OECD’s readiness work all point in the same direction. The near future belongs to organisations that understand where quantum fits, where it doesn't, and how hybrid workflows are likely to shape the transition.

The harder question is what happens after that. Every optimisation changes the system around it. Sometimes that makes the system stronger. Sometimes it makes it narrower.

The organisations that benefit most from quantum will not be the ones chasing novelty for its own sake. They will be the ones disciplined enough to balance precision with adaptability, and ambitious enough to design systems that can evolve instead of simply converge.

For leaders trying to make sense of where quantum strategy fits into real enterprise decision-making, EM360Tech continues to bring together the research, context, and conversations that make the next wave of computing easier to think about before it becomes impossible to ignore.
