Data collected by enterprises is crucial to helping them make better business decisions. But simply collecting high-quality and reliable data isn’t enough for analytics or business intelligence – especially as the volume of data increases.

This leads to two-thirds of data going unused in an age where data can unlock valuable insights, drive innovation and give a company the competitive edge it needs to survive and thrive in today’s business landscape.

And this data doesn’t go unused because businesses don’t know how to leverage it, but simply because they can’t find it.

That’s where data aggregation and aggregators enter the picture, allowing businesses big and small to find the valuable data they store and leverage it to make better, data-driven decisions.

What is data aggregation?

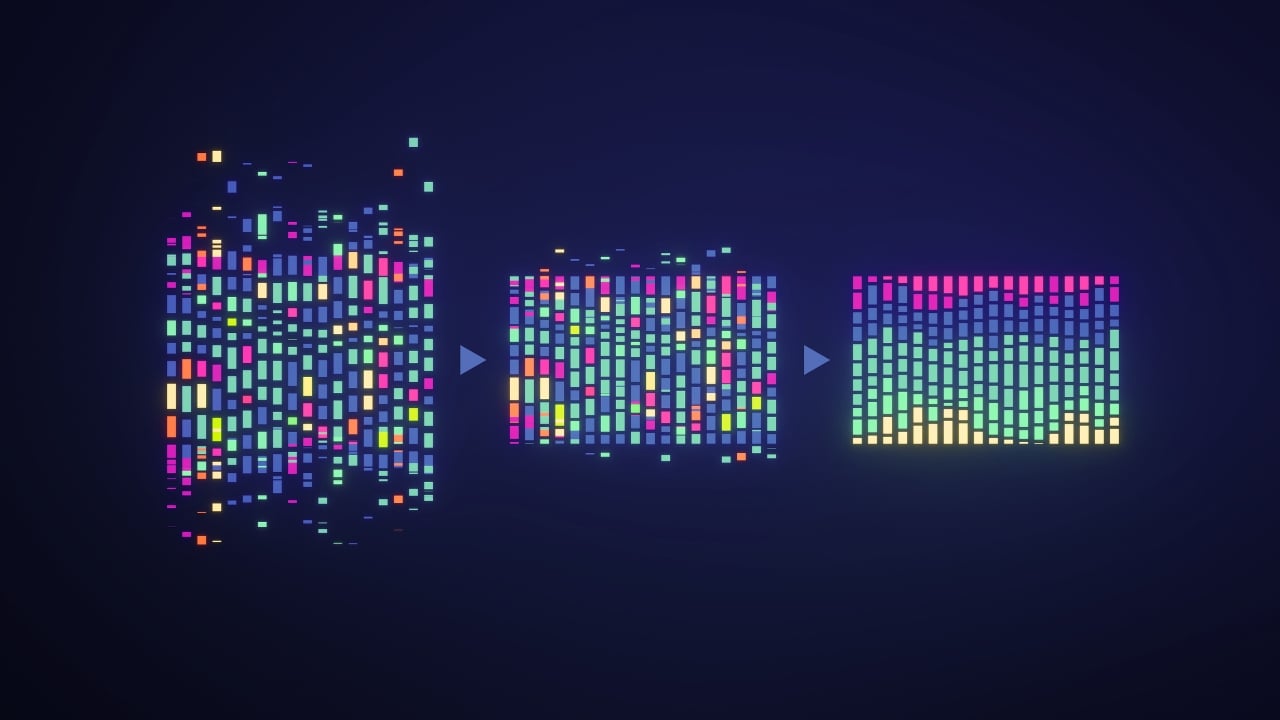

Data aggregation is the process of collecting data from multiple sources and combining it into a single, unified view.

At its most basic level, this involves compiling information from a defined set of databases and organizing it into a simpler, easy-to-use format, usually through summary statistics such as a sum, mean (average), or median.
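As a rough illustration of that idea, the sketch below combines records from two sources and reduces them to a few summary statistics. The source names and order values are made up for the example, and it uses only Python's standard library rather than any particular aggregation product.

```python
from statistics import mean, median

# Hypothetical order values from two separate sources (made-up figures).
online_orders = [120.0, 80.0, 200.0]
in_store_orders = [50.0, 150.0]

# Combine both sources into one unified list, then summarize it.
all_orders = online_orders + in_store_orders

summary = {
    "count": len(all_orders),
    "sum": sum(all_orders),
    "mean": mean(all_orders),
    "median": median(all_orders),
}
print(summary)
```

A real pipeline would read from databases or APIs instead of in-memory lists, but the combine-then-summarize shape stays the same.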

Data aggregation is useful because it allows you to see the bigger picture. By combining information from different sources, you can identify patterns, trends, and relationships that wouldn't be evident if you looked at each source individually.

This can be helpful for businesses, organizations, and even individuals in many ways, providing a clearer picture of data so they can make more informed choices and better business decisions.

What are data aggregators? Definition

Data aggregators are companies that collect data from various sources and compile it into a single, unified view. This can be done on a large scale, like with business listings for search engines, or on a smaller scale, like summarizing information from different databases.

There are many benefits to using data aggregators. They can save time and effort by providing users with a central location for all their data needs. They can also help to identify trends and patterns that would be difficult to see if the data were scattered across different sources.

Types of data aggregators

There are two main types of data aggregators, which can be distinguished by how they collect and organize data:

1. Time-based data aggregators

Time-based data aggregators collect data points over a specific period and group them together based on time intervals. For instance, imagine you're a retail manager trying to understand customer buying habits in your city.

A time-based aggregator would focus on a single store and analyze its data over time (e.g., daily, weekly, and monthly sales figures). This way, you can track sales trends over a longer period, identify seasonal fluctuations, and measure the effectiveness of marketing campaigns.
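Grouping by time interval can be sketched in a few lines. The example below assumes a hypothetical list of daily sales for one store and rolls it up into monthly totals. The data and the `aggregate_by_month` helper are illustrative only.

```python
from collections import defaultdict
from datetime import date

# Hypothetical daily sales for one store: (date, amount). Made-up figures.
daily_sales = [
    (date(2024, 1, 5), 100.0),
    (date(2024, 1, 20), 150.0),
    (date(2024, 2, 3), 90.0),
    (date(2024, 2, 14), 210.0),
]

def aggregate_by_month(records):
    """Sum sale amounts into (year, month) buckets."""
    totals = defaultdict(float)
    for day, amount in records:
        totals[(day.year, day.month)] += amount
    return dict(totals)

monthly_totals = aggregate_by_month(daily_sales)
print(monthly_totals)
```

Changing the bucket key (for example to the ISO week or the calendar day) gives weekly or daily totals with the same approach.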

2. Spatial data aggregators

Spatial data aggregators group data points based on geographical location. This is useful for understanding how things vary across different regions. For instance, going back to the retail manager example, a spatial aggregator would gather data on customer purchases from all your stores within a particular period (like a month).

This way, you can see the total sales across all locations and identify trends, such as which stores are performing better or whether there are areas with higher demand for specific products.
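Grouping by location, as a spatial aggregator does, follows the same pattern with a different key. This minimal sketch assumes a hypothetical list of one month's purchases tagged with the store's city. The city names, amounts, and the `aggregate_by_location` helper are made up for illustration.

```python
from collections import defaultdict

# Hypothetical purchases for one month: (store city, amount). Made-up figures.
purchases = [
    ("Leeds", 100.0),
    ("York", 40.0),
    ("Leeds", 60.0),
    ("York", 80.0),
    ("Hull", 30.0),
]

def aggregate_by_location(records):
    """Sum purchase amounts per location."""
    totals = defaultdict(float)
    for location, amount in records:
        totals[location] += amount
    return dict(totals)

totals_by_city = aggregate_by_location(purchases)

# The location with the highest total sales in the period.
best_city = max(totals_by_city, key=totals_by_city.get)
print(totals_by_city, best_city)
```

Comparing the per-city totals side by side is what surfaces the stronger and weaker locations.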

Top 10 Data Aggregators

Hundreds of data aggregators are available to help you streamline this process, many of them built into data analytics platforms. Not all of these tools are created equal, however.

In this list, we’re counting down ten of the best data aggregators on the market today, ranking them based on their ease of use, price and popularity with users.
