Every March, World Backup Day rolls around with a familiar message: back up your data before something breaks. It’s a useful reminder, and not an outdated one. Backup and disaster recovery still matter because failure hasn’t gone anywhere. If anything, modern systems have made the consequences wider, faster, and harder to contain.

Uptime Institute’s 2025 outage analysis found that more than half of respondents said their most recent significant outage cost over $100,000, and one in five put that cost above $1 million.

But the way enterprise teams think about resilience is changing. The old model was largely about protecting data, writing recovery plans, and hoping the runbook held up when a real incident hit. Modern infrastructure is aiming for something more ambitious.

Instead of waiting for people to step in, more environments are being designed to detect failure, respond automatically, and keep services running while recovery happens in the background. Microsoft’s Azure architecture guidance describes self-healing in almost those exact terms: detect failures, respond gracefully, and recover in line with availability targets.

That’s why self-healing infrastructure is worth talking about now. Not because it sounds futuristic, and not because AI has suddenly made operations magical, but because resilience is moving from something people do after failure to something the platform is expected to do by design.

That’s the real shift. Backup still matters. Disaster recovery still matters. But the centre of gravity is moving from manual recovery owned by humans to codified resilience owned by the platform.

The Backup Era Of Infrastructure Resilience

For a long time, infrastructure resilience meant having copies, contingencies, and a plan. Teams backed up data, built failover procedures, documented recovery steps, and hoped they’d never need to use them at 2 a.m. on a Sunday.

That model made sense in environments where infrastructure was relatively fixed, applications changed more slowly, and recovery could be organised around known assets rather than constantly shifting services.

The catch was always the same: when something serious happened, recovery depended heavily on people. Someone had to notice the issue, work out what had failed, decide which runbook applied, approve the next step, and carry it out without making the situation worse. Uptime Institute’s analysis is telling here.

It found that failure to follow procedures was an even bigger cause of outages than it had been the year before, which is a useful reminder that resilience can still fall apart at the human layer, even when the technology stack looks mature on paper.

That older model also treated backup as the heart of resilience. In some ways, World Backup Day still reflects that mindset. The official campaign positions 31 March as a prompt to protect data before loss or theft happens.

That’s a sensible message, but it belongs to an era where resilience was defined mainly by whether you could restore what was lost after the fact. Modern infrastructure is starting to ask a different question: how much of that failure can the system absorb, route around, or repair before customers notice at all?

Cloud-Native Infrastructure Changed The Recovery Model

Cloud-native infrastructure changed the shape of the problem. Once infrastructure became programmable, rebuildable, and easier to replace, recovery stopped being only about preserving what already existed. It became about recreating what was needed, quickly and consistently.

That sounds like a technical detail, but it changes the whole operating model. In older environments, a failed server was a machine to repair. In cloud-native environments, it is often something to replace. AWS makes this point directly in its Well-Architected guidance.

Its recommendation is to automate healing on all layers and, where possible, design services to be stateless so resources can be restarted or replaced without losing data or availability.

Infrastructure As Code Made Recovery Repeatable

Infrastructure as Code helped make that possible. Once environments are defined in code, the infrastructure itself becomes versioned, reproducible, and much easier to rebuild in a controlled way. Recovery becomes less dependent on memory, heroics, or tribal knowledge and more dependent on whether the platform can redeploy the intended state cleanly.

That matters because repeatability is the quiet foundation of resilience. A team can’t build self-healing behaviour on top of an environment that has to be manually reconstructed every time something breaks. Microsoft’s design guidance for self-healing workloads stresses exactly this kind of design discipline, including decoupled components, recovery aligned to service-level objectives, and testing failure paths rather than assuming the happy path tells the full story.

Container Platforms Introduced The First True Self-Healing Systems

Container platforms pushed the model further. Kubernetes, in particular, gave enterprises one of the clearest practical examples of self-healing already in production. Its own documentation says Kubernetes restarts failed containers, replaces failed Pods, reschedules workloads when nodes become unavailable, and keeps the system aligned with the desired state.

That’s not theoretical. It's now basic infrastructure behaviour in many environments.

It’s also important to be honest about what that means. Kubernetes can heal around certain classes of failure, but it doesn't solve every problem by itself. The documentation notes that storage failures and underlying application issues may still require deeper intervention. That distinction matters because a lot of market chatter uses “self-healing” as if it means “self-sufficient.”

Usually, it doesn’t. Usually, it means the platform can absorb and remediate a known set of faults without waiting for a person to step in.
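The desired-state behaviour described above can be sketched as a small reconciliation loop. This is a toy model, not Kubernetes code: the `Cluster` class, the `reconcile` function, and the pod names are all hypothetical, and a real controller works against the cluster API rather than an in-memory dictionary.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for a cluster: a desired replica count plus observed pod health."""
    desired_replicas: int
    pods: dict[str, bool] = field(default_factory=dict)  # pod name -> healthy?
    _next_id: int = 0

    def start_pod(self) -> str:
        name = f"pod-{self._next_id}"
        self._next_id += 1
        self.pods[name] = True
        return name


def reconcile(cluster: Cluster) -> list[str]:
    """Run one reconciliation pass and return the actions taken."""
    actions = []
    # Remove every pod that is currently observed as unhealthy.
    for name in [n for n, healthy in cluster.pods.items() if not healthy]:
        del cluster.pods[name]
        actions.append(f"removed {name}")
    # Start replacements until the observed state matches the desired state.
    while len(cluster.pods) < cluster.desired_replicas:
        actions.append(f"started {cluster.start_pod()}")
    return actions


cluster = Cluster(desired_replicas=3)
reconcile(cluster)               # first pass starts pod-0, pod-1 and pod-2
cluster.pods["pod-1"] = False    # simulate a failed pod
print(reconcile(cluster))        # ['removed pod-1', 'started pod-3']
```

Nobody tells the loop what went wrong. It only compares what exists with what was declared and closes the gap, which is also why it can do nothing about faults that do not show up as a difference between the two.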

Automated Recovery Is The Bridge To Self-Healing Systems

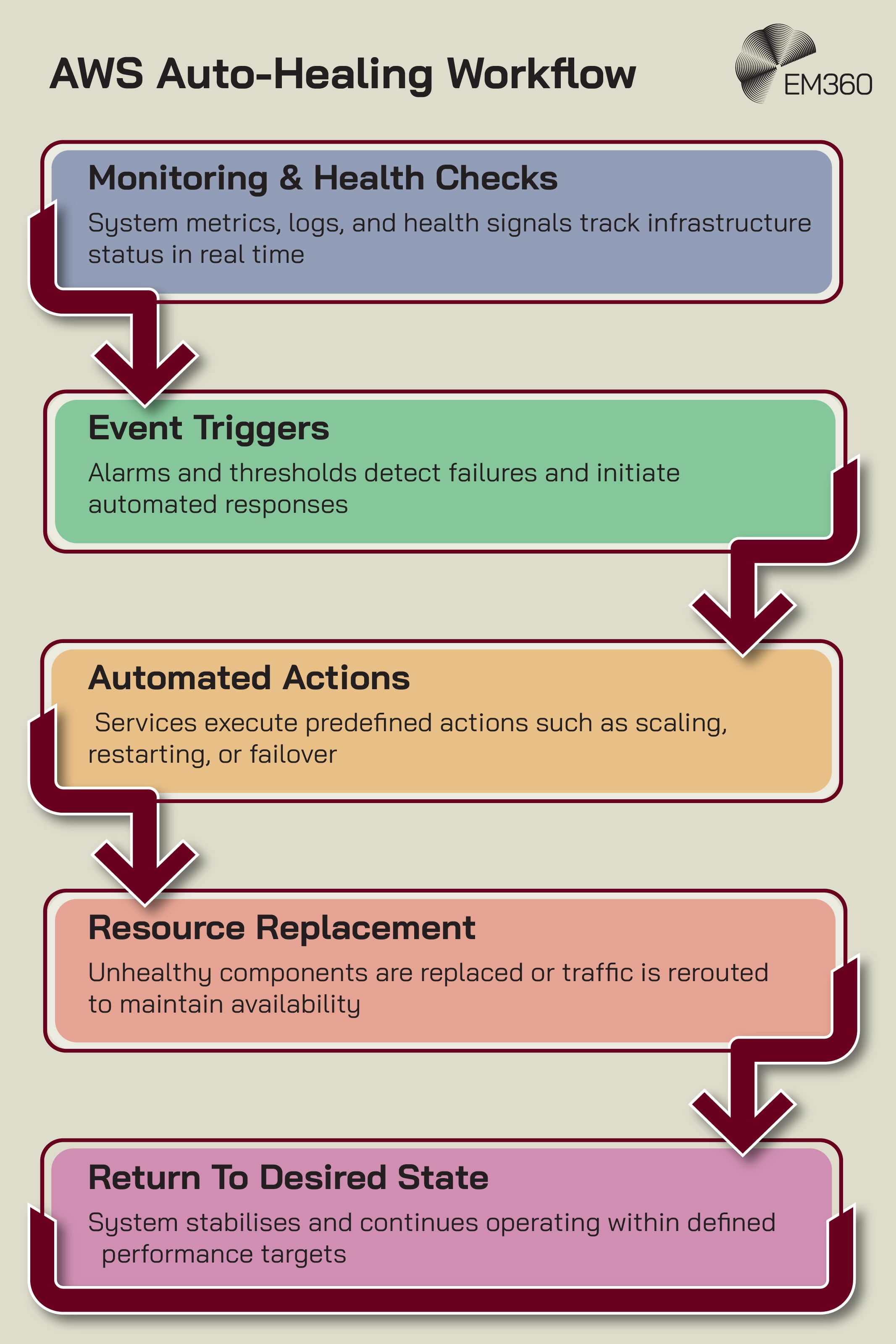

Between old-school disaster recovery and true self-healing sits a middle stage: automated recovery. This is where systems respond to alerts, health checks, and state changes with predefined actions such as failover, restart, replacement, scaling, or rollback.

That middle layer matters because it is where most enterprises actually live today. AWS describes this as automated healing that can be triggered by monitoring and event systems, with services like Auto Scaling, EventBridge, Lambda, and Systems Manager handling replacement and remediation.

The key point is that the logic is still mostly rule-based. The system knows what to do because someone defined the response in advance.

That’s not a weakness. It’s a sign of maturity. Good automated recovery reduces response time, lowers dependence on manual intervention, and makes resilience more consistent. But it still depends on strong platform design. If monitoring is poor, dependencies are messy, or the architecture is too tightly coupled, automation tends to fail in very creative ways.

Infrastructure teams know this already. Automation is brilliant right up until it starts automating bad assumptions at speed.
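The rule-based logic described in this section can be sketched as a lookup from alert type to a predefined action. The alert names, the `RUNBOOK` table, and the action functions below are illustrative assumptions, not the API of any particular monitoring or cloud service.

```python
from typing import Callable


# Hypothetical predefined actions. A real platform would call its own APIs here.
def restart_service(target: str) -> str:
    return f"restart {target}"


def replace_instance(target: str) -> str:
    return f"replace {target}"


def roll_back_release(target: str) -> str:
    return f"roll back {target}"


# Someone decided these responses in advance. The system only looks them up.
RUNBOOK: dict[str, Callable[[str], str]] = {
    "process_crashed": restart_service,
    "instance_unreachable": replace_instance,
    "error_rate_after_deploy": roll_back_release,
}


def remediate(alert_type: str, target: str) -> str:
    """Apply the predefined action for a known alert, or hand off to a person."""
    action = RUNBOOK.get(alert_type)
    if action is None:
        return f"escalate {target} to on-call"
    return action(target)


print(remediate("instance_unreachable", "web-3"))  # replace web-3
print(remediate("disk_latency_spike", "db-1"))     # escalate db-1 to on-call
```

The fallback branch is the important part. An alert nobody anticipated goes to a human instead of being forced through the nearest matching rule.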

Why Self-Healing Infrastructure Is A Platform Maturity Problem

This is where the real argument begins. Self-healing infrastructure isn't mainly an automation story. It's a platform maturity story.

Enterprises do not become self-healing because they bought one more clever tool. They become self-healing because the internal platform is mature enough to support automatic recovery safely.

That means clear service boundaries, strong observability, known dependency maps, reliable rollback paths, policy controls, tested recovery points, and enough operational discipline that automated action doesn't create fresh chaos.

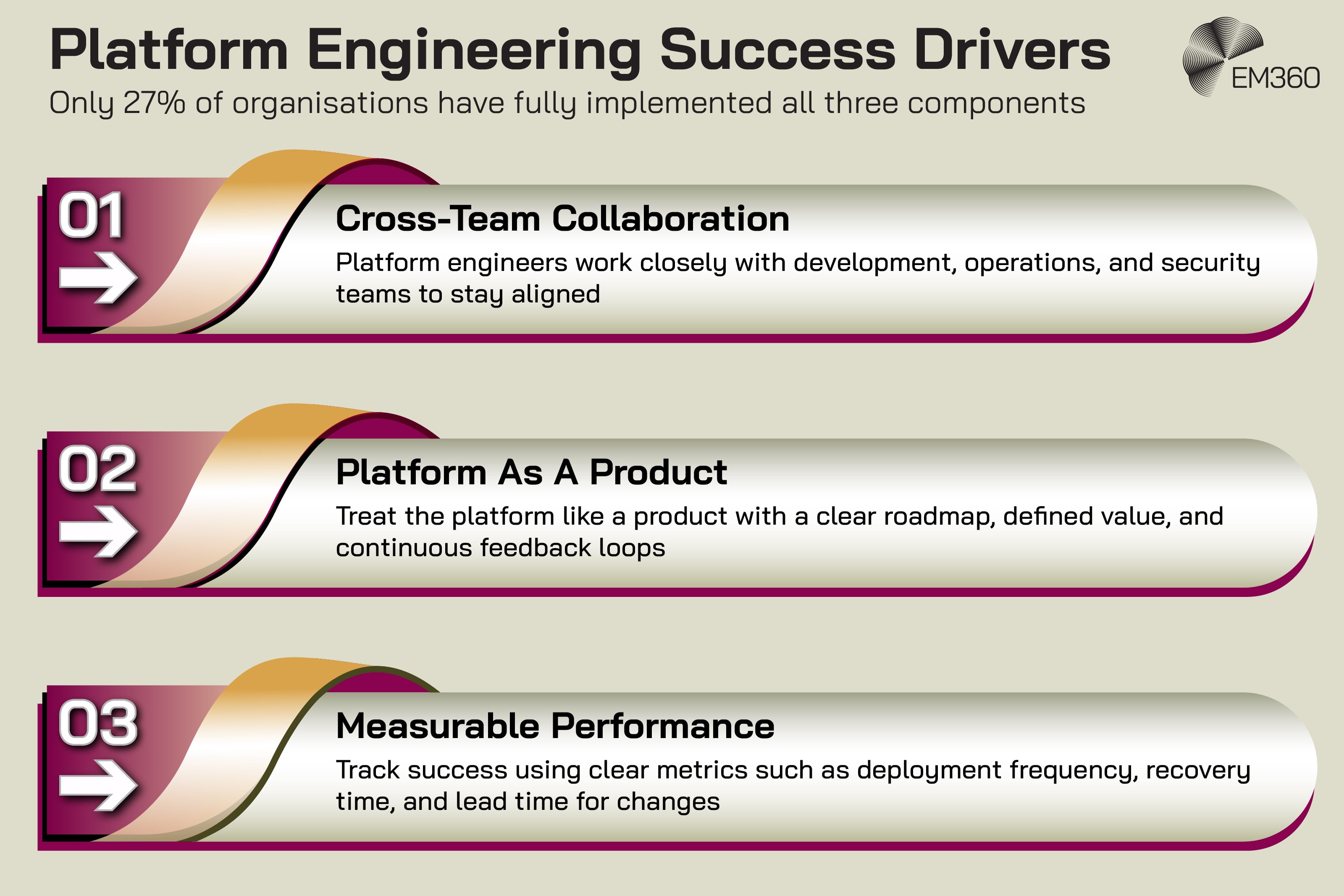

Google Cloud’s platform engineering research is useful here because it shifts the conversation away from tooling theatre. It found that only 27% of adopters had fully integrated the three key elements it identified for platform engineering success.

At the same time, 86% of respondents said platform engineering is essential to realising the full business value of AI, and 94% said AI is critical or important to the future of platform engineering. That's a strong signal that enterprises increasingly understand the platform as the foundation, not the afterthought.

That framing also explains why some self-healing projects stall. The problem is rarely that teams do not understand automation. The problem is that resilience has not been productised. It has not been built into the platform as a repeatable capability with rules, guardrails, ownership, and feedback. So the organisation ends up with a patchwork of scripts, manual exceptions, and optimistic dashboards. That isn't self-healing. It’s just automation with trust issues.

The Role Of Observability And Operational Feedback Loops

A system can’t heal itself if it can’t tell what’s happening. That's where observability stops being a monitoring buzzword and becomes infrastructure plumbing.

For self-healing behaviour to work, the platform needs usable signals. It needs to know when something is degraded, whether that degradation matters, what dependencies are involved, and which response is safe. Microsoft’s self-healing guidance puts monitoring and logging at the core of the design, not as a nice-to-have attached later.

AWS makes the same point by tying automated remediation to alarms, events, and health checks. Splunk’s State of Observability 2025 report adds a useful human angle. It found that when ITOps and engineering teams work closely with security, 64% report fewer app and infrastructure performance issues. That matters because resilience isn't just about data collection.

It's about whether the organisation can turn signals into action without drowning in alerts, silos, or conflicting tools. A platform with noisy visibility and unclear ownership isn't ready for self-healing. It's barely ready for Tuesday.
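One small example of turning a signal into action without reacting to every blip is to require several consecutive failed health checks before pulling a target out of rotation. The sketch below assumes a hypothetical `HealthTracker` class and a threshold of three. Real load balancers and orchestrators expose their own settings for this.

```python
from collections import deque


class HealthTracker:
    """Marks a target unhealthy only after N consecutive failed checks."""

    def __init__(self, failure_threshold: int = 3) -> None:
        self.failure_threshold = failure_threshold
        # Keep only the most recent N check results.
        self.recent: deque[bool] = deque(maxlen=failure_threshold)

    def record(self, check_passed: bool) -> bool:
        """Record one check result and return True if the target stays in rotation."""
        self.recent.append(check_passed)
        window_full = len(self.recent) == self.failure_threshold
        all_failed = not any(self.recent)
        return not (window_full and all_failed)


tracker = HealthTracker(failure_threshold=3)
checks = [True, False, False, False, True]
print([tracker.record(ok) for ok in checks])
# [True, True, True, False, True]
```

A single failed check changes nothing, three in a row remove the target, and the first passing check brings it back. Whether that recovery rule is too eager is exactly the kind of decision the platform team has to own.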

Where AI And AIOps Actually Fit Into Self-Healing Infrastructure

AI is part of this story, but not in the way the market likes to pretend.

AIOps, or artificial intelligence for IT operations, can help teams detect anomalies, correlate events, identify likely root causes, and prioritise responses. In some environments, it can also support more adaptive remediation. That’s useful. It can make operations faster and less reactive. But it doesn't remove the need for a strong platform underneath.

Google Cloud’s 2025 DORA report is especially valuable because it makes this point without the usual AI fireworks. It found that AI adoption is now near-universal, with 90% of respondents using AI at work and more than 80% saying it has improved productivity. But it also found that AI adoption continues to have a negative relationship with software delivery stability.

Google’s conclusion is blunt: AI accelerates development, but that acceleration exposes downstream weaknesses unless teams already have robust testing, version control, and fast feedback loops.

That's exactly why self-healing infrastructure is a platform maturity story. AI can strengthen a mature environment. It can help a platform notice more, reason faster, and respond with better context. What it cannot do is rescue weak operational foundations.

If dependencies are poorly mapped, remediation paths are untrusted, or teams do not understand their own blast radius, AI just helps the platform make bigger mistakes more efficiently.

Backup Still Matters In Autonomous Resilience

None of this means backup is obsolete. It means backup has changed jobs.

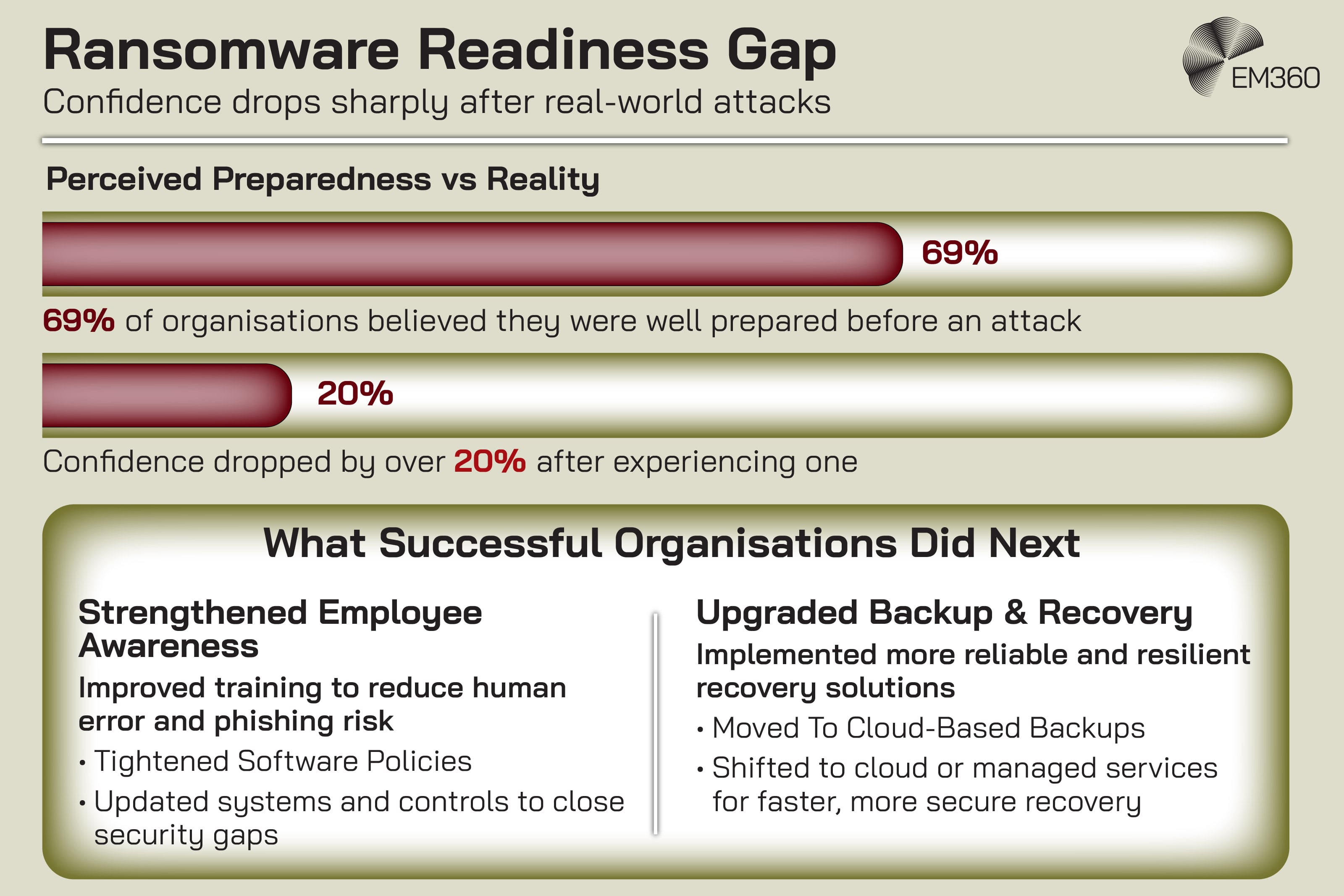

In a more autonomous resilience model, backup is no longer the whole recovery strategy. It's a foundational layer inside it. That matters most when failure isn't local and temporary, but destructive. Ransomware, data corruption, compromised workloads, and catastrophic dependency failures still require trusted recovery points.

A platform can restart a broken service, but it cannot invent clean data out of thin air. That's why the backup conversation still belongs here, and why the World Backup Day reference works in the first place. The official campaign is built around the idea of protecting data before loss or theft happens.

That remains true. It's just no longer sufficient by itself for modern enterprise resilience. Backup now has to sit alongside orchestration, policy-driven rebuilds, immutable infrastructure, health-based remediation, and, where needed, automated restore workflows.

Security pressure makes that even clearer. Veeam’s 2025 ransomware report surveyed 1,300 organisations, including 900 that had experienced at least one ransomware attack in the previous 12 months. When incidents are that common, “we have backups somewhere” isn't a resilience strategy.

The real question is whether the platform can recover to a trusted state quickly, safely, and without rebuilding every decision from scratch during a crisis.
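As a rough illustration of what recovering to a trusted state means in code, the sketch below picks the newest recovery point that was both verified and taken before the suspected compromise. The `RecoveryPoint` fields and the idea of a single `verified` flag are simplifying assumptions. Real backup products track integrity and immutability in their own ways.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class RecoveryPoint:
    taken_at: datetime
    verified: bool  # assumed to mean "passed a restore test or integrity check"


def latest_trusted(
    points: list[RecoveryPoint], compromised_at: datetime
) -> Optional[RecoveryPoint]:
    """Return the newest verified point taken before the compromise, if any."""
    candidates = [p for p in points if p.verified and p.taken_at < compromised_at]
    return max(candidates, key=lambda p: p.taken_at, default=None)


points = [
    RecoveryPoint(datetime(2025, 3, 28), verified=True),
    RecoveryPoint(datetime(2025, 3, 29), verified=False),
    RecoveryPoint(datetime(2025, 3, 30), verified=True),
]
choice = latest_trusted(points, compromised_at=datetime(2025, 3, 29, 12, 0))
print(choice.taken_at.date() if choice else "no trusted recovery point")
# 2025-03-28
```

The newest backup is not automatically the right one. Here the 30 March copy is verified but was taken after the compromise, so the selection falls back to 28 March.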

What Self-Healing Infrastructure Looks Like In Practice

In practice, self-healing infrastructure usually looks less dramatic than the phrase suggests. It's not a server whispering to itself in the dark. It's a collection of mature patterns working together.

A container fails and is restarted automatically. A node drops out and workloads are rescheduled elsewhere. A health check fails and traffic is routed away from the unhealthy instance. A snapshot gives the team a clean point-in-time recovery option. An event triggers an automated workflow that replaces a failed resource.

A policy blocks remediation that would create a wider blast radius. Each piece is manageable on its own. The value comes from how well they work together.

That last point is worth sitting with. Enterprises often talk about self-healing as if it were one capability to buy. It's usually a stack of capabilities to earn. The more mature the platform, the more safely those capabilities can be combined. The less mature the platform, the more likely the organisation is to confuse automation activity with operational resilience.
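The policy that blocks remediation when it would widen the blast radius can be sketched as a simple capacity check. The function name, the 25% limit, and the instance names are assumptions chosen for illustration. The right threshold depends on how much spare capacity a service actually has.

```python
def allowed_to_remediate(
    out_of_service: set[str],
    fleet_size: int,
    target: str,
    max_unavailable_fraction: float = 0.25,
) -> bool:
    """Allow taking `target` out of service only if the fleet stays within the limit."""
    if fleet_size <= 0:
        raise ValueError("fleet_size must be positive")
    # Count the target together with everything already out of service.
    unavailable = len(out_of_service | {target})
    return unavailable / fleet_size <= max_unavailable_fraction


# Fleet of 8 instances with a 25% limit: at most 2 may be out at once.
print(allowed_to_remediate({"web-1"}, 8, "web-2"))           # True
print(allowed_to_remediate({"web-1", "web-2"}, 8, "web-3"))  # False
```

When the check fails, the automation stops and asks for help. Replacing a third instance might be correct in isolation, but three simultaneous failures usually point to a shared cause that restarts will not fix.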

Why Self-Healing Infrastructure Is Becoming A Strategic Priority

This shift is becoming strategic because the old model is starting to strain under the weight of modern systems. Architectures are more distributed, dependencies are harder to see, change happens faster, and failures increasingly involve third parties, cloud services, and security exposure rather than one obvious broken box in one obvious room.

That's why outage readiness is becoming a board-level concern rather than a niche infrastructure conversation. PagerDuty’s global survey of 1,000 IT and business executives found that 88% expected another incident on the scale of the July 2024 global IT outage within the next 12 months, while 86% said companies have focused too much on security and not enough on readiness for service disruptions.

That's a strong signal that resilience is now being judged by continuity under pressure, not just prevention on paper.

So yes, self-healing infrastructure is a technology topic. But it is also a leadership topic, a platform engineering topic, and a resilience design topic. Enterprises are not really chasing self-healing because the phrase sounds advanced.

They’re chasing it because manual recovery doesn't scale well against modern complexity, modern risk, or modern expectations.

Final Thoughts: Self-Healing Infrastructure Starts With Platform Discipline

World Backup Day still points to something real. Systems fail. Data gets lost. Recovery still matters. But modern infrastructure is pushing beyond the older rhythm of failure first, recovery second. The deeper shift isn't from backup to AI. It's from manual recovery to platform-owned resilience.

Self-healing infrastructure only works when the underlying platform is disciplined enough to support it. That means clear design, strong signals, tested recovery paths, sensible boundaries, and the operational maturity to automate with confidence rather than hope.

Backup, disaster recovery, automation, and AI all still belong in that picture. They just no longer sit at the centre in the same way.

That’s where this gets interesting for enterprise teams. The question is no longer whether infrastructure can recover after failure. More and more, the question is whether the platform has been built to participate in recovery before humans have to take the wheel. EM360Tech continues to track how cloud platforms, resilience models, and modern IT operations are evolving for teams trying to answer exactly that.
