Open Data Day is a global moment for the open data community. Across the world, organisations, developers, researchers, and civic groups host events that highlight how accessible data can support transparency, research, and innovation. In 2026, the event runs from 7 to 13 March and is coordinated by the Open Knowledge Foundation through the Open Knowledge Network.

The idea behind it is simple. When data is accessible and reusable, more people can build useful things with it. That principle started in civic technology and government transparency initiatives. Today it reaches much further.

Open data has quietly shifted from a civic ideal to something closer to digital infrastructure. It’s not just a nice-to-have resource for a hackathon. It’s a real input to enterprise analytics, AI-adjacent decision systems, and data-driven products. It adds context your internal systems can’t generate on their own, and it pushes organisations to take external data seriously as a dependency.

To make sense of open data in 2026, three lenses matter most: the ecosystem that produces it, how enterprises turn it into operational value, and the governance needed when public datasets enter enterprise data pipelines.

What Open Data Day Reveals About the Modern Data Economy

Open Data Day exists because “data access” is still too often treated as a privilege. The event pushes back on that by celebrating reuse. It’s a reminder that data doesn’t become useful just because it exists. It becomes useful when it can be accessed, understood, and applied.

The Open Data Day site is explicit about this idea. It frames the week as a global celebration of open data, driven by local events that use data in communities, and it emphasises that outputs are open for everyone to use and reuse.

That reuse-first mindset is what makes this relevant to enterprise audiences. It forces a clearer conversation about what “open” really means, and why modern organisations are increasingly built on data they didn’t collect themselves.

Open data explained

A practical, widely used definition is simple: data is open if it can be freely accessed, used, modified, and shared by anyone for any purpose, with minimal conditions like attribution or share-alike.

Two points are easy to miss, and they matter.

First, openness is not the same as being online. A dataset can be “public” and still be unusable. Open data needs to be legally open, meaning it’s published under an open data licence that permits reuse, and technically open, meaning it’s available in machine-readable, bulk-friendly formats without unreasonable barriers.

Second, licensing is not paperwork. It’s the foundation that allows reuse at scale. Without clear terms, “open” becomes a risk, not a resource. That’s why open data communities lean on established licensing patterns. The Open Data Day site itself includes licensing information for its code, text, and event data.
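Both points can be turned into a simple check. The sketch below is illustrative only: the `DatasetListing` fields, the `openness_gaps` helper, and the two allow-lists are assumptions made for this example, not an official test of openness. The licence identifiers follow SPDX naming, and a real intake policy would maintain its own lists.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative allow-lists (assumptions for this sketch, not a complete policy).
OPEN_LICENCES = {"CC0-1.0", "CC-BY-4.0", "ODbL-1.0", "OGL-UK-3.0"}
MACHINE_READABLE = {"csv", "json", "geojson", "parquet", "xml"}


@dataclass
class DatasetListing:
    """Hypothetical description of a dataset as found on a portal."""
    title: str
    licence: Optional[str]  # licence identifier stated by the publisher, if any
    file_format: str
    bulk_download: bool


def openness_gaps(listing: DatasetListing) -> list:
    """Return the reasons a listing falls short of being legally and technically open."""
    gaps = []
    # Legally open: published under a recognised open licence.
    if listing.licence not in OPEN_LICENCES:
        gaps.append("no recognised open licence")
    # Technically open: machine-readable and available in bulk.
    if listing.file_format.lower() not in MACHINE_READABLE:
        gaps.append("format is not machine-readable")
    if not listing.bulk_download:
        gaps.append("no bulk download")
    return gaps
```

A scanned PDF with no stated licence would fail all three checks even though it is online, which is the distinction between "public" and "open" in practice.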

If you want a clean enterprise translation, it’s this: open data applies the intent of open source to data. It’s about permission and practicality, not vibes.

From transparency movement to digital infrastructure

Open data’s roots are still visible. Governments publish data to support transparency. Researchers publish data to support discovery and verification. International bodies publish indicators so the world can compare like with like.

What’s changed is how that data behaves once it’s available.

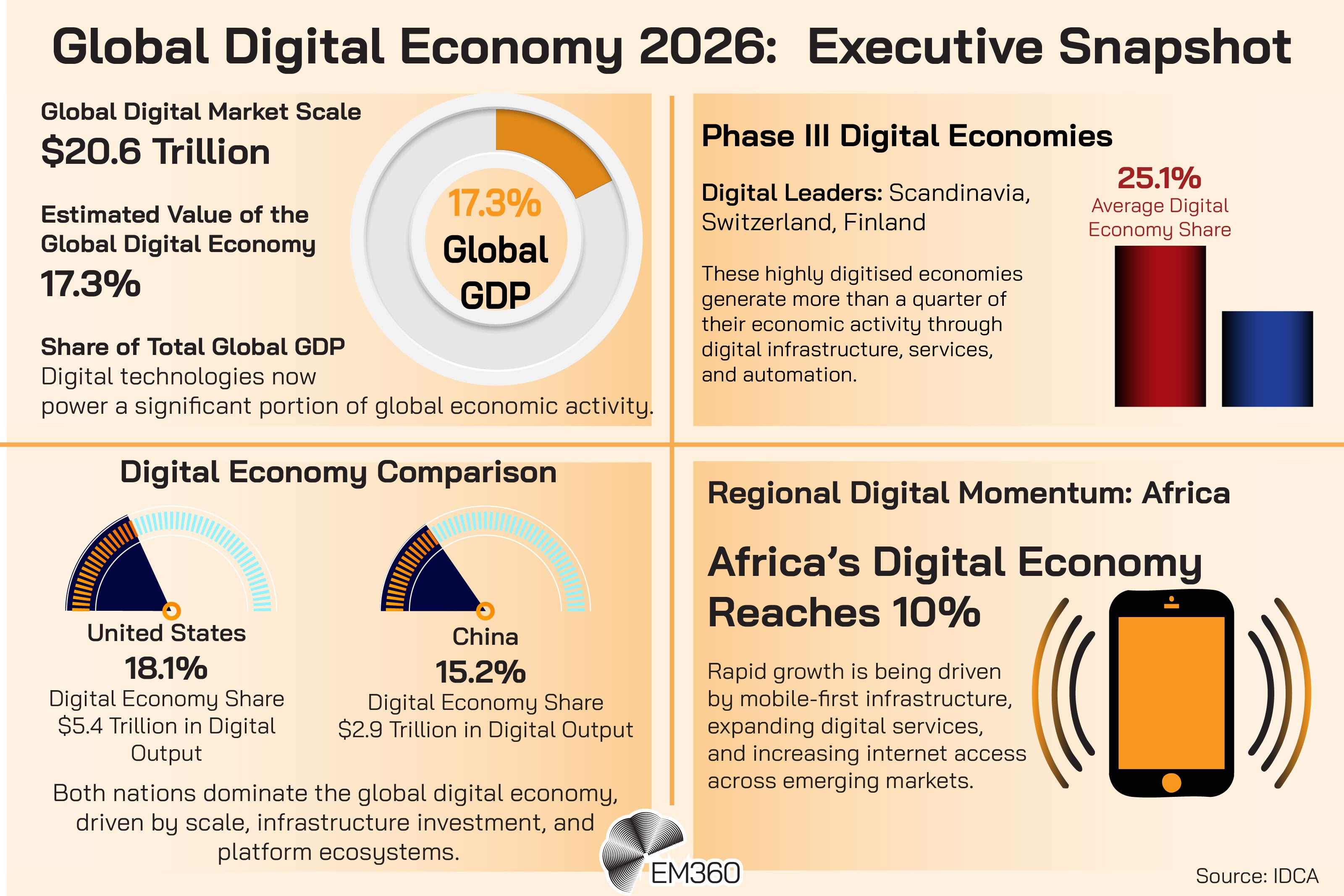

It doesn’t stay in the public-sector lane. It gets ingested by companies that need context for planning, forecasting, risk decisions, and product development. It becomes part of the digital economy, because the digital economy runs on shared inputs. In the same way that roads and ports enable trade, data availability enables modern analysis and automation.

Once you see open data as infrastructure, you stop asking “why would a business care?” and start asking “what happens when this external layer is missing, wrong, or poorly governed?”

How Open Data Became Part of the Global Data Ecosystem

Enterprises rarely consume open data as a standalone truth. They consume it as part of a data ecosystem: publishers, portals, standards, APIs, and downstream users who transform raw datasets into operational tools.

That ecosystem thinking matters because it explains why open data can feel either powerful or pointless. A static dataset with unclear fields and no update cadence doesn’t function as infrastructure. A well-documented, regularly updated, machine-readable dataset with stable access does.

In practice, the “open data” story is usually a story about how well the surrounding system supports reuse.

Who produces the world’s open datasets

Most high-value open data comes from familiar sources, even if enterprises don’t always label it as “open”.

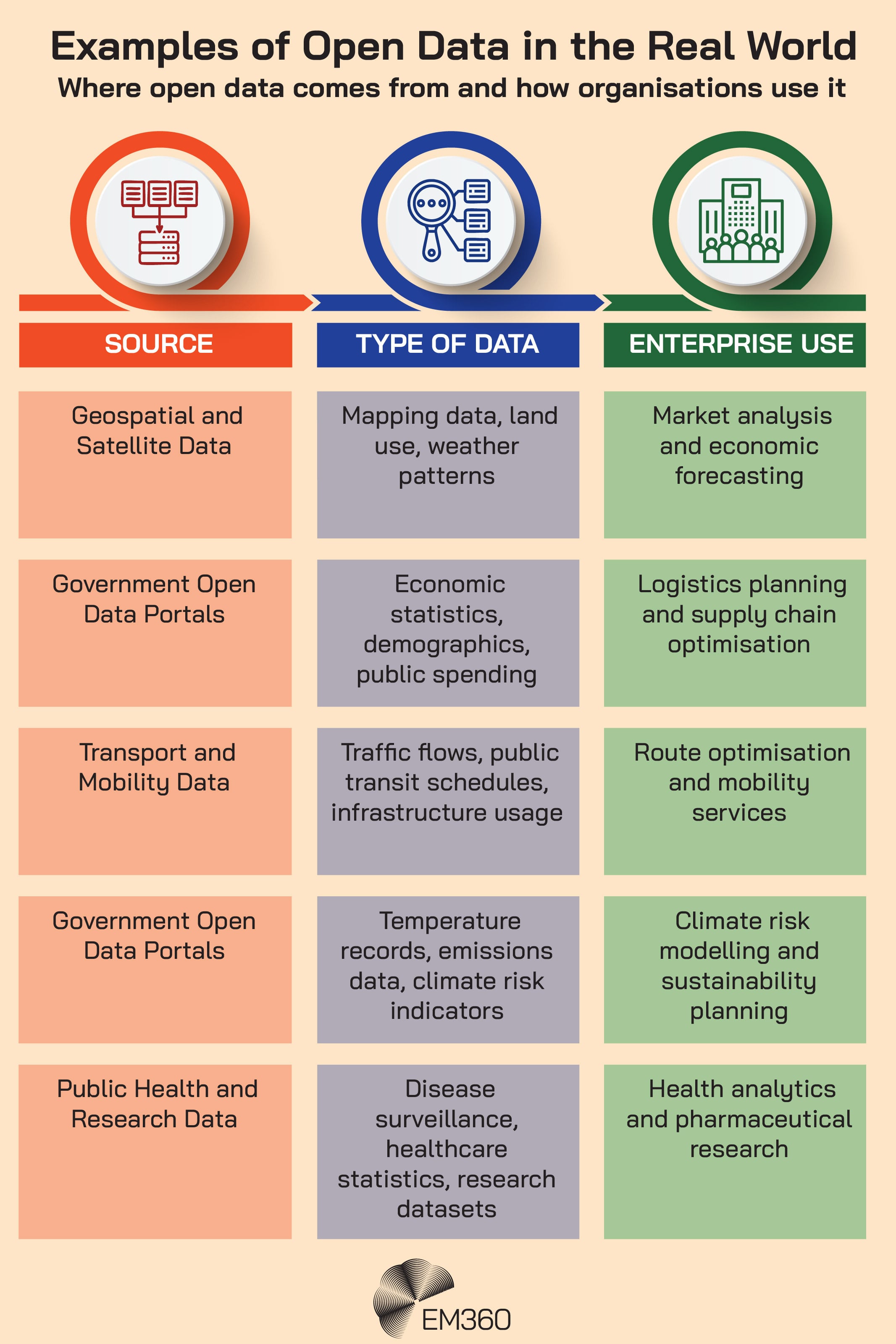

Government and public agencies publish data about populations, transport, public finance, procurement, and national statistics. Research institutions publish datasets linked to scientific outputs. International organisations publish cross-country indicators and development data. Geospatial and earth observation sources feed mapping, logistics, agriculture, and climate analysis.

A useful rule of thumb is that these datasets describe the world outside the enterprise boundary. That’s why they’re valuable. They’re not trying to tell you what happened in your systems. They’re telling you what changed in the environment your systems operate inside.

This is also where “high-value datasets” becomes a practical concept for enterprise readers. Not because the term is trendy, but because it points to the categories businesses repeatedly rely on: geospatial data, climate data, demographic and economic indicators, and infrastructure information.

Open data and the emerging data sharing ecosystem

Open data is also part of a wider shift in how data is exchanged. Fully open datasets sit at one end of a spectrum. Further along you get governed sharing models, designed for trust, interoperability, and sector-level collaboration.

The European Commission’s work on Common European Data Spaces reflects that direction. It describes support for data spaces through reference architecture, interoperability specifications, data models, and related building blocks, with active rollout across multiple sectors and domains.

For enterprise strategy, this matters because it shows where the broader market is heading: towards structured data exchange where reuse is planned, not accidental. Open data is a piece of that future, and it often acts as the entry point because it lowers the barrier to experimenting with external datasets.

By the time organisations start thinking seriously about data sharing, many are already using open data. They just haven’t named it, governed it, or treated it as a dependency.

Why Enterprises Are Turning Open Data Into Operational Intelligence

Most enterprises have plenty of internal data. What they often don’t have is context. Open data supplies that context, and it’s why open datasets are increasingly part of modern enterprise data strategy.

The strongest way to describe the value is straightforward: open data makes internal data more useful. It does that by turning isolated operational signals into something you can interpret against real-world conditions.

Open data as context for enterprise analytics

Enterprise analytics improves when it can distinguish between what’s happening inside the business and what’s happening outside it.

That’s where open data helps. Demographic datasets can clarify market shifts. Economic indicators can explain demand pressure. Infrastructure and transport data can explain operational constraints. Environmental datasets can change how organisations model exposure and risk.

This isn’t theory. It’s what decision intelligence looks like when it’s grounded in reality instead of only in internal reports. And it’s why open data often shows up in business intelligence environments as an external reference layer, even when teams don’t talk about it in those terms.
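As a rough illustration of that external reference layer, the sketch below joins internal sales totals with an open population dataset to get sales per 1,000 residents. All figures, region names, and the `sales_per_thousand` helper are made up for this example.

```python
# Hypothetical internal figures: revenue by region from the company's own systems.
internal_sales = {"North": 120_000, "South": 95_000, "East": 40_000}

# Hypothetical open dataset: resident population by region from a statistics office.
open_population = {"North": 600_000, "South": 250_000}


def sales_per_thousand(sales: dict, population: dict) -> dict:
    """Normalise internal revenue by external population figures."""
    enriched = {}
    for region, revenue in sales.items():
        residents = population.get(region)
        if not residents:
            # Keep unmatched regions visible instead of silently dropping them.
            enriched[region] = None
        else:
            enriched[region] = round(revenue * 1000 / residents, 2)
    return enriched


print(sales_per_thousand(internal_sales, open_population))
# {'North': 200.0, 'South': 380.0, 'East': None}
```

In this toy data, North has the highest raw revenue but South performs better once population is taken into account. The `None` for East is deliberate: a gap in external coverage is itself something the analysis should surface.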

Open datasets in AI and machine learning pipelines

Open data also plays a role in AI and machine learning, but the enterprise pattern is more nuanced than “train a model on public datasets”.

Open datasets are commonly used to prototype and test methods before sensitive data is involved, to enrich models with external signals, and to support validation when teams want to confirm outputs against authoritative reference sources.

This is also why open data publishing is being adapted for modern AI consumption. The US Department of Commerce has published “Generative Artificial Intelligence and Open Data: Guidelines and Best Practices”, positioning it as guidance for publishing open data optimised for generative AI systems, with a focus on documentation, formats, licensing, and data integrity and quality.

That’s a useful signal for enterprise leaders: open data is increasingly being shaped for machine consumption. If your organisation is building AI data pipelines, the quality and structure of external datasets will matter more, not less.

How open data supports product innovation

Open data also underpins real products. Mapping and mobility are obvious examples, but the pattern is broader: climate intelligence services, public finance transparency tooling, and sector-specific dashboards often start with open datasets and add value through integration, interpretation, and delivery.

The commercial value usually isn’t the dataset itself. It’s what happens around it. Reliable ingestion. Clean transformations. Consistent definitions. User experiences that turn raw information into decisions.

That’s where data monetisation can be misunderstood. With open data, the monetisation typically sits in the service layer, not in the data layer. Open datasets provide raw material. Enterprises create value by making it usable at scale.

The Governance Challenge When Open Data Enters Enterprise Systems

Open data can be free to access. It’s not free to trust.

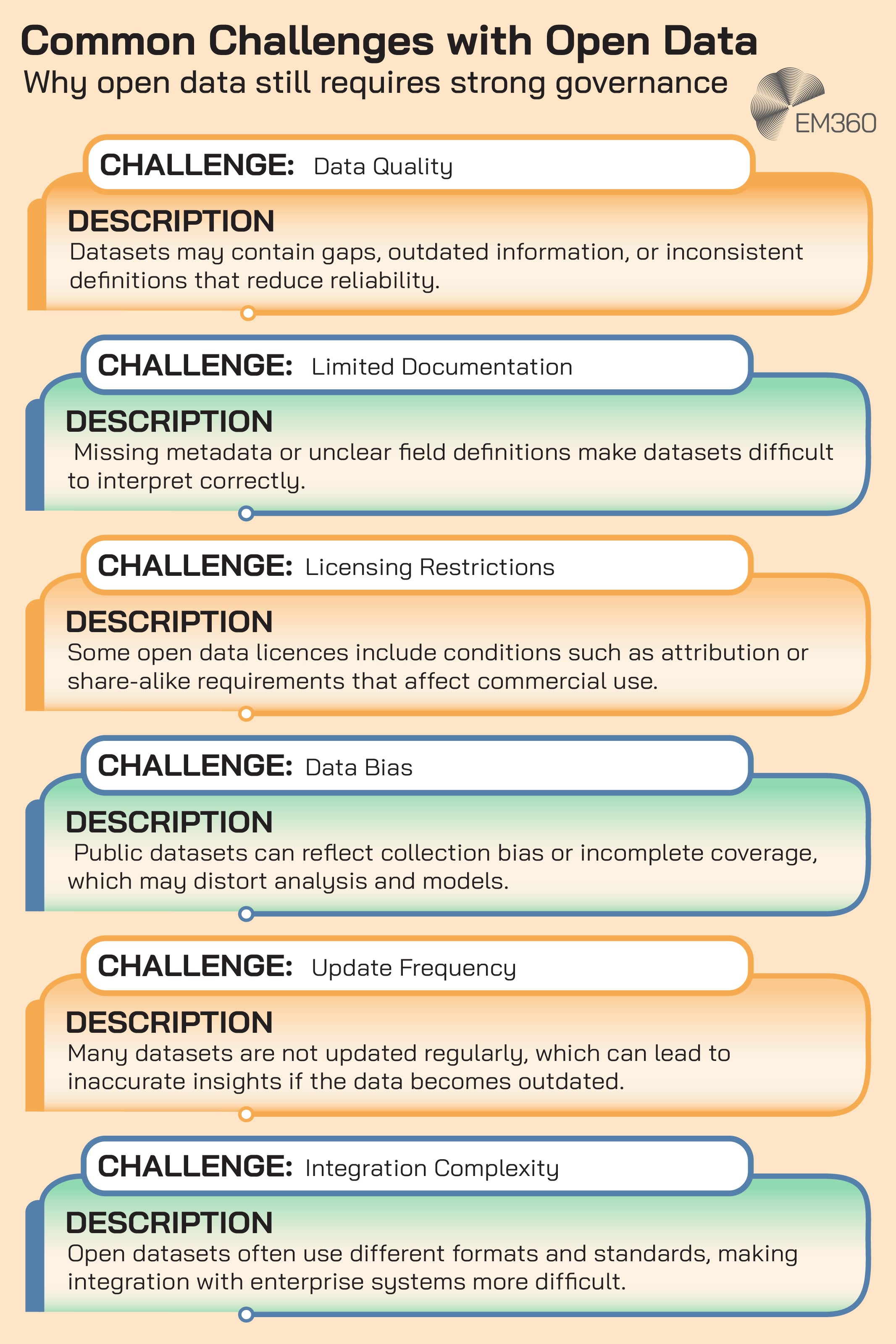

Once open datasets feed dashboards, risk models, or AI systems, the cost of getting them wrong rises fast. That’s why governance becomes the dividing line between open data as a helpful input and open data as a hidden liability.

Data provenance and trust

If your organisation can’t answer basic questions about a dataset, it can’t safely operationalise it.

Where did it come from? How was it collected? What does each field mean? How often is it updated? What changes were made over time?

This is where metadata and dataset documentation stop being background detail and start being production requirements. Provenance, in particular, is what allows teams to defend decisions, troubleshoot anomalies, and prove that outputs are based on authoritative sources rather than random downloads.

In the real world, trust isn’t a feeling. It’s a chain of evidence.
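One way to start that chain of evidence is to capture a provenance record at the moment a dataset is downloaded. The sketch below is a minimal example under stated assumptions: the `ProvenanceRecord` fields and helper names are hypothetical and simply mirror the questions above, and the checksum uses Python's standard `hashlib`.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical record answering: where from, under what terms, how fresh, what changed."""
    source_url: str
    publisher: str
    licence: str
    update_cadence: str
    retrieved_at: str  # ISO 8601 timestamp in UTC
    sha256: str        # fingerprint of the exact bytes that were ingested
    field_notes: dict = field(default_factory=dict)


def record_provenance(raw: bytes, source_url: str, publisher: str,
                      licence: str, update_cadence: str,
                      field_notes: dict = None) -> ProvenanceRecord:
    """Build a provenance record for a freshly downloaded file."""
    return ProvenanceRecord(
        source_url=source_url,
        publisher=publisher,
        licence=licence,
        update_cadence=update_cadence,
        retrieved_at=datetime.now(timezone.utc).isoformat(),
        sha256=hashlib.sha256(raw).hexdigest(),
        field_notes=dict(field_notes or {}),
    )


def has_changed(record: ProvenanceRecord, raw: bytes) -> bool:
    """True if newly fetched bytes differ from what was originally recorded."""
    return hashlib.sha256(raw).hexdigest() != record.sha256
```

Storing the checksum alongside the source and retrieval time lets a team show which version of a dataset fed a given output, and notice when a publisher has revised a file without announcing it.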

Licensing and legal considerations

Open data licences are the guardrails that define whether a dataset can be used commercially, whether attribution is required, and what obligations follow downstream.

That matters because “open” isn’t one licence. It’s a category. Some licences are permissive. Others carry conditions that can clash with enterprise product plans, especially when datasets are mixed into proprietary offerings.

The Open Data Day site itself highlights how different artefacts can have different licence models, including event data files under the Open Database License.

The point for enterprise teams is simple: licensing has to be part of the intake process for external data sources. If it’s discovered late, it becomes expensive to unwind.
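A licence gate at intake might look something like the sketch below. The `LICENCE_TERMS` table is a deliberately simplified summary written for this example, not a legal reading of any licence, and the `intake_decision` helper and its outcomes ("approve", "review", "reject") are assumptions.

```python
# Simplified, illustrative summary of licence conditions (an assumption for this
# sketch; real intake should rely on the licence text and legal review).
LICENCE_TERMS = {
    "CC0-1.0":      {"commercial": True,  "attribution": False, "share_alike": False},
    "CC-BY-4.0":    {"commercial": True,  "attribution": True,  "share_alike": False},
    "ODbL-1.0":     {"commercial": True,  "attribution": True,  "share_alike": True},
    "CC-BY-NC-4.0": {"commercial": False, "attribution": True,  "share_alike": False},
}


def intake_decision(licence: str, commercial_use: bool, mixed_into_proprietary: bool):
    """Return (outcome, notes) for a proposed use of an external dataset."""
    terms = LICENCE_TERMS.get(licence)
    if terms is None:
        return "review", ["licence not recognised; escalate before use"]
    if commercial_use and not terms["commercial"]:
        return "reject", ["licence does not permit commercial use"]

    notes = []
    if terms["attribution"]:
        notes.append("attribution required")
    if terms["share_alike"] and mixed_into_proprietary:
        # Share-alike conditions can reach into combined or derived products.
        notes.append("share-alike may apply to the combined product")
        return "review", notes
    return "approve", notes
```

The useful property is that the decision happens before ingestion and leaves a note behind, so obligations such as attribution travel with the dataset instead of being rediscovered at launch.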

Bias, accuracy, and operational risk

Open datasets can be biased, incomplete, or outdated. They can also be misunderstood, especially when definitions don’t match how an enterprise measures the same concept internally.

The risk gets worse when open data is blended into polished dashboards and confident-looking models. Outputs can appear authoritative because they’re clean and quantified, even when the underlying inputs are flawed.

Enterprise-grade use of open data depends on disciplined validation and monitoring. Not because open data is inherently untrustworthy, but because external data introduces external uncertainty. It needs the same seriousness you’d apply to any data source that influences decisions and compliance outcomes.
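Validation and monitoring can begin with very basic checks. The sketch below flags a stale snapshot and rows with missing required values. The `validate_snapshot` helper, its parameters, and the sample rows are hypothetical, and a production pipeline would add range, schema, and definition checks on top.

```python
from datetime import date


def validate_snapshot(rows, required_fields, last_updated, today, max_age_days):
    """Return a list of human-readable issues found in an external dataset snapshot."""
    issues = []

    # Freshness: compare the publisher's last update with the tolerated age.
    age_days = (today - last_updated).days
    if age_days > max_age_days:
        issues.append(f"stale: last updated {age_days} days ago")

    # Completeness: every row must carry a value for each required field.
    for index, row in enumerate(rows):
        missing = [name for name in required_fields if row.get(name) in (None, "")]
        if missing:
            issues.append(f"row {index}: missing {', '.join(missing)}")

    return issues


# Hypothetical sample: one complete row, one with a missing rate.
sample_rows = [
    {"region": "North", "rate": 4.2},
    {"region": "South", "rate": None},
]
problems = validate_snapshot(
    sample_rows,
    required_fields=("region", "rate"),
    last_updated=date(2025, 1, 1),
    today=date(2026, 3, 7),
    max_age_days=365,
)
```

Running checks like these on every refresh, and blocking or flagging the load when issues appear, is what stops a flawed input from reaching a polished dashboard unnoticed.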

Why Open Data Is Becoming Infrastructure for the AI and Data Economy

Open data is evolving because the systems around it are changing.

AI systems are hungry for well-documented, machine-readable inputs. Analytics platforms are built to blend internal and external data sources. Sector ecosystems are moving toward structured sharing models where interoperability is a competitive advantage.

That shift shows up in policy and standards work. Europe is building the foundations for cross-sector exchange through common data spaces. The US Department of Commerce is publishing guidance designed to make open data more usable for generative AI systems, with emphasis on documentation, formats, licensing, and quality.

Taken together, these aren’t side quests. They’re signals that open data is being treated as part of the modern data economy’s plumbing.

For enterprises, the strategic implication is uncomfortable but useful. You can’t treat external data as optional context while your decisions, models, and products increasingly depend on it. If open data is part of your operational reality, then it’s part of your infrastructure whether you’ve acknowledged it or not.

Final Thoughts: Open Data Is Quietly Becoming Enterprise Infrastructure

Open Data Day celebrates a basic principle: data should be accessible and reusable, so more people can do meaningful work with it.

For enterprise leaders, the deeper lesson is that open data has moved beyond civic transparency. It now sits inside global data ecosystems, feeds enterprise analytics and AI data pipelines, and increasingly shapes products and strategy. That’s why governance matters so much. The moment open data becomes a dependency, it needs provenance, licensing clarity, and validation discipline.

The organisations that treat open data as infrastructure, not background noise, will be better positioned for the next phase of the data economy. They’ll make faster decisions with better context, and they’ll do it without gambling on inputs they can’t defend.

If you want more analysis like this, grounded in what data, AI, and infrastructure trends mean for enterprise strategy, then EM360Tech is an ideal place to start.
