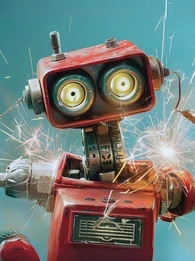

Has your Facebook feed been taken over by unusual pictures of Jesus and flight attendants or other strange AI-generated images? You might have seen evidence of something called dead internet theory.

In this article, we’re delving deep into dead internet theory, exploring what it is, how the internet is changing in the age of AI, and whether dead internet theory is true.

What is Dead Internet Theory?

Dead internet theory is the idea that the content on the internet is mostly machine-generated or automated by artificial means, such as by generative AI or by bots.

Because of the rise of machine-generated content and ‘bots’, believers in the theory suggest that the date the internet officially ‘died’ is around 2016.

The original theory holds that this shift is deliberate, intended to minimize organic human activity and manipulate users, for example through advertising aimed at consumers. Some believers go further and claim that government agencies use it to manipulate voters and public perception.

What was once dismissed as an outlandish conspiracy theory is now gaining traction as AI becomes more prominent and the average user increasingly has to interrogate the source of the content they consume.

Core Components of the Dead Internet Theory

1. The Rise of Bots

A core aspect of the dead internet theory relies on the explosive rise of bots. The theory suggests a significant portion of online activity, from content creation to social media interactions, might be driven by automated programs.

These bots can churn out content, inflate engagement metrics, and even manipulate conversations. Users became widely aware of this during the 2016 US presidential election, with both left- and right-wing users accusing the opposing side of using bots to spread ‘fake news’ and sway opinion.

The proliferation of ‘bot’ users later became a major sticking point during Elon Musk’s acquisition of Twitter, now known as X, in 2022. Soon after his initial offer for the platform, Musk announced his intention to terminate the deal, on the basis that Twitter had breached their agreement by refusing to crack down on bots.

The tech mogul disputed Twitter’s claim that less than 5% of their daily users were bots, employing two research teams who found that the number was more realistically 11-13.7% and, crucially, that these bot users were responsible for a disproportionate amount of the content generated on the platform.

Believers in dead internet theory repeatedly cite a key example of bots from X known as ‘I Hate Texting’. This was a wave of tweets that all started with "I hate texting" followed by a longing for physical connection, such as "I hate texting, I just want to hold your hand" and "I hate texting, just come live with me."

These tweets quickly achieved tens of thousands of likes, but with such a strange and repeatable format, many suspected they weren't from real people.

2. Rise of AI

Similar to the rise of bots, the explosive prevalence of AI has contributed massively to dead internet theory gaining traction. AI-generated content, including images, videos and text, is advancing at breakneck speed. Large language models like ChatGPT and Gemini can even match or exceed human performance on some benchmarks. Many users, especially older and less tech-savvy ones, are unable to identify AI-generated content.

This content is only becoming harder to distinguish from human-generated content as large language models build on deep learning techniques and continuously improve.

Advancements in AI allow for the creation of realistic-looking content, such as images, videos, and even text that can mimic human writing styles. This raises concerns about the authenticity of online information and the potential for deepfakes to manipulate public opinion.

The dead internet theory also highlights the role of algorithms in shaping online experiences. These algorithms can determine what content appears in your search results, social media feeds, and recommended videos.

This can mean the mass creation of AI-generated content that pollutes feeds to generate engagement and revenue. There's a recent trend of unusual images circulating on Facebook that combine religious figures like Jesus with flight attendants. These images are typically generated by artificial intelligence and shared by accounts that appear to be spam.

The reason for this trend is not fully understood but it is likely that this is ‘engagement bait’, designed to grab attention and get a reaction out of viewers, encouraging them to like, comment or share the post. This can be a way to boost the visibility of the spam account.

These posts could also be used to identify users who are likely to engage with spam and clickbait, and who fail to notice AI generation that seems obvious to more tech-savvy users. If someone doesn't question the strange image or the legitimacy of the account posting it, they might be less likely to spot the signs of social engineering or phishing scams in the future.

Once identified, these users could then be specifically targeted by the spammers with more believable scams or fake content, potentially leading them to click on malicious links or share personal information, especially when their Facebook account may already be rich in personal information that can be exploited.

3. Rise of Algorithmic Curation

The domination of algorithmic curation has meant the original chronological model of the internet, where users saw and interacted with posts based on recency, is dead. For better or worse, the older model seemed to offer a more level playing field for content creators. Anyone could publish a website or participate in online discussions with a relatively equal chance of being seen by others.
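To make the difference between the two models concrete, here is a minimal sketch in Python. It is not how any real platform ranks posts; the `Post` fields, the author names and the single `engagement` number are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    timestamp: int   # hypothetical: larger means more recent
    engagement: int  # hypothetical: likes + comments + shares


def chronological_feed(posts):
    """Newest first, regardless of popularity."""
    return sorted(posts, key=lambda p: p.timestamp, reverse=True)


def engagement_feed(posts):
    """Most-engaged first, regardless of recency."""
    return sorted(posts, key=lambda p: p.engagement, reverse=True)


posts = [
    Post("small_blog", timestamp=300, engagement=4),
    Post("viral_page", timestamp=100, engagement=9000),
    Post("friend", timestamp=200, engagement=12),
]

chrono_authors = [p.author for p in chronological_feed(posts)]
ranked_authors = [p.author for p in engagement_feed(posts)]

print(chrono_authors)  # ['small_blog', 'friend', 'viral_page']
print(ranked_authors)  # ['viral_page', 'friend', 'small_blog']
```

In the chronological feed the newest post leads even though almost nobody has reacted to it. In the engagement-ranked feed the same post drops to the bottom, which is the loss of a "level playing field" described above.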

This more democratic model fostered open discourse and debate, with social media sites like Twitter operating as a digital ‘town square’. Users often encountered opposing viewpoints more readily, leading to potentially messy but necessary conversations.

By contrast, algorithmic echo chambers can create a sense of false consensus, where users only see information that confirms their existing beliefs and rarely encounter challenging viewpoints.

When users do see or interact with people from the other side of the political spectrum, only the most extreme and controversial viewpoints tend to break through, because these drive user engagement.

4. Algorithmic Radicalization

Algorithmic radicalization is the process wherein algorithms on social media sites like Facebook and YouTube steer users towards progressively more extreme content over time, potentially leading them to develop radicalized extremist political views.

Ultimately, algorithms are built with the end goal of providing content to the user that keeps them on the site for as long as possible, thus seeing more ads and driving more revenue.

This often means serving users content that evokes strong emotions, which can include extreme or sensational content. As users engage with extreme content, algorithms suggest more extreme content in the future. This can lead users down a "rabbit hole" where they are increasingly isolated from mainstream viewpoints and exposed to more and more radical ideas.
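The "rabbit hole" feedback loop can be illustrated with a toy simulation. This is a sketch under a strong assumption, not a model of any real recommender: content is scored on a made-up "extremity" scale from 0 to 10, and predicted engagement is assumed to peak for items one step more extreme than whatever the user last engaged with.

```python
def recommend(user_level, catalog):
    """Return the catalog item with the highest predicted engagement.

    Toy assumption: engagement is highest for content one step more
    extreme than what the user last engaged with.
    """
    def predicted_engagement(item_level):
        return -abs(item_level - (user_level + 1))

    return max(catalog, key=predicted_engagement)


def simulate(steps, start_level=0, catalog=range(0, 11)):
    """Follow the recommendations for a number of steps and record each level."""
    level = start_level
    history = [level]
    for _ in range(steps):
        level = recommend(level, catalog)
        history.append(level)
    return history


history = simulate(5)
print(history)  # [0, 1, 2, 3, 4, 5]
```

No single recommendation is a large jump, yet the user ends up far from where they started. If the loop keeps running, the level settles at the most extreme item in the catalog.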

Dead internet theory argues that algorithmic curation has become so powerful that the open exchange of ideas on the internet is dead, replaced by whatever drives user engagement, including extremist content.

Is Dead Internet Theory true?

The dead internet theory isn't entirely true, but it raises important questions about the way the internet works today. The rise of AI-generated content blurs the line between human and machine-created information, making it harder to know if images are real. This is only getting more difficult as AI improves.

It's also true that algorithms prioritize content that keeps users engaged, regardless of its accuracy or value. As a result, users are primarily exposed to information that confirms their existing beliefs, which limits their exposure to new ideas and fosters polarization. This can lead to the spread of misinformation and the marginalization of diverse perspectives.

However, this does not mean that the internet is ‘dead’, at least not yet. Niche communities, independent platforms, and opportunities for open dialogue still exist on a smaller scale.

The internet is constantly evolving with new tools and platforms emerging all the time, challenging the dominance of any single entity or algorithm.

For example, the social media app ‘BeReal’ gained massive popularity in 2022. This new platform focused on capturing unedited, real-time moments for people who knew each other personally, and it disrupted the trend of meticulously curated public feeds often seen on other platforms.

The dead internet theory raises important questions about the role of technology in our online lives. While it might be an exaggeration to say the internet is completely "dead," the rise of bots and AI undoubtedly challenges our perception of authenticity and necessitates critical thinking when navigating the online world.
