A distant, dystopian future...

That is the universe that enthrals viewers of Charlie Brooker’s Black Mirror. But as technological innovation accelerates, Brooker’s fictional world is inching closer to the realm of reality.

The popular Netflix series, which first aired in 2011, challenges the way the world views technology through stories showing the unsettling nature of technological advancements.

Each episode portrays a seemingly remote future in which gadgets and technologies destroy the lives of its characters. But Black Mirror’s sinister stories of tomorrow are inching uncomfortably close to the world of today.

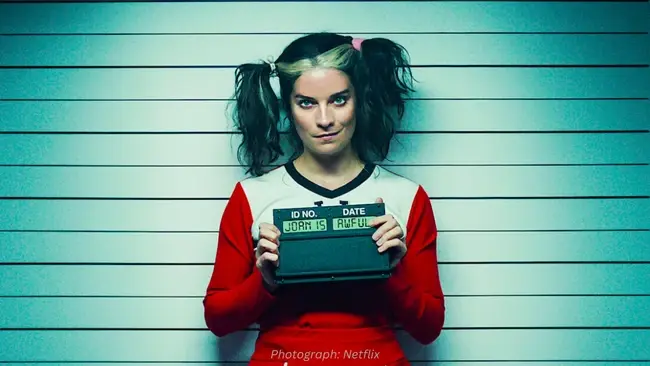

An episode from the series’ most recent season, dubbed ‘Joan is Awful’, has recently made headlines for appearing to have predicted the impact of generative AI on the film industry.

The plot involves actors being replaced by AI-generated digital replicas of themselves – a scenario that has recently fuelled strikes in Hollywood as studios explore using AI replicas in place of human actors.

The Alliance of Motion Picture and Television Producers (AMPTP) recently put forward a “groundbreaking AI proposal,” which included a clause requiring consent from actors for the “creation and use of digital replicas or for digital alteration of a performance.”

The proposal shook Hollywood. Duncan Crabtree-Ireland, the chief negotiator for the SAG-AFTRA union, complained that the move would involve actors having their faces “scanned for the rest of eternity, in any project they want, with no consent” in return for one day’s pay.

And it’s not just actors who are concerned about the rise of generative AI in the film industry. Similar concerns about the unregulated use of AI were raised by the Writers Guild of America when it began a strike of its own in May.

In a proposal, the union called on the AMPTP to “regulate the use of artificial intelligence on MBA-covered projects,” meaning that “AI can’t write or rewrite literary material; can’t be used as source material; and MBA-covered material can’t be used to train AI.”

But the AMPTP rejected their proposal, instead suggesting that annual meetings should be held to “discuss advancements in technology.”

Joan is a legal minefield

From a legal standpoint, the case of Joan is Awful appears pretty watertight in the Black Mirror episode. But in reality, the usage of generative AI to create content still poses the important legal question of ownership.

It is a question that has no robust answer by way of current IP laws. Patent law generally considers the inventor as the first owner of the invention, and lawmakers are struggling to understand how to position laws that protect copyrighted work while fostering innovation.

This has led to a range of legal challenges against AI companies in recent months. US comedian Sarah Silverman, along with two other authors, recently sued ChatGPT creator OpenAI for allegedly scraping their work from illegal websites after they discovered the chatbot was regurgitating summaries of their content.

Meanwhile, in January, online image repository Getty Images filed a lawsuit against Stability AI, the company behind the text-to-image generator Stable Diffusion, for allegedly stealing millions of copyright-protected images to train the tool.

With these generative AI tools, a human writes the initial prompt, but the AI tool creates the output.

An AI may also be used to prompt other AI tools, so AI can act as both the prompter and the creator. Other parties also need to be considered, including the developers of the AI tool and the owners of the data that make up the dataset used to train it.

The latter was a key component of the reasoning behind Italy’s ban of ChatGPT in April. Italy banned ChatGPT for all users accessing the platform from an Italian IP address due to four key points of contention.

Two of these were claims by the Italian data protection authority that OpenAI did not properly inform users that it had collected personal data, and that ChatGPT did not require users to verify their age, even though the content that ChatGPT can generate is at times intended for mature audiences.

OpenAI addressed these points by making its privacy policy more accessible to people before they register with ChatGPT, as well as rolling out a new tool to verify the age of users.

But this could well be a sign of things to come. As AI becomes more advanced, and the content it can generate more sophisticated, the approach taken by the Garante, Italy’s data protection authority, should be adopted by all data protection agencies to ensure that personal data used to train such algorithms cannot be misused – and to prevent a Black Mirror-style AI and GDPR nightmare.

The Black Mirror prophecies

Of course, it’s not just Joan is Awful that has got people talking about Black Mirror and its increasing similarity to reality.

In the years since their release, several other episodes have also crossed from fiction into reality as technology has advanced.

An episode called ‘Nosedive’, for instance, explores a world where society is ruled by an online ranking system and people rate each other based on interactions.

These ratings become a form of currency that shapes people’s socioeconomic status: higher-ranked people get discounts on apartments, while those ranked poorly can be denied medical treatment.

While a system like this may seem unlikely, the truth is that a very similar system is already here and is used across multiple regions in China.

China’s Social Credit System ranks people based on their “trustworthiness,” with individuals and businesses with low scores becoming ineligible for government assistance programs like subsidies and loans.

In another episode called ‘Be Right Back’, a grieving woman buys a robot that is able to look and sound like her recently deceased boyfriend using data scraped from his social media accounts, text messages and work chats.

Although this may seem far off, similar technologies have already been developed. In 2017, Eugenia Kuyda created the Roman bot – an AI program built by feeding text messages written by her late best friend into a neural network.

This gave the bot his vocabulary and speech patterns, allowing her to communicate with him from beyond the grave.

And that was six years ago. Since the launch of generative AI systems like ChatGPT – which are able to replicate human language with almost perfect accuracy – a more sophisticated version of the Black Mirror tech could be right around the corner.
