Everyone wants smarter automation, sharper forecasts, and faster AI-driven decisions. Fair enough. But a lot of organisations are still shaky on a more basic question: what actually happened across the business, and can we trust the answer?

That matters more than it sounds. You can’t build reliable prediction on top of muddled reporting. You can’t hand decision-making to AI when the underlying numbers are inconsistent, late, or scattered across systems. And you definitely can’t call your business data-driven if only a small slice of the company can interpret the data in front of them.

That’s why descriptive analytics still matters. Not as a legacy reporting habit, and not as the dull cousin of predictive analytics, but as the layer that gives leaders a shared view of reality. In an enterprise environment shaped by AI, embedded analytics, and rising pressure to justify every decision with evidence, that foundation is doing more work than ever.

What Is Descriptive Analytics?

At its core, descriptive analytics is about turning past and current business data into a clear picture of performance. It shows what happened, where it happened, and how it changed over time. That sounds simple, but in practice it covers the dashboards, reports, scorecards, summaries, and visualisations that many teams rely on every day.

Simple definition with enterprise context

A plain-English definition helps here. Descriptive analytics examines historical data so organisations can understand trends, patterns, and results. That usually means tracking performance against key performance indicators, spotting changes in behaviour, and giving teams a shared reference point for discussion.

Microsoft describes it in exactly those terms, and IBM places it at the start of the broader analytics chain, before predictive and prescriptive models come into play.
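To make the definition concrete, here is a minimal Python sketch of the most basic descriptive task: summarising a KPI series against a target and showing how it changed period over period. The revenue figures, the target, and the `describe_kpi` helper are hypothetical illustrations, not any vendor's reporting logic.

```python
# A minimal sketch of descriptive KPI reporting: what happened and how it changed.
# The monthly revenue figures and the target are hypothetical illustration data.
from dataclasses import dataclass
from typing import Optional


@dataclass
class KpiRow:
    period: str
    value: float
    change_pct: Optional[float]  # None for the first period (nothing to compare with)
    on_target: bool


def describe_kpi(monthly: dict[str, float], target: float) -> list[KpiRow]:
    """Summarise a KPI series period by period against a fixed target."""
    rows: list[KpiRow] = []
    previous: Optional[float] = None
    for period, value in monthly.items():  # dicts keep insertion (chronological) order
        change = None
        if previous is not None and previous != 0:
            change = round((value - previous) / previous * 100, 1)
        rows.append(KpiRow(period, value, change, value >= target))
        previous = value
    return rows


revenue = {"2025-01": 120_000.0, "2025-02": 126_000.0, "2025-03": 113_400.0}
for row in describe_kpi(revenue, target=118_000.0):
    print(row)
```

The output only describes: it records that March fell 10 per cent and missed target, but says nothing about why. That question belongs to diagnostic analytics.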

In an enterprise setting, descriptive analytics is less about “looking backwards” for its own sake and more about operational visibility.

- A finance team uses it to track margin shifts.

- A sales leader uses it to monitor pipeline movement.

- A security team uses it to review incident patterns.

- An operations team uses it to watch uptime, fulfilment delays, or service backlogs.

Different teams, same basic purpose: establish a reliable view of what the business is actually doing.

What descriptive analytics looks like in practice

Most enterprises are already using descriptive analytics, even if they don’t label it that way. Monthly revenue dashboards are descriptive analytics. Customer churn reports are descriptive analytics. Weekly infrastructure performance views, audit logs, service desk trend reports, and supplier performance summaries all sit in the same category.

That’s worth stating clearly because descriptive analytics is often framed as “basic.” It isn’t basic. It’s foundational. A sales report that shows win rates by region may look straightforward, but it still shapes territory planning, budget decisions, and hiring priorities.

A dashboard that shows repeat incident volume in one business unit may become the reason a CIO changes tooling, staffing, or governance. Microsoft’s own examples centre on reports that summarise sales, finance, and KPI performance, which is exactly where descriptive analytics proves its value.

Where Descriptive Analytics Fits In The Analytics Stack

Enterprise analytics is usually discussed in layers. That’s useful because it shows that descriptive analytics isn't competing with more advanced approaches. It supports them.

Descriptive vs diagnostic vs predictive vs prescriptive analytics

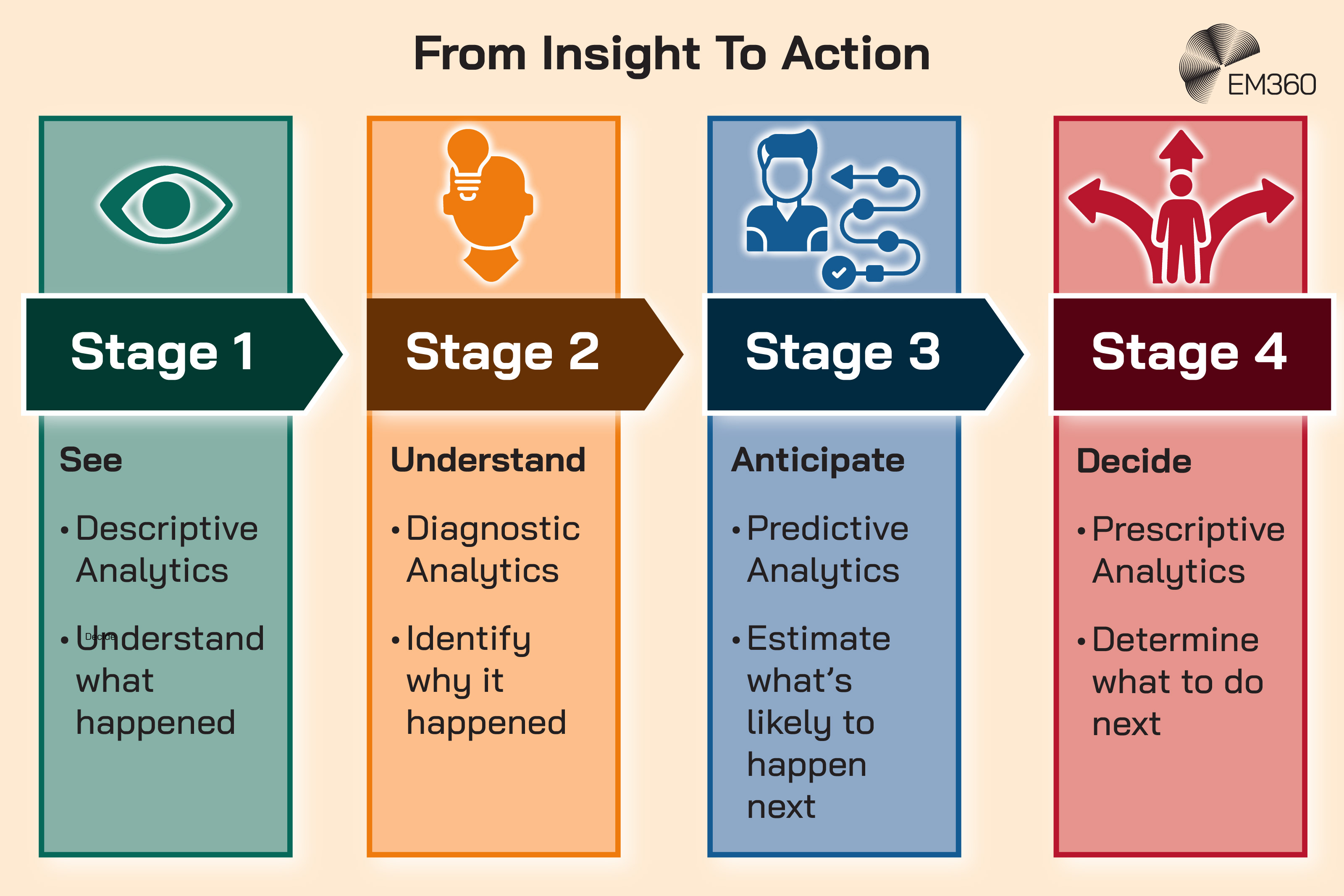

The easiest way to separate the four is by the question each one answers.

- Descriptive analytics answers: what happened?

- Diagnostic analytics answers: why did it happen?

- Predictive analytics answers: what's likely to happen next?

- Prescriptive analytics answers: what should we do about it?

Microsoft and IBM both draw this distinction in similar ways. Microsoft describes descriptive analytics as interpreting past data and KPIs to identify trends and patterns, while predictive analytics uses historical data and modelling techniques to forecast future outcomes.

IBM likewise describes descriptive analytics as summarising and visualising historical trends, then positions predictive analytics as the layer that forecasts future events or behaviours.

That progression matters because organisations often talk about predictive and prescriptive analytics as though they exist in a vacuum. They don’t. A weak descriptive layer leads to weak downstream insight. If you don’t have agreement on what happened, your models don’t have a stable base to learn from, and your recommendations won’t inspire much trust.

Why descriptive analytics is still the foundation

This is where a lot of AI conversations go slightly off the rails. There's a tendency to treat reporting as old-fashioned and prediction as strategic. The reality is messier. Predictive models and AI assistants can move quickly, but they still depend on clean definitions, trusted data, and coherent reporting structures.

Recent research makes that point pretty bluntly. BARC’s Data, BI and Analytics Trend Monitor found that practitioners place greater importance on fundamentals such as security, data quality, governance, data-driven culture, and data literacy than on more hyped technical trends.

In the same research, data security, data quality management, and data governance ranked as the top priorities.

That tells you something important. Mature organisations aren't abandoning descriptive analytics. They're trying to make it more trustworthy, more accessible, and more connected to decision-making because they know everything else depends on it.

Core Use Cases For Descriptive Analytics In The Enterprise

Descriptive analytics cuts across functions because every business unit needs a clear line of sight into performance. The use cases change, but the logic stays the same.

Operational performance and KPI tracking

This is the most obvious use case, and still one of the most important. Leaders use descriptive analytics to track utilisation, cycle time, uptime, defect rates, costs, and other operational KPIs. These views don’t just keep score. They help teams spot drift early, compare business units, and identify where a process is becoming unstable.

That's one reason descriptive analytics remains so central in infrastructure and operations environments. Before you discuss automation or optimisation, you need a dependable view of throughput, latency, downtime, backlog, or service health.
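One simple way to spot drift early is to compare each new reading with a trailing baseline. The sketch below assumes a basic rule (flag a value more than `k` standard deviations from the mean of the previous `window` readings); the backlog numbers, `window`, and `k` are hypothetical, and production monitoring tools typically use more robust methods.

```python
# A minimal sketch of spotting KPI drift early, assuming a simple rule:
# flag a reading more than `k` standard deviations away from the mean of the
# preceding `window` readings. Data and thresholds are hypothetical.
from statistics import mean, stdev


def flag_drift(readings: list[float], window: int = 5, k: float = 3.0) -> list[int]:
    """Return indexes of readings that deviate sharply from their trailing baseline."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: any change at all counts as drift.
            if readings[i] != mu:
                flagged.append(i)
        elif abs(readings[i] - mu) > k * sigma:
            flagged.append(i)
    return flagged


# Hypothetical daily ticket backlog for one service team.
backlog = [40, 42, 39, 41, 40, 43, 41, 70, 44, 42]
print(flag_drift(backlog))
```

With this data only the spike at index 7 is flagged. One design caveat: once a spike enters the trailing window it inflates the baseline, so the rule becomes less sensitive for the next few readings; a median-based baseline is one common alternative.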

Customer and revenue insights

Commercial teams rely on descriptive analytics just as heavily. Sales dashboards, churn reports, campaign performance summaries, and customer support trend views all help explain how the business is performing right now.

Even simple descriptive views can be strategically important. A report showing declining renewal rates in a segment may trigger a pricing review. A dashboard showing slower deal progression in one market may point to a positioning problem. A trend line showing repeated customer issues in one product area may shift product and support priorities.

None of that is futuristic, but it is still deeply valuable.
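The renewal-rate example above shows how little machinery a useful descriptive view needs. This minimal sketch computes the renewal rate per segment for two periods and lists the segments that dropped by at least a chosen number of percentage points. The segment names, counts, and the 5-point threshold are hypothetical.

```python
# A minimal sketch of a descriptive renewal view: renewal rate per segment,
# compared with the prior period. Segment names and counts are hypothetical.


def renewal_rates(renewed: dict[str, int], due: dict[str, int]) -> dict[str, float]:
    """Renewal rate per segment = renewals won / renewals due, as a percentage."""
    return {seg: round(renewed.get(seg, 0) / n * 100, 1) for seg, n in due.items() if n > 0}


def declining_segments(prior: dict[str, float], current: dict[str, float],
                       min_drop_pts: float = 5.0) -> list[str]:
    """Segments whose rate fell by at least `min_drop_pts` percentage points."""
    return sorted(
        seg for seg, rate in current.items()
        if seg in prior and prior[seg] - rate >= min_drop_pts
    )


due = {"SMB": 200, "Mid-market": 100, "Enterprise": 50}
q1 = renewal_rates({"SMB": 170, "Mid-market": 88, "Enterprise": 47}, due)
q2 = renewal_rates({"SMB": 150, "Mid-market": 90, "Enterprise": 46}, due)
print(q1, q2)
print(declining_segments(q1, q2))
```

Here only SMB is flagged (85 per cent down to 75 per cent), which is the kind of signal that might prompt the pricing review described above.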

Risk, compliance, and security monitoring

Some of the most practical descriptive analytics use cases sit in risk and control functions. Audit trails, access reports, policy exception dashboards, incident summaries, and security event trends all fall into this category.

These aren't glamorous outputs, but they matter in real organisations. They give compliance, security, and governance teams a working picture of exposure. They also create a shared record that other leaders can act on.

Before you explain why incidents are rising or forecast where the next one may land, you need a reliable view of the current pattern.

How Descriptive Analytics Is Evolving With AI And Embedded BI

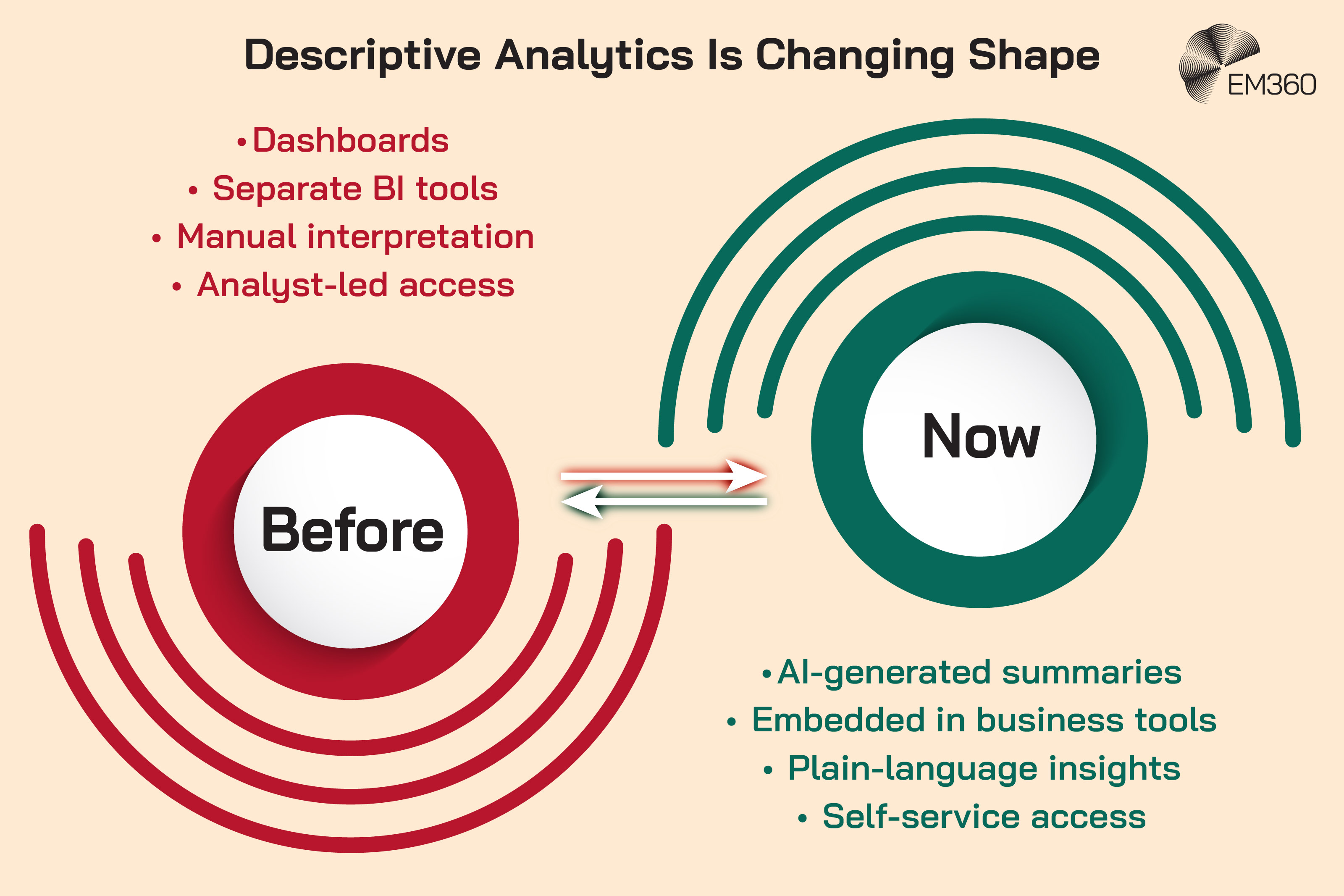

Descriptive analytics isn't standing still. What is changing is the way people access it, interpret it, and act on it. The shift is less about replacing dashboards and more about making them easier to use, easier to understand, and harder to ignore.

From dashboards to AI-generated insights

One clear trend is the rise of AI-assisted summaries inside analytics environments. Oracle’s 25C sales update is a straightforward example. It adds AI-powered descriptive analytics to dashboard visualisations, including automated analysis, anomaly and pattern detection, and concise narrative summaries of what the data shows.

Oracle is also clear about the boundary: this feature focuses on descriptive analytics and doesn't yet move into diagnostic or prescriptive guidance.

That distinction is useful because it shows where the market is heading. Descriptive analytics is becoming more conversational and more readable. Instead of expecting every user to interpret charts unaided, vendors are increasingly generating plain-language summaries of trends, anomalies, and shifts.

That lowers the effort needed to understand what changed, especially for people who aren't analysts first.

A 2025 global survey from Strategy (the company formerly known as MicroStrategy) reinforces the same direction. It found that 43 per cent of organisations are already using AI-powered analytics in production, while 56 per cent cite improved decision-making as the top goal.

At the same time, the top technical concern is inaccurate or inconsistent AI-generated answers, and compliance has overtaken cost as the main adoption challenge. So yes, descriptive analytics is becoming more AI-assisted. But the pressure to keep it accurate and governed is rising with it.
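To show what a descriptive-only narrative looks like, here is a deliberately simple, rule-based Python sketch that turns a KPI series into a sentence. It is not how Oracle or any other vendor generates its summaries; the `narrate` function and the data are hypothetical. It stays on the descriptive side of the boundary Oracle draws: it reports what changed, not why.

```python
# A deliberately simple, rule-based narrative for a KPI series. This is NOT how
# Oracle or any other vendor builds its AI summaries; it only illustrates a
# plain-language description of what changed, with no claim about why.


def narrate(metric: str, series: dict[str, float]) -> str:
    """Describe the latest movement and the largest swing in a KPI series."""
    periods = list(series)  # dicts keep insertion (chronological) order
    if len(periods) < 2:
        return f"Not enough history to describe {metric}."
    # Percentage change for each period versus the one before it (skipping zero bases).
    changes = {
        cur: (series[cur] - series[prev]) / series[prev] * 100
        for prev, cur in zip(periods, periods[1:])
        if series[prev] != 0
    }
    latest = periods[-1]
    sentence = f"{metric} was {series[latest]:,.0f} in {latest}"
    if latest in changes:
        direction = "up" if changes[latest] >= 0 else "down"
        sentence += f", {direction} {abs(changes[latest]):.1f}% on the previous period"
    sentence += "."
    if changes:
        biggest = max(changes, key=lambda p: abs(changes[p]))
        if biggest != latest:
            sentence += f" The largest swing was in {biggest} ({changes[biggest]:+.1f}%)."
    return sentence


print(narrate("Revenue", {"Jan": 120_000, "Feb": 150_000, "Mar": 144_000}))
```

Because the sentence is generated from the same numbers as the chart, it can only be as accurate as those numbers, which is exactly the accuracy and governance concern the survey raises.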

Analytics in the flow of work

Another major shift is where analytics shows up. For years, a lot of business intelligence lived in separate tools that only some people used consistently. That model is wearing thin. The direction of travel now is embedded analytics, or putting insight directly into the systems where work already happens.

Salesforce’s 2025 survey of 552 senior US business decision-makers points to why. Ninety per cent said direct access to the data they need inside the programs and apps they use most would help them perform better, and 86 per cent said they would use data more often if that were the case.

That matters because it changes the role of descriptive analytics. It's no longer just a report someone checks after the fact. It increasingly becomes a live layer inside CRM, ERP, collaboration, service, and planning environments. When that happens well, descriptive analytics stops being a specialist activity and becomes part of how people work.

Natural language querying and self-service analytics

The other obvious shift is how users interact with data. More people now expect to ask questions in plain language instead of learning the structure of an analytics platform first.

Salesforce found that 85 per cent of surveyed business leaders think they would be better at their jobs if they could ask their data questions in natural language, while 72 per cent feel their career trajectory depends on how data-driven they are.

That expectation puts descriptive analytics in a different position. It's no longer enough to publish a dashboard and hope people interpret it correctly. Teams increasingly need analytics experiences that support self-service access without sacrificing consistency.

That's a much harder design problem than it sounds, because ease of access is only useful when the underlying data and definitions are stable.

The Data Quality And Governance Challenge Behind Descriptive Analytics

This is the part that often gets skipped because it's less exciting than AI features. It's also the part that decides whether descriptive analytics is genuinely useful or just visually tidy.

Why data quality defines the value of descriptive analytics

If the numbers are wrong, late, incomplete, or inconsistent across systems, descriptive analytics becomes a confidence problem. Leaders stop trusting what they see. Teams argue over definitions. Reports multiply because nobody believes the first one.
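Several of those failure modes (incomplete, late, inconsistent) can be checked mechanically before a report is published. A minimal sketch in Python, assuming a hypothetical order feed with `order_id`, `amount`, `region` and `loaded_at` fields and a 24-hour freshness window; real data-quality tooling covers far more rules than these three.

```python
# A minimal sketch of pre-publication data-quality checks for a reporting feed.
# Field names (order_id, amount, region, loaded_at) and the 24-hour freshness
# window are hypothetical assumptions, not a standard.
from datetime import datetime, timedelta, timezone

REQUIRED = ("order_id", "amount", "region")


def quality_issues(rows: list[dict], now: datetime,
                   max_age: timedelta = timedelta(hours=24)) -> list[str]:
    issues = []
    seen = {}  # order_id -> first amount reported
    for i, row in enumerate(rows):
        # Incomplete: required fields missing or empty.
        missing = [f for f in REQUIRED if row.get(f) in (None, "")]
        if missing:
            issues.append(f"row {i}: missing {', '.join(missing)}")
        # Late: the record was loaded longer ago than the freshness window.
        loaded = row.get("loaded_at")
        if loaded is not None and now - loaded > max_age:
            issues.append(f"row {i}: stale (loaded {loaded:%Y-%m-%d %H:%M})")
        # Inconsistent: the same order reported with different amounts.
        oid, amount = row.get("order_id"), row.get("amount")
        if oid and amount is not None:
            if oid in seen and seen[oid] != amount:
                issues.append(f"row {i}: order {oid} conflicts ({seen[oid]} vs {amount})")
            seen.setdefault(oid, amount)
    return issues


now = datetime(2025, 6, 2, 9, 0, tzinfo=timezone.utc)
fresh = now - timedelta(hours=2)
rows = [
    {"order_id": "A1", "amount": 500.0, "region": "EMEA", "loaded_at": fresh},
    {"order_id": "A2", "amount": None, "region": "APAC", "loaded_at": fresh},
    {"order_id": "A1", "amount": 550.0, "region": "EMEA", "loaded_at": fresh},
    {"order_id": "A3", "amount": 90.0, "region": "AMER", "loaded_at": now - timedelta(days=3)},
]
for issue in quality_issues(rows, now):
    print(issue)
```

"Wrong" is the hardest failure to catch automatically; the conflicting-amount check only catches the cases where two records disagree.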

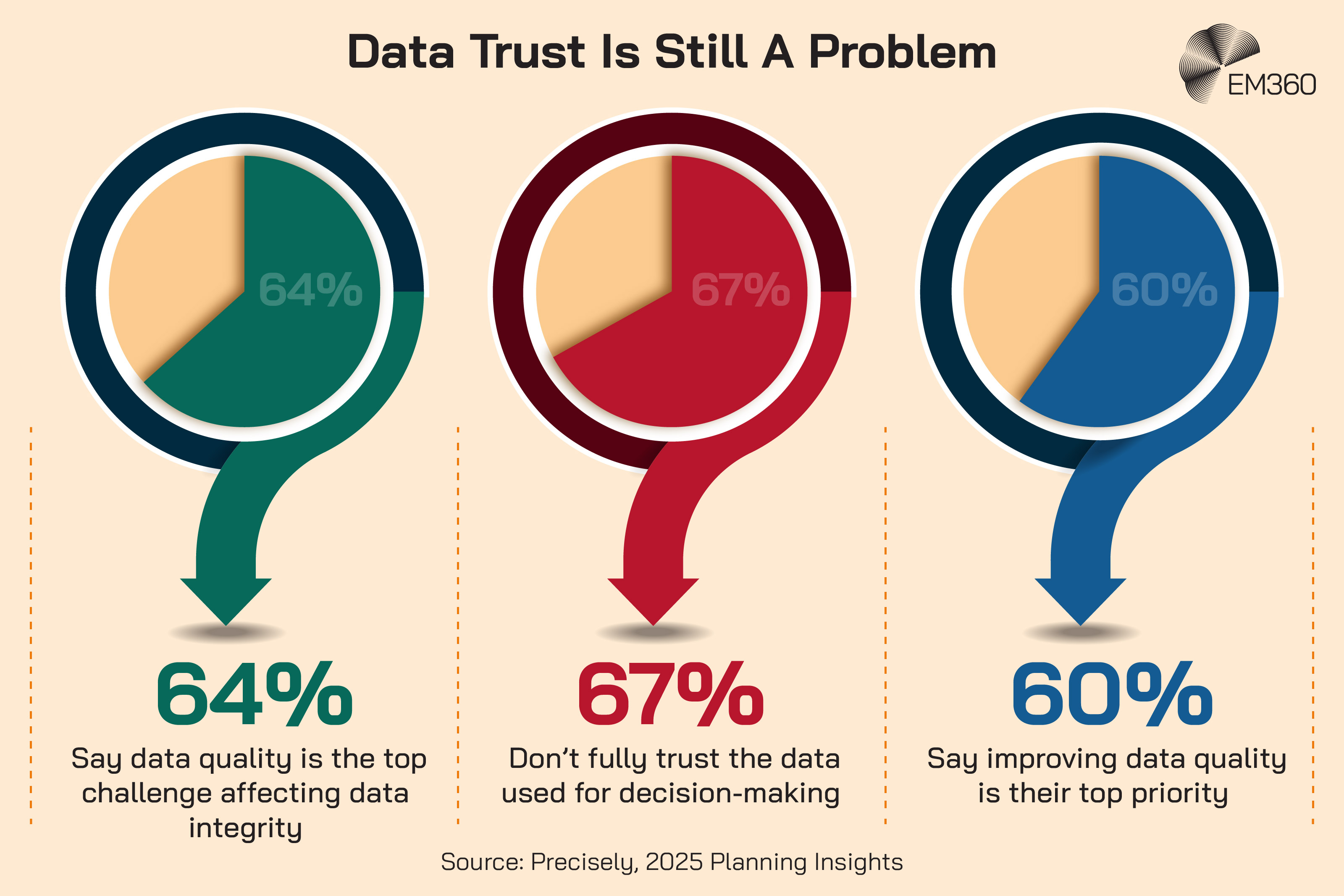

Precisely’s 2025 Planning Insights puts real numbers behind that. In its research with more than 550 data and analytics professionals, 64 per cent said data quality is the top challenge affecting data integrity, 67 per cent said they don't completely trust the data used for decision-making, and 60 per cent said data quality is their top priority.

That's not a niche technical issue. It's a strategic problem. Descriptive analytics is supposed to create a shared view of reality. If teams don’t trust the inputs, it does the opposite.

Governance, compliance, and risk considerations

Good descriptive analytics also depends on governance. Someone has to define metrics, manage lineage, control access, and keep reporting logic consistent over time. Otherwise the same revenue number means different things in different dashboards, and the business ends up making decisions with false confidence.

BARC’s survey shows why governance has moved back to the centre. Practitioners are placing more weight on governance, data quality, and data literacy than on trendier ideas because those basics determine whether analytics can scale responsibly.

This is especially important in regulated industries and enterprise environments with complex architecture. The more systems feed the reporting layer, the more discipline is needed to keep the story coherent.

The risk of scaling bad data with AI

AI doesn't remove this problem. In many cases, it amplifies it.

McKinsey’s 2024 global AI survey found that 65 per cent of respondents said their organisations were regularly using generative AI, and 44 per cent said they had already experienced at least one negative consequence from its use. The most commonly reported consequence was inaccuracy.

That matters for descriptive analytics because AI summaries, natural language querying, and automated insights all sit on top of existing data structures. If those structures are messy, you don't get trustworthy explanation at speed. You get confusion at speed.

Common Challenges And Limitations Of Descriptive Analytics

Descriptive analytics is essential, but it does have limits. Treating it as the full answer creates its own problems.

Limited forward-looking insight

The first limit is obvious. Descriptive analytics explains what happened. It doesn't tell you what's likely to happen next, and it doesn't recommend the best course of action. That's why organisations layer diagnostic, predictive, and prescriptive methods on top.

There's nothing wrong with that limit, so long as it's recognised. Trouble starts when leaders expect backward-looking reports to answer forward-looking questions on their own.

Over-reliance on static reporting

Another common problem is static reporting that records activity without helping anyone act on it. Dashboards can become wallpaper very quickly. Everyone has access. Few people change anything because of what they see.

This is one reason embedded analytics and AI-generated summaries are getting attention. They're attempts to make descriptive analytics more usable, not just more available.

Data silos and fragmented visibility

The third problem is fragmentation. Enterprise data is often spread across platforms, teams, and ownership models. That makes it difficult to create a single view of performance, particularly when different systems use different rules, refresh cycles, or definitions.

Salesforce’s survey speaks to the business impact of that fragmentation. It found that business leaders are under rising pressure to use data, but many still don't feel equipped to find, analyse, and interpret what they need.

So the challenge isn't just “having dashboards.” It's creating a reporting environment that people can actually trust and use.
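A practical first step against fragmented visibility is to reconcile the "same" figure across systems before it reaches a dashboard. This sketch assumes two hypothetical sources (a CRM and an ERP) reporting revenue by region, and a 1 per cent tolerance; it flags regions that are missing from one side or that disagree by more than the tolerance.

```python
# A minimal sketch of reconciling the "same" figure from two siloed systems.
# The system names (CRM, ERP), regions and 1% tolerance are hypothetical.


def reconcile(crm: dict[str, float], erp: dict[str, float],
              tolerance: float = 0.01) -> dict[str, str]:
    """Compare per-region totals; report gaps larger than `tolerance` (a fraction)."""
    report = {}
    for region in sorted(set(crm) | set(erp)):
        if region not in crm or region not in erp:
            report[region] = "missing in " + ("CRM" if region not in crm else "ERP")
            continue
        a, b = crm[region], erp[region]
        scale = max(abs(a), abs(b))
        gap = abs(a - b) / scale if scale else 0.0  # relative gap; 0 when both are zero
        report[region] = "ok" if gap <= tolerance else f"mismatch ({gap:.1%})"
    return report


print(reconcile({"AMER": 1_000_000, "EMEA": 800_000, "APAC": 300_000},
                {"AMER": 1_004_000, "EMEA": 720_000, "LATAM": 90_000}))
```

The useful output is rarely the "ok" rows. A 10 per cent gap in EMEA, or a region that only one system knows about, is exactly the kind of definitional disagreement that makes leaders stop trusting the dashboard.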

How To Get More Value From Descriptive Analytics

For most enterprise teams, the opportunity isn't to replace descriptive analytics. It's to tighten it up, connect it to decisions, and make it more usable across the business.

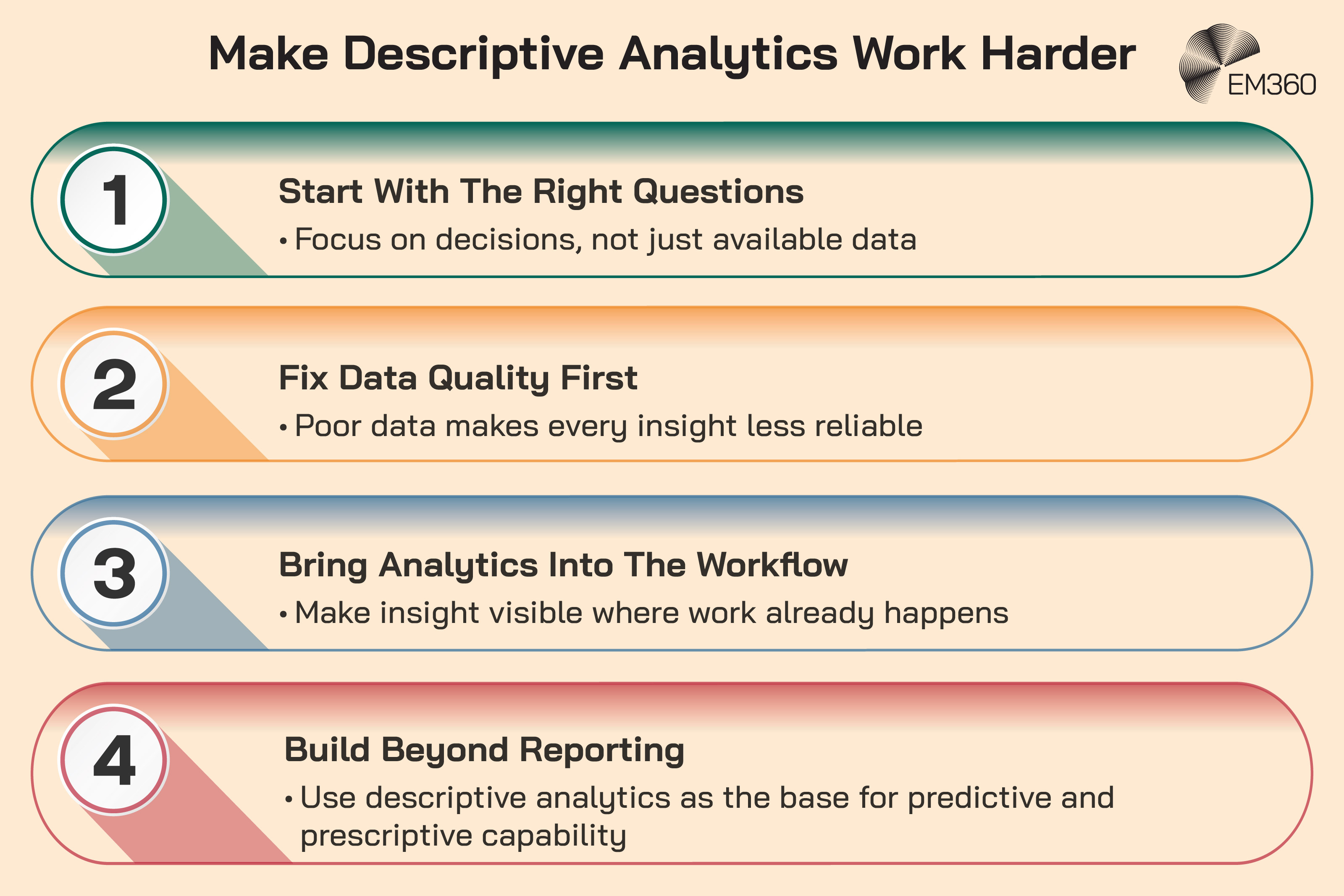

Focus on business questions, not just data collection

A surprising amount of reporting exists because data was available, not because a business question needed answering. Better descriptive analytics starts with sharper questions.

- Which metrics signal risk?

- Which trends matter to margin, resilience, customer retention, or service quality?

- Which patterns need explanation or action?

That shift sounds small, but it changes the purpose of the reporting layer. It moves analytics from documentation towards decision support.

Invest in data quality before advanced analytics

This is the least glamorous recommendation and probably the most useful. If data quality is poor, advanced analytics won't rescue the situation. It’ll just make the gaps more expensive.

The research from Precisely and BARC makes that point clearly. Teams still struggle with trust, consistency, and governance, and they know those issues affect every analytics layer above them.

Integrate analytics into everyday workflows

People are more likely to use analytics when it appears where they already work. That means pulling descriptive insight into operational tools, not expecting everyone to visit a separate BI environment out of discipline.

The demand is already there. Salesforce’s survey found strong support for analytics embedded directly into work applications and for more natural interaction with data.

Build toward predictive and prescriptive maturity

Descriptive analytics shouldn’t be the ceiling. It should be the starting point for more advanced decision-making. Once the business has trusted definitions, governed data, and strong reporting discipline, it becomes much easier to add diagnostic, predictive, and prescriptive layers with confidence.

IBM makes that sequencing explicit. Organisations generally move through descriptive understanding before applying predictive and prescriptive methods.

Final Thoughts: Descriptive Analytics Is Only As Powerful As The Decisions It Enables

In an AI-driven enterprise, descriptive analytics isn't the old part of the stack that smarter tools will eventually outgrow. It's the part that decides whether smarter tools deserve to be trusted in the first place.

That brings us back to the opening tension. Everyone wants faster answers and more intelligent systems. Fair enough. But speed isn't the same as clarity, and intelligence isn't the same as shared understanding.

Organisations that treat descriptive analytics as a strategic capability, grounded in trusted data, usable workflows, and clear governance, will be in a much stronger position to get real value from AI instead of just more noise.

That's also where the bigger opportunity sits. The companies that win here won't be the ones with the flashiest dashboards. They’ll be the ones that know what happened, trust what they're seeing, and can move from that reality with confidence.

For leaders working through that shift, EM360Tech’s wider coverage of data strategy, analytics maturity, and AI adoption is built to help keep those decisions grounded.
