Most enterprises don’t have a data shortage. There are dashboards everywhere. Alerts firing constantly. Customer feedback coming in through forms, calls, chats, reviews, behavioural analytics, and support tickets. Security teams are watching logs, endpoints, identities, and networks.

Operations teams are tracking uptime, latency, dependencies, and cost. On paper, it should be easier than ever to see what’s coming. And yet organisations still miss the things that matter early enough to do anything useful with them. Because what they have is a meaning shortage.

That’s the tension. More signals haven’t automatically led to better decisions because many systems still reward volume over judgment. They collect. They categorise. They report. But they don’t always help people distinguish between what's merely happening and what's starting to matter.

That’s where AI signal detection is changing the picture. Not because artificial intelligence suddenly makes enterprise systems clairvoyant, and definitely not because it removes the need for human judgment. But because it can spot subtle patterns across noisy, fragmented data much faster than traditional approaches can.

The real value appears when those weak signals are turned into actionable intelligence that a team can trust enough to act on.

What Weak Signals Actually Look Like In Enterprise Systems

A weak signal is an early indicator that something may be shifting, but not loudly enough for a conventional system to treat it as urgent.

That matters because enterprises are usually very good at recognising strong signals. A major outage is a strong signal. A confirmed breach is a strong signal. A flood of customer complaints is a strong signal. Those things arrive with enough force that most teams can’t ignore them even if they want to.

Weak signals are different. They tend to arrive quietly. A customer doesn’t complain, but their tone changes across a few service interactions. A login pattern looks slightly off, though not dramatic enough to trigger a fixed rule. A service becomes just a little slower under certain conditions, but only for certain user journeys.

A market doesn’t collapse, but the language buyers use starts shifting in ways that suggest priorities are moving. None of those moments means much on its own. Together, they can mean everything.

That’s the point people often miss. Weak signals rarely stand alone. Their meaning comes from context, timing, and correlation. One support chat with a mildly frustrated tone may mean very little. The same tone appearing alongside reduced engagement, slower response to renewal outreach, and lower usage starts to look a lot more like churn in its early stages.
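To make the churn example concrete, here is a minimal sketch of how individually weak signals might be combined into one score. Everything in it is an assumption for illustration: the `AccountSignals` fields, the equal weights, the 0.3 floor, and the co-occurrence boost are hypothetical and not drawn from any real product or dataset.

```python
from dataclasses import dataclass, asdict


@dataclass
class AccountSignals:
    # Hypothetical inputs, each normalised from 0.0 (no concern) to 1.0 (strong concern).
    tone_decline: float
    engagement_drop: float
    renewal_response_delay: float
    usage_drop: float


# Illustrative equal weights; a real system would calibrate these against outcomes.
WEIGHTS = {
    "tone_decline": 0.25,
    "engagement_drop": 0.25,
    "renewal_response_delay": 0.25,
    "usage_drop": 0.25,
}


def churn_risk(signals: AccountSignals, co_occurrence_floor: float = 0.3) -> float:
    """Weighted sum of weak signals, boosted when several appear together."""
    values = asdict(signals)
    base = sum(WEIGHTS[name] * value for name, value in values.items())
    # Count how many signals are individually present at or above the floor.
    active = sum(1 for value in values.values() if value >= co_occurrence_floor)
    # Each additional co-occurring signal raises the score by 25 per cent.
    boost = 1.0 + 0.25 * max(0, active - 1)
    return min(1.0, base * boost)


isolated = churn_risk(AccountSignals(0.4, 0.0, 0.0, 0.0))
combined = churn_risk(AccountSignals(0.4, 0.4, 0.4, 0.4))
print(f"tone shift alone: {isolated:.2f}, all four together: {combined:.2f}")
```

The design choice worth noticing is the boost. The same mild tone shift scores low on its own and much higher once the other three signals sit alongside it, which is the "context, timing, and correlation" point expressed as arithmetic.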

The same logic applies in cybersecurity, infrastructure, compliance, and commercial strategy. What looks small in isolation can become obvious once the surrounding pattern is visible.

Why Traditional Analytics Miss What Matters

Traditional analytics aren't useless. They’re just built for a different job.

Most conventional systems rely on predefined rules, threshold alerts, and historical reporting. That makes them useful for tracking known conditions. If CPU usage crosses a certain line, raise an alert. If suspicious activity matches a known pattern, escalate it. If survey scores drop below a target, flag the trend. That’s fine as far as it goes.

The problem is that these systems are strongest when the organisation already knows what it’s looking for. They detect the expected, the measurable, and the historically legible. They’re much weaker when the risk or opportunity appears in a form that's ambiguous, low-confidence, or spread across multiple channels and moments.

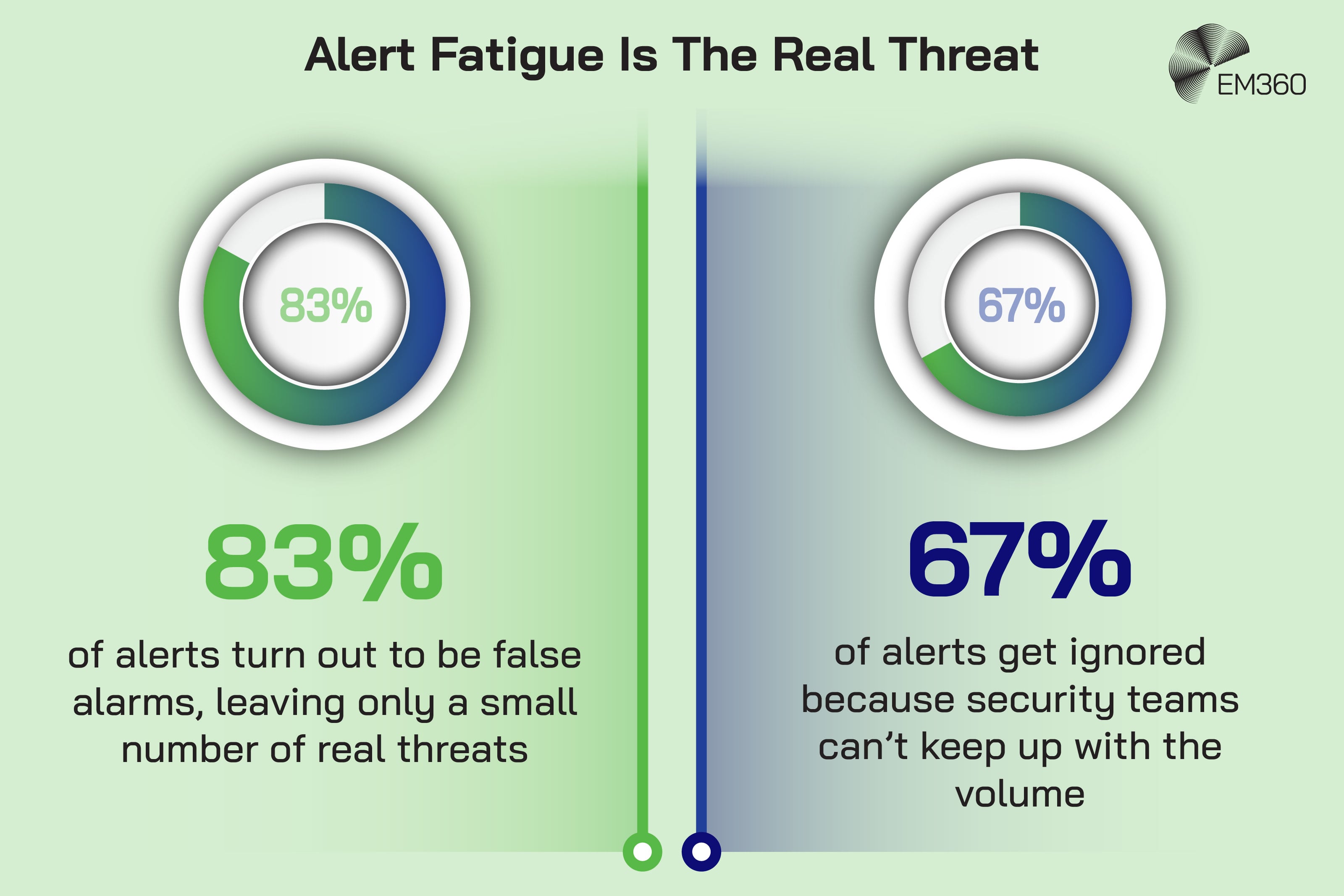

That creates a very familiar enterprise problem. Security teams drown in alert fatigue because systems produce too much noise and too little prioritisation. Experience teams end up with dashboards full of lagging indicators and surveys that tell them how customers felt after the damage was already done.

Operations teams see the failure once it is big enough to breach the threshold, not when the pattern first begins drifting. The issue isn't that organisations lack data. It’s that many of their systems still struggle to interpret it in context.

This is one reason the value gap around enterprise AI still exists. McKinsey’s 2025 global State of AI survey found that 88 per cent of respondents say their organisations regularly use AI in at least one business function, but only 39 per cent report EBIT impact at enterprise level. BCG tells a similar story from a different angle.

Three quarters of executives rank AI or generative AI as a top-three strategic priority for 2025, yet only 25 per cent report significant value. The gap isn't just about adoption. It's about whether organisations can turn raw signals into decisions that change outcomes.

How AI Identifies Weak Signals In Noisy Data

What AI does well here isn't magic. It's scale, pattern recognition, and correlation.

A human can recognise that something feels off. An experienced analyst, operations lead, or customer strategist often can too. But people struggle when that signal is spread across thousands of events, millions of interactions, or multiple systems that were never designed to speak to each other cleanly.

AI is useful because it can process those patterns far faster and across far more data than a human team realistically can. In plain terms, there are four capabilities that matter most.

First, AI can recognise patterns across large datasets. That means it can spot recurring combinations of behaviour that don’t look serious one event at a time, but do look meaningful in aggregate.

Second, it can handle anomaly detection without relying only on rigid thresholds. Instead of waiting for something to become obviously abnormal, modern systems can learn what normal looks like under varying conditions and then detect granular deviations from that baseline.

Dynatrace, for example, describes anomaly detection as a process of continuously learning baselines across applications, services, and infrastructure, factoring in variables like geolocation, browser type, operating system, bandwidth, and user actions.
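A rough sketch can show the difference between a fixed threshold and a learned baseline. This is not Dynatrace's method. It is a deliberately simple rolling z-score, and the `RollingBaseline` class, the window size, the z-score cut-off, and the 500 ms static threshold are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev

STATIC_THRESHOLD_MS = 500  # hypothetical fixed alert line


class RollingBaseline:
    """Learns what 'normal' looks like from recent values and flags deviations."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0, min_samples: int = 10):
        self.values = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        is_anomaly = False
        if len(self.values) >= self.min_samples:
            mu = mean(self.values)
            sigma = stdev(self.values)
            # With zero variance there is no usable baseline, so nothing is flagged.
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                is_anomaly = True
        # Only values judged normal update the baseline, so a spike does not shift it.
        if not is_anomaly:
            self.values.append(value)
        return is_anomaly


baseline = RollingBaseline()
for latency_ms in [100, 102, 101, 103, 99] * 4:
    baseline.observe(latency_ms)

reading = 130
print("static rule fires:", reading > STATIC_THRESHOLD_MS)
print("baseline flags it:", baseline.observe(reading))
```

A reading of 130 ms never troubles the static rule, but it stands well outside what this service normally does. To mirror the per-context baselines described above, one option would be to keep a separate `RollingBaseline` per combination of region, browser, or user action.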

Third, AI can correlate signals across time, entities, and channels. Datadog’s Cloud SIEM documentation shows this clearly in security. Its content anomaly detection analyses incoming logs for deviations from historical patterns, while its broader detection logic is built around finding subtle differences that might otherwise disappear into normal log volume.

That matters because many weak signals aren't single events. They're sequences.
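The idea of a weak signal as a sequence can be sketched in a few lines. The example below is a generic illustration and not Datadog's detection logic. The function name `correlate_weak_signals`, the event tuple layout, the signal names, and the one-hour window are all assumptions.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def correlate_weak_signals(events, window=timedelta(hours=1), min_distinct=3):
    """Flag entities showing several distinct weak signals within one time window.

    events: iterable of (timestamp, entity, signal_type) tuples.
    Returns a dict mapping each flagged entity to its sorted signal types.
    """
    by_entity = defaultdict(list)
    for timestamp, entity, signal_type in events:
        by_entity[entity].append((timestamp, signal_type))

    flagged = {}
    for entity, items in by_entity.items():
        items.sort()  # chronological order
        start = 0
        for end in range(len(items)):
            # Slide the window start forward until it spans at most `window`.
            while items[end][0] - items[start][0] > window:
                start += 1
            kinds = {kind for _, kind in items[start:end + 1]}
            if len(kinds) >= min_distinct:
                flagged[entity] = sorted(kinds)
                break
    return flagged


t0 = datetime(2025, 1, 1, 9, 0)
events = [
    (t0, "alice", "new_device"),
    (t0 + timedelta(minutes=20), "alice", "odd_hours_login"),
    (t0 + timedelta(minutes=40), "alice", "unusual_download"),
    (t0, "bob", "new_device"),
    (t0 + timedelta(hours=2), "bob", "odd_hours_login"),
    (t0 + timedelta(hours=5), "bob", "unusual_download"),
]
print(correlate_weak_signals(events))
```

Both users produce the same three events, none of which would trip a rule alone. Only the account where they cluster inside an hour is flagged, because the timing is part of the signal.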

Fourth, it can work with unstructured data through natural language processing. That means voice transcripts, chat logs, emails, call summaries, and written comments can become analysable at scale. This is why conversational intelligence has become such a strong use case.

It lets organisations pick up changes in sentiment, intent, friction, and demand that don't fit neatly into traditional reporting fields.

That doesn't mean AI understands meaning in a human way. It doesn’t. What it does is identify patterns that humans would struggle to find consistently at scale. The interpretation still matters. The governance still matters. But pattern recognition at this level is a real capability, not a marketing phrase.

The Real Shift From Detection To Actionable Intelligence

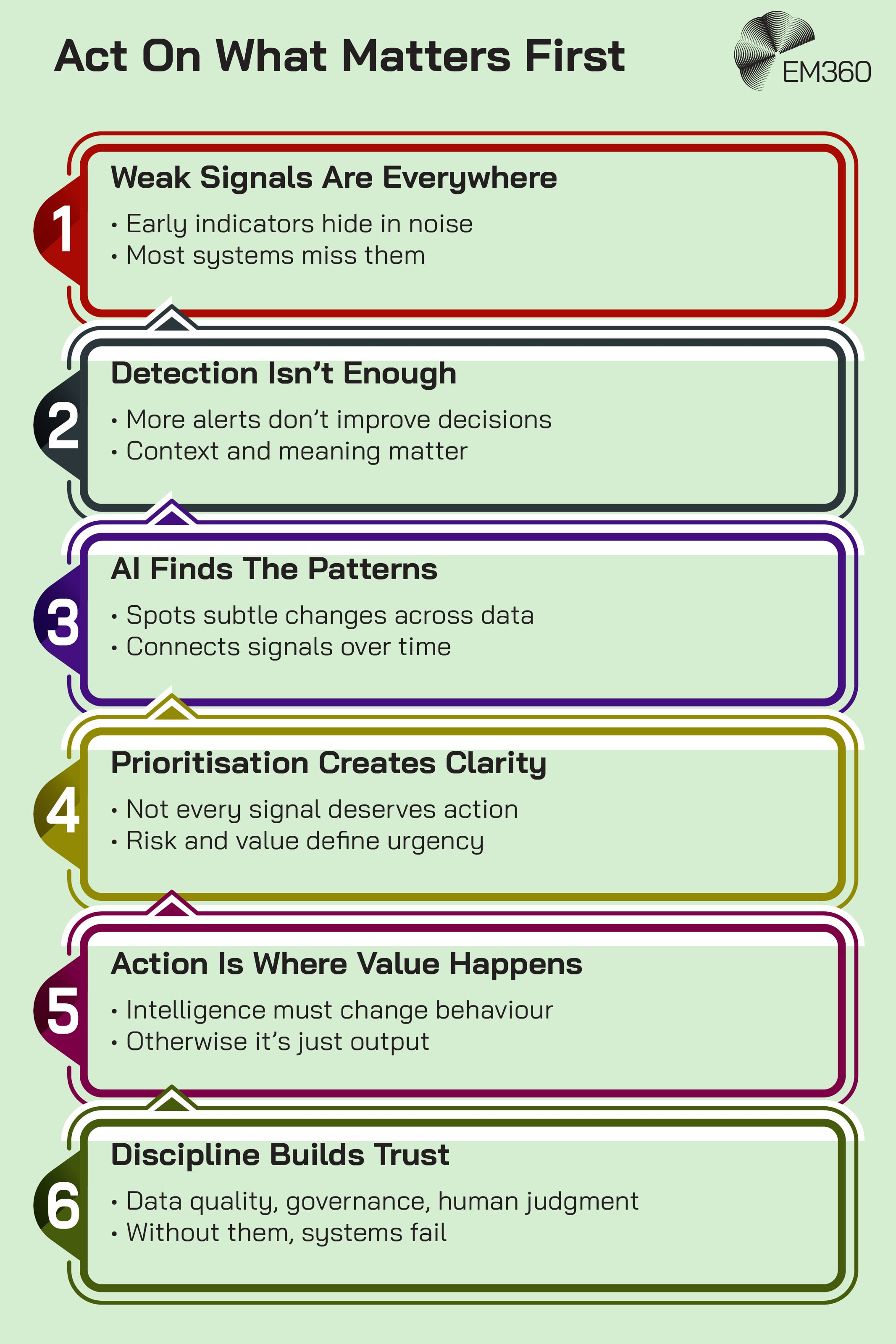

This is where the conversation needs to get more precise. Detection isn't the same thing as intelligence. And intelligence isn't the same thing as action.

Actionable intelligence is information that’s been prioritised, contextualised, and made usable enough to inform a decision or trigger a response. That distinction matters because many organisations still stop at the detection layer. They find the signal, put it on a dashboard, maybe add an alert, and assume the hard part is done. It usually isn’t.

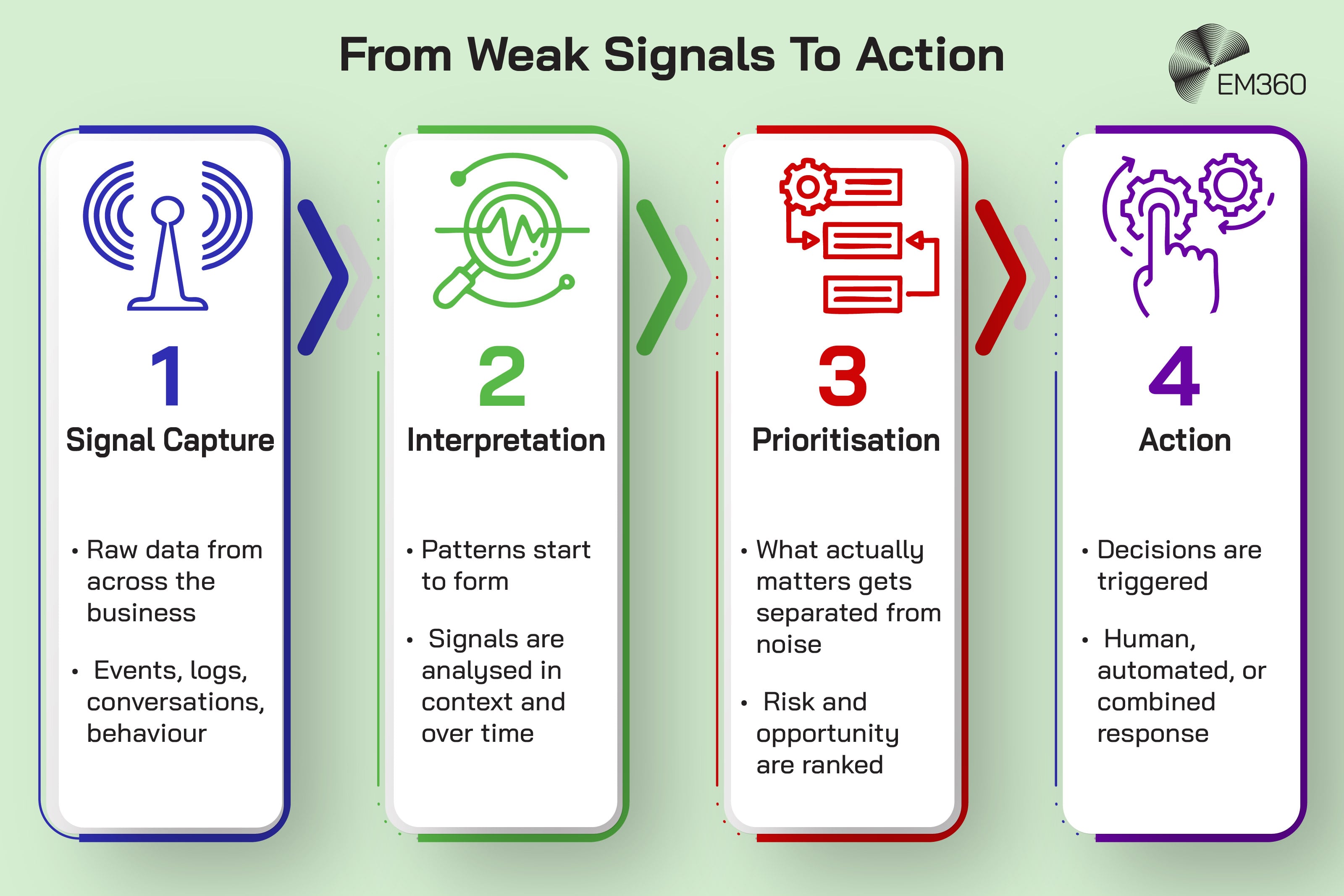

The move from weak signal to useful action tends to happen in four stages.

The first is signal capture. This is the raw data layer. Events, logs, transcripts, behaviours, transactions, usage patterns, and environmental telemetry all sit here.

The second is interpretation. This is where pattern recognition enters. The system begins asking whether the signal is isolated, whether it matches a known sequence, whether it is changing over time, and whether it connects to anything else that might raise or lower its significance.

The third is prioritisation. This is where a signal becomes strategically useful. A change in sentiment from a low-value customer segment doesn't deserve the same response as a change among at-risk enterprise accounts.

A minor anomaly in a low-impact system doesn't deserve the same urgency as unusual behaviour touching identities, production environments, or sensitive data.

The fourth is action. That action may be human, automated, or blended. It may mean escalating a case, triggering an investigation, prompting an agent with next best action, adjusting a workflow, or beginning remediation. What matters is that the signal changes behaviour.
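The four stages can be sketched as a small pipeline. This is a simplified illustration under stated assumptions, not a reference architecture: the `Signal` fields, the `IMPACT` weights, the corroboration formula, and the routing thresholds are all hypothetical, and the capture and interpretation stages are assumed to have already produced the `anomaly_score` and `related_signals` values.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    source: str            # capture: where the raw signal came from
    entity_class: str      # what kind of customer or system it touches
    anomaly_score: float   # interpretation: 0.0 to 1.0, assumed computed upstream
    related_signals: int   # interpretation: how many other signals corroborate it


# Prioritisation: hypothetical business impact by entity class.
IMPACT = {
    "enterprise_account": 1.0,
    "production_identity": 1.0,
    "low_value_segment": 0.3,
    "test_system": 0.2,
}


def prioritise(signal: Signal) -> float:
    """Scale the anomaly score by corroboration and by business impact."""
    corroboration = min(1.0, 0.5 + 0.25 * signal.related_signals)
    return signal.anomaly_score * corroboration * IMPACT.get(signal.entity_class, 0.5)


def route(priority: float) -> str:
    """Action: map a priority to a response with a clear owner."""
    if priority >= 0.6:
        return "escalate_to_owner"
    if priority >= 0.3:
        return "queue_for_review"
    return "log_only"


for entity_class in ("enterprise_account", "low_value_segment"):
    signal = Signal("support_chat", entity_class, anomaly_score=0.8, related_signals=2)
    print(entity_class, "->", route(prioritise(signal)))
```

The same sentiment shift is escalated for an at-risk enterprise account and merely logged for a low-value segment. The routing step is where the signal changes behaviour, so a detection that ends in `log_only` for every case is a dashboard and not a decision.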

This is exactly where many enterprise AI programmes stall. They generate outputs, but don’t connect them to workflows, ownership, or decision rights. BCG’s warning is blunt but accurate here. It's not enough to invest in models if the surrounding operating model remains untouched.

That's why the value gap stays open. Intelligence only becomes valuable once it actually changes what the organisation does next.

Where This Is Already Delivering Value

The strongest case for this topic isn't theoretical. It's already visible across multiple enterprise domains.

Customer intelligence and experience

Customer experience is one of the clearest examples because the signals have always been there. The problem was getting them out of scattered conversations and behavioural traces before the organisation lost the customer.

Medallia’s 2026 State of Customer Experience work shows the strain clearly. While 66 per cent of CX practitioners believe experiences improved last year, only 17 per cent of consumers agree.

The same body of research points to a broader issue: over half of consumers believe companies should infer satisfaction from behaviours and signals, not surveys alone. That matters because surveys are declining in usefulness just as organisations need richer intelligence.

This is where AI-supported conversational intelligence becomes valuable. Medallia says teams that use conversational intelligence data are 63 per cent more likely to say they’re exceeding their goals than teams that don't, yet only 30 per cent use that data frequently.

Its 2025 research also found that 64 per cent of CX practitioners plan to increase their use of conversational intelligence, rising to 73 per cent among fast-growing brands. That's a very practical example of weak signals becoming useful.

Tone shifts, unresolved friction, repeated phrases, silent dissatisfaction, and emerging intent all become easier to identify before they show up as churn or lost revenue.

Cybersecurity and threat detection

Security teams have lived with the signal problem for years. The difference now is that attackers are moving faster and defenders need better ways to detect subtle, low-and-slow patterns before they become major incidents.

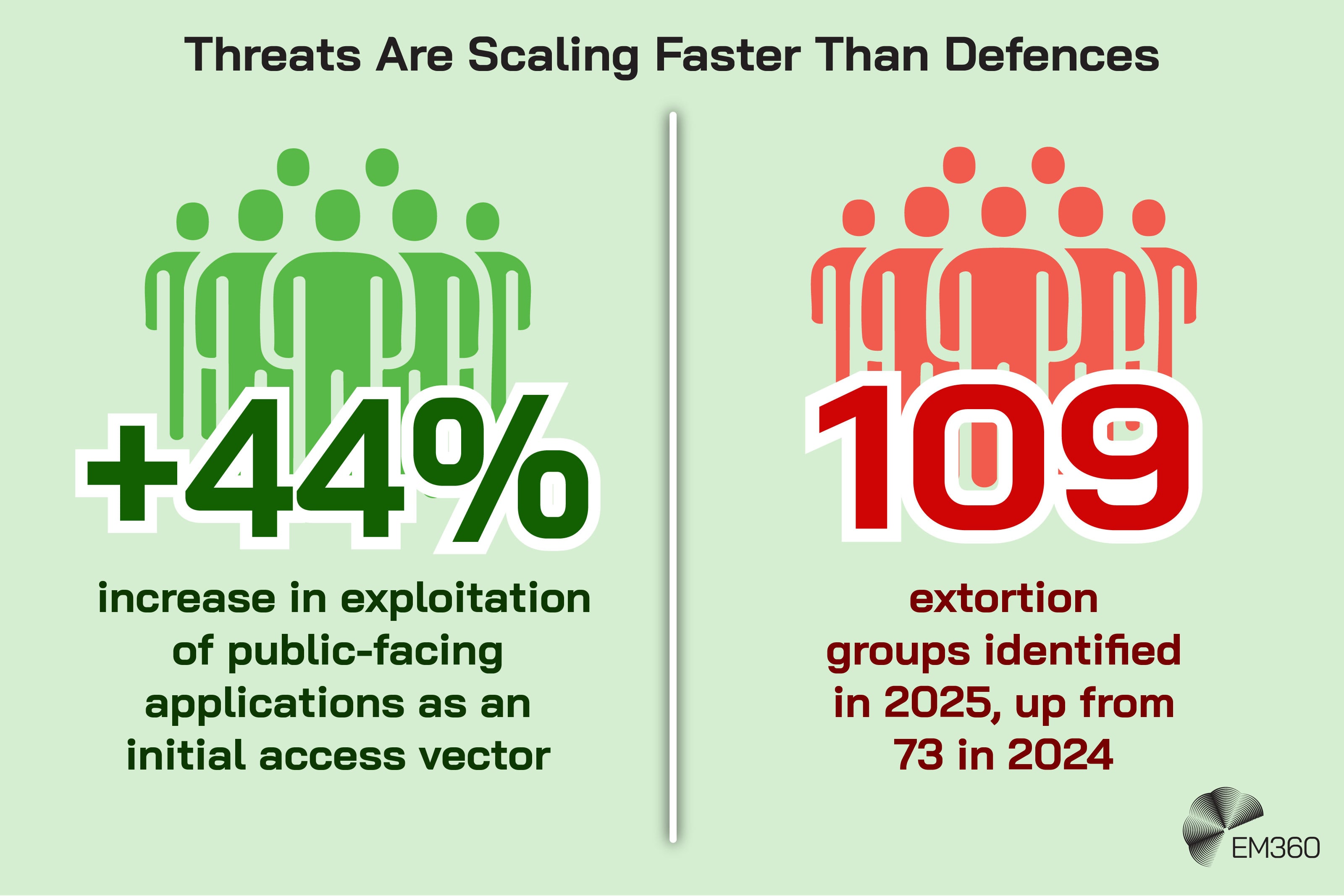

IBM’s 2026 X-Force Threat Intelligence Index found that exploitation of public-facing applications rose 44 per cent year over year as an initial access vector, and that X-Force identified 109 distinct extortion groups in 2025, up from 73 in 2024. Those numbers matter because they show how crowded and fragmented the threat environment has become.

When the number of actors rises and the attack surface keeps expanding, detecting only the obvious isn't enough.

That's why sequence detection, behavioural analytics, and anomaly-based correlation matter so much. In practical terms, the weak signal may be an odd change in access behaviour, a subtle deviation in log content, or a low-frequency pattern that doesn't trip a static threshold on its own.

What turns it into useful intelligence is the ability to connect those pieces before the attacker reaches the headline stage.

Observability and preventive operations

Infrastructure and application operations are another strong fit because the best outcome is often preventing visible failure altogether.

Dynatrace has been explicit about this shift, framing recent advances in AIOps as a move from reactive operations to preventive operations. Its latest platform positioning goes further, talking about trusted agentic automation that helps organisations move toward autonomous operations while keeping teams in control.

Strip away the branding and the underlying idea is simple: small system changes matter more when you can detect them early enough to intervene before users feel the impact.

That's a useful way to explain weak signals to an enterprise reader. A slight performance drift, an unusual dependency pattern, a behavioural change in a service, or an abnormal sequence of events isn't necessarily a crisis. But it can be the opening line of one.

AI-supported observability is useful because it helps teams catch the line while it is still short.

Risk, compliance, and regulated environments

Regulated environments show why this topic isn't only technical. It's also about trust, accountability, and governance. The FDA’s Emerging Drug Safety Technology Program is focused specifically on the use of artificial intelligence and other emerging technologies in pharmacovigilance.

The agency says industry and regulators face challenges from ever-increasing case volumes and that AI may help make safety surveillance more efficient and effective by capturing, aggregating, and analysing larger and more diverse datasets.

Importantly, the FDA also points to signal detection and evaluation as an area of clear interest and pairs that with concerns around governance, transparency, data quality, bias mitigation, model performance, and human accountability.

That balance matters. In regulated settings, weak signal detection isn't just about being earlier. It's about being early in a way that remains defensible.

The Risks Of Getting Signal Detection Wrong

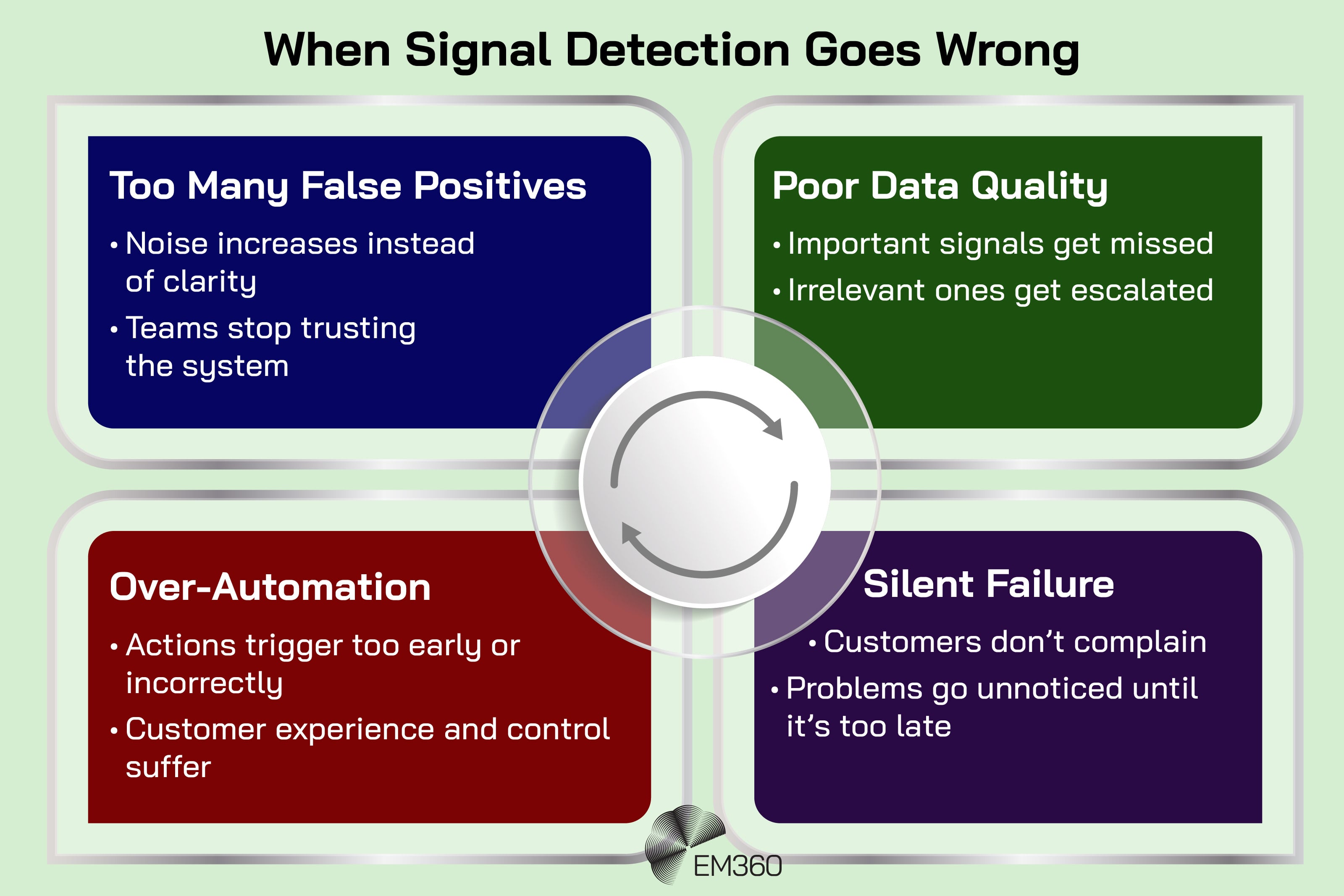

There’s a tendency in enterprise AI conversations to talk as if earlier detection is automatically better. It isn’t.

If a system produces too many false positives, teams stop trusting it. If it over-triggers, it creates a new form of noise rather than reducing the old one. If it’s trained on poor or incomplete data, it can miss the signals that actually matter while confidently escalating the ones that don't.

If it automates action too aggressively, it can degrade the very experience or control environment it was supposed to improve.

Customer experience gives a good example of that problem. Qualtrics’ 2026 Consumer Experience Trends research found that nearly one in five consumers who used AI for customer service saw no benefits from the experience, a failure rate almost four times higher than for AI use in general.

The same research says fewer than one in three consumers now provide direct negative feedback after a bad experience, and 30 per cent say nothing at all. That means organisations can't afford systems that only respond to explicit complaints. But they also can't afford AI that automates badly and makes the silence worse.

Weak signal detection is probabilistic by nature. It doesn't offer certainty. It offers earlier visibility into what may be emerging. That's useful, but only if organisations treat it with the right level of discipline. Governance matters. Validation matters. Human review still matters.

Good systems don't remove judgment. They make judgment better informed and better timed.

What Enterprises Need To Get Right

The organisations that get the most value from this are usually not the ones with the biggest pile of data. They're the ones with the clearest route from signal to decision.

First, they connect signals to decisions, not just dashboards. They know which signals are supposed to influence retention, incident response, remediation, risk escalation, cost control, or compliance review. Without that link, intelligence remains decorative.

Second, they invest in data quality and integration. This sounds boring because it is boring, but it is still essential. Deloitte’s 2026 State of AI in the Enterprise says worker access to AI rose by 50 per cent in 2025 and that the share of organisations with 40 per cent or more of projects in production is expected to rise sharply in the next few months.

That scale makes weak-signal detection more useful, but it also makes bad data more expensive.

Third, they define ownership. Someone has to own interpretation, prioritisation, and response. Otherwise the signal gets passed around until it becomes yesterday’s problem.

Fourth, they keep a human in the loop where judgment matters most. This isn't because humans are always better at pattern recognition. Often they aren't. It's because accountability, trade-off decisions, and contextual judgment still need stewardship.

Fifth, they build feedback loops. A signal model that's never checked against outcomes will drift. Teams need to know which signals proved meaningful, which produced noise, and where the system needs recalibration. That's how weak-signal detection becomes stronger over time rather than just more automated.
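A feedback loop of this kind can start very simply. The sketch below assumes reviewers record whether each raised signal turned out to be meaningful; the function names, the signal types, and the 0.5 precision floor are hypothetical.

```python
from collections import Counter


def signal_precision(reviews):
    """Share of raised signals, per type, that reviewers confirmed as meaningful.

    reviews: iterable of (signal_type, was_meaningful) pairs.
    """
    raised = Counter()
    confirmed = Counter()
    for signal_type, was_meaningful in reviews:
        raised[signal_type] += 1
        if was_meaningful:
            confirmed[signal_type] += 1
    return {kind: confirmed[kind] / raised[kind] for kind in raised}


def needs_recalibration(precision, floor=0.5):
    """Signal types whose confirmed share falls below the chosen floor."""
    return sorted(kind for kind, value in precision.items() if value < floor)


reviews = [
    ("tone_shift", True), ("tone_shift", True), ("tone_shift", True), ("tone_shift", False),
    ("latency_drift", True), ("latency_drift", False), ("latency_drift", False),
    ("latency_drift", False), ("latency_drift", False),
]
precision = signal_precision(reviews)
print(precision)
print("recalibrate:", needs_recalibration(precision))
```

Tracking only precision is a simplification. It shows which signals produce noise, but it says nothing about the meaningful events the system never raised, which need a separate review of missed outcomes.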

Why This Matters Now

This matters now because enterprises are moving into the uncomfortable middle stage of AI adoption.

The experimentation phase is no longer the whole story. Deloitte’s report mentioned earlier points to widening access and growing production maturity. McKinsey shows that AI use is now widespread across organisations, while interest in agents is high.

Microsoft’s 2025 Work Trend Index says 24 per cent of leaders report their companies have already deployed AI organisation-wide, and that 82 per cent are confident they will use digital labour to expand workforce capacity in the next 12 to 18 months.

The same report says 81 per cent expect agents to be moderately or extensively integrated into AI strategy over that period. That's a meaningful shift. It means AI is moving closer to workflows, operations, and execution. As that happens, the quality of signal detection starts to matter more, not less.

If AI is going to influence customer interactions, security investigations, operations, and risk decisions, then organisations need to get much better at distinguishing weak but meaningful signals from cheap noise.

This is also why ROI pressure has intensified. BCG’s research makes that clear. AI remains a top strategic priority, but most organisations are still not seeing substantial value. One reason is that they’re treating AI as a productivity layer or content layer without solving the harder problem beneath it: how to recognise what matters early enough to act.

Weak signal detection isn't the whole answer, but it is increasingly one of the missing pieces between experimentation and measurable business impact.

Final Thoughts: Intelligence Only Matters When It Changes Decisions

Enterprises don't lack signals. They lack the ability to recognise which ones matter early enough to do something useful with them.

That has always been the deeper problem. More data did not solve it. More alerts did not solve it. More dashboards definitely did not solve it. What changes the picture is the ability to detect subtle patterns across fragmented systems, understand their likely significance, and connect them to action before they become obvious to everyone else.

That's why this matters beyond analytics. As AI becomes more deeply embedded in workflows, infrastructure, and customer operations, the organisations that pull ahead will not be the ones that detect the most. They will be the ones that respond to the right signals first, with enough confidence to act and enough discipline to know when not to.

For readers trying to make sense of how that shift is playing out across enterprise AI, data, security, and infrastructure, EM360Tech continues to bring together the kind of grounded analysis and practitioner perspective that helps separate real operational change from the usual noise.
