Consumer protection usually starts from a pretty reasonable idea. If companies disclose the risks, explain the trade-offs, and give people a choice, consumers can decide what they’re willing to accept.

That logic works in a lot of situations. People can compare prices. They can read reviews. They can judge quality, at least roughly. They can decide whether a product seems worth the money, whether a service feels trustworthy, or whether a brand has earned their confidence.

Cybersecurity looks like it should fit that model.

But it doesn’t.

We keep treating cybersecurity as though it’s something consumers can navigate through transparency and choice. In reality, the risks usually sit inside systems they can’t see and can’t realistically evaluate.

Why Cybersecurity Became A Consumer Protection Issue

Cybersecurity isn’t just a technology risk anymore. It’s a consumer harm issue.

Part of the reason is that the consequences are no longer abstract. The Federal Trade Commission said consumers reported losing more than $12.5 billion to fraud in 2024, up 25 per cent from the year before. The FBI’s Internet Crime Complaint Center reported 859,532 complaints in 2024, with reported losses exceeding $16.6 billion, a 33 per cent increase from 2023.

Those numbers matter because they show what digital harm looks like once it leaves the security team’s dashboard and reaches ordinary people. This isn’t just about compromised servers or exploited vulnerabilities in the abstract.

It’s about drained accounts, stolen identities, manipulated transactions, and months or even years spent trying to recover from something the consumer had no real chance of spotting in advance.

The channels are becoming more ordinary too, which arguably makes the whole thing more unsettling. In April 2025, the FTC reported that consumers lost $470 million in 2024 to scams that started with text messages. That’s five times the amount reported in 2020.

That’s why regulators are starting to treat cybersecurity less like a narrow technical specialty and more like a public trust issue. It now sits much closer to product safety and financial safety than the industry used to admit.

When software, devices, and digital services shape how people work, bank, communicate, travel, and manage their homes, weak security stops being just an internal IT problem. It becomes part of the duty organisations owe the people who rely on those systems.

The Traditional Consumer Protection Model: Transparency And Choice

The traditional consumer protection model is built on informed consent.

A company provides information. A consumer reviews it. Then the consumer decides whether to go ahead.

That model makes sense when the risk is visible enough to judge. A food label can help someone compare ingredients. A product review can help them spot quality issues. Pricing, warranties, return policies, safety warnings, and independent reviews all give people signals they can actually use.

Even when those decisions aren’t perfect, the basic logic still holds. The consumer can see the object of the decision, understand the broad trade-off, and act on the information in front of them.

Digital consent borrows heavily from that same framework. Privacy notices, terms of service, cookie banners, and product disclosures all assume that if users are told enough, they can make meaningful decisions about the risks they’re accepting.

The problem is that cybersecurity isn’t like a misleading label or an overpriced product. It isn’t a visible feature of the thing being bought. It’s a condition of the systems behind it. And once that’s true, the whole equation changes.

Why Cyber Risk Breaks The Transparency Model

Cyber risk is difficult to evaluate because most of the information that matters is hidden, too technical, or constantly changing.

A consumer can’t look at a banking app and tell whether the vendor’s identity architecture is robust. They can’t inspect a connected camera and judge whether the update mechanism is secure. They can’t easily know how many third parties handle their data, whether a supplier has weak access controls, or whether a software dependency is carrying a known vulnerability.

And to be fair, even organisations with experienced security teams struggle with that kind of assessment.

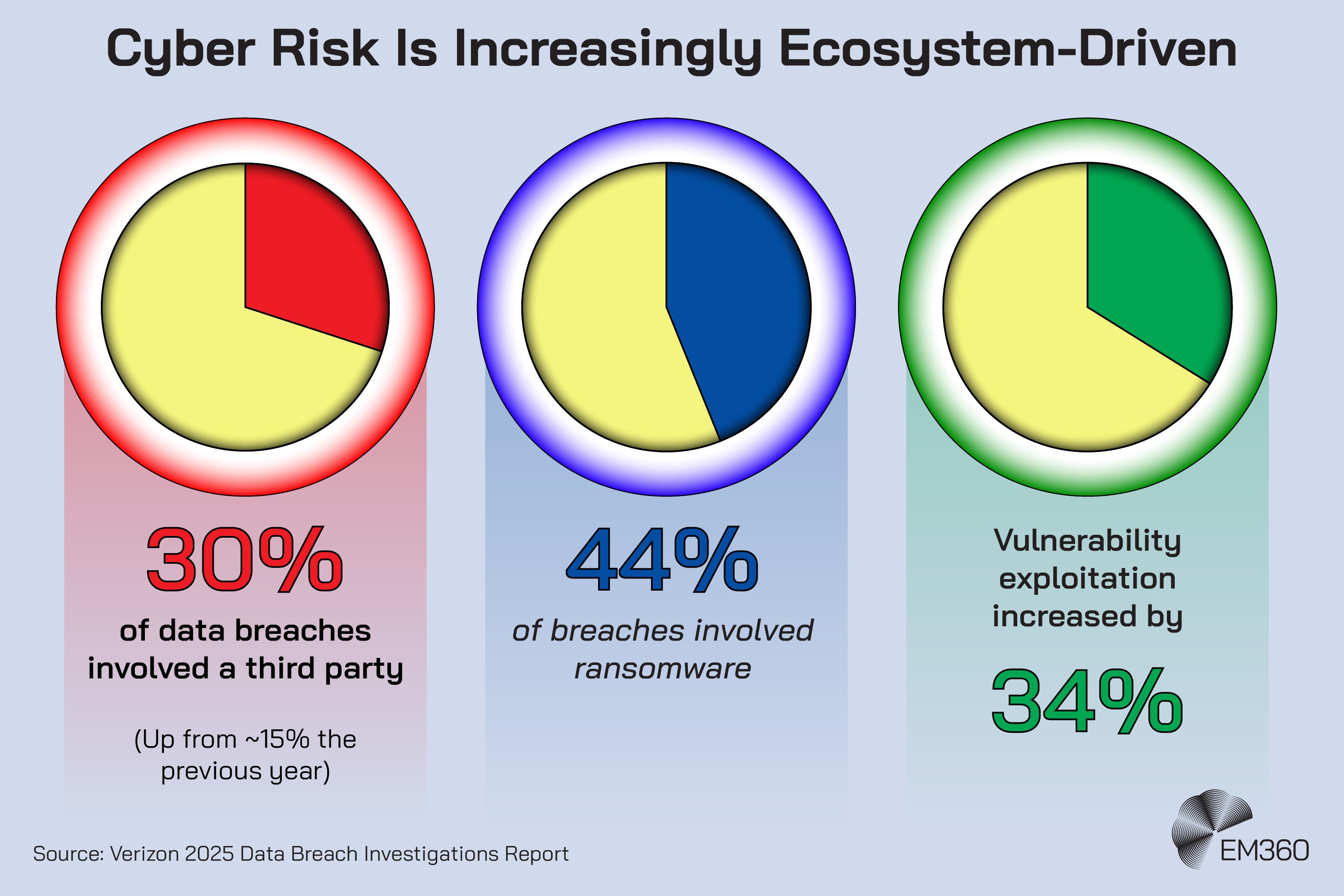

Verizon’s 2025 Data Breach Investigations Report found that third-party involvement appeared in 30 per cent of breaches, up from roughly 15 per cent the year before. It also found a 34 per cent rise in vulnerability exploitation, with ransomware present in 44 per cent of breaches.

That’s exactly the point. The risk is increasingly distributed across ecosystems rather than sitting neatly inside one product or one company. A consumer isn’t being asked to judge a single vendor in isolation. They’re being asked, without really being asked, to trust an entire digital supply chain they can’t see.

And this is where the transparency model starts to buckle. You can disclose as much as you like, but disclosure is only useful when the person receiving it can meaningfully interpret it and act on it.

Pew’s research on data privacy shows just how weak that assumption has become in practice. More than half of Americans, 56 per cent, say they often click “agree” on privacy policies without reading them. And 61 per cent say those policies are ineffective at explaining how companies use people’s data.

That’s not evidence that people don’t care. It’s evidence that the mechanism itself is failing.

Capability Vs Responsibility In Cybersecurity

This is the distinction that matters most.

Consumers are capable of making informed choices when meaningful information exists. That’s not the same thing as saying they should be responsible for evaluating every form of risk.

People can compare prices. They can notice patterns in reviews. They can decide whether a product feels well made or whether a company has a reputation worth trusting. Those are real consumer judgments, and they matter.

But expecting them to evaluate software architecture, encryption practices, identity controls, cloud configuration, breach detection maturity, or third-party vendor risk is something else entirely. That isn’t consumer choice in any meaningful sense. It’s risk transfer dressed up as transparency.

Pew’s digital knowledge research makes that gap harder to ignore. Americans answered a median of five out of nine questions correctly in a 2023 study on AI, cybersecurity, and major tech topics. While most could identify a strong password, far fewer could recognise an example of two-factor authentication.

That doesn’t mean the public is unintelligent. It means cyber risk sits inside a level of technical complexity that most people were never meant to audit for themselves.

So the issue isn’t competence. It’s structure.

When a system assumes consumers can protect themselves against invisible, specialist risk, it quietly shifts responsibility away from the organisations that created or control that risk in the first place. And once that happens, the language of choice starts doing work it can’t actually support.

What Happens When Security Depends On Perfect User Behaviour

Security teams already know this lesson inside the enterprise.

They know phishing awareness matters, but they also know awareness alone isn’t enough. That’s why mature organisations invest in phishing-resistant authentication, email filtering, identity controls, and automated detection. They know password hygiene matters, but they also know users will reuse passwords, forget them, or take shortcuts.

That’s why the market keeps moving toward passwordless authentication and stronger default controls. In other words, security engineering already assumes that systems shouldn’t depend on perfect human behaviour.

The same principle should apply to consumer protection.

If a product is only safe when the user constantly spots subtle signs of fraud, understands complex permissions, configures every setting correctly, and interprets vague disclosures with expert precision, that product isn’t genuinely safe. It’s simply offloading security work onto the person least equipped to carry it.

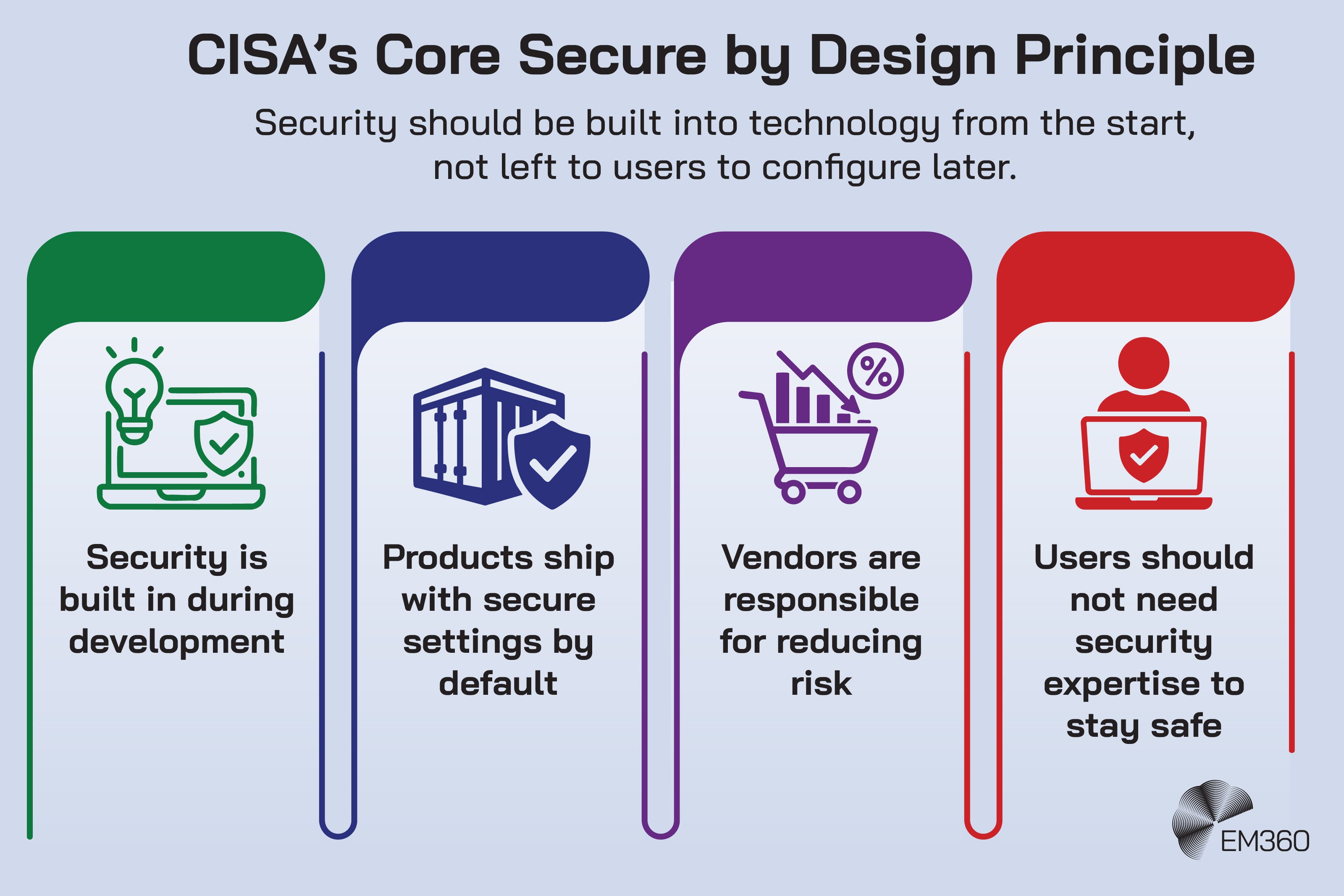

CISA’s secure-by-design guidance makes this point unusually clearly. The agency says secure by design moves much of the security burden to technology providers and reduces the chances that customers will fall victim to security incidents. In an earlier joint statement with international partners, CISA also argued that manufacturers should take ownership of security outcomes and shift the burden of security away from customers.

That’s not a fringe idea anymore. It’s becoming the mainstream logic of cybersecurity governance.

The Shift Toward Secure-By-Design And Vendor Accountability

The policy environment is moving in the same direction.

In the European Union, the Cyber Resilience Act is explicitly framed around the fact that consumers and businesses struggle to determine which products are cybersecure and to set them up securely. The Act aims to address inadequate cybersecurity in many products and the lack of timely security updates.

It also requires hardware and software to be designed to be foundationally secure, with measures such as security by default, access control, cryptography, and automatic updates. That’s a major shift in emphasis. It doesn’t abandon transparency, but it no longer treats transparency as the main protection.

You can see the same pattern in the United States. The FCC’s U.S. Cyber Trust Mark programme is designed to help buyers identify eligible smart products that meet recognised cybersecurity standards. In January 2026, the FCC opened the filing window for Cybersecurity Label Administrator applications, pushing the programme into its next phase.

The label itself isn’t the whole answer, of course. A badge on a box won’t fix weak governance or poor product design. But the programme matters because it reflects a broader recognition that the market can’t rely on consumers to decode device security unaided.

The FTC’s enforcement posture points the same way. In February 2026, the agency issued its second report to Congress on work to fight ransomware and other cyberattacks, underlining its role in consumer protection and data security enforcement.

The direction of travel is pretty hard to miss now. More responsibility is moving upstream, toward manufacturers, software providers, and the organisations that profit from digital systems. That isn’t anti-consumer. It’s what a more realistic consumer protection model looks like.

Why This Matters For Enterprise Technology Leaders

For CIOs, CISOs, and other technology leaders, this shift changes the frame.

Cybersecurity is no longer just an operational function sitting somewhere behind the business. It’s increasingly part of product quality, governance, brand trust, and organisational accountability.

And that has practical consequences.

A weak security posture is no longer only a threat to uptime or compliance. It can directly shape whether customers trust your services, whether regulators view your practices as reasonable, and whether your organisation is seen as reducing risk or passing it on.

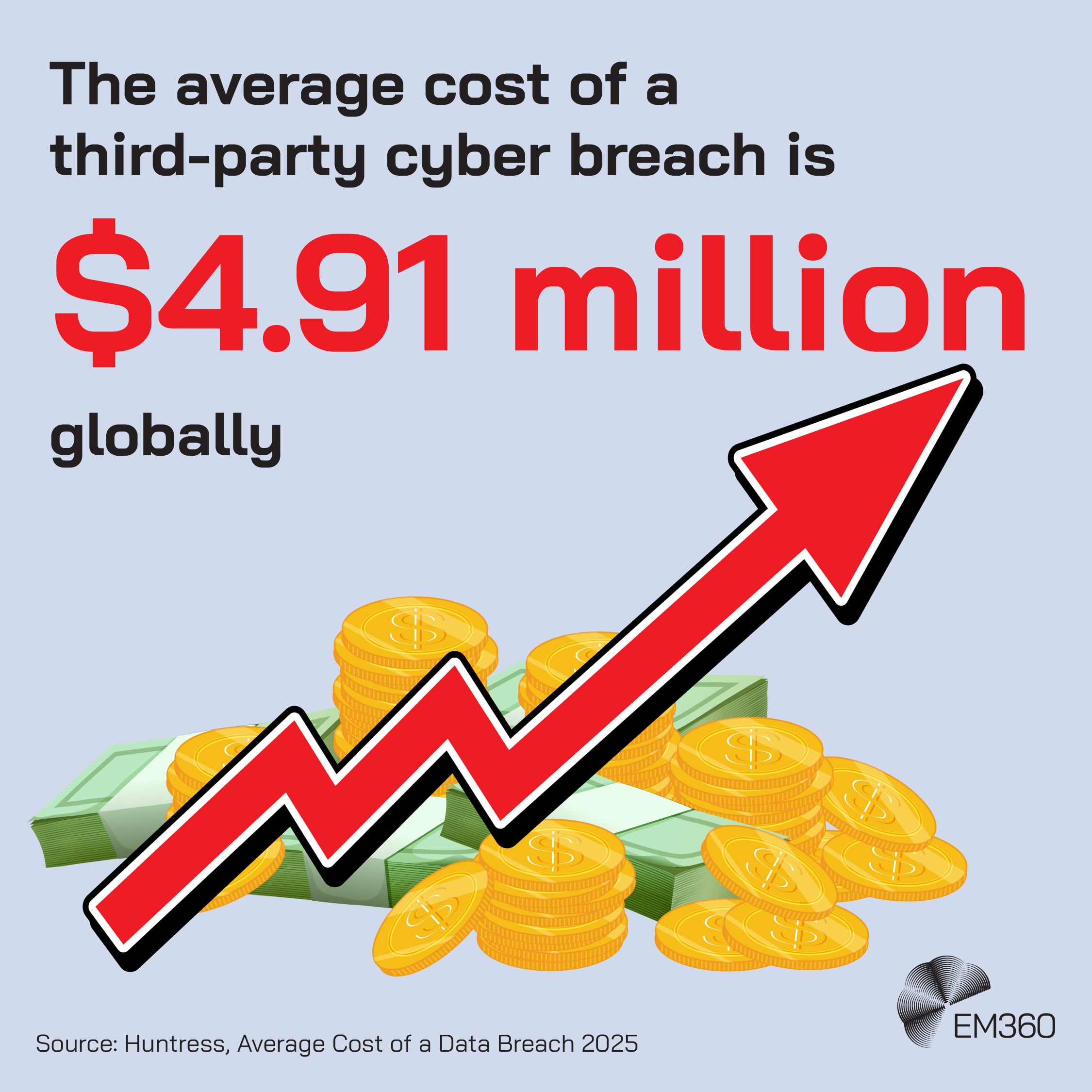

This matters even more in ecosystems with complex third-party relationships. If breaches increasingly involve partners, suppliers, perimeter devices, and inherited vulnerabilities, then enterprise cybersecurity leadership has to stretch beyond the four walls of the organisation.

It has to include procurement, product design, vendor governance, software lifecycle decisions, and the default experience users are given when they first encounter a product. That’s why the consumer protection angle should matter to enterprise leaders even if they don’t work for a consumer-facing brand.

The underlying question is the same across sectors: are we building digital systems that ordinary people can use safely without needing specialist knowledge to defend themselves?

If the answer is no, then awareness campaigns and better disclosures will only get you so far.

Final Thoughts: Cybersecurity Requires Systems That Protect People By Default

Cybersecurity is quietly forcing a rethink of how consumer protection works in the digital economy.

If digital safety depends on users understanding complex technical risks, the system will keep failing them. The responsibility has to sit earlier in the chain, with the organisations that design, build, and operate the technology people rely on every day.

For enterprise leaders, that makes cybersecurity part of something bigger than risk management. It becomes part of how organisations build trust, meet their obligations to customers, and create systems people can rely on without needing to understand how they work.

That shift is only going to accelerate, and it is exactly the kind of change EM360Tech continues to examine as cybersecurity moves from an IT discipline to a core element of digital accountability.
