In the world of computer science, dynamic programming is more than just a buzzword. It's a systematic approach that empowers problem solvers to tackle complex problems by breaking them down into smaller, more manageable pieces. But what is dynamic programming? How does it work, and why is it so powerful?

In this article, we’ll delve deep into dynamic programming and its meaning, providing examples to help you grasp this crucial concept in the world of algorithms and programming.

What is Dynamic Programming? Definition

Dynamic programming is a computer programming technique that solves algorithmic problems by breaking them down into smaller subproblems, solving each subproblem only once, and reusing the stored results.

It's both a method of mathematical optimisation and a computer programming technique, and it applies to problems that have two properties: overlapping subproblems and optimal substructure.

Let's break down what dynamic programming is to get a better understanding of what it entails:

Dynamic: The term "dynamic" reflects the multistage, time-varying nature of the decision problems the method was originally developed for, where a solution is built up as a sequence of decisions. It does not mean that the algorithm changes its rules as it runs; a dynamic programming algorithm follows a fixed recurrence from start to finish.

Programming: In this context, "programming" does not refer to writing code in a programming language. Instead, it harks back to the term's origins in mathematical optimization and planning, where "programming" refers to the act of making a plan.

Overlapping Subproblems

A problem has overlapping subproblems when breaking it down into smaller subproblems produces the same subproblems again and again, so that their solutions can be reused rather than recomputed.

A naive recursive algorithm solves these subproblems multiple times during its execution. Dynamic programming solves each subproblem only once and stores the results in a data structure, such as an array or a table, for future reference.
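To make the overlap concrete, here is a minimal sketch that counts how often a naive recursive Fibonacci function evaluates each subproblem. The names `naive_fib` and `calls` are illustrative choices, not part of any library.

```python
from collections import Counter


def naive_fib(n, calls):
    """Naive recursion; `calls` records how often each n is evaluated."""
    calls[n] += 1
    if n < 2:
        return n
    return naive_fib(n - 1, calls) + naive_fib(n - 2, calls)


calls = Counter()
value = naive_fib(10, calls)

# The subproblem fib(2) alone is recomputed 34 times,
# and the whole computation makes 177 calls.
print(value, calls[2], sum(calls.values()))  # prints: 55 34 177
```

Only 11 distinct subproblems exist here (fib(0) through fib(10)), so storing each result once would replace 177 calls with 11 computations.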

In short, dynamic programming optimises a criterion or value by breaking a complex problem into simpler subproblems, solving each subproblem only once, and storing the results to avoid redundant computation.

Dynamic Programming Examples

To better grasp the concept of dynamic programming, let's explore a few problems where it is commonly applied.

1. Fibonacci Sequence

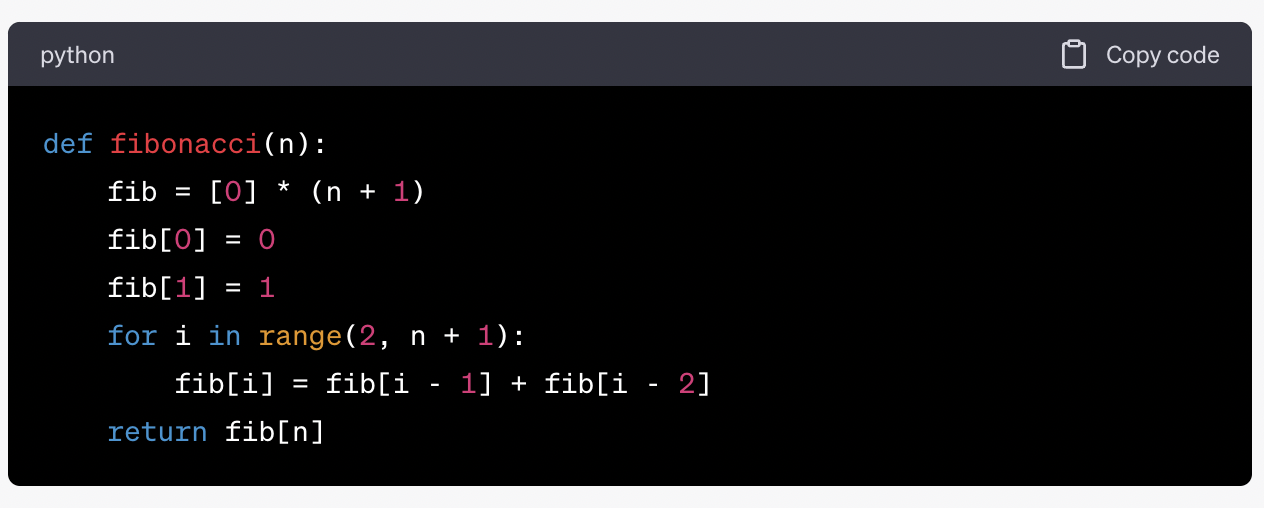

The Fibonacci sequence is a classic example that demonstrates the power of dynamic programming. The sequence starts with two initial numbers, 0 and 1, and each subsequent number is the sum of the two preceding ones. The sequence begins as follows: 0, 1, 1, 2, 3, 5, 8, 13, 21, ...

Calculating the nth Fibonacci number with a naive recursive approach is highly inefficient because it recalculates the same smaller Fibonacci numbers many times. Dynamic programming lets us optimize this process by storing previously computed Fibonacci numbers in an array. This way, we avoid recalculating values we've already determined.

By using dynamic programming, we can compute Fibonacci numbers efficiently and avoid the exponential time complexity associated with the naive recursive approach.
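A minimal sketch of the array-based approach described above might look like this. The function name `fib` and the choice to reject negative input are assumptions made for illustration.

```python
def fib(n):
    """Return the nth Fibonacci number (fib(0) == 0, fib(1) == 1)."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n < 2:
        return n

    # table[i] holds the ith Fibonacci number once it has been computed.
    table = [0] * (n + 1)
    table[1] = 1
    for i in range(2, n + 1):
        table[i] = table[i - 1] + table[i - 2]
    return table[n]


print([fib(i) for i in range(9)])  # prints: [0, 1, 1, 2, 3, 5, 8, 13, 21]
```

Each entry is computed once from two earlier entries, so the loop performs n - 1 additions instead of an exponential number of recursive calls.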

2. Longest Common Subsequence

The longest common subsequence (LCS) problem is a classic example of a dynamic programming problem in the field of computer science. Given two sequences, find the longest subsequence present in both of them. A subsequence keeps the original order of the elements but does not need to be contiguous.

For example, consider the sequences "ABCD" and "ACDF". The longest common subsequence is "ACD", which has a length of 3.

Dynamic programming provides an efficient way to solve the LCS problem by breaking it down into smaller subproblems. We create a matrix where each cell holds the length of the LCS of a prefix of the first sequence and a prefix of the second. By filling in this matrix systematically, we can find the length of the LCS and then trace back through the matrix to reconstruct the subsequence itself.
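The matrix-filling and reconstruction steps can be sketched as follows. This is one possible implementation, not the only one; the function name `lcs` and the tie-breaking rule during traceback are illustrative choices, and when several longest subsequences exist it returns just one of them.

```python
def lcs(a, b):
    """Return one longest common subsequence of strings a and b."""
    m, n = len(a), len(b)

    # table[i][j] = length of the LCS of a[:i] and b[:j].
    table = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if a[i - 1] == b[j - 1]:
                table[i][j] = table[i - 1][j - 1] + 1
            else:
                table[i][j] = max(table[i - 1][j], table[i][j - 1])

    # Walk back from the bottom-right cell to recover the subsequence.
    chars = []
    i, j = m, n
    while i > 0 and j > 0:
        if a[i - 1] == b[j - 1]:
            chars.append(a[i - 1])
            i -= 1
            j -= 1
        elif table[i - 1][j] >= table[i][j - 1]:
            i -= 1
        else:
            j -= 1
    return "".join(reversed(chars))


print(lcs("ABCD", "ACDF"))  # prints: ACD
```

The table has (m + 1) × (n + 1) cells and each is filled in constant time, so the running time is proportional to the product of the two lengths.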

3. Knapsack Problem

The knapsack problem is a classic optimization problem in computer science and mathematics. It involves selecting a subset of items from a set of items, each with a weight and a value, to maximize the total value while staying within a specified weight limit.

Dynamic programming is commonly used to solve the 0/1 knapsack problem, where each item can be either included or excluded from the knapsack.

The dynamic programming approach involves creating a table where each cell represents the maximum value that can be obtained within a specific weight limit using only the first few items.

By iteratively filling in this table, we can determine the maximum value that can be achieved and identify which items should be included in the knapsack to achieve this maximum value.
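As a hedged sketch of this table-based approach, the function below assumes non-negative integer weights and an integer capacity. The name `knapsack` and its return shape (best value plus the indices of the chosen items) are illustrative choices.

```python
def knapsack(weights, values, capacity):
    """Solve 0/1 knapsack; return (best_value, indices_of_chosen_items)."""
    n = len(weights)

    # table[i][c] = best value using only the first i items with limit c.
    table = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        w, v = weights[i - 1], values[i - 1]
        for c in range(capacity + 1):
            table[i][c] = table[i - 1][c]  # option 1: exclude item i-1
            if w <= c:                     # option 2: include it if it fits
                table[i][c] = max(table[i][c], table[i - 1][c - w] + v)

    # Trace back: an item was included wherever the row value changed.
    chosen = []
    c = capacity
    for i in range(n, 0, -1):
        if table[i][c] != table[i - 1][c]:
            chosen.append(i - 1)
            c -= weights[i - 1]
    chosen.reverse()
    return table[n][capacity], chosen


print(knapsack([1, 3, 4, 5], [1, 4, 5, 7], 7))  # prints: (9, [1, 2])
```

In the usage line, the items of weight 3 and 4 together fill the limit of 7 exactly and give a value of 9, which beats taking the single most valuable item.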

Key Elements of Dynamic Programming

To effectively implement dynamic programming, there are some key elements and characteristics you should keep in mind:

1. Optimal Substructure

Dynamic programming problems exhibit optimal substructure, which means that the optimal solution to the overall problem can be constructed from the optimal solutions of its subproblems. In other words, if you can find the optimal solution to a smaller version of the problem, you can use it to find the optimal solution to the larger problem.
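Optimal substructure is usually written down as a recurrence. As an illustration, the standard recurrences for the three examples in this article are given below; the symbols ($F$, $L$, $V$, $x_i$, $y_j$, $w_i$, $v_i$) are notation chosen here rather than taken from the text above.

```latex
% Fibonacci: F(n) is the nth Fibonacci number.
F(0) = 0, \qquad F(1) = 1, \qquad F(n) = F(n-1) + F(n-2) \quad (n \ge 2)

% LCS: L(i, j) is the LCS length of the first i symbols of x
% and the first j symbols of y.
L(i, j) =
\begin{cases}
  0 & \text{if } i = 0 \text{ or } j = 0 \\
  L(i-1, j-1) + 1 & \text{if } x_i = y_j \\
  \max\bigl(L(i-1, j),\; L(i, j-1)\bigr) & \text{otherwise}
\end{cases}

% 0/1 knapsack: V(i, c) is the best value using the first i items
% with weight limit c; item i has weight w_i and value v_i.
V(0, c) = 0, \qquad
V(i, c) =
\begin{cases}
  V(i-1, c) & \text{if } w_i > c \\
  \max\bigl(V(i-1, c),\; V(i-1, c - w_i) + v_i\bigr) & \text{otherwise}
\end{cases}
```

In each case the value for the larger problem on the left is defined purely in terms of optimal values for smaller problems on the right, which is exactly what optimal substructure means.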

2. Memoization

Memoization is a technique used in dynamic programming to store the results of expensive function calls and return the cached result when the same inputs occur again. It's particularly useful for optimizing recursive algorithms. Memoization helps reduce redundant calculations by storing the results of subproblems in a data structure like an array or dictionary.
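Here is a minimal sketch of memoization in Python, shown two ways: with an explicit dictionary and with the standard library's `functools.lru_cache` decorator. The function names `fib_memo` and `fib_cached` are illustrative.

```python
from functools import lru_cache


def fib_memo(n, cache=None):
    """Top-down Fibonacci with an explicit dictionary as the cache."""
    if cache is None:
        cache = {}
    if n < 2:
        return n
    if n not in cache:
        cache[n] = fib_memo(n - 1, cache) + fib_memo(n - 2, cache)
    return cache[n]


@lru_cache(maxsize=None)
def fib_cached(n):
    """Same recursion; lru_cache stores results keyed by the argument."""
    return n if n < 2 else fib_cached(n - 1) + fib_cached(n - 2)


print(fib_memo(30), fib_cached(30))  # prints: 832040 832040
```

The dictionary version makes the caching visible, while the decorator version keeps the function body identical to the naive recursion. Both are still recursive, so very large inputs can run into the interpreter's recursion limit.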

3. Bottom-Up Approach

In dynamic programming, you can choose between a top-down approach (recursion with memoization) and a bottom-up (iterative) approach. Both are valid, but the bottom-up approach is often preferred because it avoids the overhead of recursive function calls, does not consume call-stack space, and frequently lets you keep only the table entries that are still needed.
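One way to see the memory advantage is a bottom-up Fibonacci that keeps only the last two values instead of a full table. This is a sketch under the assumption that only the final value is needed; the name `fib_bottom_up` is illustrative.

```python
def fib_bottom_up(n):
    """Iterative Fibonacci using constant extra space."""
    prev, curr = 0, 1  # fib(0) and fib(1)
    for _ in range(n):
        # Slide the two-value window one step forward.
        prev, curr = curr, prev + curr
    return prev


print(fib_bottom_up(10))  # prints: 55
```

Because there is no recursion, a call such as `fib_bottom_up(5000)` runs without touching the call stack, whereas a recursive version of the same depth would typically exceed CPython's default recursion limit of about 1000 frames unless that limit is raised.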

4. Tabulation

Tabulation is the process of filling in a table or array with the results of subproblems in a systematic order, so that every entry a cell depends on is already available when that cell is computed. It is the bottom-up counterpart to memoization and a common way to solve problems that can be broken down into smaller overlapping subproblems.

Final Thoughts

Dynamic programming is a powerful problem-solving technique that is widely used in computer science and mathematics. Whether you're working on optimising recursive algorithms, finding the longest common subsequence, or tackling classic problems like the knapsack problem, dynamic programming is a valuable tool in your problem-solving arsenal.

Understanding its principles and applications can greatly enhance your ability to tackle a wide range of computational challenges.
