AI in security isn’t a future trend. It’s already sitting inside day-to-day cybersecurity operations, quietly influencing what gets flagged, what gets ignored, and what gets escalated. Many organisations are already relying on platforms and services from providers like Sophos to help manage that complexity at scale.

Yet when an incident turns into a real business problem, it still comes down to people. Someone decides whether to shut down a system. Someone decides what “material impact” means. Someone owns the call.

That’s the tension security leaders are living with right now. Attackers are moving faster and scaling their efforts with automation. Defenders are drowning in data, alerts, and competing priorities. AI can help close the speed gap, but it can also create a new kind of fragility if teams treat it like a replacement for judgement instead of a partner to it.

Why AI Is Becoming Essential to Security Operations

Security teams didn’t adopt AI because it’s fashionable. They adopted it because the work expanded and the headcount didn’t.

Modern organisations produce a continuous stream of security telemetry. Logs from endpoints, identity providers, cloud platforms, email gateways, SaaS applications, network devices, and security tools all pile into the same funnel. Even well-run teams hit the same wall: there’s too much signal, too much noise, and not enough time to manually connect the dots.

That’s why security operations centre (SOC) leaders keep circling back to the same promise. AI can take the messy, high-volume parts of the job and compress them into something a human can actually act on.

The volume problem AI is designed to solve

Most security teams don’t need more alerts. They need fewer, better ones.

In practice, volume creates two risks at once. First, analysts miss things because they can’t see everything. Second, analysts become numb to noise, which means the “real” alert doesn’t feel urgent until it’s too late.

AI is at its best when it reduces that cognitive load. It can cluster similar events, identify patterns across disparate sources, and highlight the anomalies that deserve human attention. That doesn’t remove the need for expertise. It protects expertise from being wasted on repetitive sorting and context gathering.

That’s also why expecting people to manually compensate for scale and deception doesn’t work. As Chester Wisniewski, Director and Global Field CISO at Sophos, puts it, “We can’t expect users to hyper analyse URLs to decide if an O is a zero before they click. It’s just an unrealistic expectation.”

Speed now shapes outcomes, not just accuracy

Speed matters because attackers aren’t waiting politely while teams investigate. Automation has shortened the time between initial access and meaningful impact, especially in environments where identity misuse and credential abuse give attackers a head start.

It’s also why AI-enabled social engineering is such a practical threat. Microsoft’s 2025 Digital Defense Report cites testing where AI-automated phishing emails achieved significantly higher click-through rates than standard attempts.

That pressure is only increasing. As Wisniewski notes on The Security Strategist podcast, “The amount of time we have to think about it and identify if an alert is dangerous is going down and down and down.”

When attacks scale and the pace accelerates, defenders need faster triage and faster investigation just to stay even. AI can help shrink the time-to-detect and time-to-decide, but only if it’s embedded into how the team works, not bolted on as another dashboard.

Where AI Adds the Most Value Today

The clearest value of AI automation isn’t that it “thinks like an analyst”. It’s that it handles the parts of the analyst’s job that are slow, repetitive, and data-heavy, then hands back something smaller and more actionable.

If you’re trying to get real value out of AI, focus on where it consistently improves outcomes right now: alert triage, investigation acceleration, and operational sustainability.

Triage and prioritisation at machine speed

Triage is where good SOCs win time. AI can help sort, cluster, and rank alerts so humans start with what matters most. That includes:

- Correlating events across tools to reduce duplicates.

- Identifying likely false positives based on known benign behaviour.

- Ranking alerts by context, such as privilege level, asset criticality, and unusual access patterns.

- Grouping related activity into a single incident thread.
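As a rough illustration of the triage steps above, the sketch below deduplicates alerts, drops those matched to known benign behaviour, groups the rest by entity, and ranks each group by privilege and asset criticality. It is a minimal rule-based sketch, not how any particular product works. It assumes a hypothetical `Alert` record whose fields (such as `asset_criticality` and `known_benign`) would in practice come from an asset inventory and an allow-list, and the scoring weights are arbitrary.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    source: str             # tool that raised the alert, e.g. "edr" or "idp"
    rule: str               # detection rule name
    entity: str             # user or host the alert is about
    privileged: bool        # does the entity hold elevated rights?
    asset_criticality: int  # 1 (low) to 3 (high); assumed to come from an asset inventory
    known_benign: bool      # matched a (hypothetical) allow-list of benign behaviour


def triage(alerts):
    """Deduplicate, drop known-benign noise, group by entity, and rank the groups."""
    # 1. Deduplicate: the same rule firing on the same entity from several tools counts once.
    unique = {(a.rule, a.entity): a for a in alerts}
    # 2. Suppress likely false positives.
    candidates = [a for a in unique.values() if not a.known_benign]
    # 3. Group related activity into one thread per entity.
    threads = defaultdict(list)
    for a in candidates:
        threads[a.entity].append(a)

    # 4. Score each thread by context; the weights are illustrative, not tuned.
    def score(items):
        return sum(1 + 2 * a.privileged + a.asset_criticality for a in items)

    return sorted(threads.items(), key=lambda kv: score(kv[1]), reverse=True)
```

Grouping by entity alone is deliberately simple; a real correlation engine would also link activity across users and hosts and within time windows.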

This is where alert triage becomes less about reacting and more about prioritising. It is also where AI helps remove the “everything is urgent” problem that burns teams out.

Accelerating investigation and analysis

Investigation is where good teams often lose time, not because they lack skill, but because the work is inherently slow.

AI can speed up the early investigative steps that typically consume hours:

- Translating questions into queries for logs and security data.

- Summarising a chain of events into a clean narrative that an analyst can validate.

- Helping interpret suspicious scripts or command sequences by identifying intent and likely outcomes.

- Pulling relevant context from large datasets so the analyst isn’t searching blind.
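Before any model can summarise a chain of events, the relevant events have to be gathered and put in order. The sketch below shows only that deterministic step: it filters hypothetical log records for one entity and renders a chronological timeline an analyst can validate. The record shape (`time`, `entity`, `action`, and `source` keys) is an assumption, and no AI is involved.

```python
from datetime import datetime


def build_timeline(events, entity):
    """Return a chronological, human-readable timeline of events for one entity.

    `events` is assumed to be a list of dicts with ISO 8601 "time" values and
    "entity", "action", and "source" keys; real log schemas will differ.
    """
    related = [e for e in events if e["entity"] == entity]
    # Order by parsed timestamp rather than by string, so mixed formats still sort correctly.
    related.sort(key=lambda e: datetime.fromisoformat(e["time"]))
    lines = [f'{e["time"]}  {entity}: {e["action"]} ({e["source"]})' for e in related]
    return "\n".join(lines)
```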

This doesn’t replace threat hunting. It makes threat hunting faster, especially when the team is dealing with multiple incidents at once.

Reducing analyst fatigue and operational drag

There’s a quiet truth many leaders avoid saying out loud. Even strong teams get tired. They miss things when they’re overloaded. They cut corners when the queue never clears.

Inside the Ransomware Stack

Unpack the tools, processes, and telemetry needed to rapidly identify, contain, and neutralize ransomware across the estate.

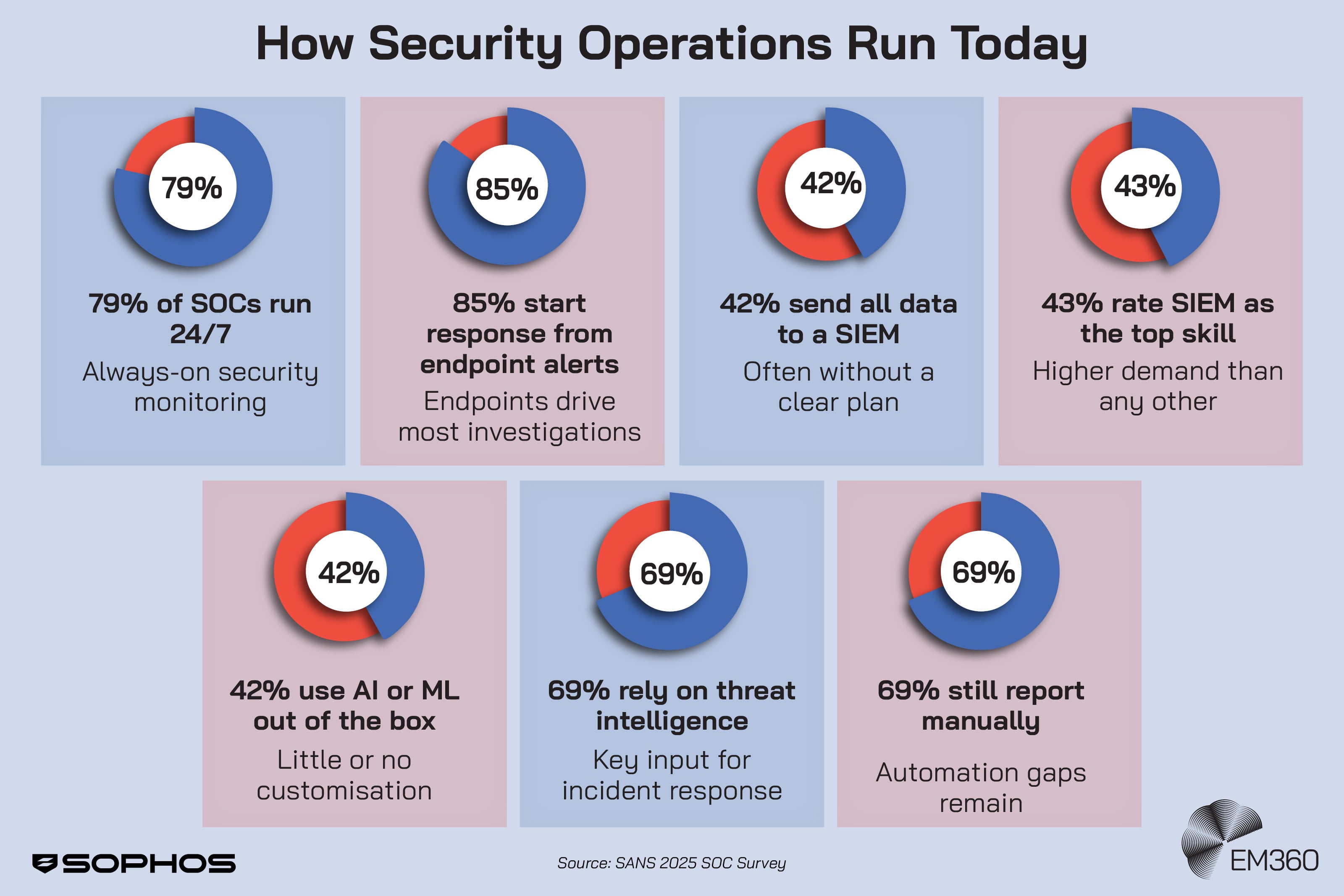

The 2025 SANS SOC Survey highlights how many SOCs still rely on manual or mostly manual processes for reporting metrics, which is time-consuming and hard to scale. That kind of work steals time from detection and response. It also slows down communication with leadership, which means decisions take longer.

Used well, AI removes the operational drag that drains a team’s energy. It doesn’t just make the SOC faster. It makes it more sustainable.
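Metric reporting is one of the easier manual tasks to automate, whether or not AI is involved. The sketch below computes mean time to detect and mean time to respond from incident records. The field names (`occurred`, `detected`, `resolved`) are hypothetical, and it measures response as detection to resolution, which is only one of several definitions teams use.

```python
from datetime import datetime
from statistics import mean


def soc_metrics(incidents):
    """Compute mean time to detect and mean time to respond, in minutes.

    Each incident is assumed to be a dict with ISO 8601 "occurred",
    "detected", and "resolved" timestamps (hypothetical field names).
    """
    def minutes(start, end):
        delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
        return delta.total_seconds() / 60

    mttd = mean(minutes(i["occurred"], i["detected"]) for i in incidents)
    # "Respond" is measured here from detection to resolution.
    mttr = mean(minutes(i["detected"], i["resolved"]) for i in incidents)
    return {"incidents": len(incidents), "mttd_min": mttd, "mttr_min": mttr}
```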

Where Human Judgement Remains Non-Negotiable

AI can process data at speed. It can summarise. It can suggest. What it can’t do is own consequences.

This is the boundary security leaders need to make explicit. The goal isn’t to minimise human involvement. It’s to protect human judgement for the moments where it matters most.

Risk acceptance and business context

No model can decide what your organisation can tolerate.

Even if AI can estimate likelihood and potential impact, it can’t know the business context that shapes risk decisions. It doesn’t understand which systems are politically sensitive, which data sets are reputational landmines, or which “temporary exceptions” have become permanent over time.

Humans make the call on risk acceptance because they’re accountable to customers, regulators, and boards. AI can support that call, but it cannot replace it.

Escalation, response, and trade-off decisions

Incident response isn’t just a technical workflow. It’s an organisational decision-making sprint.

Should you isolate a system and risk downtime, or keep it running while you investigate? Do you notify stakeholders now, or wait until you’ve confirmed scope? Do you prioritise containment, recovery, or forensics?

These are trade-offs with legal, operational, and reputational consequences. They require leadership ownership, not automated action.

This is why incident response needs humans at the centre, even in AI-augmented environments. Automation can accelerate steps. It shouldn’t dictate outcomes.

Interpreting intent and ambiguity

Attackers often succeed in the grey areas.

They exploit legitimate tools. They blend in with normal behaviour. They use real credentials. They create situations where “it could be nothing” and “it could be everything” look uncomfortably similar.

AI Snake Oil or Real Defense?

Unpacks the gap between marketing claims and measurable value as enterprises adopt AI and machine learning for cyber risk reduction.

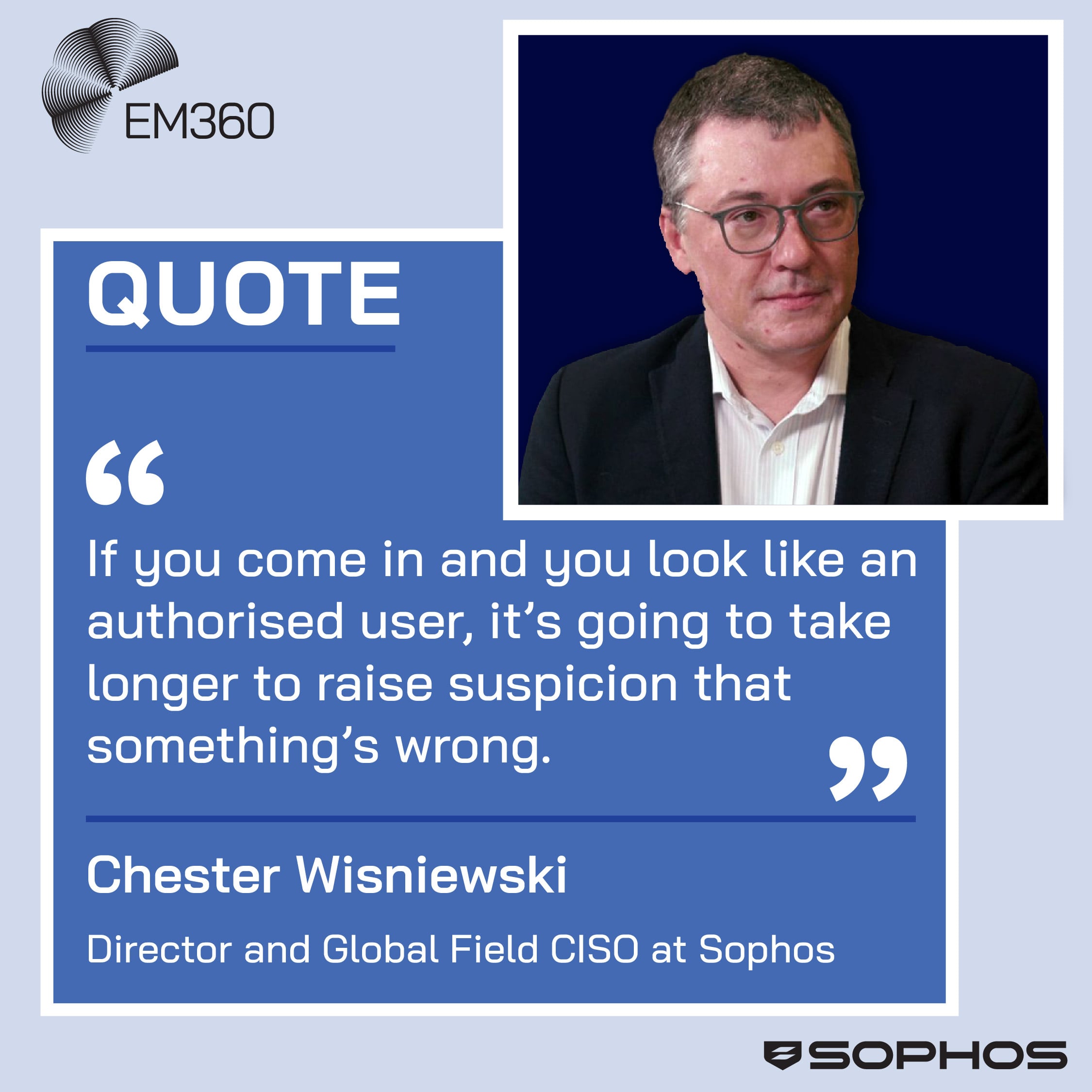

As Wisniewski explained while chatting with Richard Stiennon, “If you come in and you look like an authorised user, it’s going to take longer to raise suspicion that something’s wrong.”

AI can help flag anomalies, but when signals conflict, human intuition, experience, and scepticism still matter. That’s especially true when the organisation has to decide whether to treat an alert as an incident, a misconfiguration, or business-as-usual noise.

The Risk of Overreliance on Automation

AI doesn’t fail loudly. And that’s part of the danger.

Overreliance isn’t about trusting AI once. It’s about building a security posture that assumes AI will always catch what matters.

When automation hides blind spots

Unchecked automation can create false confidence.

If a team assumes the model will spot the pattern, they stop looking for what the model isn’t trained to recognise. If the model consistently downranks a certain alert type, that alert type becomes invisible over time. If the model’s outputs are treated as “truth” rather than “input”, the team’s critical thinking atrophies.

The risk isn’t AI making a mistake. The risk is the organisation designing a system where nobody notices the mistake until the damage is done.
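One practical guard against this is to look routinely for alert types the model almost never escalates. The sketch below counts, per alert type, how often a hypothetical triage model escalated it and flags frequent types whose escalation rate stays at or below a threshold. The input format and both thresholds are assumptions; a flagged type is a prompt for human review, not proof the model is wrong.

```python
from collections import Counter


def invisible_alert_types(decisions, min_count=20, max_escalation_rate=0.0):
    """Flag alert types that fire often but are (almost) never escalated.

    `decisions` is assumed to be an iterable of (alert_type, escalated)
    pairs drawn from the triage model's history.
    """
    seen, escalated = Counter(), Counter()
    for alert_type, was_escalated in decisions:
        seen[alert_type] += 1
        escalated[alert_type] += int(was_escalated)
    # Only consider types with enough volume to make the rate meaningful.
    return sorted(
        t for t, n in seen.items()
        if n >= min_count and escalated[t] / n <= max_escalation_rate
    )
```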

That is why over-automating judgement is risky.

Tool sprawl without workflow clarity

Adding AI tools doesn’t automatically improve outcomes.

In fact, it can increase operational complexity if the workflows don’t change. Teams end up with more dashboards, more alert streams, more places to look, and more ambiguity about who owns what.

The measurable value comes from integration and clarity: what the tool does, what the human does, and how decisions move from signal to action. Without that, AI becomes another layer of noise.

What Coevolution Looks Like in Practice

Coevolution is not a philosophy. It’s an operating model.

It means designing security workflows where AI supports human capability, and humans provide oversight, context, and accountability. It also means planning for the fact that both attackers and defenders will keep improving their use of AI.

Designing human-in-the-loop workflows

A practical way to start is to define decision thresholds.

Ask two questions for each workflow:

- Where does speed matter more than certainty?

- Where does consequence demand human sign-off?

For example, AI can automate enrichment, correlation, and prioritisation. It can suggest likely causes and recommended actions. But when it comes to disabling accounts, isolating systems, or declaring an incident, there should be a clear human gate.

Human-in-the-loop isn’t about slowing things down. It’s about ensuring the fastest actions don’t become the most dangerous ones.
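The decision thresholds described above can be written down as an explicit policy. The sketch below is a minimal, hypothetical example: low-consequence actions such as enrichment run automatically, while disabling accounts, isolating hosts, and declaring incidents wait for a named approver. The action names are illustrative, and unknown actions deliberately default to requiring sign-off.

```python
from enum import Enum


class Gate(Enum):
    AUTO = "auto"              # machine may act immediately
    HUMAN = "human_sign_off"   # a named person must approve first


# Hypothetical policy table: which actions run automatically and which need sign-off.
POLICY = {
    "enrich_alert": Gate.AUTO,
    "correlate_events": Gate.AUTO,
    "reprioritise_alert": Gate.AUTO,
    "disable_account": Gate.HUMAN,
    "isolate_host": Gate.HUMAN,
    "declare_incident": Gate.HUMAN,
}


def execute(action, approver=None):
    """Run an action only if the policy allows it without, or with, a human approver."""
    # Unknown actions fall on the safe side: require a human.
    gate = POLICY.get(action, Gate.HUMAN)
    if gate is Gate.HUMAN and approver is None:
        return f"queued: {action} awaits human sign-off"
    who = approver or "automation"
    return f"executed: {action} by {who}"
```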

Training analysts to work with AI, not around it

If analysts don’t trust AI, they ignore it. If they trust it too much, they stop thinking.

The skill shift is learning how to validate AI output quickly, challenge assumptions, and use AI as a tool for speed without surrendering judgement. That includes:

- Knowing what the model is good at and what it routinely misses.

- Spot-checking summarisation and correlation logic.

- Treating AI outputs as hypotheses that must be tested, not conclusions to be accepted.
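Spot-checking can be made routine rather than ad hoc. The sketch below samples a fixed number of AI verdicts, asks an analyst review function for an independent label, and reports the agreement rate plus the alerts where the two disagree. Both `ai_verdicts` and `analyst_review` are hypothetical stand-ins for a real review queue.

```python
import random


def spot_check(ai_verdicts, analyst_review, sample_size, seed=None):
    """Sample AI verdicts for analyst review and report the agreement rate.

    `ai_verdicts` maps alert IDs to the model's label; `analyst_review` is a
    callable returning the analyst's own label for an alert ID.
    """
    rng = random.Random(seed)  # a seed makes the sample reproducible for audits
    ids = rng.sample(sorted(ai_verdicts), min(sample_size, len(ai_verdicts)))
    disagreements = [i for i in ids if analyst_review(i) != ai_verdicts[i]]
    agreement = 1 - len(disagreements) / len(ids) if ids else None
    return agreement, disagreements
```

Disagreements are the useful output: each one is a concrete case for checking what the model routinely misses.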

This is less about “AI training” and more about disciplined analysis in a faster environment.

Governance, validation, and accountability

This is where many organisations are still catching up.

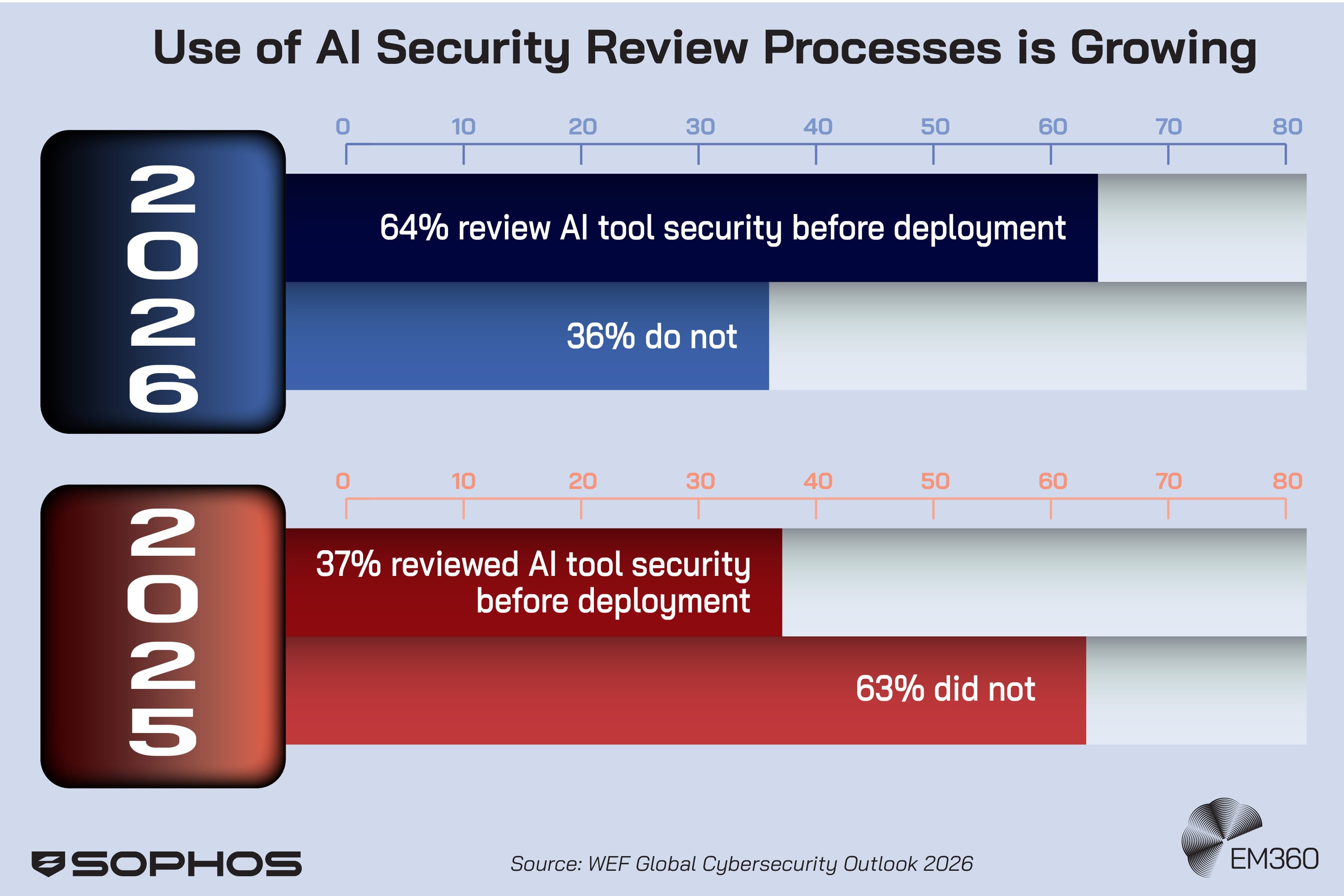

The World Economic Forum’s Global Cybersecurity Outlook 2026 notes a sharp year-on-year increase in the share of organisations assessing the security of their AI tools. That’s a signal that governance is moving from an abstract concern to a practical requirement.

AI governance should answer simple, blunt questions:

- Who owns the tool’s outputs?

- How is it tested, monitored, and updated?

- What data does it use, and what data does it expose?

- How do we audit decisions that were influenced by AI?

If the organisation can’t answer those questions, it’s not ready to depend on AI for high-stakes security decisions.
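The last question is easier to answer if every AI-influenced decision leaves a structured record. The sketch below is one hypothetical shape for such a record: it captures the model and its version, the recommendation, who owns the tool’s outputs, who made the final call, and whether the human overrode the model, then serialises it as a JSON line for an append-only log. The field names are assumptions, not a standard.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AIDecisionRecord:
    """One auditable entry for a decision the AI influenced (fields are illustrative)."""
    alert_id: str
    model_name: str         # which tool produced the output
    model_version: str      # lets behaviour changes be traced to updates
    ai_recommendation: str
    output_owner: str       # team accountable for the tool's outputs
    final_decision: str
    decided_by: str         # the human who made or approved the call
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def overridden(self) -> bool:
        return self.final_decision != self.ai_recommendation


def to_audit_line(record):
    """Serialise a record as one JSON line, including the derived override flag."""
    entry = asdict(record)
    entry["overridden"] = record.overridden
    return json.dumps(entry, sort_keys=True)
```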

Why Coevolution Is a Leadership Responsibility

Most AI conversations get stuck at the tooling layer. CISOs don’t have that luxury.

Coevolution is a leadership responsibility because it touches strategy, risk appetite, operating model design, and board-level accountability. It also changes what “good” looks like for a security team.

Aligning people, process, and technology

AI creates leverage. It also exposes weak foundations.

If identity governance is messy, AI triage will struggle. If logging is inconsistent, AI analysis will mislead. If incident response playbooks are unclear, AI will accelerate confusion instead of resolution.

The goal is alignment. People need the right skills. Processes need clear decision points. Technology needs integration that supports the workflow rather than complicating it.

That’s the coevolution part. When AI improves, your workflows and oversight need to improve with it. Otherwise, you’re just moving faster into the same problems.
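Inconsistent logging, one of the weak foundations mentioned above, is also one of the easier ones to measure. The sketch below checks sampled events from each log source against a minimum set of fields and reports which fields each source fails to populate. The required field set and the event format are hypothetical; a real schema would be agreed with the teams that consume the data.

```python
# Hypothetical minimum schema that downstream analysis depends on.
REQUIRED_FIELDS = {"timestamp", "user", "host", "action"}


def logging_gaps(sample_events_by_source, required=REQUIRED_FIELDS):
    """Report, per log source, required fields missing or empty in any sampled event."""
    gaps = {}
    for source, events in sample_events_by_source.items():
        missing = set()
        for event in events:
            # A field counts as present only if it has a non-empty value.
            present = {k for k, v in event.items() if v not in (None, "")}
            missing |= required - present
        if missing:
            gaps[source] = sorted(missing)
    return gaps
```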

Final Thoughts: Resilience Depends on Shared Intelligence

AI in security is already reshaping how cybersecurity operations run, but it hasn’t changed the core truth of incident response. Outcomes still hinge on human judgement, accountability, and context.

The organisations that handle this shift best won’t treat AI as a replacement for people or a shortcut around governance. They’ll treat it as an accelerant for better decisions, backed by clear boundaries and leadership ownership. That’s what resilience looks like when both attackers and defenders are moving at machine speed.

That balance is reflected in how providers like Sophos think about applying AI across detection, response, and day-to-day operations, and in the broader conversations security leaders are having about what sustainable defence actually requires. EM360Tech exists to support those conversations, bringing practitioner insight and real-world experience together so leaders can make clearer decisions under pressure.
