Patch management should be boring by now. It’s been part of IT since the first systems started breaking in repeatable ways, and we’ve had decades to industrialise it. Yet for many teams, patch management still feels like a weekly fight with time, tooling, and unfinished lists.

At Action1, we see this tension play out daily across organisations that thought patching was “handled,” only to discover the operational drag never really went away. The reason isn’t a shortage of products that promise automation. It’s that patching has quietly changed shape.

Today’s environments don’t behave like the patch cycles they were designed for. Endpoints roam. Users work off-VPN. Laptops go dark for days. Meanwhile, vulnerability exploitation keeps getting faster. A device that’s compliant on Monday can be exposed by Tuesday, simply because the world moved under it.

That’s the frustration at the centre of modern patching. It isn’t just about pushing updates. It’s about maintaining patch compliance in an environment that refuses to sit still.

Patch Management Was Built for a Different Era

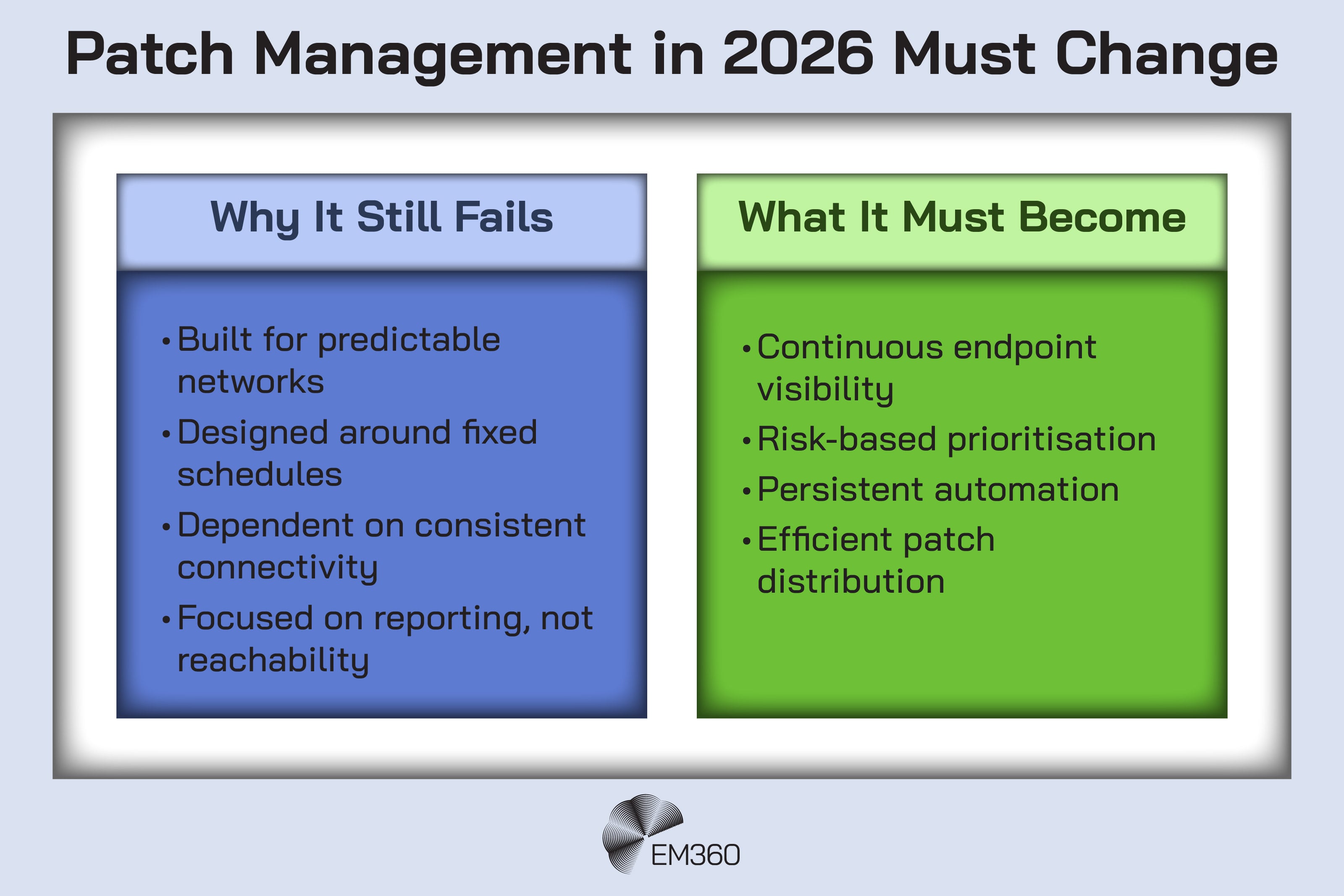

Traditional enterprise patching assumed something that’s increasingly rare: predictable reachability.

When most endpoints lived on office networks and stayed online during set windows, patch cycles were a practical compromise. You could scan, test, schedule, deploy, and report. You could build muscle memory around “monthly updates” and the occasional emergency release. If a machine missed the window, it wasn’t ideal, but it was recoverable because it was still part of a stable estate.

Hybrid work and distributed infrastructure break those assumptions. The estate is no longer a single place. It’s a moving pattern of devices, networks, and user behaviour. That changes the job from “run the patch cycle” to “keep the organisation continuously close to safe,” even when you don’t control when devices appear.

It’s worth noting that this complexity isn’t new to security standards bodies. NIST’s patch management guidance frames patching as preventive maintenance, but also as an organisational programme with planning, prioritisation, testing, and communication, not just a technical task. That’s a polite way of saying what most IT teams already know: patching is governed by people, process, and risk tolerance as much as tooling.

So when patch management feels “unsolved,” it’s often because the operating model hasn’t caught up. The workflow is still built around a world where endpoints behave, networks are predictable, and time moves slowly.

The Exploitation Gap Is Shrinking

Patching used to feel like a race against releases. Now it’s a race against exploitation.

You can see that shift in how defenders prioritise. Many security teams increasingly treat “known exploited” as its own category, because it maps to what attackers are actually using in the wild, not what’s theoretically vulnerable. The US Cybersecurity and Infrastructure Security Agency (CISA) formalised that approach with its Known Exploited Vulnerabilities (KEV) catalogue, which is updated as exploitation is confirmed.

The impact on operational workload is immediate. Every new KEV entry isn’t just another patch. It’s a new prioritisation decision that competes with everything else the team is doing. When vendors release security updates alongside reports of active exploitation, the decision window gets even tighter. You’re no longer asking, “Can we schedule this next week?” You’re asking, “How long can we afford to wait?”
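To make that prioritisation decision concrete, here is a minimal sketch of how a team might cross-reference its open findings against a KEV-style feed. The sample data is made up, the `Finding` type and `prioritise` function are hypothetical, and the field names (`cveID`, `dateAdded`, `dueDate`) are assumed to match the public KEV JSON feed, so check them against the live schema before relying on this.

```python
from dataclasses import dataclass
from datetime import date

# Made-up entries shaped like a KEV-style feed. Field names are an assumption.
KEV_SAMPLE = {
    "vulnerabilities": [
        {"cveID": "CVE-0000-0001", "dateAdded": "2026-01-05", "dueDate": "2026-01-26"},
        {"cveID": "CVE-0000-0003", "dateAdded": "2026-01-12", "dueDate": "2026-02-02"},
    ]
}


@dataclass(frozen=True)
class Finding:
    """One open vulnerability on one host (hypothetical inventory record)."""
    host: str
    cve: str
    cvss: float


def prioritise(findings, kev):
    """Order findings: known exploited first (earliest due date), then by CVSS."""
    kev_due = {
        v["cveID"]: date.fromisoformat(v["dueDate"])
        for v in kev["vulnerabilities"]
    }

    def sort_key(finding):
        due = kev_due.get(finding.cve)
        # False sorts before True, so KEV-listed findings come first.
        return (due is None, due or date.max, -finding.cvss)

    return sorted(findings, key=sort_key)


findings = [
    Finding("laptop-07", "CVE-0000-0002", 9.8),  # high score, not known exploited
    Finding("laptop-07", "CVE-0000-0003", 7.5),  # known exploited, later due date
    Finding("srv-01", "CVE-0000-0001", 6.1),     # known exploited, earliest due date
]

ordered = prioritise(findings, KEV_SAMPLE)
for f in ordered:
    print(f.host, f.cve, f.cvss)
```

In this sketch the lowest-scoring finding ends up at the top of the queue because it is the one with confirmed exploitation and the nearest due date. That is the shift in a few lines: severity scores still matter, but only after exploitation evidence has been accounted for.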

Reframing Patch Management

Why automated, cloud-native patching is now a core control for securing dispersed, high-availability infrastructure.

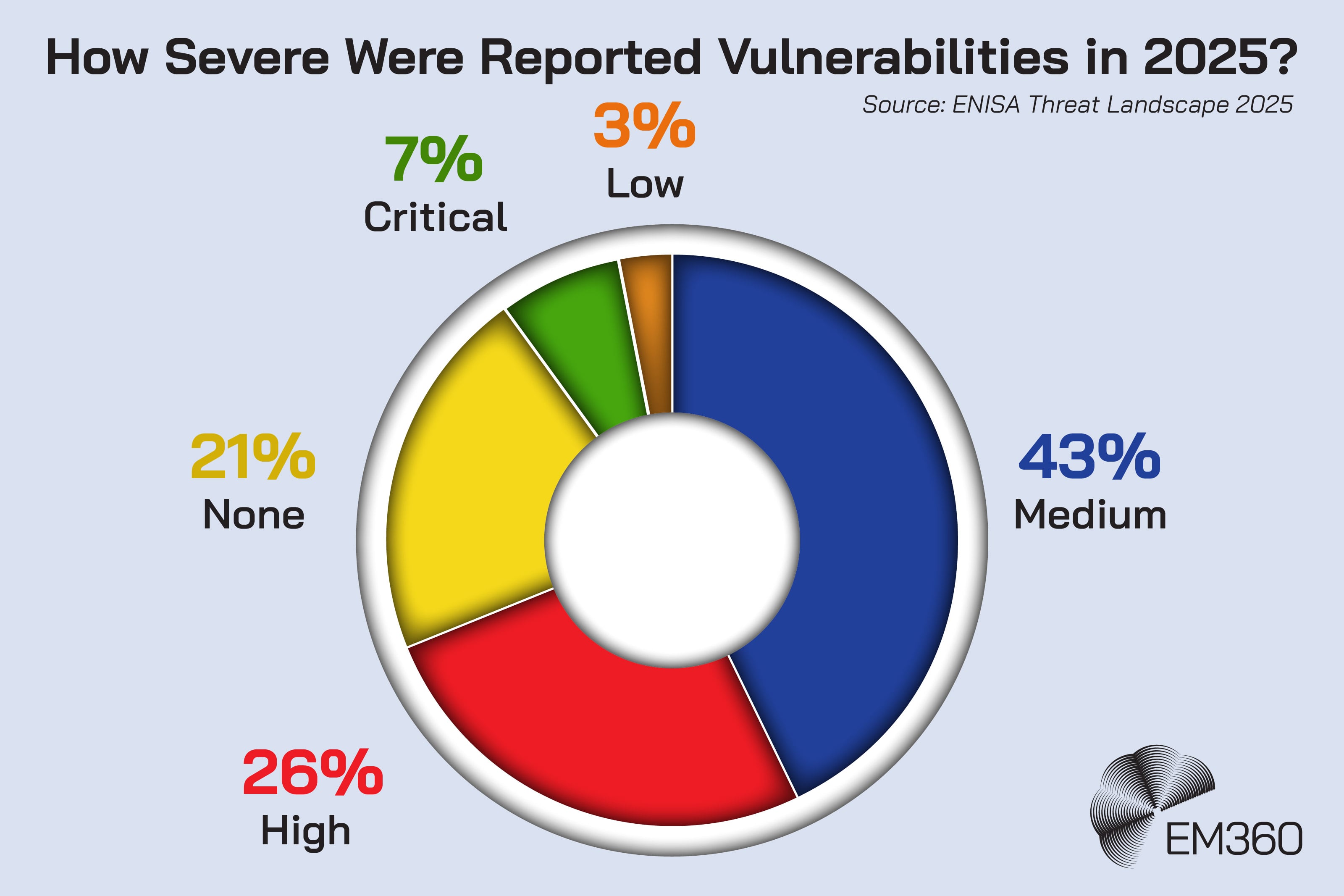

ENISA’s Threat Landscape reporting has also been blunt about the broader direction of travel. Recent editions highlight the speed and scale at which vulnerabilities are weaponised as part of the modern threat environment. That matters because it reframes patching as a continuous resilience discipline, not a periodic hygiene task.

This is where a lot of patch programmes start to buckle. The estate gets larger and more distributed at the same time the threat window gets smaller. That combination creates the most dangerous kind of pressure: work that must be done quickly, across devices you can’t reliably reach, using processes built for stability.

Remote Endpoints Break Traditional Patch Workflows

Remote work didn’t just move people. It moved the centre of gravity for patching.

Off-VPN devices are the obvious problem. If your patch workflow still assumes that “connected” means “on the corporate network,” you’ve already lost coverage. Many endpoints spend most of their time outside those boundaries, especially laptops that live on home networks, mobile hotspots, and shared Wi-Fi.

Then there are intermittently connected machines. Devices that sleep, roam, or go offline for extended periods don’t just miss maintenance windows. They develop drift. They accumulate unpatched software, outdated third-party apps, and configuration gaps. When they finally reconnect, they often come back as time capsules.

That creates the classic patching illusion: visibility without compliance. You can have dashboards that look reassuring, while a meaningful slice of the estate remains effectively unmanaged because it isn’t reachable when it matters. And when those endpoints do reappear, they don’t arrive politely. They land in the middle of a workday, on unpredictable networks, with users who don’t want to be interrupted.

That’s why patch compliance is no longer a scheduling problem. It’s a reachability and persistence problem. If your automation is built around a calendar, it’s always going to struggle against an estate that doesn’t respect the calendar.

The right question to ask in 2026 isn’t, “Do we automate patching?” It’s, “Can our automation keep working when devices disappear, reappear, and behave like the real world?”
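One way to picture the difference between calendar-driven and reachability-driven automation is to hang the patch evaluation on the device check-in itself. The sketch below is illustrative only: the `Device` type, the `on_check_in` function, and the patch identifiers are hypothetical and do not describe any particular product's agent.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Device:
    """Hypothetical endpoint record kept by the management service."""
    name: str
    last_seen: datetime
    installed: set = field(default_factory=set)


def on_check_in(device, approved, now):
    """Evaluate a device whenever it becomes reachable, not on a fixed date."""
    offline_for = now - device.last_seen
    missing = sorted(approved - device.installed)  # everything it drifted behind on
    device.last_seen = now
    return {
        "device": device.name,
        "offline_days": offline_for.days,
        "missing": missing,
    }


approved = {"KB-A", "KB-B", "KB-C"}  # made-up approved patch set
now = datetime(2026, 3, 10, 9, 0)
laptop = Device("laptop-12", last_seen=now - timedelta(days=9), installed={"KB-A"})

result = on_check_in(laptop, approved, now)
print(result)
```

The design choice worth noticing is that nothing here asks what day of the month it is. The trigger is the device reappearing, and the work queue is whatever it missed while it was dark.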

Patch Distribution at Scale Is the Hidden Bottleneck

Even when you get prioritisation and reachability right, patching still has a quieter failure mode: delivery.

Patches aren’t just decisions. They’re packages that need to move across networks. At scale, that creates friction in places many organisations underestimate:

- Branch offices and smaller sites can’t absorb repeated large downloads without impact.

- Remote users pulling updates individually can turn a routine patch day into a bandwidth problem.

- Third-party application updates can stack up quickly, and the same content gets downloaded again and again across endpoints.

This is where patch management stops being only a security workflow and starts behaving like a content distribution problem. If the distribution layer is inefficient, patching will feel slow, unreliable, and labour-intensive, no matter how clean the policy looks on paper.

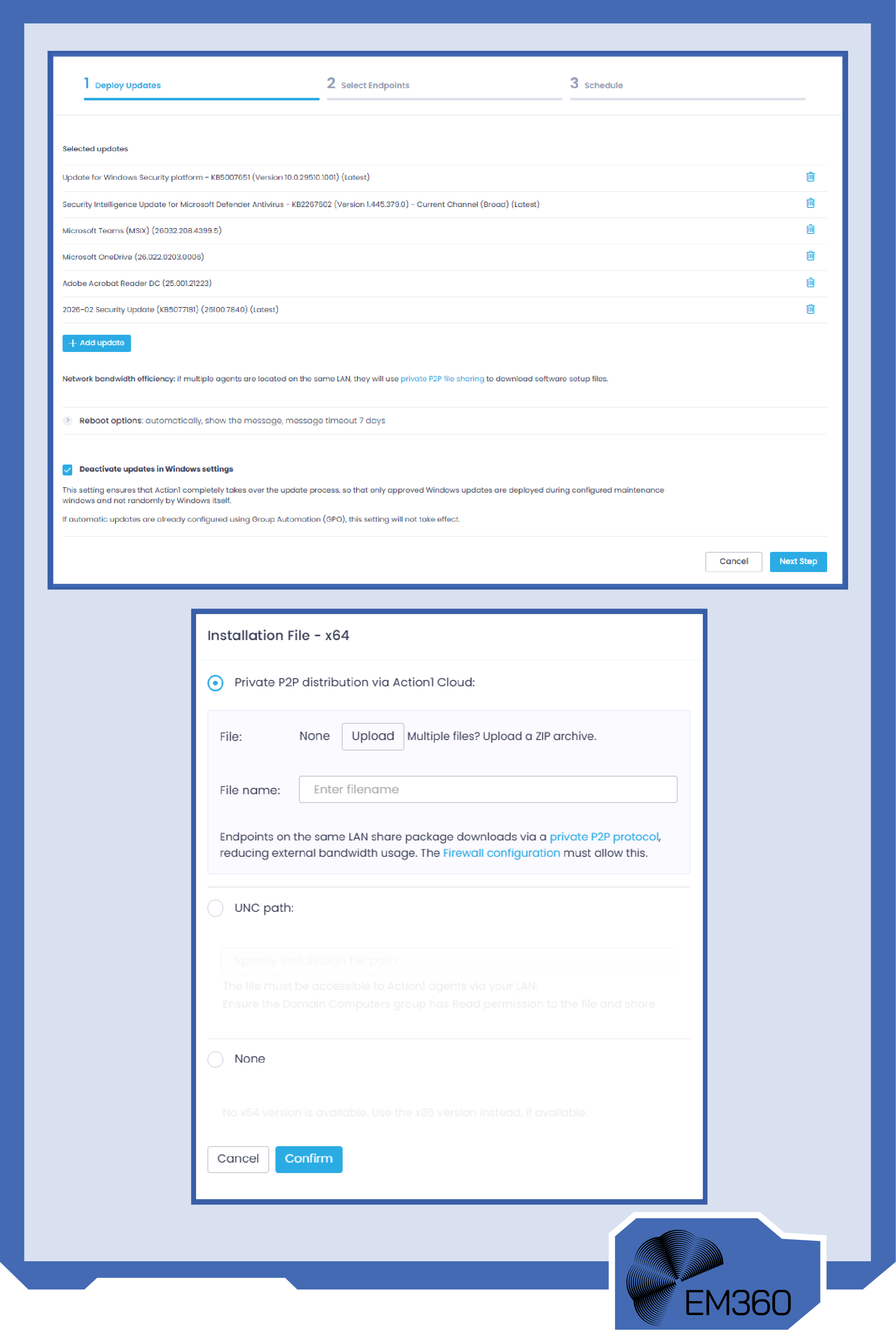

The industry has been moving toward smarter distribution patterns for a while, including peer-assisted delivery models. Microsoft’s Delivery Optimization is one mainstream example of the idea: endpoints can share update content locally, reducing the need for every device to pull everything from the internet.

Microsoft has even published outcomes from its own environment, noting that a large share of update content can come from peer devices rather than external downloads when peer caching is used.

That matters for a simple reason: patch distribution is often the difference between “we scheduled it” and “it actually finished.” If delivery is slow or fragile, endpoints fall behind, users get interrupted, and IT teams end up doing the most expensive thing in patching: manual recovery work.
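The distribution argument is easy to sanity-check with back-of-the-envelope arithmetic. The sketch below estimates how much update content a site pulls from the internet for a single patch, with and without peer sharing. The function name, the endpoint count, the patch size, and the 75% peer share are all illustrative assumptions, not figures published by Microsoft or Action1.

```python
def wan_download_gb(endpoints, patch_gb, peer_share=0.0):
    """Estimate internet download volume (GB) for one patch across a site.

    peer_share is the assumed fraction of content served by local peers.
    """
    if not 0.0 <= peer_share <= 1.0:
        raise ValueError("peer_share must be between 0 and 1")
    return endpoints * patch_gb * (1.0 - peer_share)


# Illustrative numbers only: 200 endpoints, one 0.5 GB update.
baseline = wan_download_gb(200, 0.5)
with_peers = wan_download_gb(200, 0.5, peer_share=0.75)

print(f"No peer sharing:  {baseline:.1f} GB")
print(f"75% from peers:   {with_peers:.1f} GB")
```

Even with rough inputs, the model shows why a branch office link can be the thing that decides whether a deployment finishes, rather than the policy that scheduled it.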

What Modern Patch Automation Must Actually Deliver

Modern patch automation can’t just be faster. It has to be more complete.

In 2026, real automated patch management needs five things working together:

- First, continuous visibility. Not “we scanned last week,” but “we can see what’s out there, even when it’s not on our network.”

- Second, risk-based prioritisation. Not every update is equal, and teams need a way to align action to real-world threat signals, including known exploited vulnerabilities.

- Third, policy-driven deployment. Automation must apply intent consistently, without turning patching into a daily series of one-off decisions.

- Fourth, reliable distribution. A patch that can’t reach endpoints efficiently becomes a backlog item, and backlogs are where attackers thrive.

- Fifth, compliance reporting that’s actually defensible. Not just a dashboard, but evidence you can stand behind when you’re asked what was patched, when, and where the gaps are.
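As a rough illustration of how the policy and reporting items above fit together, the sketch below expresses intent as data (a maximum number of days from release to install per risk class) and produces one evidence row per device and patch. The risk classes, day limits, `PatchRecord` type, and status labels are all hypothetical; a real programme would set them through its own governance process.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy: maximum days allowed between patch release and install.
POLICY_DAYS = {"known_exploited": 3, "critical": 7, "other": 30}


@dataclass(frozen=True)
class PatchRecord:
    host: str
    patch: str
    risk: str
    released: date
    installed: Optional[date]  # None means not yet installed


def evaluate(record, today):
    """Return one evidence row: what was due, when, and where it stands."""
    deadline = record.released + timedelta(days=POLICY_DAYS[record.risk])
    if record.installed is not None:
        status = "compliant" if record.installed <= deadline else "installed_late"
    else:
        status = "pending" if today <= deadline else "overdue"
    return {
        "host": record.host,
        "patch": record.patch,
        "deadline": deadline.isoformat(),
        "installed": record.installed.isoformat() if record.installed else None,
        "status": status,
    }


today = date(2026, 3, 10)
records = [
    PatchRecord("srv-01", "P-100", "known_exploited", date(2026, 3, 2), date(2026, 3, 4)),
    PatchRecord("laptop-12", "P-100", "known_exploited", date(2026, 3, 2), None),
    PatchRecord("laptop-12", "P-101", "other", date(2026, 3, 1), None),
]

report = [evaluate(r, today) for r in records]
for row in report:
    print(row)
```

Each row answers the three questions an auditor tends to ask: what was patched, when, and where the gaps are. The same policy table drives both the deployment deadline and the report, so the two cannot quietly disagree.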

This is also where peer-assisted delivery becomes more than a “nice to have.” Action1’s approach includes private peer-to-peer file sharing, designed to distribute updates across endpoints on the same network so organisations can reduce bandwidth strain and improve patch reliability. That’s not a replacement for automation. It’s part of making automation hold up under real conditions.

And for teams that are tired of procurement-heavy decisions before they can even test outcomes, Action1’s model is also structurally interesting: the first 200 endpoints are free forever, with no feature limitations and no credit card requirement. That lowers the cost of learning, so you can validate whether your patch workflow improves before you ask the organisation to scale it.

Why “Junior Task” Thinking Is the Real Risk

Patch management is often treated like a junior sysadmin responsibility. In isolation, that makes sense. Many patch tasks are repeatable and procedural.

The problem is what patching represents now.

When attackers routinely chain known vulnerabilities into initial access, patching becomes a frontline control. It’s a foundational part of vulnerability management, and it influences how quickly the organisation can reduce exposure when something new breaks in public.

That’s not a junior risk. It’s an enterprise risk management issue.

When patching is under-resourced, it becomes reactive. Teams spend their time chasing exceptions, fixing failed deployments, and hunting for devices that drifted off the map. The work turns into firefighting, and firefighting is always more expensive than prevention.

The organisations that get patching right don’t necessarily have the biggest security budgets. They treat patch governance as a leadership concern. They define acceptable risk windows. They agree on prioritisation rules. They invest in systems that reduce manual effort, not just systems that create nicer reports.

At that point, patching stops being a chore and starts being operational maturity.

Final Thoughts: Patch Management Fails When Infrastructure Outpaces Process

Patch management doesn’t fail because teams don’t care. It fails because we still treat it like scheduled maintenance in a world that no longer runs on schedules.

Infrastructure now shifts daily. Devices disappear and return on their own terms. Exploitation windows compress without warning. When patching is built around static cycles, it will always lag behind a dynamic threat landscape. That gap is where risk lives.

The real shift isn’t technical. It’s philosophical. Patching has to move from a recurring task to a continuously optimised system. That means visibility that doesn’t depend on VPN presence. Prioritisation that reflects real-world exploitation. Distribution models that don’t choke networks or stall endpoints. And automation that reduces operational drag instead of creating more dashboards to manage.

Modern patch management is really about operational resilience. It’s about designing workflows that assume movement, not stability.

If your team is still fighting the same patch battles every month, the conversation around what automation should look like is already underway. The broader Security Strategist discussions at EM360Tech continue to unpack this shift, and the way vendors like Action1 are rethinking patch automation reflects a simple truth: patching isn’t a junior task. It’s a structural control.
